Comparison of different machine learning algorithms

The four algorithms were trained with the open-source Python machine learning package scikit-learn (sklearn). The hyperparameter settings of each algorithm are listed in Table 2, and the optimal parameters were selected by a GridSearchCV search, as described in “Hyperparameters tuning”.
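The grid search step can be sketched as follows. This is a minimal example on synthetic data. It assumes scikit-learn's `GridSearchCV` with 5-fold cross-validation and mean squared error as the selection criterion. The feature matrix, the `max_depth` grid and the scoring choice are illustrative assumptions, not the study's settings; those are listed in Table 2.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.tree import DecisionTreeRegressor

# Synthetic stand-in data (hypothetical): 200 samples, 5 features
rng = np.random.default_rng(0)
X = rng.uniform(0, 1, size=(200, 5))
y = 100 * X[:, 0] + 50 * X[:, 1] ** 2 + rng.normal(0, 2, size=200)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Hypothetical search range; the study's actual ranges are listed in Table 2
param_grid = {"max_depth": [2, 3, 4, 5, 6, 7, 8]}

search = GridSearchCV(
    DecisionTreeRegressor(random_state=0),
    param_grid,
    scoring="neg_mean_squared_error",  # higher is better, so this minimizes MSE
    cv=5,                              # 5-fold cross-validation on the training set
)
search.fit(X_train, y_train)  # evaluates every grid point, then refits the best one

print(search.best_params_)
print(-search.score(X_test, y_test))  # test-set MSE of the refitted best model
```

The same pattern applies to the other three algorithms. Only the estimator and the `param_grid` dictionary change.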

BayesianRidge regression

Bayesian ridge regression has four control parameters. alpha_1 and alpha_2 are the shape and rate parameters of the Gamma prior over the noise precision (the inverse variance of the observation error). lambda_1 and lambda_2 are the shape and rate parameters of the Gamma prior over the precision of the regression weights, which governs how strongly all regression coefficients are shrunk toward 0. The optimized values of the control parameters were obtained by grid search over the ranges listed in Table 2. The optimal parameters obtained from the search are: alpha_1: 1.0, alpha_2: 1e−08, lambda_1: 1e−08, lambda_2: 1.
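For reference, the reported optimum maps directly onto the constructor of scikit-learn's `BayesianRidge`. The sketch below fits it to synthetic data, which is an assumption because the study's data are not reproduced here. It then reads back the estimated noise precision `alpha_` and the weight precision `lambda_`.

```python
import numpy as np
from sklearn.linear_model import BayesianRidge

# Synthetic data (hypothetical): 4 features with known true weights
rng = np.random.default_rng(1)
X = rng.normal(size=(150, 4))
true_w = np.array([3.0, -2.0, 0.5, 0.0])
y = X @ true_w + rng.normal(0, 0.5, size=150)

# Gamma-prior hyperparameters set to the optimum reported in the text
model = BayesianRidge(alpha_1=1.0, alpha_2=1e-8, lambda_1=1e-8, lambda_2=1.0)
model.fit(X, y)

print(model.coef_)    # posterior mean of the regression weights
print(model.alpha_)   # estimated noise precision
print(model.lambda_)  # estimated weight precision
mean, std = model.predict(X[:3], return_std=True)  # predictive mean and std
```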

Decision tree regression

Decision tree regression is mainly controlled by the maximum depth of the tree. To prevent overfitting, the search range for this parameter was set as listed in Table 2. The grid search yielded an optimal tree depth of 6.
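The role of the depth limit can be illustrated by comparing a tree restricted to the selected depth of 6 with an unrestricted tree. The data are synthetic and the split is arbitrary. The output therefore only shows the typical pattern: the unrestricted tree fits the training set essentially perfectly, while the depth limit gives a simpler model that no longer memorizes the training data.

```python
import numpy as np
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

# Synthetic data (hypothetical)
rng = np.random.default_rng(2)
X = rng.uniform(0, 1, size=(300, 3))
y = 80 * np.sin(3 * X[:, 0]) + 20 * X[:, 1] + rng.normal(0, 5, size=300)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

results = {}
for depth in (6, None):  # depth 6 as selected by grid search vs. no depth limit
    tree = DecisionTreeRegressor(max_depth=depth, random_state=0).fit(X_tr, y_tr)
    results[depth] = (
        tree.get_depth(),                    # actual depth reached
        r2_score(y_tr, tree.predict(X_tr)),  # training R²
        r2_score(y_te, tree.predict(X_te)),  # test R²
    )
    print(depth, results[depth])
```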

XGBoost

XGBoost has seven control parameters:

- max_depth controls the maximum depth of each tree.
- learning_rate is the learning rate. It scales the contribution of each new tree, which controls the pace of iteration and helps prevent overfitting.
- n_estimators is the number of boosted weak estimators (trees). The larger n_estimators is, the stronger the learning ability of the model, but the more easily it overfits.
- subsample is the proportion of training samples randomly drawn for each tree.
- colsample_bytree is the proportion of features randomly sampled when constructing each tree.
- reg_alpha is the L1 regularization coefficient.
- reg_lambda is the L2 regularization coefficient.

Table 2 shows the ranges of control parameter values searched. The optimal parameters obtained from the search are: max_depth: 3, learning_rate: 0.1, n_estimators: 1000, subsample: 0.7, colsample_bytree: 1.0, reg_alpha: 0.01, reg_lambda: 1.0.

LightGBM

LightGBM is controlled by four parameters. num_leaves is the number of leaf nodes in a tree. Together with max_depth, it controls the shape of the tree. It has a strong impact on model performance and needs careful tuning. learning_rate is the learning rate; choosing a relatively small learning rate gives stable and better model performance. max_depth controls the maximum depth of the tree; the larger the value, the more serious the overfitting. min_child_weight is the minimum sum of instance weights (hessian) required in a child node. If it is too large, the model underfits, and if it is too small, the model overfits, so it must be adjusted according to the data. The optimal parameters obtained through grid search are: num_leaves: 20, learning_rate: 0.05, max_depth: 4, min_child_weight: 0.1.
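Because num_leaves and max_depth both constrain tree shape, it helps to check which limit is binding. A binary tree of depth d has at most 2^d leaves, so for a positive max_depth the effective cap is min(num_leaves, 2^max_depth). LightGBM's parameter-tuning guidance makes the same point when it recommends keeping num_leaves below 2^max_depth. This small helper is a hypothetical illustration, not part of LightGBM. It shows that with the reported optimum (num_leaves = 20, max_depth = 4), the depth limit caps each tree at 16 leaves.

```python
def effective_max_leaves(num_leaves: int, max_depth: int) -> int:
    """Upper bound on leaves per tree when both limits are set.

    A binary tree of depth d has at most 2**d leaves. In LightGBM,
    max_depth <= 0 means no depth limit, so only num_leaves applies then.
    """
    if max_depth <= 0:
        return num_leaves
    return min(num_leaves, 2 ** max_depth)


print(effective_max_leaves(20, 4))  # -> 16
```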

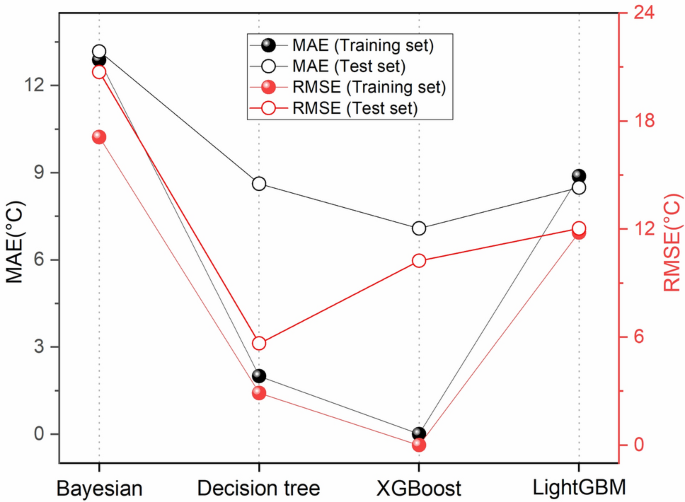

The prediction performance of the four machine learning algorithms was analyzed using their optimal parameters. Fig. 5 shows the prediction errors on the training and test datasets. Overall, the prediction errors on the test dataset are basically similar to those on the training dataset. For XGBoost, the MAE on the training and test datasets is 0.002 and 7.08, respectively, and the RMSE is 0.003 and 10.24. This large gap between training and test errors indicates relatively low generalization. For LightGBM, the training and test MAE and RMSE values differ the least, indicating the highest generalizability. All four algorithms achieve good MAE and RMSE values, indicating that these machine learning algorithms can be used to predict reservoir temperature.

MAE and RMSE for the training and test sets of the four models (filled symbols show the training set and hollow symbols show the test set; MAE is shown in black and RMSE in red).
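For reference, the two error metrics used throughout are MAE = (1/n) Σ|yᵢ − ŷᵢ| and RMSE = √((1/n) Σ(yᵢ − ŷᵢ)²). The following dependency-free sketch uses toy temperatures, which are illustrative values only and not data from this study.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error: average of |y - y_hat|."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean squared error: square root of the mean of (y - y_hat)**2."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


# Toy values for illustration only (not data from this study)
measured = [60.0, 85.0, 120.0, 150.0]
predicted = [62.0, 80.0, 125.0, 149.0]
print(mae(measured, predicted))   # -> 3.25
print(rmse(measured, predicted))  # square root of 13.75, about 3.708
```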

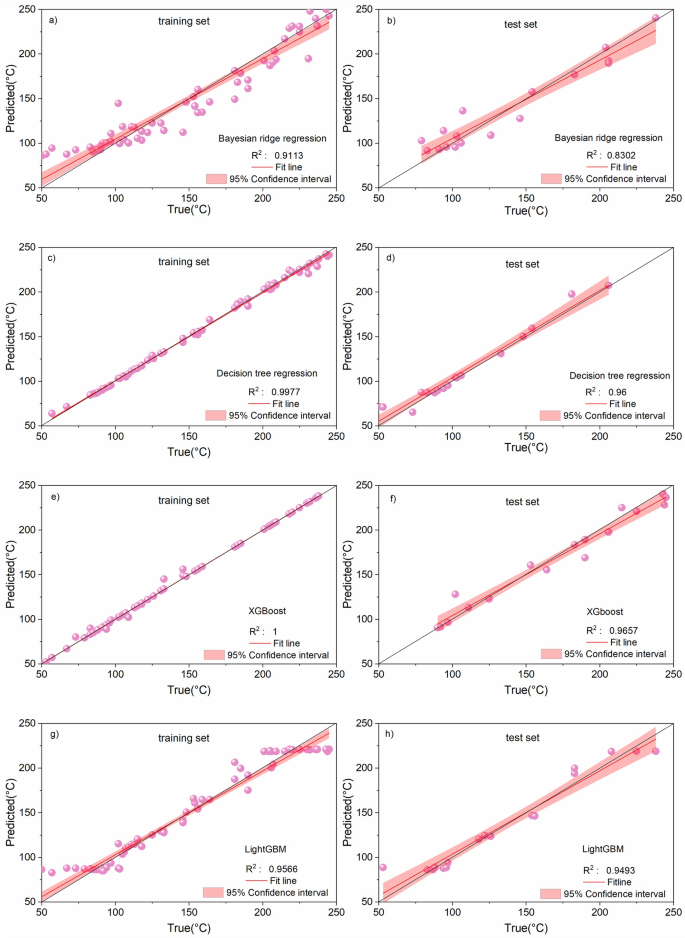

The prediction results for the training and test sets are plotted in Fig. 6. All models produce good predictions on the training set. When the trained models are applied to the test set, the R² values are 0.8302 for Bayesian ridge regression, 0.96 for the decision tree, 0.9657 for XGBoost and 0.9493 for LightGBM. Apart from Bayesian ridge regression, the predicted values of the three tree-based algorithms agree well with the true values in the test set (R² above 0.94 in all cases). This shows that tree-based machine learning algorithms can accurately predict underground reservoir temperature. Considering MAE, RMSE and R² together, XGBoost gives the most accurate predictions, followed by the LightGBM and decision tree algorithms.

Prediction results and R² for the training and test sets of the four models.
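The coefficient of determination used here is R² = 1 − SS_res/SS_tot. SS_res is the sum of squared residuals, and SS_tot is the total sum of squares about the mean of the measured values. This is the same definition used by scikit-learn's `r2_score`. The following is a minimal sketch with toy values, not the study's data.

```python
def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # residual sum of squares
    ss_tot = sum((t - mean) ** 2 for t in y_true)               # total sum of squares
    return 1 - ss_res / ss_tot


# Toy values for illustration only (not data from this study)
measured = [60.0, 85.0, 120.0, 150.0]
predicted = [62.0, 80.0, 125.0, 149.0]
print(r2(measured, predicted))  # 1 - 55 / 4668.75, about 0.988
```

A perfect prediction gives R² = 1. R² can become negative when the predictions are worse than simply predicting the mean.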

Comparison of different feature combinations

In this section, different feature combinations were constructed based on the feature correlations (Fig. 3) and the significance of individual features. These combinations are listed in Table 3. Their prediction performance is compared with that of F-4 to explore the effect of feature selection on temperature prediction performance.

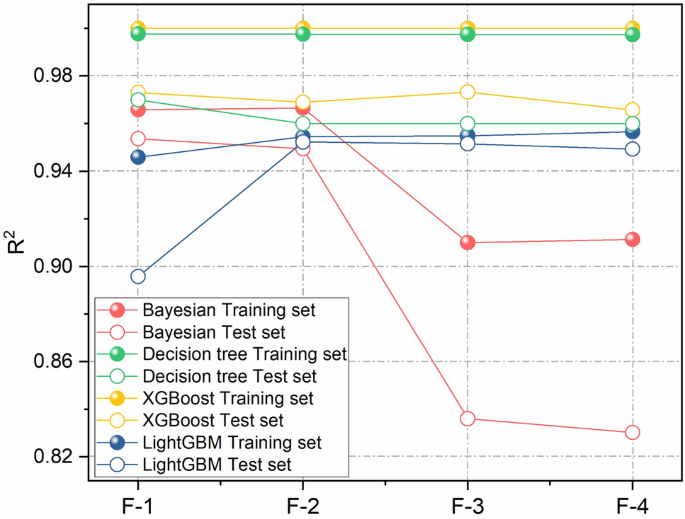

Each of the four algorithms was trained with each of the above feature combinations, and the prediction results for the training and test sets were plotted (Fig. 7). Bayesian ridge regression predicts well when appropriate input features are chosen (F-1, F-2), but its predictive performance decreases when less influential features are added (F-3, F-4; red line in Fig. 7). For decision tree regression and XGBoost, the prediction error of reservoir temperature differs little among the feature combinations (green and yellow dotted lines). LightGBM performs better with F-2 and F-3, and worse with F-1 and F-4 (blue dotted line).

R² of different algorithms using different feature combinations.
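The training loop over algorithms and feature combinations can be sketched as follows. This sketch uses synthetic data. It also uses hypothetical column subsets named A to C, because the real F-1 to F-4 are defined in Table 3. Only the two scikit-learn models are included so the block stays self-contained. XGBoost and LightGBM would be added to the `models` dictionary in the same way. `clone` gives each combination a fresh, unfitted copy of the estimator.

```python
import numpy as np
from sklearn.base import clone
from sklearn.linear_model import BayesianRidge
from sklearn.metrics import r2_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

# Synthetic data (hypothetical): 6 features of decreasing importance
rng = np.random.default_rng(4)
X = rng.uniform(0, 1, size=(300, 6))
y = 90 * X[:, 0] + 40 * X[:, 1] + 10 * X[:, 2] + rng.normal(0, 3, size=300)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

# Hypothetical feature combinations as column indices (real ones are in Table 3)
combos = {"A": [0, 1], "B": [0, 1, 2], "C": [0, 1, 2, 3, 4, 5]}
models = {
    "BayesianRidge": BayesianRidge(),
    "DecisionTree": DecisionTreeRegressor(max_depth=6, random_state=0),
}

scores = {}
for m_name, template in models.items():
    for c_name, cols in combos.items():
        est = clone(template).fit(X_tr[:, cols], y_tr)  # fresh copy per combination
        scores[(m_name, c_name)] = r2_score(y_te, est.predict(X_te[:, cols]))

for key, value in sorted(scores.items()):
    print(key, round(value, 4))
```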

The test results of the four algorithms with the four feature combinations are further compared (Fig. 7 and Table 4). The model accuracies (R²) of the different algorithms with different feature combinations range from 0.8302 to 0.9732. XGBoost performs best, with an average accuracy of 0.9703. It is followed by the decision tree algorithm, with an average accuracy of 0.9625, and LightGBM, with an average accuracy of 0.9373. XGBoost and the decision tree algorithm are not strongly dependent on feature selection. The accuracy of XGBoost ranges from 0.9657 to 0.9732 across feature combinations, and that of the decision tree algorithm, the next best, ranges from 0.96 to 0.97. The accuracies of Bayesian ridge regression and LightGBM are unstable and sensitive to feature selection. Bayesian ridge regression shows the largest fluctuation in accuracy, with a minimum of 0.8302 for feature combination F-4 and a maximum of 0.9537 for F-1. The accuracy of LightGBM falls slightly below 0.9 only when feature combination F-1 is used. Averaged across algorithms, accuracy is highest (0.9577) with feature combination F-2, followed by F-1 (0.9482), and lowest (0.9263) with F-4.

Comparing the prediction performance of the four algorithms with that obtained using the F-4 combination shows that a reasonable selection of input features can improve the prediction results of the models.
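The averages quoted above are plain means over the R² table. As a consistency check, the sketch below summarizes a nested `{algorithm: {combination: R²}}` mapping. It is applied only to the four test-set R² values from the first comparison (0.8302, 0.96, 0.9657 and 0.9493), whose mean equals the reported F-4 average of 0.9263. Treating those values as the F-4 results is an inference from that arithmetic, not something the text states. The full Table 4 values are not reproduced here.

```python
from statistics import mean


def summarize(scores):
    """scores: {algorithm: {combination: R²}} -> per-algorithm and per-combination stats."""
    per_algorithm = {
        alg: (min(v.values()), max(v.values()), mean(list(v.values())))  # (min, max, mean)
        for alg, v in scores.items()
    }
    combos = sorted({c for v in scores.values() for c in v})
    per_combination = {
        c: mean([v[c] for v in scores.values() if c in v]) for c in combos
    }
    return per_algorithm, per_combination


# Test-set R² from the first comparison (assumed here to correspond to F-4)
f4 = {
    "BayesianRidge": {"F-4": 0.8302},
    "DecisionTree": {"F-4": 0.96},
    "XGBoost": {"F-4": 0.9657},
    "LightGBM": {"F-4": 0.9493},
}
per_alg, per_combo = summarize(f4)
print(round(per_combo["F-4"], 4))  # -> 0.9263
```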

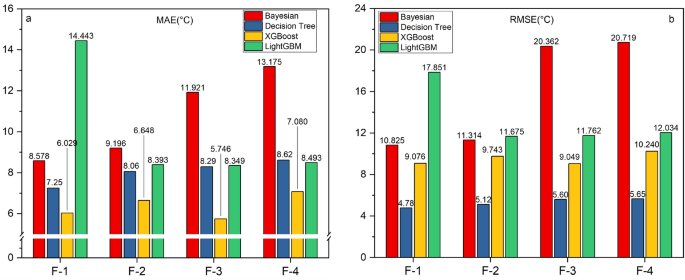

Furthermore, the evaluation results of different algorithms using various feature combinations based on the MAE and RMSE metrics are presented in Table 5 and Fig. 8. The results indicate that, for different algorithms using different feature combinations, the Mean Absolute Error (MAE) ranges from 6.0287 to 14.4431, and the Root Mean Squared Error (RMSE) ranges from 4.78 to 20.7185. XGBoost and Decision Tree algorithms exhibit the smallest prediction errors. XGBoost achieves an MAE ranging from 6.0287 to 7.08 across different feature combinations, followed by the Decision Tree algorithm with an MAE range of 7.25 to 8.62. However, Bayesian Ridge Regression and LightGBM algorithms exhibit instability and sensitivity to feature selection. When using only feature combination F-1, the LightGBM algorithm shows larger MAE and RMSE values. The Bayesian Ridge Regression algorithm demonstrates the greatest fluctuation in error, with RMSE exceeding 20 when using feature combinations F-3 and F-4. With feature combination F-2, the algorithms exhibit the smallest average errors, with an MAE of 7.3392 and RMSE of 10.1981. Next is feature combination F-3, with an average MAE of 7.9094 and RMSE of 12.3658. Feature combination F-4 results in the largest average errors for the algorithms, with an MAE of 8.5995 and RMSE of 12.9033.

MAE and RMSE of different algorithms using different combinations of features.
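Because RMSE is the quadratic mean of the absolute errors and MAE is their arithmetic mean, RMSE ≥ MAE for any single set of predictions. Equality holds only when all absolute errors are equal. This gives a quick consistency check on paired MAE/RMSE values such as those in Table 5. The sketch below verifies the inequality on random error vectors, which are synthetic and not the study's residuals.

```python
import math
import random


def mae(errors):
    """Arithmetic mean of absolute errors."""
    return sum(abs(e) for e in errors) / len(errors)


def rmse(errors):
    """Quadratic mean (root mean square) of errors."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))


random.seed(0)
holds = all(
    rmse(errs) >= mae(errs) - 1e-12  # small tolerance for floating-point rounding
    for errs in ([random.gauss(0, 10) for _ in range(50)] for _ in range(1000))
)
print(holds)  # -> True
```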

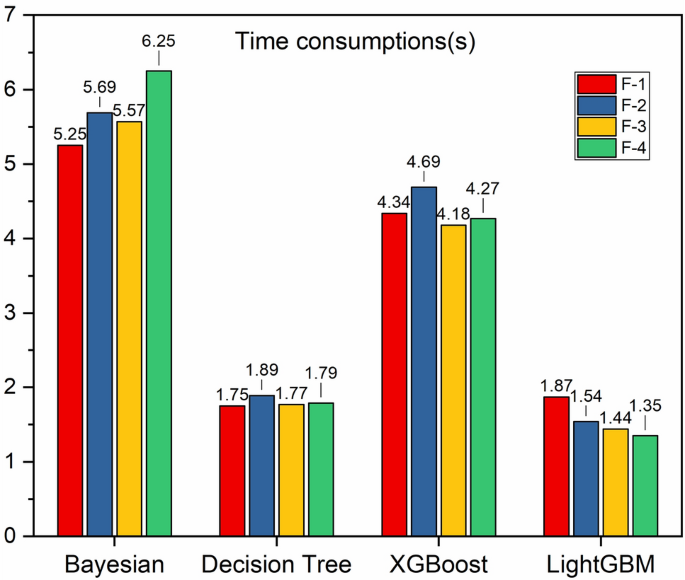

We further measured the running time of each model under the various feature combinations (Fig. 9). Computational performance was quantified by recording the execution time of each model's learning curve, summed over the validation sample sizes. All else being equal, a model that requires less running time is preferable, whereas a slow model is costly to train and tune. As Fig. 9 shows, the models rank in descending order of running efficiency as LightGBM, decision tree regression, XGBoost and Bayesian ridge regression.

Time consumption of the learning curves of the machine learning models.
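The timing procedure can be sketched with scikit-learn's `learning_curve`. With `return_times=True`, it also returns per-fold fit and scoring times. This sketch assumes that the execution time of the learning curve means the sum of those times over all training sizes and folds. The synthetic data, the five training sizes and the two example models are illustrative assumptions, and absolute times depend on hardware.

```python
import numpy as np
from sklearn.linear_model import BayesianRidge
from sklearn.model_selection import learning_curve
from sklearn.tree import DecisionTreeRegressor

# Synthetic data (hypothetical)
rng = np.random.default_rng(5)
X = rng.uniform(0, 1, size=(400, 5))
y = 100 * X[:, 0] + 20 * X[:, 1] + rng.normal(0, 2, size=400)

estimators = {
    "BayesianRidge": BayesianRidge(),
    "DecisionTree": DecisionTreeRegressor(max_depth=6, random_state=0),
}

timings = {}
for name, est in estimators.items():
    sizes, train_scores, val_scores, fit_times, score_times = learning_curve(
        est, X, y, train_sizes=np.linspace(0.1, 1.0, 5), cv=5, return_times=True
    )
    # fit_times and score_times have shape (n_train_sizes, n_cv_folds)
    timings[name] = float(fit_times.sum() + score_times.sum())

print(timings)  # total seconds per model
```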

Considering the results of the four evaluation metrics above, the optimal configuration for reservoir temperature prediction is the XGBoost algorithm with feature combination F-3. This configuration yields the smallest model error and the highest accuracy (0.9732).

Comparison with traditional geothermometer methods

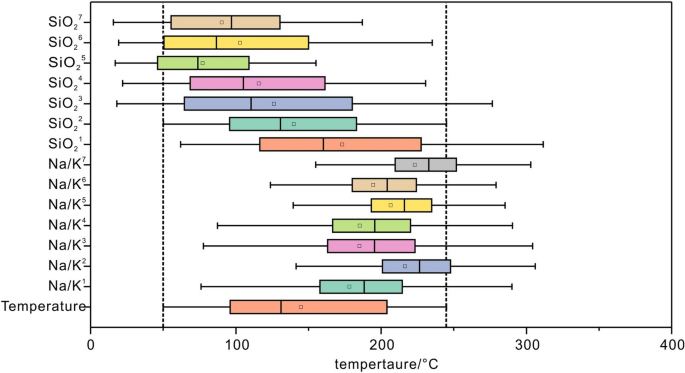

The reservoir temperatures predicted with sodium–potassium (Na–K) cation and SiO2 geochemical geothermometer formulas (Suppl. Appendix B) are compared with the measured temperatures (Fig. 10). The Na–K geothermometers are based on cation-exchange reactions. They do not apply to hot water in which waters of different origins have mixed, nor to acidic water with a pH much less than 7 [11,12,13]. The silica geothermometers are based on the solubility of silica minerals and are applicable between 20 and 250 °C [51]. Therefore, only the alkaline samples (pH greater than 7) were used in the calculations with the Na–K geothermometers.

Comparison of predicted reservoir temperature with measured temperature based on chemical geothermometers.
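Suppl. Appendix B is not reproduced here, so this section does not show which formulas the labels Na/K1 to Na/K7 and SiO2-1 to SiO2-7 refer to. As an illustration only, the sketch below implements two widely used published forms, assuming concentrations in mg/kg. The first is the quartz geothermometer without steam loss (Fournier, 1977), t = 1309/(5.19 − log SiO2) − 273.15. The second is the Na/K geothermometer of Fournier (1979), t = 1217/(1.483 + log(Na/K)) − 273.15. The sketch also applies the pH > 7 filter described above. The sample dictionaries and their keys are hypothetical.

```python
import math


def t_quartz_no_steam_loss(sio2_mg_kg: float) -> float:
    """Quartz geothermometer, no steam loss (Fournier, 1977); SiO2 in mg/kg, result in °C."""
    return 1309.0 / (5.19 - math.log10(sio2_mg_kg)) - 273.15


def t_na_k_fournier(na_mg_kg: float, k_mg_kg: float) -> float:
    """Na/K geothermometer (Fournier, 1979); concentrations in mg/kg, result in °C."""
    return 1217.0 / (1.483 + math.log10(na_mg_kg / k_mg_kg)) - 273.15


def na_k_estimates(samples):
    """Apply the Na/K formula only to alkaline samples (pH > 7), as described in the text."""
    return [t_na_k_fournier(s["Na"], s["K"]) for s in samples if s["pH"] > 7]


print(round(t_quartz_no_steam_loss(100.0), 2))  # -> 137.19
print(round(t_na_k_fournier(100.0, 10.0), 2))   # -> 216.98
```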

The results show that the Na/K1 geothermometer performs well mainly for reservoirs above 150 °C and is not applicable to this dataset. The temperatures estimated by Na/K2, Na/K3, Na/K4, Na/K5, Na/K6 and Na/K7 are much higher than the measured temperatures and do not reflect the actual conditions. For the SiO2 geothermometers, SiO2-1 gives estimates slightly higher than the measured temperature, and SiO2-4, SiO2-5, SiO2-6 and SiO2-7 give lower estimates. SiO2-2 and SiO2-3 give estimates close to the measured temperature and provide reasonable predictions for the geothermal field. The SiO2-2 and SiO2-3 methods yield MAEs of 30.80 and 51.16, respectively, and RMSEs of 57.56 and 71.65, respectively.

The SiO2-2 and SiO2-3 geothermometer estimates were compared with the machine learning predictions of reservoir temperature. All machine learning predictions are superior to those of the SiO2-2 and SiO2-3 geothermometers. The error distributions of the geothermometers and the machine learning models differ considerably, which indicates that the machine learning algorithms have an advantage.

Generalizability analysis of the model

Ideally, a model should perform without substantial performance bias when applied to different datasets. In other words, a model with good generalization can successfully apply the patterns learned during training to new and different datasets, rather than only performing well on the training data. In this section, we validate the generalizability of the model by applying data from previously published studies [Shadfar Davoodi, Hung Vo Thanh (2023); Shadfar Davoodi, Mohammad Mehrad (2023)] to our trained models for prediction. Table 6 compares the RMSE, MAE and R² values obtained with the modeling algorithms of this study against those reported in the published studies. The results in Table 6 show that the models still make good predictions on the new data. The most accurate predictions come from the XGBoost model of this study, with RMSE, MAE and R² of 0.328, 0.228 and 0.997, respectively.
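Applying a trained model to an external dataset amounts to predicting on the new features without refitting and then scoring the result. The sketch below assumes the external data already have the same feature columns, order and units as the training data, a precondition the text does not state. Both datasets are synthetic stand-ins produced by a hypothetical `make_data` helper, and a decision tree stands in for the study's models.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score
from sklearn.tree import DecisionTreeRegressor


def make_data(n, seed):
    """Hypothetical generator standing in for a dataset with the same feature columns."""
    rng = np.random.default_rng(seed)
    X = rng.uniform(0, 1, size=(n, 3))
    y = 100 * X[:, 0] + 30 * X[:, 1] + rng.normal(0, 2, size=n)
    return X, y


X_own, y_own = make_data(400, seed=0)  # stands in for this study's training data
X_ext, y_ext = make_data(100, seed=1)  # stands in for an external published dataset

model = DecisionTreeRegressor(max_depth=6, random_state=0).fit(X_own, y_own)
pred = model.predict(X_ext)  # no refitting on the external data

rmse = float(np.sqrt(mean_squared_error(y_ext, pred)))
mae = mean_absolute_error(y_ext, pred)
r2 = r2_score(y_ext, pred)
print(f"RMSE={rmse:.3f}  MAE={mae:.3f}  R2={r2:.3f}")
```

RMSE is computed as the square root of `mean_squared_error`, so the sketch does not depend on version-specific RMSE helpers.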