Scientists are grappling with the challenge of cross-survey generalization in stellar spectral analysis, especially the transfer of knowledge from low- to medium-resolution spectroscopic surveys. Xiaosheng Zhao, Yuan-Sen Ting, and Rosemary F. G. Wyse, working with colleagues from the Johns Hopkins University Department of Physics and Astronomy, the Ohio State University Department of Astronomy, the Chinese Academy of Sciences School of Astronomy and Space Sciences, and the Johns Hopkins University Department of Applied Mathematics and Statistics, demonstrate a new approach using pre-trained neural networks. Their research focuses on leveraging data from the LAMOST low-resolution survey to improve stellar parameter estimates from the DESI medium-resolution survey, an important task for large-scale Galactic archaeology and for understanding stellar populations. By comparing a multilayer perceptron trained directly on spectra with a multilayer perceptron that uses embeddings from a transformer-based model, and by evaluating different fine-tuning strategies, the team shows that a simple pre-trained model can achieve competitive results, paving the way for more efficient and accurate stellar analysis across diverse datasets.

Scientists are tackling a long-standing challenge in astronomy: reliably classifying stars using disparate observational data. Obtaining consistent stellar properties across surveys is essential to building a comprehensive picture of galaxies. This study demonstrates a surprisingly effective technique for using artificial intelligence to bridge the gap between datasets of varying quality.

Scientists are grappling with a fundamental challenge in modern astronomy: ensuring consistency when analyzing stellar spectra from different surveys. Cross-survey generalization, that is, the ability to accurately estimate stellar properties such as temperature, chemical composition, and surface gravity from data collected with a variety of instruments and methods, is critical to getting the most science out of the wealth of spectroscopic data currently available.

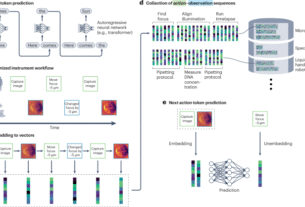

The study presents a detailed investigation of techniques for transferring knowledge gained from LAMOST low-resolution spectra (LRS) to the more detailed medium-resolution spectra (MRS) provided by the DESI instrument. The researchers demonstrate that a pre-trained multilayer perceptron (MLP), a type of neural network, can achieve surprisingly strong performance in this cross-survey setting without any fine-tuning.

Specifically, the work focuses on taking a model initially trained on the large-scale LAMOST dataset and adapting it for use on DESI spectra. The researchers compared training an MLP directly on raw spectral data with training it on spectral embeddings, compressed representations of a spectrum produced by a more complex, self-supervised transformer-based model (often referred to as a foundation model).

These foundation models are pre-trained on vast amounts of data to learn common spectral features. The study also considered various fine-tuning strategies, including updating only a small portion of the parameters of a pre-trained model, to optimize performance on DESI data. The results show that transformer-based embeddings offer advantages when analyzing stars with relatively high metal content ([Fe/H] > -1.0), while a simple MLP trained directly on LAMOST spectra performs better for metal-poor stars.

This finding suggests that a complex foundation model is not always required to generalize effectively across surveys. Furthermore, the optimal approach for fine-tuning the model depends on which stellar parameter is being estimated. While the study highlights the potential for simple pre-trained neural networks to deliver competitive results, it also suggests that further research is needed to fully understand the role of spectral foundation models in this context. Ultimately, this research could pave the way for more efficient and reliable analysis of large spectroscopic datasets, allowing astronomers to build a more complete picture of the Milky Way and the stars within it.

Cross-survey stellar abundance determination using pre-trained multilayer perceptrons and transformer embeddings

The pre-trained multilayer perceptron shows strong performance in transferring stellar spectral analysis from the LAMOST low-resolution survey to DESI medium-resolution spectra without any fine-tuning, establishing a baseline for survey-to-survey generalization. Subsequent moderate fine-tuning on DESI spectra improves these results, demonstrating the adaptability of the pre-trained model to new datasets.

Specifically, for iron abundance ([Fe/H]), embeddings derived from transformer-based models offer advantages only in the metal-rich regime ([Fe/H] > -1.0), where they exceed the performance of MLPs trained directly on LAMOST spectra. In the metal-poor regime, however, MLPs trained directly on low-resolution LAMOST spectra consistently perform better than MLPs that use embeddings from transformer-based models.
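A comparison of this kind amounts to scoring predictions separately on each side of the [Fe/H] = -1.0 boundary. The sketch below is a minimal illustration of that bookkeeping, assuming mean absolute error as the metric (the article does not say which metric the authors use). The function name and the sample values are hypothetical and are not results from the paper.

```python
def mae_by_regime(true_feh, pred_feh, threshold=-1.0):
    """Mean absolute error of [Fe/H] predictions on each side of a threshold.

    Stars with true [Fe/H] > threshold count as metal-rich, the rest as
    metal-poor. An empty regime yields None.
    """
    errors = {"metal_rich": [], "metal_poor": []}
    for t, p in zip(true_feh, pred_feh):
        regime = "metal_rich" if t > threshold else "metal_poor"
        errors[regime].append(abs(p - t))
    return {k: (sum(v) / len(v) if v else None) for k, v in errors.items()}


# Made-up values for illustration only; not results from the paper.
true_feh = [-0.2, -0.5, -1.5, -2.3]
pred_feh = [-0.1, -0.7, -1.2, -2.0]
scores = mae_by_regime(true_feh, pred_feh)
print(scores)
```

Running the same function on the outputs of two models (for example, a spectrum-based MLP and an embedding-based MLP) would show whether one is ahead in only one of the two regimes.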

This suggests that the benefits of spectral foundation models are not uniform across the range of stellar metallicity. Further analysis revealed that the optimal fine-tuning strategy is parameter dependent: different stellar properties benefit from different adaptation approaches. Residual head adapters, LoRA, and full fine-tuning are all evaluated, with the most effective method varying with the specific stellar parameter being estimated.

These findings highlight the potential of simple pre-trained MLPs as a viable solution for cross-survey generalization in stellar spectroscopy. These models can achieve competitive results without relying on complex foundation models, at least for specific stellar populations and parameters. Although transformer-based models are promising for metal-rich stars, their effectiveness requires further study, especially in the metal-poor regime, where simpler architectures currently perform better.
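Of the strategies named above, LoRA has a compact standard definition: the pre-trained weight matrix W stays frozen, and only a low-rank correction (alpha / r) · B · A is trained. The NumPy sketch below illustrates that idea on a single dense layer. It is not the authors' implementation; the class name, layer sizes, rank, and scaling factor are all assumptions chosen for illustration.

```python
import numpy as np


class LoRALinear:
    """Frozen dense layer plus a trainable low-rank update (LoRA).

    Effective weight: W + (alpha / r) * B @ A, with W frozen and only
    A (r x d_in) and B (d_out x r) trained.
    """

    def __init__(self, weight, bias, rank=4, alpha=8.0, seed=0):
        rng = np.random.default_rng(seed)
        d_out, d_in = weight.shape
        self.weight, self.bias = weight, bias           # frozen
        self.A = rng.normal(0.0, 0.01, (rank, d_in))    # trainable
        self.B = np.zeros((d_out, rank))                # trainable, starts at zero
        self.scale = alpha / rank

    def __call__(self, x):
        base = x @ self.weight.T + self.bias
        update = (x @ self.A.T) @ self.B.T
        return base + self.scale * update

    def n_trainable(self):
        return self.A.size + self.B.size


# Toy layer sizes, hypothetical and unrelated to the paper's network.
rng = np.random.default_rng(1)
W, b = rng.normal(size=(64, 512)), np.zeros(64)
layer = LoRALinear(W, b, rank=4)
x = rng.normal(size=(8, 512))
# B starts at zero, so the adapted layer initially reproduces the frozen one.
same_at_init = np.allclose(layer(x), x @ W.T + b)
print(same_at_init, layer.n_trainable(), W.size)
```

Because B is initialized to zero, fine-tuning starts exactly from the pre-trained behavior, and the number of trainable values (r · (d_in + d_out)) is much smaller than the full weight matrix. Full fine-tuning, by contrast, updates every entry of W.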

Transfer of stellar parameter estimates from LAMOST low-resolution spectra to DESI medium-resolution spectra using multilayer perceptrons

Multilayer perceptrons (MLPs) form the core of this research on cross-survey generalization in stellar spectral analysis, which specifically addresses the transfer of information from low-resolution to medium-resolution data. The team pre-trains these MLPs on low-resolution spectra (LRS) from the Large Sky Area Multi-Object Fiber Spectroscopic Telescope (LAMOST) and then fine-tunes them for medium-resolution spectra (MRS) acquired by the Dark Energy Spectroscopic Instrument (DESI).

This approach makes it possible to evaluate how well pre-trained models overcome discrepancies between datasets acquired with different instruments. The MLP is designed to map spectral information directly to stellar parameters and accepts as input either continuum-normalized spectra or embeddings derived from transformer-based models. When operating on LRS flux, the network processes data restricted to 1,462 logarithmically spaced wavelength bins spanning 400 to 560 nm.
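The input grid described above can be sketched in a few lines. This is a minimal illustration, assuming the 1,462 bins are equally spaced in log10 of wavelength and that both endpoints (400 nm and 560 nm) are included. The article does not say whether the grid marks bin centers or edges, and the function name is hypothetical.

```python
import math


def log_wavelength_grid(lam_min_nm=400.0, lam_max_nm=560.0, n_bins=1462):
    """Return n_bins wavelengths (nm) equally spaced in log10(lambda).

    Assumption: both endpoints are included; the article does not say
    whether the grid marks bin centers or bin edges.
    """
    log_min, log_max = math.log10(lam_min_nm), math.log10(lam_max_nm)
    step = (log_max - log_min) / (n_bins - 1)
    return [10 ** (log_min + i * step) for i in range(n_bins)]


grid = log_wavelength_grid()
# On a log grid, neighboring bins differ by a constant ratio, so every
# pixel corresponds to the same velocity width.
ratio = grid[1] / grid[0]
velocity_step_kms = 299792.458 * math.log(ratio)
print(len(grid), grid[0], grid[-1], velocity_step_kms)
```

A constant wavelength ratio between neighboring pixels is the usual reason for choosing a logarithmic grid: a Doppler shift then moves the whole spectrum by the same number of pixels at every wavelength.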

The architecture consists of fully connected layers with ReLU activations, culminating in an output layer that predicts the target parameters, for a total of approximately 2.06 million trainable parameters. The team uses pre-trained MLPs originally developed by Zhao et al. to predict the logarithmic abundance ratios [Fe/H] and [α/Fe]. For the [Fe/H] model, the training data consist of 90,106 LAMOST LRS spectra, with labels sourced from the APOGEE DR17 catalog for stars with [Fe/H] > -2.0, supplemented by data from PASTEL, SAGA, and other very metal-poor (VMP) and ultra metal-poor (UMP) datasets at lower metallicities.
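To make the parameter count concrete, the sketch below counts the weights and biases of a fully connected network. The article does not give the layer widths, so the widths used here are hypothetical: they were picked only because, with 1,462 input bins and a single output, the total happens to land close to the quoted 2.06 million. They should not be read as the authors' architecture.

```python
def count_mlp_params(layer_sizes):
    """Count weights and biases of a fully connected network.

    layer_sizes lists the width of every layer, input first, output last.
    Each layer contributes n_in * n_out weights plus n_out biases;
    ReLU activations add no parameters.
    """
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


# Hypothetical widths (NOT from the paper): 1,462 flux bins in, one label out.
HYPOTHETICAL_LAYERS = [1462, 1024, 512, 64, 1]
n_params = count_mlp_params(HYPOTHETICAL_LAYERS)
print(f"{n_params:,} trainable parameters")
```

The first layer dominates the count because its weight matrix scales with the number of input wavelength bins, which is typical of MLPs that take a full spectrum as input.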

The [α/Fe] model uses 85,400 spectra, also labeled with APOGEE DR17 data. The data are split 9:1 into training and validation sets, and the model is optimized with the AdamW optimizer using a learning rate of 1×10⁻⁵, a weight decay of 1×10⁻⁴, and a batch size of 32. Training runs for 100 epochs, with checkpoint selection guided by validation performance. To further explore feature representations, the team also evaluates spectral embeddings produced by the SpecCLIP framework, a transformer-based model designed to reconcile spectra from diverse surveys by creating a shared representation space. This makes it possible to compare MLPs trained directly on spectra with MLPs trained on embeddings derived from these foundation models, and to evaluate the benefits of leveraging pre-trained, self-supervised models.
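The training setup described above can be summarized as a small configuration plus two bookkeeping steps: the 9:1 split and the choice of checkpoint. The sketch below is a minimal illustration, not the authors' pipeline. The hyperparameter values are those quoted in the article, while the names, the shuffling seed, and the use of lowest validation loss as the selection criterion are assumptions.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainConfig:
    # Values quoted in the article.
    learning_rate: float = 1e-5
    weight_decay: float = 1e-4
    batch_size: int = 32
    epochs: int = 100
    train_parts: int = 9   # 9:1 train/validation split
    val_parts: int = 1


def split_indices(n_spectra, cfg, seed=0):
    """Shuffle indices and split them train/validation (seed is an assumption)."""
    idx = list(range(n_spectra))
    random.Random(seed).shuffle(idx)
    n_train = n_spectra * cfg.train_parts // (cfg.train_parts + cfg.val_parts)
    return idx[:n_train], idx[n_train:]


def select_checkpoint(val_losses):
    """Return the 0-based epoch whose validation loss is lowest (assumed criterion)."""
    return min(range(len(val_losses)), key=val_losses.__getitem__)


cfg = TrainConfig()
train_idx, val_idx = split_indices(90_106, cfg)   # [Fe/H] training-set size
steps_per_epoch = math.ceil(len(train_idx) / cfg.batch_size)

# Made-up validation curve, only to exercise the selection logic.
toy_val_losses = [0.30, 0.21, 0.18, 0.19, 0.22]
best_epoch = select_checkpoint(toy_val_losses)
print(len(train_idx), len(val_idx), steps_per_epoch, best_epoch)
```

Selecting the checkpoint on held-out validation data, rather than keeping the final epoch, guards against overfitting during a long run at a small learning rate.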

Machine learning models effectively combine data from different stellar spectroscopic surveys

Scientists are increasingly relying on large-scale spectroscopic surveys to map the Milky Way and understand the history of galaxy formation. However, these surveys are not created equal. Differences in instrument resolution and spectral range pose major obstacles when attempting to combine data, a problem known as cross-survey generalization. Astronomers have struggled for years to reliably transfer calibrations and analyses between surveys, often requiring painstaking manual adjustments.

This study is a promising step toward automating that process, demonstrating that a relatively simple machine learning model can bridge the gap between low- and medium-resolution stellar spectra with surprising effectiveness. It is particularly noteworthy that the multilayer perceptron succeeded without extensive fine-tuning. This suggests that a significant amount of information is stored in the wide range of spectral features captured by low-resolution instruments, and that the model can “learn” from them and adapt to higher-resolution data.

Although more complex transformer-based models show some advantage in certain scenarios, their performance is not superior across the board, indicating that model selection should be carefully considered. The finding that the optimal fine-tuning strategy varies depending on the estimated stellar parameters is also important, highlighting that there is no one-size-fits-all solution.

In the future, the focus may shift to refining these machine learning pipelines and exploring the potential of even larger and more diverse datasets. For example, the limitations of current foundation models for metal-poor stars require further investigation. The ultimate goal is not only to estimate stellar parameters accurately, but also to unlock the full potential of multi-survey datasets to gain a more complete and nuanced understanding of our galaxy.

👉 More information

🗞 Generalization of low-to-medium resolution spectra with neural networks for stellar parameter estimation: A case study using DESI.

🧠ArXiv: https://arxiv.org/abs/2602.15021