Basil Kyriacou and colleagues at Terra Quantum AG have developed shot-based quantum encoding (SBQE), a new strategy that uses the repeated circuit executions specific to quantum computers, called “shots,” to represent classical data according to a predefined classical probability distribution. The method addresses limitations of existing data encoding techniques by distributing computational resources efficiently and by directly creating mixed-state representations that are intrinsically linked to the classical data. Importantly, SBQE realizes the structure of a multilayer perceptron in a quantum circuit and may provide a path to more efficient quantum machine learning. Benchmarks on standard image datasets demonstrate its potential: SBQE improves on the accuracy of amplitude encoding for both Semeion and Fashion MNIST without requiring additional data encoding gates, and on Semeion its performance is comparable to that of classical neural networks.

Shot-based encoding outperforms amplitude encoding in quantum machine learning benchmarks

The reported test accuracy of 89.1% ± 0.9% on the Semeion dataset represents a significant advance in quantum machine learning: it exceeds previously established amplitude encoding methods by 5.3% and is comparable to the accuracy of width-matched classical networks. Quantum machine learning has so far been hampered by the difficulty of loading classical data into quantum systems efficiently without exceeding the limits of current quantum hardware. Existing data encoding techniques such as angle, amplitude, and basis encoding all have inherent drawbacks. Angle encoding uses relatively shallow circuits, but typically needs one qubit per feature, exponentially more than amplitude encoding. Amplitude encoding makes highly efficient use of qubits, but requires a circuit depth that exceeds the coherence budget of noisy intermediate-scale quantum (NISQ) hardware. Basis encoding offers a different approach, but also struggles with scalability and consistency requirements. SBQE provides an alternative that circumvents these limitations.
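To make the qubit trade-off concrete, the following minimal sketch counts qubits under the usual textbook conventions, which are assumptions here rather than details from the study: angle encoding uses one qubit per feature, and amplitude encoding stores n features in the amplitudes of ceil(log2 n) qubits. The image sizes (16×16 for Semeion, 28×28 for Fashion MNIST) are the standard dimensions of those datasets, and the function names are illustrative.

```python
def angle_encoding_qubits(n_features: int) -> int:
    """Assumed convention: one rotation gate, and one qubit, per feature."""
    return n_features


def amplitude_encoding_qubits(n_features: int) -> int:
    """Smallest q with 2**q >= n_features, using integer arithmetic."""
    return max(1, (n_features - 1).bit_length())


# Standard flattened image sizes for the two benchmark datasets.
datasets = {"Semeion (16x16)": 16 * 16, "Fashion MNIST (28x28)": 28 * 28}

for name, n in datasets.items():
    print(
        f"{name}: {n} features, "
        f"angle = {angle_encoding_qubits(n)} qubits, "
        f"amplitude = {amplitude_encoding_qubits(n)} qubits"
    )
```

Under these assumptions, angle encoding needs hundreds of qubits for either dataset, while amplitude encoding needs 8 and 10 qubits respectively; the cost of amplitude encoding shows up in state-preparation depth, which this count does not capture.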

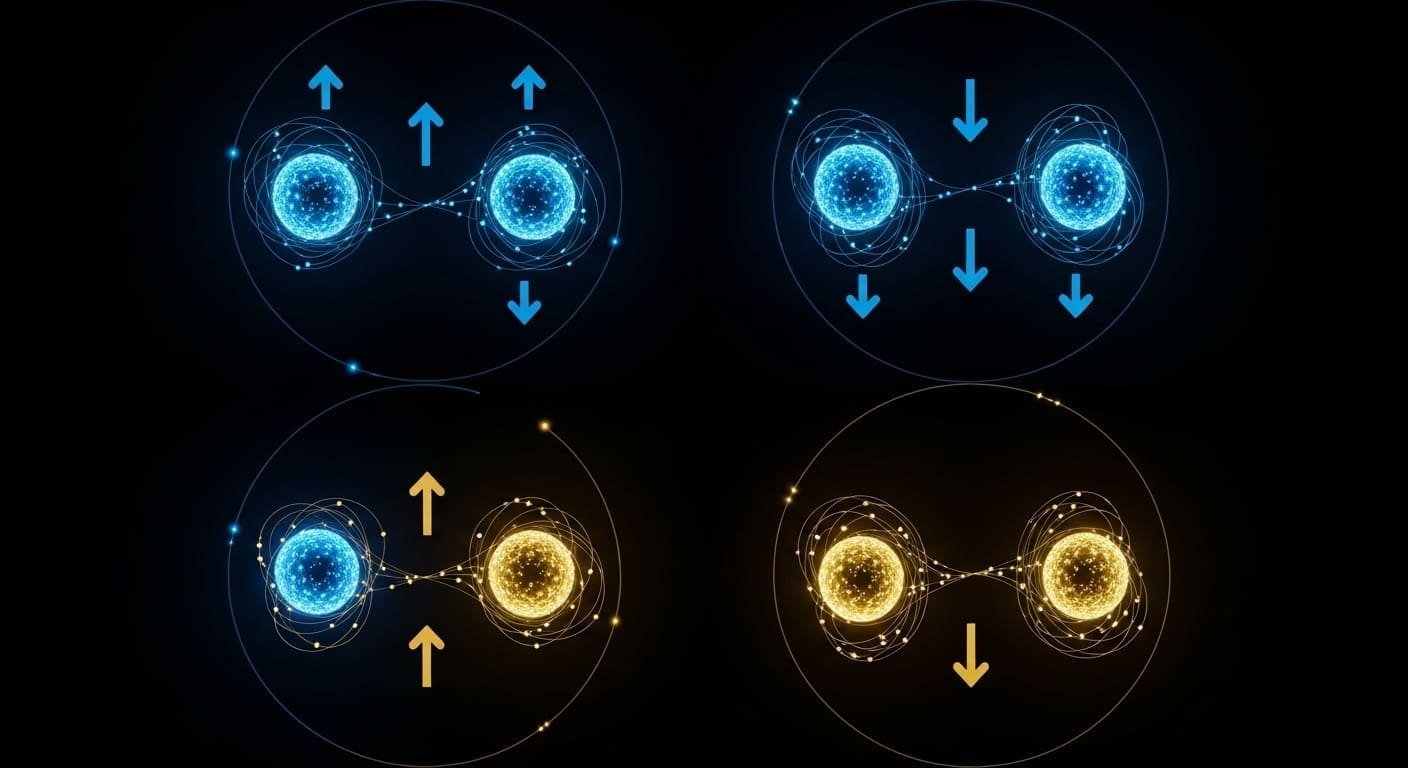

Shot-based quantum encoding (SBQE) avoids these problems by exploiting “shots,” the repeated executions of the same quantum circuit that are native to quantum computers, to construct a mixed-state representation directly linked to the classical data. The mixed state is created by preparing the quantum system in different initial states, with probabilities derived from the classical data. This effectively realizes a multilayer perceptron within a quantum circuit, enabling complex data processing.

The benchmark used 10 independent initializations for each model to account for training variation and provide statistically robust performance estimates. In addition to the Semeion result, the method achieved a test accuracy of 80.95% ± 0.10% on Fashion MNIST, a commonly used image recognition benchmark. This is a 2.0% improvement over existing amplitude encoding techniques and a 1.3% improvement over linear multilayer perceptrons. Despite these advances, the reported accuracies do not yet show a clear advantage over highly optimized classical machine learning models, particularly given the significant overhead of using quantum hardware. However, because SBQE simplifies circuit construction and avoids complex data encoding gates, further optimization and advances in quantum hardware could lead to more competitive results. Eliminating data encoding gates reduces the number of noise-sensitive operations and potentially improves the fidelity of quantum computations.
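The shot-distribution idea described above can be illustrated with a small classical simulation. This is a sketch under stated assumptions, not the authors' implementation: features are assumed non-negative and are normalized into probabilities, the shot budget is split across computational basis states by multinomial sampling, and a random unitary stands in for a trained circuit. All function names are hypothetical.

```python
import numpy as np


def allocate_shots(x, total_shots, rng):
    """Split the shot budget across basis states in proportion to the data.

    Assumption: features are non-negative, so x / sum(x) is a valid
    probability distribution over initial basis states.
    """
    x = np.asarray(x, dtype=float)
    p = x / x.sum()
    return rng.multinomial(total_shots, p)


def random_unitary(dim, rng):
    """Stand-in for a trained circuit: a unitary from a QR decomposition."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, _ = np.linalg.qr(z)
    return q


def sbqe_counts(x, unitary, total_shots, rng):
    """Simulate measurement counts when each shot starts in a data-chosen basis state."""
    shots_per_state = allocate_shots(x, total_shots, rng)
    counts = np.zeros(len(x), dtype=np.int64)
    for i, n_i in enumerate(shots_per_state):
        if n_i == 0:
            continue  # no shots were assigned to this initial state
        probs = np.abs(unitary[:, i]) ** 2  # Born rule for input |i>
        probs = probs / probs.sum()         # guard against rounding error
        counts += rng.multinomial(n_i, probs)
    return counts


rng = np.random.default_rng(0)
x = np.array([0.0, 3.0, 1.0, 4.0])  # toy 4-feature input (2 qubits)
U = random_unitary(4, rng)
counts = sbqe_counts(x, U, total_shots=10_000, rng=rng)

# The pooled frequencies estimate the mixed-state outcome probabilities.
expected = (np.abs(U) ** 2) @ (x / x.sum())
print(counts / counts.sum())
print(expected)
```

No encoding gates appear anywhere in the simulated circuit: the data enter only through how many shots each initial state receives, and the pooled counts approximate the outcome distribution of the corresponding mixed state.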

Scaling quantum data loading with repeated-measurement techniques

Efficiently loading data into quantum computers has long been a fundamental obstacle for real-world machine learning applications. While the exponential scaling of Hilbert space offers vast computational possibilities, exploiting it requires efficient methods for mapping classical data to quantum states. The new technique uses the repeated measurements, or “shots,” inherent in quantum computing to represent classical data, potentially providing a more scalable solution. An important question remains open: whether the method can scale to the very large and complex datasets typical of real-world problems, such as those encountered in natural language processing or high-resolution image analysis. Initial demonstrations are limited to the image datasets Fashion MNIST and Semeion, which contain 60,000 training images and 1,593 images, respectively, but the underlying principles may provide a path to processing larger amounts of data. Further research is needed to evaluate performance on high-dimensional and complex datasets.

SBQE represents a significant step toward addressing the data loading challenge in quantum machine learning. Distributing shots across multiple initial quantum states, guided by the data itself, creates a mixed quantum state that is a probabilistic representation of the data, and the resulting quantum circuit is structurally equivalent to a multilayer perceptron. This structural equivalence enables established machine learning techniques to be applied within a quantum framework. The potential for scalability arises from using existing quantum hardware capabilities without complex data encoding circuits. As quantum computers become more powerful and coherence times improve, the approach could allow analysis of larger and more complex datasets than many quantum algorithms can currently handle, opening the door to practical applications. Future developments will focus on adapting the method to increasingly complex real-world datasets and exploring its compatibility with different quantum architectures. As dataset sizes grow, investigating resource requirements such as the number of qubits and circuit depth will be important for determining the practical limits of SBQE.
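One way to see why a shot-distributed mixed state behaves like a neural network layer is the following short derivation. It is a sketch under assumptions not spelled out in the article: the data vector x is taken to be non-negative and normalized into probabilities p_i, the initial states are computational basis states, and U(θ) denotes a single trainable unitary.

```latex
% Mixed state prepared by distributing shots according to the data
\rho(x) = \sum_i p_i(x)\, |i\rangle\langle i| ,
\qquad p_i(x) = \frac{x_i}{\sum_k x_k}

% Probability of measuring outcome j after the trainable circuit U(\theta)
P(j \mid x)
  = \langle j |\, U(\theta)\, \rho(x)\, U(\theta)^\dagger\, | j \rangle
  = \sum_i \left| \langle j | U(\theta) | i \rangle \right|^2 p_i(x)
  = \sum_i W_{ji}(\theta)\, p_i(x)

% Effective weight matrix of the layer
W_{ji}(\theta) = \left| U_{ji}(\theta) \right|^2 ,
\qquad \sum_j W_{ji} = \sum_i W_{ji} = 1
```

Under these assumptions the outcome distribution is a linear map W applied to the normalized input, which is the form of one linear perceptron layer with a doubly stochastic weight matrix. How the authors compose several layers is not described in this article, so the derivation covers only the single-layer case.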

Shot-based quantum encoding (SBQE) successfully embeds classical data into quantum states, achieving test accuracies of 89.1% ± 0.9% on the Semeion dataset and 80.95% ± 0.10% on Fashion MNIST. The method improves on existing quantum data encoding techniques, such as amplitude encoding, and rivals the performance of classical neural networks of similar size. SBQE distributes shots, a native resource of quantum hardware, according to the data, creating a mixed quantum state that acts like a multilayer perceptron. The researchers plan to evaluate SBQE on larger and more complex datasets to better understand its scalability and its compatibility with various quantum architectures.