Researchers face a persistent challenge in fitting complex, chaotic experimental data within the limited capabilities of quantum processors. Tie-Jun Wang, Run-Qing Zhang, Ling Qian and colleagues from Beijing University of Posts and Telecommunications and the Institute of Plasma Physics, Chinese Academy of Sciences, present a physics-based framework that combines Koopman operator theory with quantum machine learning. Their work establishes a structural link between the Koopman operator and quantum evolution that linearizes nonlinear dynamics and makes it possible to compress experimental waveforms into a form suitable for quantum processing. Validated on 4,763 labeled channel sequences from a tokamak experiment, the model achieves 97.0% accuracy in identifying corrupted diagnostic data, matching the performance of traditional deep learning with significantly fewer parameters and pointing toward scalable quantum-enhanced data analysis at the edge.

The Koopman operator streamlines classical data for near-term quantum machine learning applications.

Scientists have developed a hybrid classical-quantum framework intended to improve how complex data is processed on near-term quantum computers. The work addresses a critical bottleneck in quantum machine learning: the difficulty of connecting high-dimensional classical data with the limited resources of noisy intermediate-scale quantum (NISQ) processors.

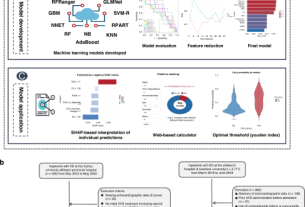

The study introduces a physics-based approach that uses the mathematical relationship between the Koopman operator and quantum evolution to linearize nonlinear dynamics. This theoretical foundation supports a practical pipeline in which the Koopman operator acts as a “data distiller”, compressing complex waveforms into compact features amenable to quantum processing.
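To make the "data distiller" idea concrete, here is a minimal sketch in Python. The article does not say how the Koopman operator is approximated, so this example assumes delay-embedded dynamic mode decomposition (DMD), a common finite-dimensional approximation. The function name `koopman_features` and the parameters `delay` and `rank` are hypothetical and are not taken from the study.

```python
import numpy as np

def koopman_features(waveform, delay=16, rank=4):
    """Compress a 1-D waveform into 2*rank features via delay-embedded DMD.

    Illustrative only: `delay` and `rank` are assumed values, not the study's.
    """
    x = np.asarray(waveform, dtype=float)
    n_cols = x.size - delay + 1
    # Hankel (delay-embedding) matrix: H[i, j] = x[i + j],
    # so each column is a length-`delay` window of the signal.
    H = np.stack([x[i:i + n_cols] for i in range(delay)])
    X, Y = H[:, :-1], H[:, 1:]  # snapshots and their one-step successors
    # Rank-truncated SVD of the snapshot matrix.
    U, s, Vh = np.linalg.svd(X, full_matrices=False)
    U, s, Vh = U[:, :rank], s[:rank], Vh[:rank]
    # Reduced linear operator: a finite-dimensional Koopman approximation.
    K = U.T @ Y @ Vh.T @ np.diag(1.0 / s)
    eigvals = np.linalg.eigvals(K)
    # Sort by phase so the feature ordering is deterministic.
    eigvals = eigvals[np.argsort(np.angle(eigvals))]
    # Moduli encode growth or decay rates, phases encode frequencies.
    return np.concatenate([np.abs(eigvals), np.angle(eigvals)])

# Usage: a 400-sample synthetic signal becomes 8 numbers.
t = np.arange(400)
signal = np.exp(-0.01 * t) * np.cos(0.3 * t) + 0.5 * np.cos(1.1 * t)
features = koopman_features(signal, delay=16, rank=4)
print(features.shape)
```

The point of the design is that the eigenvalues summarize the dominant oscillation and decay rates of the waveform, so a handful of numbers can stand in for hundreds of samples before anything reaches a quantum circuit. Choosing `rank` larger than the effective rank of the signal divides by near-zero singular values, so in practice it would need tuning.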

Specifically, the team designed a system in which the Koopman operator reduces the dimensionality of classical data before it is processed by a modular parallel quantum neural network. The framework was validated on 4,763 labeled channel sequences derived from 433 discharges of a tokamak system, a type of device central to fusion energy research.

The resulting model achieved 97.0% accuracy in identifying corrupted diagnostic data, comparable to the performance of state-of-the-art deep classical convolutional neural networks. Importantly, this is achieved with orders of magnitude fewer trainable parameters, indicating a significant reduction in computational demand.

This work establishes a new paradigm for leveraging quantum processing in resource-limited environments and paves the way for quantum-enhanced edge computing. This framework prepares classical data for efficient quantum coprocessing by intelligently reducing data dimensionality using physics-based modeling.

The approach is not limited to fusion diagnostics. In principle it applies to other fields that deal with high-dimensional, dynamically complex data governed by underlying physical laws, such as fluid dynamics, climate modeling, and financial analysis. Empirical results on the tokamak data show an anomaly detection accuracy of 97.0%, on par with state-of-the-art methods, with significantly reduced parameter complexity compared to traditional deep learning. These results support the feasibility of quantum-enhanced computing in data-intensive scientific workflows and represent a step toward practical quantum benefits in the NISQ era and beyond.

Koopman operator-based feature extraction and variational quantum classification for tokamak diagnostics offer a promising path for real-time control

The research rests on a physics-based Koopman hybrid framework designed to address the challenge of interfacing complex classical data with resource-limited quantum processors. The study begins by using the Koopman operator to linearize nonlinear dynamics in tokamak diagnostic data and by establishing a structural isomorphism between this operator and quantum evolution.
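For readers unfamiliar with the operator, the textbook definition makes the linearization concrete. The article does not spell out the exact form of the isomorphism the authors use, so what follows is the standard correspondence, offered as an assumption. The symbols $F$, $g$, $\mathcal{K}$, $\mathcal{L}$ and $H$ are introduced here for illustration. For a discrete-time system $x_{t+1} = F(x_t)$, the Koopman operator acts on observables $g$ of the state rather than on the state itself:

$$
(\mathcal{K} g)(x) = g(F(x)), \qquad \mathcal{K}(a\,g_1 + b\,g_2) = a\,\mathcal{K} g_1 + b\,\mathcal{K} g_2 .
$$

The operator is linear even when $F$ is nonlinear, at the cost of acting on an infinite-dimensional space of functions. When the dynamics are invertible and preserve a measure $\mu$, $\mathcal{K}$ is unitary on $L^2(\mu)$, and the continuous-time family of Koopman operators takes the same form as Schrödinger evolution:

$$
\mathcal{K}^{t} = e^{-i \mathcal{L} t} \quad \longleftrightarrow \quad U(t) = e^{-i H t / \hbar},
$$

where the self-adjoint generator $\mathcal{L}$ plays the role of the Hamiltonian $H$. Real diagnostic signals are dissipative and noisy, so this unitary picture should be read as an idealization of the structural link rather than a description of the authors' construction.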

This theoretical foundation enabled the design of a two-stage pipeline in which the Koopman operator acts as a physics-aware data distiller, compressing high-dimensional waveforms into compact quantum-compatible features. These extracted features were then processed by a modular shallow variational quantum circuit tuned to noisy intermediate-scale quantum (NISQ) constraints.
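The second stage can be illustrated with a small statevector simulation. This is a sketch under stated assumptions, not the authors' circuit: the article gives no gate-level details, so the angle encoding with RY gates, the chain of CNOTs, the readout on the last qubit, and the function name `shallow_vqc` are all hypothetical choices made for illustration.

```python
import numpy as np

def ry(theta):
    """Single-qubit RY rotation matrix."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def apply_1q(state, gate, q, n):
    """Apply a 2x2 gate to qubit q of an n-qubit statevector."""
    psi = state.reshape([2] * n)
    psi = np.tensordot(gate, psi, axes=([1], [q]))
    return np.moveaxis(psi, 0, q).reshape(-1)

def apply_cnot(state, control, target, n):
    """Flip the target qubit on the part of the state where control is 1."""
    psi = state.reshape([2] * n).copy()
    idx = [slice(None)] * n
    idx[control] = 1
    # Selecting control=1 drops one axis, so the target axis may shift.
    t_axis = target - 1 if target > control else target
    psi[tuple(idx)] = np.flip(psi[tuple(idx)], axis=t_axis).copy()
    return psi.reshape(-1)

def shallow_vqc(features, thetas):
    """Return <Z> on the last qubit for an assumed shallow circuit.

    features: one encoding angle per qubit (e.g. Koopman-derived features).
    thetas:   trainable angles with shape (layers, n_qubits).
    """
    features = np.asarray(features, dtype=float)
    thetas = np.atleast_2d(thetas)
    n = features.size
    state = np.zeros(2 ** n)
    state[0] = 1.0  # start in |0...0>
    for q in range(n):  # angle-encode the classical features
        state = apply_1q(state, ry(features[q]), q, n)
    for layer in thetas:  # trainable rotations, then a CNOT chain
        for q in range(n):
            state = apply_1q(state, ry(layer[q]), q, n)
        for q in range(n - 1):
            state = apply_cnot(state, q, q + 1, n)
    probs = (np.abs(state) ** 2).reshape([2] * n)
    return float(probs[..., 0].sum() - probs[..., 1].sum())

# Usage: three features, one layer of three trainable angles.
score = shallow_vqc([0.3, -0.7, 1.1], [[0.2, 0.5, -0.4]])
label = 1 if score > 0 else 0  # assumed decision rule
```

With one layer the circuit has only as many trainable angles as qubits, which gives a feel for why such models can have far fewer parameters than a deep convolutional network. Whether that translates into comparable accuracy depends on the quality of the features fed in, which is the role the article assigns to the Koopman stage.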

The research team validated this framework using 4,763 labeled channel sequences obtained from 433 discharges of the tokamak system, focusing on automated diagnostic screening to identify corrupted data. The task is to classify a time series $x = \{x_t\}_{t=0}^{T-1}$, where $T$ is the number of time steps, as either a valid discharge ($y = 1$) or an anomaly ($y = 0$).

The model achieved 97.0% accuracy in screening corrupted diagnostic data, comparable to the performance of state-of-the-art deep classical convolutional neural networks. Remarkably, this performance is achieved with orders of magnitude fewer trainable parameters, demonstrating a significant reduction in computational complexity.

This methodological innovation, which combines Koopman-based dimensionality reduction with parallel quantum operations, provides a scalable path for quantum-enhanced edge computing and addresses critical bottlenecks in preparing classical data for quantum co-processing. This work establishes a practical paradigm for leveraging quantum processing in constrained environments and can be applied to various fields such as turbulence modeling and financial time series analysis.

Screening tokamak diagnostic data with physics-based Koopman operator and quantum feature extraction improves anomaly detection and control

The physics-based Koopman hybrid framework achieved 97.0% accuracy in screening corrupted diagnostic data. The model matched the performance of state-of-the-art classical deep convolutional neural networks while significantly reducing the number of trainable parameters. The study validates the framework on 4,763 labeled channel sequences obtained from 433 discharges of a tokamak system and reports robust performance on complex real-world data.

The study established a theoretical foundation based on the structural isomorphism between the Koopman operator and quantum evolution to linearize nonlinear dynamics. This enabled the design of a practical pipeline suited to noisy intermediate-scale quantum (NISQ) hardware, in which the Koopman operator acts as a physics-aware data distiller.

This process compresses each waveform into compact quantum-compatible features suitable for subsequent processing. The resulting features are processed by a modular parallel quantum neural network, allowing efficient data processing within the constraints of current quantum hardware. The approach represents a practical paradigm for leveraging quantum processing in constrained environments and provides a scalable path for quantum-enhanced edge computing.
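One way to picture a modular parallel arrangement is as several small circuits that each see a slice of the feature vector, with their outputs combined classically. The sketch below assumes two-qubit modules, an RY encoding, and a weighted sigmoid readout. None of these details come from the article, and the names `module_expectation` and `parallel_qnn` are hypothetical.

```python
import numpy as np

# Two-qubit CNOT (control = qubit 0, target = qubit 1) in the |q0 q1> basis.
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)
# Pauli-Z observable on qubit 1.
Z_ON_Q1 = np.kron(np.eye(2), np.diag([1.0, -1.0]))

def ry(theta):
    """Single-qubit RY rotation matrix."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])

def module_expectation(x_pair, theta_pair):
    """<Z> on qubit 1 of one assumed two-qubit module."""
    state = np.zeros(4)
    state[0] = 1.0  # |00>
    encode = np.kron(ry(x_pair[0]), ry(x_pair[1]))        # data encoding
    train = np.kron(ry(theta_pair[0]), ry(theta_pair[1]))  # trainable layer
    state = CNOT @ train @ encode @ state
    return float(state @ Z_ON_Q1 @ state)

def parallel_qnn(features, thetas, weights, bias=0.0):
    """Split features into pairs, run one module per pair, combine classically."""
    pairs = np.asarray(features, dtype=float).reshape(-1, 2)
    outputs = np.array([module_expectation(p, t) for p, t in zip(pairs, thetas)])
    logit = outputs @ np.asarray(weights, dtype=float) + bias
    return 1.0 / (1.0 + np.exp(-logit))  # probability of a valid discharge

# Usage: 8 features -> 4 independent two-qubit modules.
rng = np.random.default_rng(0)
features = rng.uniform(-np.pi, np.pi, size=8)
thetas = rng.uniform(-np.pi, np.pi, size=(4, 2))
prob_valid = parallel_qnn(features, thetas, weights=np.ones(4))
```

Because the modules share no qubits, each one needs only a two-qubit device and they can run side by side or one after another on the same small processor. That independence is the practical appeal of a modular design under NISQ constraints, at the price of giving up entanglement between modules.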

The framework’s ability to reduce parameter complexity by orders of magnitude is its most notable result. Beyond diagnostic applications, the work proposes a generalizable way to bridge the representational gap between classical data and quantum processors. The dimensionality reduction and feature extraction performed by the Koopman operator prepare large classical datasets for efficient quantum co-processing, an approach that could be applied to fields such as turbulence modeling and financial time series analysis. The study provides empirical evidence supporting the feasibility of quantum-enhanced computing in data-intensive scientific workflows.

Koopman operator isomorphism improves accuracy and facilitates quantum classification for tokamak diagnostics

Researchers have developed a physics-based machine learning framework that distills complex classical data for processing on near-term quantum computers. This hybrid approach uses the Koopman operator to linearize nonlinear dynamics and compress high-dimensional waveforms into compact features, which are then analyzed by a quantum neural network built from shallow variational circuits.

Validation using data from tokamak discharges demonstrated an accuracy of 97.0% in identifying corrupted diagnostic data, comparable to the performance of advanced classical convolutional neural networks. The importance of this research lies in its ability to overcome a major bottleneck in quantum machine learning: the difficulty of interfacing chaotic classical data with resource-constrained quantum processors.

By establishing a theoretical isomorphism between the Koopman operator and quantum evolution, this framework enables a scalable path to quantum-enhanced edge computing. The model achieves performance comparable to state-of-the-art classical methods while using significantly fewer trainable parameters, demonstrating the potential for efficient quantum implementation.

The authors focus on a specific diagnostic application within tokamak systems and acknowledge that the current scope is limited. Future research may explore how well the framework carries over to other data-intensive scientific fields and whether the quantum-classical interface can be optimized further.