The use of generative artificial intelligence threatens to make an already difficult time for human rights around the world even worse, says Harold Hongju Koh, a professor at Yale Law School who has held senior positions in the U.S. State Department and is a leading contributor to the debate about how international law should apply to modern technology.

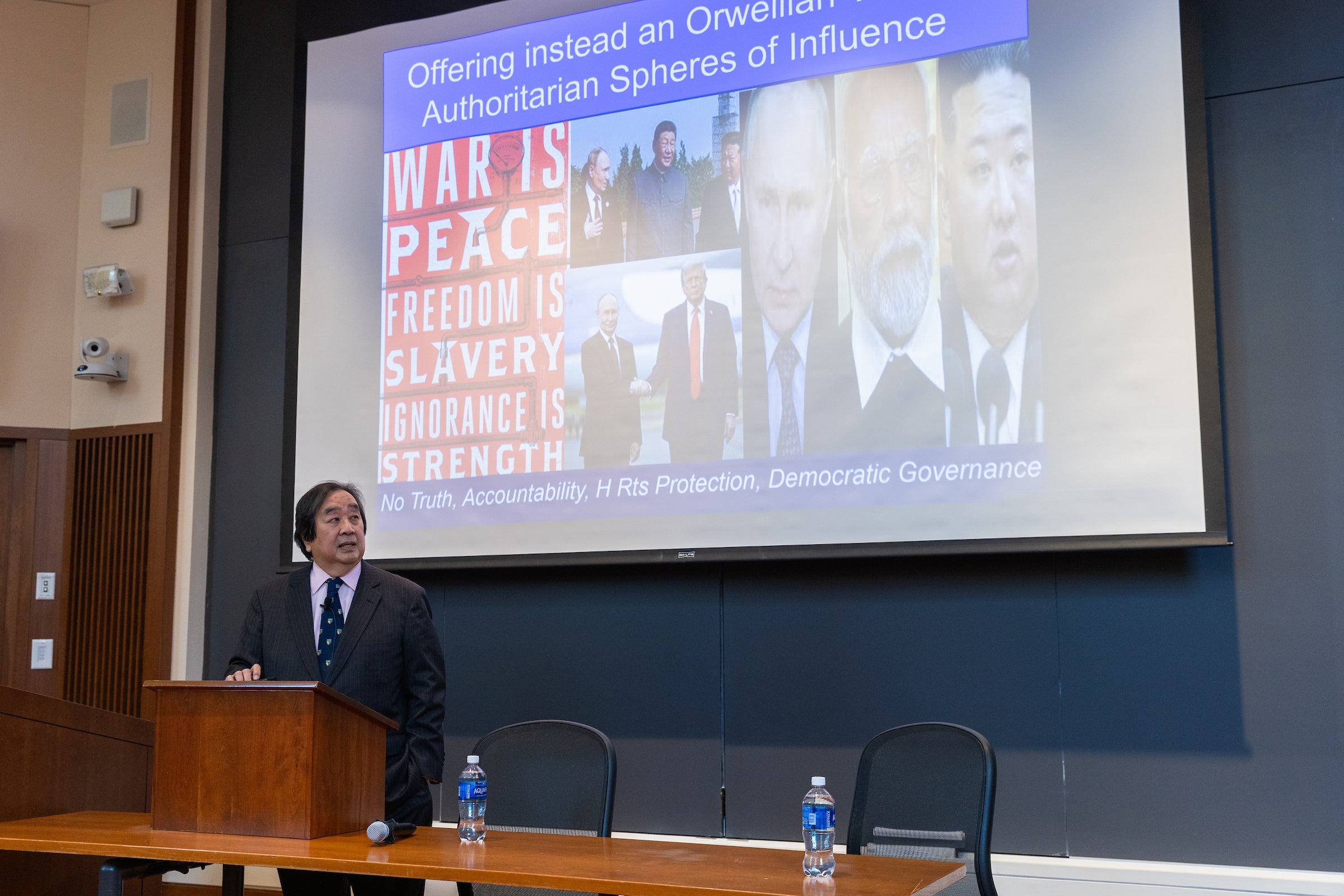

AI itself raises serious ethical concerns, including well-documented issues with bias and the technology’s heavy use of energy and water resources. And with authoritarianism on the rise around the world, including in the United States, AI could serve as a “giant megaphone” for misinformation campaigns (including so-called deepfakes), aid in surveillance, and be used to target perceived enemies, Koh said.

Koh, who spoke at a session titled “Human Rights Under Stress in the Age of AI” at Harvard Law School on November 13, was introduced by his former classmate Gerald L. Neuman ’80, the school’s J. Sinclair Armstrong Professor of International, Foreign, and Comparative Law and director of the Human Rights Program, which sponsored the event.

A leading international law expert and practitioner, Koh served as Legal Adviser at the State Department from 2009 to 2013 and as the U.S. Assistant Secretary of State for Democracy, Human Rights, and Labor from 1998 to 2001. He currently represents Ukraine in various international law cases.

Koh said international law can help protect people and hold states accountable for human rights violations caused or reinforced by artificial intelligence, but states must first agree on common principles and understandings. That is becoming increasingly difficult as some countries attack the very foundations of international human rights law, including truth, accountability, and democratic governance.

“[The concept of human rights] is being attacked by a whole group of authoritarians trying to surround and fill the gap created by America’s non-participation.”

Koh said the concept of human rights as “international rights held by all to all” was a “remarkable proposition” when it was formalized after World War II.

“Today, that vision is under attack by a whole group of authoritarians… They appear to be developing an alliance of dictators that surrounds and fills the gap created by America’s non-participation,” he said.

Koh is part of a group of international law experts who formed the Oxford Process on International Law Protections in Cyberspace during the COVID-19 pandemic. The group has issued multiple statements clarifying how international law applies to cyber operations in areas such as health care, elections, and infrastructure, each signed by more than 100 leaders in the relevant field. It will also address AI concerns.

“Different groups were developing these norms, but they all stalled,” he said during the Q&A portion of the event. “So we thought, ‘Why not do this in a grassroots way?’”

The United Nations has since cited the group’s work, he said.

“…When governments are faced with a firmly enunciated legal norm, they tend to accept it,” Koh said. “You can’t beat something with nothing. It helps to have a set of rules that a lot of people agree on.”

Koh also addressed the potential development and use of lethal autonomous weapons and their impact on warfare. Human-piloted drones are already widely used in conflict settings, including Russia’s war against Ukraine.

“What we’re heading into is a video game war,” he said. “War will be the cheapest option. If war is the cheapest option and diplomacy is harder and more expensive, we will be at war forever.”

Koh suggested that an agreement modeled on the Anti-Personnel Mine Ban Treaty could be possible, and that such an agreement would require a “last clear chance” principle, meaning that those who give final approval to an action could be held responsible for its consequences. He noted that dozens of states have signed on to the 2024 Political Declaration on Responsible Military Use of Artificial Intelligence and Autonomy issued by President Joe Biden’s administration. The document aims to “build a global consensus on responsible behavior and guide countries in the development, deployment, and use of military AI.”

Koh stands by the principles he set out in 2001, which he summed up as: tell the truth; be consistent in addressing past, present, and future human rights violations; and build stronger systems to prevent atrocities in the first place.

He ended his talk with a story about his experience in Kosovo, where, as Assistant Secretary of State for Democracy, Human Rights, and Labor, he was asked to swear in three new judges. They did not want to be sworn in on religious documents or on Serbian or Yugoslav ones. Instead, they chose to lay their hands on the Universal Declaration of Human Rights, the International Covenant on Civil and Political Rights, and the European Convention on Human Rights.

“One of them said to me, ‘In this troubled world, this is the only faith we share,’” Koh said. “And I think that’s still true. Even now, as human rights are under stress in the age of AI, this is the only faith we share. We must do our best to uphold it.”
