So far, scientists have relied on positive reinforcement to train LLMs, but the opposite seems to yield better results, Satyen K. Bordoloi discovers…

This is a discovery that will have old-fashioned parents high-fiving AI researchers. Researchers have found that when training large language models (LLMs), negative reinforcement, or penalizing wrong answers, is surprisingly more effective than positive reinforcement, or rewarding right ones.

Forget participation trophies for everyone: this study suggests that shouting “You're wrong!” can help them get better. No, not kids, LLMs. Why the parenting parallel? Because LLMs appear to thrive on tough love, even as humans resent the approach. The research paper, “The Surprising Effectiveness of Negative Reinforcement in LLM Reasoning”, turns traditional AI training on its head by adopting techniques straight out of old-world parenting.

Carrots and Binary Sticks: Traditional reinforcement learning methods rely on human assessments, which are subjective, or on complex learned reward models. However, reinforcement learning with verifiable rewards (RLVR) has recently emerged as a way to train models in areas where correctness can be checked unambiguously, such as mathematical reasoning and coding.
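To make “verifiable” concrete, here is a minimal Python sketch of a rule-based reward for a maths answer. The function name `verifiable_reward`, the string normalization, and the +1/-1 encoding are all assumptions made for illustration; real pipelines use far more careful answer extraction and comparison.

```python
def verifiable_reward(model_answer: str, reference_answer: str) -> int:
    """Return +1 if the final answer matches the reference, otherwise -1.

    Hypothetical helper: the +1/-1 encoding and the naive normalization
    below are illustrative assumptions, not the paper's checker.
    """
    def normalize(text: str) -> str:
        # Ignore case and all whitespace so "x = 4" and "X=4" compare equal.
        return "".join(text.split()).lower()

    return 1 if normalize(model_answer) == normalize(reference_answer) else -1


print(verifiable_reward(" 42 ", "42"))  # matching answer
print(verifiable_reward("41", "42"))    # non-matching answer
```

No human judge or learned reward model is involved: the check is a plain comparison that anyone can re-run.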

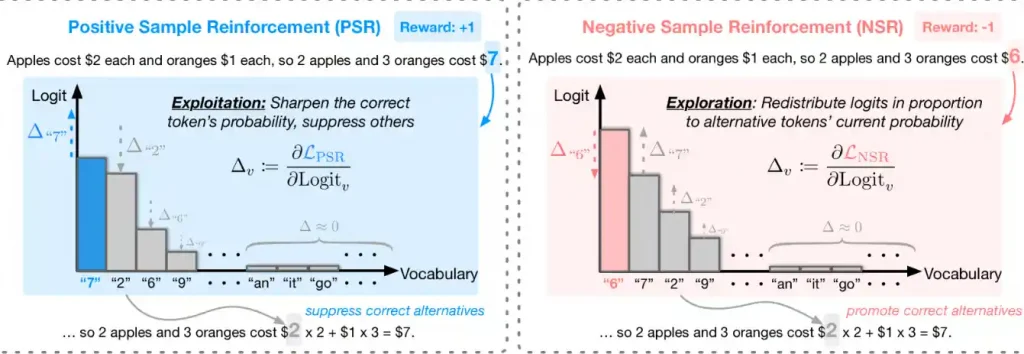

To take this further, the researchers in this paper tested models such as Qwen2.5 and Qwen3 by giving binary rewards: an answer is either right or wrong. This simplicity is intended to bypass the murkiness of judgments based on human preferences. The researchers' clever idea was to decompose RLVR into two modes: positive sample reinforcement (PSR), like a passionate coach cheering for the correct answer, and negative sample reinforcement (NSR), like a strict professor who circles errors in red ink.

NSR is not just about punishing. With surgical precision, it suppresses wrong tokens and redistributes their probability to other options based on the model's existing knowledge. It's like a teacher saying, “Please try again, but this time take a different approach.” She is not just scolding, but also suggesting how to change.

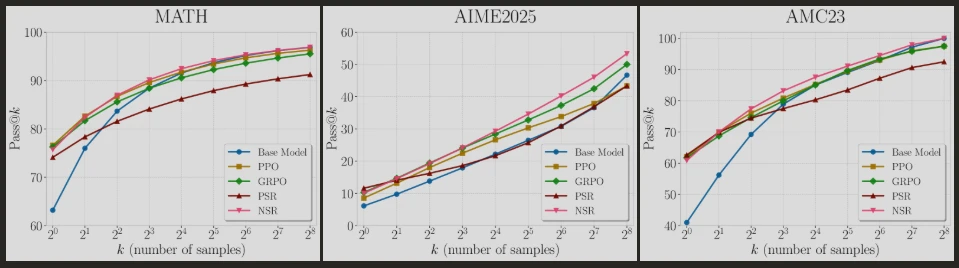

When wrong answers shape a genius: The researchers designed a rigorous experiment to pit PSR against NSR. They trained Qwen2.5-Math-7B and Qwen3-4B on 7,500 problems from the MATH dataset. For each problem, the model generated eight reasoning paths (“rollouts”). They then varied the training signal: some models were updated only on their failures (NSR), others only on their successes (PSR).
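The split described above can be sketched in a few lines of Python. The `Rollout` class and `split_rollouts` function are hypothetical names, and the pattern of correct and incorrect rollouts is made up purely for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Rollout:
    """One sampled reasoning path for a problem (hypothetical structure)."""
    problem_id: int
    response: str
    correct: bool


def split_rollouts(rollouts: List[Rollout]) -> Tuple[List[Rollout], List[Rollout]]:
    """Separate rollouts into PSR data (successes) and NSR data (failures)."""
    positives = [r for r in rollouts if r.correct]
    negatives = [r for r in rollouts if not r.correct]
    return positives, negatives


# Eight rollouts for a single problem; which ones are correct is invented here.
rollouts = [Rollout(0, f"attempt {i}", correct=(i % 4 == 0)) for i in range(8)]
psr_data, nsr_data = split_rollouts(rollouts)
print(len(psr_data), "rollouts would feed a PSR-only update")
print(len(nsr_data), "rollouts would feed an NSR-only update")
```

A PSR-only run would discard `nsr_data` entirely, and an NSR-only run would discard `psr_data`, which is why each variant trains on fewer samples than a method that uses every rollout.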

Overall performance was measured using Pass@k: the probability that at least one of “k” sampled answers hits the mark. Why this metric? In the real world, reasoning is not about nailing the answer on the first attempt but about exploring before concluding. Generating 256 solutions to crack a tricky problem mirrors how our minds brainstorm, except that for AI it happens at lightning speed. Importantly, both NSR and PSR trained on fewer samples than the industry-standard reinforcement learning algorithms, namely PPO (Proximal Policy Optimization) and GRPO (Group Relative Policy Optimization). So who won, PSR or NSR?
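For readers who want to compute the metric themselves, the sketch below uses a widely used unbiased estimator of Pass@k: draw n samples, count the c correct ones, and work out the chance that a random subset of k contains at least one correct answer. Whether the paper evaluates with exactly this estimator is an assumption here, and the function name `pass_at_k` is our own.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate Pass@k from n samples of which c are correct.

    Computes 1 - C(n - c, k) / C(n, k): one minus the probability that
    a random subset of k samples contains no correct answer.
    """
    if not 0 <= c <= n:
        raise ValueError("c must lie between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must lie between 1 and n")
    if n - c < k:
        # Too few wrong answers to fill k slots, so a hit is guaranteed.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# With 2 correct answers out of 8, more attempts mean a better chance of a hit.
for k in (1, 2, 4, 8):
    print(k, round(pass_at_k(8, 2, k), 3))
```

A model that keeps producing the same answer gains little from a larger k, while a model with varied outputs gains a lot, which is why the metric doubles as a measure of diversity.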

Why “wrong” works incredibly well: The results surprised the researchers because they were counterintuitive. NSR, that is, training on failures alone, surpassed the other training methods. The model “punished” for wrong answers also improved its Pass@k score at k=256, often matching or exceeding sophisticated algorithms such as PPO and GRPO. Surprisingly, NSR boosted Pass@1 without ever seeing a single correct example, akin to acing a test after studying only your mistakes and never the right answers. Perhaps the best explanation is to paraphrase Sherlock Holmes: once you eliminate all the wrong answers, whatever remains must be the correct one.

This was not the only finding of the study: PSR (reward-only training) revealed a fatal flaw. It improved immediate accuracy (Pass@1), but its performance faltered at higher values of “k”. Why? The PSR model turns into a one-trick pony that compulsively recycles the paths it was rewarded for. As its confidence in those paths grew, the diversity of its output collapsed. This is similar to a student who memorizes one formula and applies it to every problem. In the “non-thinking” mode of Qwen3-4B, PSR failed to unlock the model's latent skills, while NSR acted like a drill sergeant extracting peak performance.

The researchers uncovered the secret through gradient analysis. When it penalizes a wrong token, NSR boosts the alternatives in proportion to their existing probabilities, that is, in line with the model's priors. Think of a chef who has made a mistake: NSR helps cook up alternative approaches, such as reducing the salt, instead of abandoning the entire dish.
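A toy calculation shows the redistribution at work. The sketch below takes one gradient step that lowers the log-probability of a sampled wrong token under a softmax over three candidate tokens. It is a simplified illustration, not the paper's full derivation: the logits, the learning rate and the function names `softmax` and `nsr_step` are assumptions.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Convert logits to probabilities (shifted by the max for stability)."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def nsr_step(logits: List[float], wrong_idx: int, lr: float = 1.0) -> List[float]:
    """One gradient step that decreases log p(wrong token).

    For a softmax, d(-log p_wrong)/dz is -(1 - p_wrong) at the wrong token
    and +p_v at every other token v, so each alternative's logit rises in
    proportion to the probability it already has.
    """
    probs = softmax(logits)
    updated = []
    for i, (z, p) in enumerate(zip(logits, probs)):
        if i == wrong_idx:
            updated.append(z - lr * (1.0 - p))  # push the wrong token down
        else:
            updated.append(z + lr * p)          # lift alternatives by their prior
    return updated


logits = [2.0, 1.0, 0.0]  # token 0 is the sampled (wrong) token
before = softmax(logits)
after = softmax(nsr_step(logits, wrong_idx=0))
for i, (b, a) in enumerate(zip(before, after)):
    print(f"token {i}: {b:.3f} -> {a:.3f}")
```

The wrong token loses probability mass, and the already-plausible token 1 gains more of it than the unlikely token 2. The model is nudged towards its own next-best guesses rather than towards something entirely new.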

Additionally, NSR self-regulates: it updates the model only while errors persist. Once the model finds the correct answer, the updates stop, so it neither keeps chasing new approaches nor gets locked into a few successful ones and overfits. This is probably what LLMs need. As models approach their scaling limits, techniques that enhance reasoning rather than chase size become essential.

Goldilocks algorithm: So, should we abandon PSR completely and go with NSR alone? The answer lies in the way we raise our children, with apologies for bringing up the parenting analogy again. Parents who use too much negativity (NSR) leave their children traumatized for life. The opposite is counterproductive too: parents who never scold their children at all (the modern approach) and are always positive (PSR) raise fragile snowflakes, unhappy adults who struggle through life. As the Buddha said, the right way is the middle path. That is exactly what the researchers found with LLMs.

PSR improves Pass@1, but because its outputs become less varied, Pass@k deteriorates as “k” increases. The opposite applies to NSR: it does better at large values of “k” but can underperform at small ones. Therefore, the researchers propose a middle path: “a simple weighted combination of PSR and NSR” to “enable the model to learn from both the correct and incorrect samples.” They call it Weighted-REINFORCE (W-REINFORCE). It is a balanced objective that dials down the enthusiasm of PSR while amplifying NSR. The results are promising: such models outperform PPO and GRPO by maintaining both diversity and accuracy.
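One way to picture such a weighted combination is as a REINFORCE-style loss in which correct samples carry a small positive weight and incorrect samples a full negative one. The sketch below is an illustration under stated assumptions: the function names, the sample format and the default weight `lam=0.1` are chosen for the example and should not be read as the paper's exact objective or settings.

```python
from typing import List, Tuple


def sample_weight(correct: bool, lam: float) -> float:
    """Down-weighted reward for correct samples, full penalty for wrong ones."""
    return lam if correct else -1.0


def weighted_reinforce_loss(samples: List[Tuple[float, bool]], lam: float = 0.1) -> float:
    """Average loss over (log_prob, correct) pairs.

    Minimizing this loss raises the log-probability of correct responses
    (scaled by lam) and lowers the log-probability of incorrect ones.
    lam = 0.1 is an illustrative default, labeled here as an assumption.
    """
    if not samples:
        raise ValueError("need at least one sample")
    total = sum(sample_weight(correct, lam) * log_prob for log_prob, correct in samples)
    return -total / len(samples)


# Two rollouts: one correct (log-prob -1.0) and one incorrect (log-prob -2.0).
batch = [(-1.0, True), (-2.0, False)]
print(weighted_reinforce_loss(batch, lam=0.5))
```

Setting `lam` to 0 leaves only the penalty on wrong samples, which is the NSR-only regime. Raising it brings the PSR reward back in, so a single knob moves training between the strict professor and the cheering coach.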

So, what does NSR teach us about effective machine learning? A lot. First and foremost, it rejects the more-is-better idea about LLMs: training a model better does not necessarily require more data, which is what the likes of Sam Altman preach while seeking over a trillion dollars to create AGI. There is no need to inject new behavior into the model, especially if the base model is strong. All you need to do is refine its existing knowledge and adjust the training parameters to improve its performance. W-REINFORCE offers a simple, open-source alternative to complex reinforcement learning pipelines.

This is not without challenges. First, over extended training, NSR's performance degrades without PSR to keep things in check. Second, it works best when the final result is objective and verifiable, as in mathematics or code. Additionally, a lot of work remains to understand the impact of NSR on weaker models and its practicality for LLM training.

Despite these challenges, this is a new idea that opens a door for humanity. We have to walk through it to understand better ways of machine learning so that we can create better AI. And who knows what else? The fate of AI, and of human progress, depends on it.