Ensemble learning

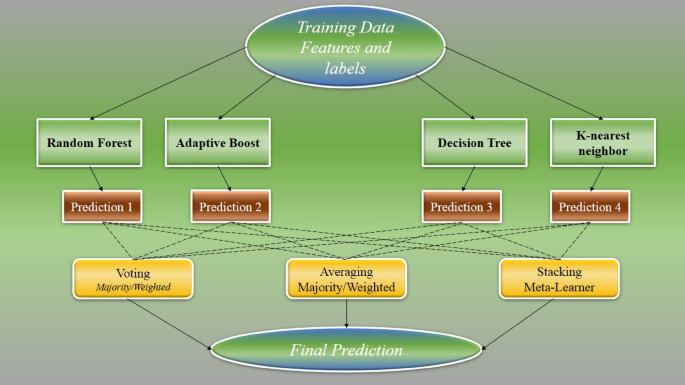

The proposed method employs an ensemble learning framework that combines the predictions of multiple, diverse machine learning algorithms to improve predictive performance and model robustness. Ensemble learning has attracted considerable attention because it can exploit the complementary strengths and inductive biases of individual learners. Instead of relying on a single algorithm that may be prone to overfitting or a strong inductive bias, ensemble approaches aggregate the outputs of multiple models. This reduces the impact of errors specific to any one model and increases reliability.

In this study, the ensemble is constructed by integrating several well-established algorithms, namely K-Nearest Neighbors (KNN), Decision Trees, Random Forests, and Adaptive Boosting (AdaBoost). Each constituent model contributes unique advantages to the ensemble. KNN offers a non-parametric approach that adapts to the underlying local structure of the data, making it effective for complex, nonlinear relationships. Decision Trees are known for their simplicity and interpretability, forming hierarchical structures that facilitate the extraction of decision rules. Random Forests, as an extension of Decision Trees, mitigate overfitting and increase predictive stability by aggregating the outputs from an ensemble of trees trained on different bootstrapped samples and randomly selected feature subsets.

Finally, AdaBoost trains weak learners sequentially and places greater emphasis at each round on instances that were previously misclassified. This adaptive re-weighting focuses learning on harder cases. It primarily reduces bias and, in practice, can also reduce variance. Because these algorithms rest on different principles, their errors tend to differ. When their outputs are combined, the ensemble can partially offset the weaknesses of each individual model, leading to more accurate and robust predictions.

The performance gains of ensemble models stem from their ability to reduce the variance and bias that limit single learners. Bagging methods, exemplified by Random Forests, primarily lower variance by averaging the predictions of multiple models trained on resampled data. This stabilizes the outputs and improves generalization. Boosting methods, as implemented in AdaBoost, primarily reduce bias by directing successive weak learners toward difficult-to-classify data points. The combined effect is a model that is less sensitive to noise and random fluctuations in the training data and better equipped to handle new, unseen samples.

Overall, the ensemble strategy incorporates KNN, Decision Trees, Random Forests, and AdaBoost and leverages the complementary behavior of these algorithms. By integrating their outputs, the ensemble can achieve higher predictive accuracy than its individual constituent models. Such gains depend on the base learners being both reasonably accurate and diverse in their errors. A schematic illustration of the integrated ensemble architecture is shown in Fig. 2, which depicts how the predictions of the diverse base learners are combined into a unified output. This approach highlights the value of algorithmic diversity for building models that are both reliable and generalizable across complex datasets36,37,38.

Ensemble learning flowchart utilized in this study.
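The following minimal sketch illustrates the combination step. It merges hypothetical per-model outputs by hard majority voting for classification and by simple averaging for regression. The function names and prediction values are illustrative assumptions, not outputs or code from this study. The combination rule actually used in the framework of Fig. 2 may differ, for example weighted voting or stacking.

```python
from collections import Counter
from statistics import mean


def majority_vote(predictions_per_model):
    """Hard voting: for each sample, return the most frequent class label.

    predictions_per_model: list of equal-length lists, one per base learner.
    Ties go to the label encountered first (Counter.most_common ordering).
    """
    n_samples = len(predictions_per_model[0])
    combined = []
    for i in range(n_samples):
        votes = Counter(model_preds[i] for model_preds in predictions_per_model)
        combined.append(votes.most_common(1)[0][0])
    return combined


def average_prediction(predictions_per_model):
    """Regression: average the base learners' outputs sample by sample."""
    return [mean(values) for values in zip(*predictions_per_model)]


# Hypothetical outputs of four base learners on three samples.
class_preds = [
    [1, 0, 1],  # KNN
    [1, 1, 0],  # Decision Tree
    [0, 0, 1],  # Random Forest
    [1, 0, 1],  # AdaBoost
]
print(majority_vote(class_preds))  # [1, 0, 1]

reg_preds = [[2.0, 3.0], [2.5, 2.0], [1.5, 4.0], [2.0, 3.0]]
print(average_prediction(reg_preds))  # [2.0, 3.0]
```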

AdaBoost

The method employed in this study is Adaptive Boosting (AdaBoost), a robust and widely utilized ensemble learning algorithm that demonstrates efficacy in both classification and regression tasks. AdaBoost constructs a strong predictive model by sequentially combining multiple weak learners, each of which is only slightly better than random guessing. The central mechanism of AdaBoost is its adaptive reweighting process, through which it dynamically focuses attention on the more challenging training samples, thereby improving ensemble accuracy in successive iterations.

At the outset, every data point in the training set is assigned an equal weight. Specifically, for a training set containing N samples, the initial weights ωi(1) for each sample i are defined as

$$\omega _{i}^{{(1)}}=\frac{1}{N}$$

(1)

Within each iteration t, AdaBoost trains a weak classifier, denoted as ht(x), to minimize the total weighted classification error over all training examples. The weighted error rate, ϵt, for the weak learner at iteration t, is computed by

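$$\varepsilon_t=\sum_{i=1}^{N}\omega_i^{(t)}\,\mathbb{I}\left(h_t(x_i)\neq y_i\right)$$

(2)

Here, ωi(t) is the weight of sample i at iteration t, and I(·) is the indicator function, which equals 1 if its argument is true and 0 otherwise. The weight αt assigned to the weak classifier in the final ensemble is then computed from its error rate as

$$\alpha_t=\frac{1}{2}\ln\left(\frac{1-\varepsilon_t}{\varepsilon_t}\right)$$

(3)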

This equation ensures that weak classifiers with lower error rates receive higher weights, thereby exerting greater influence on the aggregated prediction. Once αt is obtained, the algorithm updates the weights associated with each data point. This updating process increases the weights of those samples that were misclassified in the current round, thus compelling the next weak learner to focus more intensely on these harder examples. The update rule for the weights is given by

$$\omega_i^{(t+1)}=\omega_i^{(t)}\exp\left(-\alpha_t\,y_i\,h_t(x_i)\right)$$

(4)

Here, the true label yi and the weak learner's prediction ht(xi) are encoded as +1 or −1. If an instance is misclassified, this rule increases its weight for the next iteration. If it is correctly classified, its weight decreases. After updating, the weights are normalized to sum to one across the training set, so they remain a valid probability distribution.

The boosting process continues for a predetermined number of iterations T or until satisfactory accuracy is achieved. The final ensemble prediction for a new input x is determined through a weighted majority vote (for classification), which aggregates the predictions of all weak learners as follows:

$$H(x)=\operatorname{sign}\left(\sum_{t=1}^{T}\alpha_t h_t(x)\right)$$

(5)

In this equation, H(x) denotes the predicted class label, and the sign function maps the weighted sum of votes to a binary outcome. Through adaptive weighting and aggregation, AdaBoost combines many weak learners into a final model that is considerably more accurate than any single weak classifier. In practice, it is often resistant to overfitting. However, it can be sensitive to label noise and outliers, because persistently misclassified points receive ever-larger weights39,40,41.
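The AdaBoost loop described above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions:

- Labels are encoded as +1/−1.
- Each weak learner is a one-feature decision stump. This is a hypothetical choice of weak learner.
- The classifier weight uses the standard discrete AdaBoost form αt = ½ ln((1 − εt)/εt).

The toy data are invented for illustration.

```python
import math


def train_stump(xs, ys, weights):
    """Return (weighted error, threshold, sign) of the best stump h(x) = sign if x > thr else -sign."""
    best = None
    values = sorted(set(xs))
    # Candidate thresholds: one below the minimum (a constant stump) plus midpoints.
    thresholds = [values[0] - 1.0] + [(a + b) / 2.0 for a, b in zip(values, values[1:])]
    for thr in thresholds:
        for sign in (1, -1):
            err = sum(w for x, y, w in zip(xs, ys, weights)
                      if (sign if x > thr else -sign) != y)
            if best is None or err < best[0]:
                best = (err, thr, sign)
    return best


def stump_predict(x, thr, sign):
    return sign if x > thr else -sign


def adaboost(xs, ys, n_rounds=10):
    n = len(xs)
    weights = [1.0 / n] * n                          # Eq. (1): uniform initial weights
    ensemble = []                                    # list of (alpha, threshold, sign)
    for _ in range(n_rounds):
        eps, thr, sign = train_stump(xs, ys, weights)    # Eq. (2): weighted error
        eps = min(max(eps, 1e-10), 1 - 1e-10)            # guard against log(0)
        alpha = 0.5 * math.log((1 - eps) / eps)          # Eq. (3)
        ensemble.append((alpha, thr, sign))
        # Eq. (4): y*h = -1 for misclassified samples, so their weights grow.
        weights = [w * math.exp(-alpha * y * stump_predict(x, thr, sign))
                   for x, y, w in zip(xs, ys, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]           # renormalize to sum to 1
    return ensemble


def adaboost_predict(ensemble, x):
    score = sum(alpha * stump_predict(x, thr, sign) for alpha, thr, sign in ensemble)
    return 1 if score >= 0 else -1                       # Eq. (5): sign of the weighted vote


xs = [1, 2, 3, 4, 5, 6]
ys = [1, 1, -1, -1, 1, 1]   # no single threshold separates these labels
model = adaboost(xs, ys, n_rounds=3)
print([adaboost_predict(model, x) for x in xs])  # [1, 1, -1, -1, 1, 1]
```

The toy labels follow an interval pattern that no single stump can reproduce. Three weighted stumps (a constant vote plus two thresholds) nevertheless combine into a perfect fit on these six points.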

Decision tree

A decision tree is a supervised learning algorithm widely used for both classification and regression because of its intuitive interpretability and flexible handling of various data types. The algorithm recursively partitions the data into subsets by evaluating specific features and split points, thereby constructing a tree-like structure. Partitioning continues until terminal nodes, or leaves, are reached. Each internal node represents a test on a feature against a condition, such as a threshold. Each branch represents an outcome of that test, and each leaf holds a predicted class or value.

At each stage of partitioning, the algorithm aims to create child nodes that are as homogeneous as possible. For classification, this means that the samples in each child node are dominated by a single class. In regression tasks, the objective is to minimize the prediction error, typically measured by the mean squared error.

For classification tasks, the splitting criterion at each node typically involves either maximizing Information Gain (IG) or minimizing the Gini Impurity. Information Gain is based on the concept of entropy, H(S), which measures the uncertainty or impurity in a dataset S. The entropy at a node is calculated as:

$$H(S)=-\sum_{k=1}^{K}p_k\log_2 p_k$$

(6)

Here, pk is the proportion of samples at the node that belong to class k, and K is the number of classes. Terms with pk = 0 are taken as zero, since p log2 p → 0 as p → 0. A perfectly homogeneous node, in which all samples belong to a single class, has an entropy of zero.

Information Gain quantifies the reduction in entropy achieved by partitioning the data according to a specific attribute. For a parent node that splits into a set of child nodes, the Information Gain (IG) of the split is computed as:

$$IG=H(\text{parent})-\sum_{j=1}^{M}\omega_j\,H(\text{child}_j)$$

(7)

Here, H(parent) denotes the parent node's entropy, and H(childj) is the entropy of the j-th child node. The weight ωj is the proportion of the parent's samples that fall into the j-th child node, and M is the total number of child nodes. The optimal split is the one that maximizes Information Gain, that is, the one that reduces entropy the most.
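The following small sketch implements Eqs. (6) and (7) for categorical class labels. The function names and toy labels are illustrative and are not taken from the study.

```python
import math
from collections import Counter


def entropy(labels):
    """Eq. (6), written as sum_k p_k * log2(1 / p_k) over the classes present."""
    n = len(labels)
    return sum((c / n) * math.log2(n / c) for c in Counter(labels).values())


def information_gain(parent, children):
    """Eq. (7): parent entropy minus the size-weighted entropy of the child nodes."""
    n = len(parent)
    weighted = sum(len(child) / n * entropy(child) for child in children)
    return entropy(parent) - weighted


parent = ["yes"] * 5 + ["no"] * 5
print(entropy(parent))                          # 1.0 (evenly mixed, two classes)
perfect_split = [["yes"] * 5, ["no"] * 5]
print(information_gain(parent, perfect_split))  # 1.0 (both children are pure)
```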

Alternatively, the Gini Impurity is another metric frequently employed to evaluate the quality of a split. For a node, the Gini Impurity is calculated as:

$$Gini=1-\sum_{k=1}^{K}p_k^{2}$$

(8)

Here, again, pk is the proportion of samples belonging to class k. A node is pure (impurity of zero) if all of its samples belong to a single class. During tree construction, the algorithm selects the split that minimizes the weighted Gini Impurity of the resulting child nodes, striving to produce the purest possible children.
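The following sketch shows how Gini Impurity drives split selection. It scans candidate thresholds on a single numeric feature and keeps the one with the lowest size-weighted child impurity. Restricting the search to one feature and to binary threshold splits, as in CART-style trees, is a simplifying assumption. The helper names and data are hypothetical.

```python
from collections import Counter


def gini(labels):
    """Eq. (8): Gini = 1 - sum_k p_k^2."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_threshold_split(xs, ys):
    """Scan midpoints of one numeric feature; return (threshold, weighted child Gini)."""
    pairs = sorted(zip(xs, ys))
    n = len(pairs)
    best = (None, float("inf"))
    for i in range(1, n):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # identical feature values cannot be separated by a threshold
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        score = len(left) / n * gini(left) + len(right) / n * gini(right)
        if score < best[1]:
            best = ((pairs[i - 1][0] + pairs[i][0]) / 2, score)
    return best


xs = [2.0, 3.0, 10.0, 11.0, 12.0]
ys = ["A", "A", "B", "B", "B"]
print(best_threshold_split(xs, ys))  # (6.5, 0.0)
```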

In regression settings, the decision tree algorithm does not use entropy or Gini Impurity. Instead, it selects splits that minimize an error measure such as the Mean Squared Error (MSE) of the child nodes. The MSE of a node containing N samples with observed values yi and mean ȳ is calculated as:

$$MSE=\frac{1}{N}\sum_{i=1}^{N}\left(y_i-\bar{y}\right)^{2}$$

(9)

Here, yi is the observed value of the i-th sample, and ȳ is the mean of all sample values in the node, which also serves as the node's prediction.
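For regression, the same split search uses Eq. (9) in place of an impurity measure. The hypothetical helpers below compute a node's MSE and the size-weighted MSE of a candidate two-way split.

```python
def node_mse(values):
    """Eq. (9): mean squared deviation of the node's values from their mean y_bar."""
    y_bar = sum(values) / len(values)  # the node's prediction
    return sum((y - y_bar) ** 2 for y in values) / len(values)


def weighted_child_mse(left, right):
    """Size-weighted MSE of two child nodes; the split minimizing this is chosen."""
    n = len(left) + len(right)
    return len(left) / n * node_mse(left) + len(right) / n * node_mse(right)


parent = [1.0, 1.0, 5.0, 5.0]
print(node_mse(parent))                            # 4.0
print(weighted_child_mse([1.0, 1.0], [5.0, 5.0]))  # 0.0 (each child is constant)
```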

Through recursive partitioning with these splitting criteria, the decision tree algorithm organizes the data into a series of nested, interpretable decisions. Each path from the root to a leaf node then represents a distinct sequence of rules for predicting a target value or class42,43,44,45.

Random forest

Random Forest is a supervised machine learning technique belonging to the ensemble learning family. It constructs a collection of decision trees, each trained on a different random (bootstrap) sample of the data, and aggregates their results into a final prediction. For classification tasks, the output is determined by majority voting. For regression problems, the prediction is the average of the individual tree outputs.

The fundamental strength of Random Forest arises from two principal techniques: bootstrap aggregating (bagging) and the random subspace method. Bagging generates multiple, diverse training sets by sampling from the original dataset with replacement. For a dataset with N samples, each bootstrap sample is also of size N. Because it is drawn with replacement, it may contain repeated observations and omit others. Given the original labeled dataset D = {(X1, Y1), (X2, Y2), …, (XN, YN)}, each tree is trained on a bootstrap sample Db drawn with replacement from D46,47.

In addition to bagging, the random subspace method introduces further diversity by selecting a random subset of features to consider at each candidate split in the tree construction process. If there are M total features, only m features (m < M) are randomly chosen at each split, over which the best split is determined. This reduces the correlation between individual trees and thus enhances the ensemble’s robustness.
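The two randomization steps can be sketched with Python's standard `random` module. The dataset, seed, and helper names are hypothetical. A common heuristic for classification is m ≈ √M, but the value used in this study is not specified here.

```python
import random


def bootstrap_sample(dataset, rng):
    """Bagging step: draw N items with replacement from a dataset of size N."""
    n = len(dataset)
    return [dataset[rng.randrange(n)] for _ in range(n)]


def random_feature_subset(n_features, m, rng):
    """Random subspace step: pick m of the M feature indices without replacement."""
    return sorted(rng.sample(range(n_features), m))


rng = random.Random(0)                   # fixed seed for reproducibility
D = [(x, 2 * x) for x in range(10)]      # hypothetical (X_i, Y_i) pairs
D_b = bootstrap_sample(D, rng)
print(len(D_b), len(set(D_b)))           # size N, typically with fewer distinct items
print(random_feature_subset(9, 3, rng))  # indices of 3 of the 9 features for one split
```

On average, a bootstrap sample of size N contains about 1 − 1/e ≈ 63.2% of the distinct original observations. The remaining out-of-bag samples can be used for validation.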

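For regression tasks, the final prediction for an input x is the average of the outputs ht(x) of the T individual trees:

$$\hat{y}=\frac{1}{T}\sum_{t=1}^{T}h_t(x)$$

(10)

For classification tasks, the prediction is instead the class that receives the most votes, ŷ = mode{h1(x), h2(x), …, hT(x)}.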

The effectiveness of each individual decision tree in the forest depends on its internal split criteria, often based on metrics such as Gini Impurity or Entropy, which are respectively defined as

$$Gini=1-\sum_{i=1}^{K}p_i^{2}$$

(11)

$$H(S)=-\sum_{i=1}^{K}p_i\log_2 p_i$$

(12)

Here, pi is the proportion of samples belonging to class i in node S, and K is the number of classes. These metrics measure node purity and guide the choice of splits within each tree. Random Forest, however, does not optimize a single global objective function for its final output. Instead, its predictive power derives from aggregating the predictions of many decorrelated trees.

A key advantage of the Random Forest algorithm is that it substantially reduces variance compared with a single decision tree, thereby lowering the risk of overfitting and improving generalization. As a result, it typically achieves higher accuracy and greater robustness to noise and outliers in the training data.

While Random Forests are fundamentally defined by the aggregation process, additional post-hoc analyses, such as the calculation of feature importance, are often performed. These analyses leverage measures such as the Mean Decrease in Impurity (MDI) or the Mean Decrease in Accuracy (MDA), which provide insights into how much each feature contributes to the predictive power of the model. However, these feature importance metrics are ancillary to the core predictive mechanism of the Random Forest algorithm48,49,50,51,52,53.

Support vector regression

Support vector regression (SVR) extends the support vector machine (SVM) framework to regression tasks. Conventional least-squares regression minimizes the squared deviation between predicted and actual values over all samples. SVR instead uses an ε-insensitive loss. It seeks a function that is as flat as possible while fitting most targets within a specified tolerance, known as the epsilon (ε) margin, and deviations smaller than ε incur no penalty. Only observations lying on or outside this margin (the support vectors) determine the model. Because deviations beyond ε are penalized linearly rather than quadratically, SVR is less sensitive to outliers than least squares.

The regression function in SVR is defined as

$$f(x)=\left\langle {\omega ,\phi (x)} \right\rangle +b$$

(13)

where f(x) is the predicted value for the input vector x, ω is the weight vector, ϕ(x) represents a mapping of the input data into a higher-dimensional feature space, and b is the bias term. The transformation ϕ(x) allows the algorithm to capture nonlinear patterns in the data by implicitly projecting inputs into a space where linear regression becomes feasible.

To optimize the SVR model, the algorithm seeks to minimize the norm of the weight vector, promoting model flatness, while allowing violations of the epsilon margin through the introduction of slack variables. The corresponding convex optimization problem can be expressed as

$$\min_{\omega,b,\xi_i,\xi_i^{*}}\ \frac{1}{2}\|\omega\|^{2}+C\sum_{i=1}^{N}\left(\xi_i+\xi_i^{*}\right)$$

(14)

subject to the constraints

$$\begin{aligned} y_i-\left\langle\omega,\phi(x_i)\right\rangle-b &\leq \varepsilon+\xi_i \\ \left\langle\omega,\phi(x_i)\right\rangle+b-y_i &\leq \varepsilon+\xi_i^{*} \\ \xi_i,\ \xi_i^{*} &\geq 0 \end{aligned}$$

(15)

where yi is the true target value corresponding to input xi, N denotes the total number of training samples, ε is the margin of tolerance, and ξi,ξi* are slack variables introduced to allow some observations to lie outside the epsilon-tube. The parameter C > 0 serves as a regularization constant, balancing the trade-off between the model’s flatness and the degree to which deviations beyond ε are permitted.
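To make Eqs. (14) and (15) concrete, the sketch below evaluates the primal objective for a linear SVR at a given (ω, b), assuming ϕ(x) = x. For fixed ω and b, the smallest slacks satisfying Eq. (15) sum to the ε-insensitive loss max(0, |yi − f(xi)| − ε). The objective therefore reduces to a flatness term plus C times the total ε-insensitive loss. The sketch does not solve the optimization problem itself. The data and parameter values are hypothetical.

```python
def eps_insensitive_loss(y_true, y_pred, epsilon):
    """Zero inside the epsilon-tube, linear outside: max(0, |y - f(x)| - epsilon)."""
    return max(0.0, abs(y_true - y_pred) - epsilon)


def linear_svr_objective(w, b, X, y, C, epsilon):
    """Value of Eq. (14) at (w, b) with phi(x) = x and the smallest feasible slacks.

    For fixed w and b, min(xi_i + xi_i^*) subject to Eq. (15) equals the
    epsilon-insensitive loss of sample i.
    """
    flatness = 0.5 * sum(wj * wj for wj in w)
    total_slack = 0.0
    for x_row, y_i in zip(X, y):
        f = sum(wj * xj for wj, xj in zip(w, x_row)) + b
        total_slack += eps_insensitive_loss(y_i, f, epsilon)
    return flatness + C * total_slack


X = [[0.0], [1.0], [2.0]]
y = [0.05, 1.0, 2.5]
print(eps_insensitive_loss(1.0, 1.05, 0.1))  # 0.0 (inside the tube)
print(linear_svr_objective([1.0], 0.0, X, y, C=1.0, epsilon=0.1))  # about 0.9
```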

A crucial feature of SVR is the use of kernel functions. These compute inner products in the feature space induced by ϕ(x) without explicitly computing the high-dimensional transformation. Commonly used kernels include the linear, polynomial, and radial basis function (RBF) kernels. The kernel function is defined as

$$K({x_i},{x_j})=\left\langle {\phi ({x_i}),\phi ({x_j})} \right\rangle$$

(16)

For example, the RBF kernel is given by

$$K(x_i,x_j)=\exp\left(-\gamma\|x_i-x_j\|^{2}\right)$$

(17)

where γ is a hyperparameter controlling the width of the Gaussian function, and ∥xi−xj∥ is the Euclidean distance between samples xi and xj.
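The following sketch implements the RBF kernel of Eq. (17) and the resulting Gram matrix, which kernelized SVR solvers use in place of explicit ϕ(x) vectors. The sample points and γ value are arbitrary illustrations.

```python
import math


def rbf_kernel(x_i, x_j, gamma):
    """Eq. (17): K(x_i, x_j) = exp(-gamma * ||x_i - x_j||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x_i, x_j))
    return math.exp(-gamma * sq_dist)


def gram_matrix(X, gamma):
    """All pairwise kernel values K(x_i, x_j) for the rows of X."""
    return [[rbf_kernel(a, b, gamma) for b in X] for a in X]


X = [[0.0, 0.0], [1.0, 0.0], [3.0, 4.0]]
for row in gram_matrix(X, gamma=0.5):
    print([round(v, 4) for v in row])
# Diagonal entries are 1.0; off-diagonal values shrink toward 0 as points move apart.
```

A larger γ makes the kernel decay faster with distance, so each training point influences a smaller neighborhood.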

Overall, SVR constructs a regression function that is as flat as possible in the high-dimensional feature space. This function is determined by the training samples (support vectors) that lie on or outside the epsilon margin. By leveraging the kernel trick, SVR can model complex nonlinear relationships. Its ε-insensitive loss and regularization confer good generalization performance and robustness to anomalous observations54,55,56.

Convolutional neural networks

Convolutional neural networks (CNNs) are a class of deep neural networks designed to process and extract features from data with a grid-like topology, such as images, audio signals, and video frames. Their defining operation is convolution, which slides a set of learnable filters across the input to produce feature maps. Given an input matrix X and a convolutional kernel (filter) K, the two-dimensional convolution for a single element S(i, j) of the resulting feature map can be expressed as

$$S(i,j)=(X*K)(i,j)=\sum_{m}\sum_{n}X(i-m,\,j-n)\,K(m,n)$$

(18)

Here, X(i, j) is the pixel value at spatial coordinates (i, j) of the input, K(m, n) is the kernel coefficient at position (m, n), and ∗ denotes the convolution operation. Sliding the filters over the input allows the network to learn localized spatial features, such as edges and textures.
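The following loop-based sketch implements Eq. (18). It is restricted, as an assumption, to "valid" output positions where the kernel lies entirely inside the input; real layers often pad the input. For comparison, it also computes cross-correlation, which is what most deep learning libraries actually implement in their convolution layers. The two differ only by a flip of the kernel, which is immaterial when the kernel weights are learned. The function names are hypothetical.

```python
def conv2d_valid(X, K):
    """Eq. (18) at valid positions: S(i, j) = sum_m sum_n X(i - m, j - n) * K(m, n).

    The kernel is effectively flipped. Output row/column 0 corresponds to
    i = kh - 1 and j = kw - 1 in the input.
    """
    H, W = len(X), len(X[0])
    kh, kw = len(K), len(K[0])
    out = []
    for i in range(kh - 1, H):
        row = []
        for j in range(kw - 1, W):
            s = 0.0
            for m in range(kh):
                for n in range(kw):
                    s += X[i - m][j - n] * K[m][n]
            row.append(s)
        out.append(row)
    return out


def cross_correlate2d_valid(X, K):
    """What most deep-learning 'convolution' layers compute: no kernel flip."""
    H, W = len(X), len(X[0])
    kh, kw = len(K), len(K[0])
    return [[sum(X[i + m][j + n] * K[m][n] for m in range(kh) for n in range(kw))
             for j in range(W - kw + 1)]
            for i in range(H - kh + 1)]


X = [[0, 0, 1, 1],
     [0, 0, 1, 1],
     [0, 0, 1, 1],
     [0, 0, 1, 1]]
K = [[1, -1]]   # horizontal-difference (edge) kernel
print(conv2d_valid(X, K)[0])             # [0.0, 1.0, 0.0]
print(cross_correlate2d_valid(X, K)[0])  # [0, -1, 0]
```

Both operations respond to the vertical edge between the second and third columns. Flipping the kernel changes only the sign of the response.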

After the application of convolutional layers, CNNs typically incorporate pooling layers, which execute a form of nonlinear downsampling. The primary objective of pooling is to reduce the spatial dimensions of the feature maps, thus decreasing computational burden and introducing invariance to minor translations or deformations in the input. A common pooling operation is max pooling, defined as

$$P(i,j)=\mathop {\hbox{max} }\limits_{{(m,n) \in {R_{i,j}}}} F(m,n)$$

(19)

where F denotes the input feature map and Ri, j represents the pooling region associated with spatial location (i, j). This operation retains the maximum value within each local region, which preserves prominent features while discarding less informative activations.
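The following sketch implements Eq. (19) with non-overlapping 2 × 2 windows, stride 2, and no padding. These common defaults are assumed here, and the feature map values are made up.

```python
def max_pool2d(F, size=2, stride=2):
    """Eq. (19): each output P(i, j) is the max of F over a size x size window R_{i,j}."""
    H, W = len(F), len(F[0])
    return [[max(F[r][c]
                 for r in range(i, i + size)
                 for c in range(j, j + size))
             for j in range(0, W - size + 1, stride)]
            for i in range(0, H - size + 1, stride)]


F = [[1, 3, 2, 0],
     [4, 6, 1, 1],
     [0, 2, 9, 5],
     [1, 1, 7, 8]]
print(max_pool2d(F))  # [[6, 2], [2, 9]]
```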

As hierarchical feature extraction progresses through stacked convolutional and pooling layers, the feature maps encode increasingly abstract, high-level representations of the input. These high-level features are subsequently processed by one or more fully connected (dense) layers, which compute the final output of the network. The output y of a fully connected layer can be written as

$$y=f(Wx+b)$$

(20)

where x is the input feature vector, W is the weight matrix, b is the bias vector, and f denotes the nonlinear activation function (such as ReLU or softmax, depending on the task). The fully connected layers serve as classifiers or regressors, mapping the learned representations to the desired output, such as category labels in classification or real values in regression.

CNNs perform exceptionally well on perceptual tasks because they learn hierarchical representations that are approximately invariant to small translations. The initial layers tend to capture simple, local patterns, such as oriented edges or color gradients. Deeper layers have larger receptive fields and identify complex structures and semantic content in the input. This progressive abstraction makes CNNs particularly suitable for computer vision applications such as image recognition, object detection, and semantic segmentation. By combining localized feature extraction, parameter sharing, and hierarchical composition, CNNs achieve both computational efficiency and high accuracy in structured data analysis57,58,59.

Multilayer perceptron-artificial neural network

The multilayer perceptron artificial neural network (MLP-ANN) is a foundational artificial neural network (ANN) model designed to approximate complex nonlinear functions in both classification and regression tasks. As a type of feedforward neural network, the MLP-ANN draws inspiration from biological nervous systems and organizes artificial neurons into multiple interconnected layers. Its architecture consists of an input layer, one or more hidden layers, and an output layer. The neurons in each layer connect to the next layer through directed, weighted connections.

In an MLP-ANN, the computation within a single artificial neuron in layer l involves the aggregation of inputs from the previous layer (l − 1), weighted by synaptic strengths and biased by a dedicated parameter. This transformation can be formalized as

$$z_{j}^{(l)}=\sum_{i=1}^{n_{l-1}}\omega_{ji}^{(l)}\,a_{i}^{(l-1)}+b_{j}^{(l)}$$

(21)

Here, zj(l) is the pre-activation (weighted input) of neuron j in layer l. The weight ωji(l) belongs to the connection from neuron i in layer l − 1 to neuron j in layer l. The term ai(l−1) is the activation (output) of neuron i in the previous layer. Finally, bj(l) is the bias of neuron j in layer l, and nl−1 is the number of neurons in layer l − 1.

Following this linear combination, a non-linear activation function f(⋅) is applied to obtain the neuron’s output activation:

$$a_{j}^{{(l)}}=f(z_{j}^{{(l)}})$$

(22)

Common activation functions in MLP-ANNs include the sigmoid, the hyperbolic tangent (tanh), and the rectified linear unit (ReLU). Each enables the network to capture nonlinear and intricate relationships in the data.
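Applying the neuron computation of Eqs. (21) and (22) to every neuron of a layer, and chaining layers, gives the forward pass of an MLP-ANN. The sketch below uses plain Python lists. The layer sizes, weights, and activation choices are hypothetical.

```python
import math


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def identity(z):
    return z  # linear output, typical for regression


def dense_layer(a_prev, W, b, activation):
    """Eqs. (21)-(22) for each neuron j: z_j = sum_i W[j][i] * a_prev[i] + b[j]; a_j = f(z_j)."""
    return [activation(sum(w_ji * a_i for w_ji, a_i in zip(W_j, a_prev)) + b_j)
            for W_j, b_j in zip(W, b)]


def mlp_forward(x, layers):
    """Propagate input x through a list of (W, b, activation) layers."""
    a = x
    for W, b, activation in layers:
        a = dense_layer(a, W, b, activation)
    return a


# Hypothetical network: 2 inputs -> 2 ReLU hidden neurons -> 1 linear output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0], relu),
    ([[2.0, 1.0]], [0.5], identity),
]
print(mlp_forward([3.0, 1.0], layers))  # [6.5]
```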

Training an MLP-ANN consists of finding the weights and biases that minimize the discrepancy between the model's predictions and the true target values. This discrepancy is quantified by a loss function. In a regression context, for instance, the MSE loss is commonly employed, defined as

$$L=\frac{1}{N}\sum_{k=1}^{N}\left(y_k-\hat{y}_k\right)^{2}$$

(23)

Here, L is the average loss, yk is the target value for the k-th example, ŷk is the network's predicted output for that example, and N is the total number of training examples.

Optimization of the MLP-ANN is typically achieved through the backpropagation algorithm in combination with gradient-based methods such as stochastic gradient descent (SGD). During backpropagation, the gradient of the loss function with respect to each weight is computed recursively from the output layer back to the input layer. Weights are then updated according to

$$\omega_{ji}^{(l)}\leftarrow\omega_{ji}^{(l)}-\eta\,\frac{\partial L}{\partial\omega_{ji}^{(l)}}$$

(24)

Here, ωji(l) denotes the current value of the weight, and η is the learning rate, which determines the size of each update. The term ∂L/∂ωji(l) is the gradient obtained by backpropagation, and biases are updated in the same way. This cycle of forward propagation, loss evaluation, backpropagation, and weight adjustment is repeated over multiple training epochs. As the network iteratively refines its weights and biases, the MLP-ANN progressively reduces its prediction error on the training data, with the aim of generalizing to new, unseen data. An MLP with at least one hidden layer and a suitable nonlinear activation can approximate any continuous function on a compact domain to arbitrary accuracy, given enough hidden neurons (the universal approximation theorem). This property underpins its wide application in domains such as signal processing, pattern recognition, and predictive modeling60,61,62.
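The following sketch shows the training loop of Eqs. (23) and (24) for the simplest possible network, a single linear output neuron. In this case, the backpropagated gradients reduce to short closed-form expressions. The sketch uses full-batch gradient descent rather than SGD. The data, learning rate, and epoch count are illustrative assumptions.

```python
def train_linear_neuron(xs, ys, lr=0.1, epochs=500):
    """Full-batch gradient descent on the MSE loss (Eq. 23) with the update rule of Eq. (24).

    Model: y_hat = w * x + b (one neuron, identity activation). For this single
    layer, backpropagation gives:
        dL/dw = (2/N) * sum((y_hat - y) * x),   dL/db = (2/N) * sum(y_hat - y)
    """
    w, b = 0.0, 0.0
    n = len(xs)
    history = []
    for _ in range(epochs):
        y_hat = [w * x + b for x in xs]                            # forward pass
        errors = [yh - y for yh, y in zip(y_hat, ys)]
        history.append(sum(e * e for e in errors) / n)             # Eq. (23)
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))  # backward pass
        grad_b = 2.0 / n * sum(errors)
        w -= lr * grad_w                                           # Eq. (24)
        b -= lr * grad_b
    return w, b, history


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]   # generated from y = 2x + 1
w, b, history = train_linear_neuron(xs, ys)
print(round(w, 4), round(b, 4))  # 2.0 1.0
```

With more layers, the same update applies to every weight. The gradients are then obtained by propagating the error backward through each layer with the chain rule.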

Lasso regression

Lasso (least absolute shrinkage and selection operator) regression is a regularized linear regression method. It can improve both the predictive accuracy and interpretability of statistical models, particularly in high-dimensional settings. Its essential innovation is the inclusion of an L1 regularization penalty in the loss function. This penalty enables simultaneous coefficient shrinkage and automatic variable selection, distinguishing Lasso from ordinary least squares regression.

The objective function optimized by the Lasso Regression model augments the ordinary least squares (OLS) cost function with the sum of the absolute values of the coefficients. Mathematically, this is expressed as

$$\hat{\beta}=\arg\min_{\beta}\left\{\frac{1}{2n}\sum_{i=1}^{n}\left(y_i-x_i^{T}\beta\right)^{2}+\lambda\sum_{j=1}^{p}\left|\beta_j\right|\right\}$$

(25)

In this formulation, yi denotes the target value of the i-th instance, and xi is the p-dimensional vector of predictors for that instance. β = [β1, β2, …, βp]T is the vector of regression coefficients, n is the number of observations, and p is the number of predictors. The regularization parameter λ ≥ 0 determines the strength of the penalty. The first term,

$$\frac{1}{2n}\sum_{i=1}^{n}\left(y_i-x_i^{T}\beta\right)^{2}$$

(26)

is one half of the model's empirical MSE on the training data; the factor 1/2 simplifies the gradient. It quantifies the discrepancy between observed targets and the model's predictions. The second term,

$$\lambda \sum\limits_{{j=1}}^{p} {|{\beta _j}|}$$

(27)

is the L1 penalty, which is proportional to the sum of the absolute values of the regression coefficients. This penalty has the effect of both regularizing the model and, crucially, driving some of the βj coefficients exactly to zero. As a direct result, Lasso inherently performs feature selection by retaining only the most relevant variables within the model.

The amount of regularization in Lasso Regression is controlled by the hyperparameter λ. A larger value of λ increases the penalty on nonzero coefficients, resulting in a sparser model with more coefficients set to zero. Conversely, a smaller λ weakens the regularization. As λ approaches zero, the solution approaches the ordinary least squares estimate (Eq. 25 without the penalty term). The optimal value of λ is typically determined by cross-validation to balance model simplicity and predictive accuracy.
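The following minimal sketch minimizes Eq. (25) by cyclic coordinate descent. Each coefficient update applies a soft-thresholding operator that sets small coefficients exactly to zero. The sketch assumes centered data without an intercept. It uses a toy orthogonal design so the results can be checked by hand: each coefficient equals the soft-thresholded least-squares coefficient. The function names and data are hypothetical. For reference, scikit-learn's `Lasso` minimizes the same 1/(2n)-scaled objective, with λ named `alpha`.

```python
def soft_threshold(z, gamma):
    """S(z, gamma) = sign(z) * max(|z| - gamma, 0); this is what zeroes coefficients."""
    if z > gamma:
        return z - gamma
    if z < -gamma:
        return z + gamma
    return 0.0


def lasso_coordinate_descent(X, y, lam, n_iter=200):
    """Minimize (1/2n)||y - X beta||^2 + lam * ||beta||_1 (Eq. 25) one coordinate at a time.

    X: list of n rows with p features each; no intercept (assumes centered data).
    """
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    col_sq = [sum(row[j] ** 2 for row in X) / n for j in range(p)]
    for _ in range(n_iter):
        for j in range(p):
            # Correlation of feature j with the partial residual (other features removed).
            rho = 0.0
            for row, y_i in zip(X, y):
                pred_without_j = sum(row[k] * beta[k] for k in range(p) if k != j)
                rho += row[j] * (y_i - pred_without_j)
            rho /= n
            beta[j] = soft_threshold(rho, lam) / col_sq[j]
    return beta


X = [[1, 1], [1, -1], [-1, 1], [-1, -1]]  # orthogonal columns, (1/n) * sum(x^2) = 1
y = [3.5, 2.5, -2.5, -3.5]                # = 3 * column 0 + 0.5 * column 1
for lam in (0.0, 1.0, 5.0):
    print(lam, lasso_coordinate_descent(X, y, lam))
# 0.0 [3.0, 0.5]
# 1.0 [2.0, 0.0]
# 5.0 [0.0, 0.0]
```

Increasing λ first zeroes the smaller coefficient and then both, mirroring the sparsity behavior described above.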

Lasso Regression is especially beneficial in settings where interpretability and feature reduction are important. In fields such as bioinformatics, Lasso can identify key genetic markers from thousands of potential predictors. In econometrics and finance, it assists in pinpointing the most influential indicators from large datasets. In engineering, Lasso is used for sparse signal reconstruction and for reducing model complexity in signal processing tasks.

Despite its strengths, Lasso Regression also faces limitations. When predictor variables exhibit high collinearity, Lasso may arbitrarily select one variable from a group of correlated variables, making feature selection less stable. Additionally, careful and computationally intensive tuning of the regularization parameter λ is required for optimal performance. In cases where predictors are highly correlated, alternative regularization techniques such as Ridge Regression or Elastic Net may offer improved prediction and stability63,64,65,66.