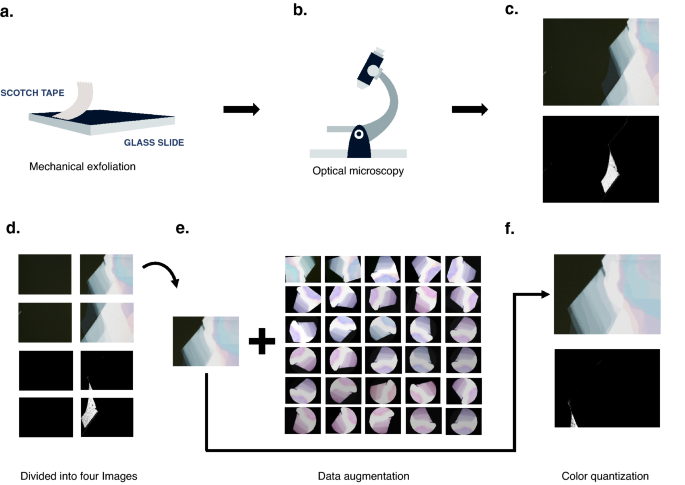

The optical images used in this study include transition metal dichalcogenide (TMD) flakes on SiO2/Si substrates, TMD flakes on polydimethylsiloxane (PDMS), and TMD flakes on SiO2/Si and PDMS (if present). Using multiple substrate types models a more realistic flake fabrication environment and makes the algorithms more robust. All samples were mechanically exfoliated in a 99.999% N2-filled glove box (Fig. 1a). Optical images were acquired in the same environment, so the samples were not exposed to ambient conditions during fabrication or imaging (Fig. 1b). The 83 MoSe2 images used throughout this study were taken at 100× magnification by various members of the Hui Deng group, who chose different amounts of light to illuminate the samples (Fig. 1c). Each image was divided into four smaller, symmetric images containing randomized amounts of flakes and bulk material, which were then manually classified (Fig. 1d).

Fig. 1 MoSe2 flake production, image collection, and processing. (a) Mechanical exfoliation of MoSe2 flakes using Scotch tape. (b) Optical images taken with an optical microscope. (c) A typical optical image of the flakes and the surrounding bulk material, with a masked version below in which only the flakes are shown in white. (d) The original image in (c) split into four images, with the corresponding masked versions below. (e) 30 images resulting from the padding, rotation, flipping, and color-jitter augmentation methods. (f) The image recreated with 20 colors, with the masked version below.

Finding flakes is very time-consuming, which keeps these datasets small. Small datasets are common in many fields, such as medicine and physics. However, deep learning models such as CNNs typically contain a large number of parameters and need to be trained on large-scale data to avoid severe overfitting. Data augmentation is a practical solution to this problem.24 It produces training data with greater diversity and sample size by generating new samples from existing data, which allows deep learning models to be trained to better performance (see Supplementary Methods). Applying data augmentation has two advantages. First, it scales up the data on which the CNN is trained. Second, the randomness induced by augmentation forces the CNN to capture and extract spatially invariant features to make predictions, which increases model robustness.24 For this reason, augmentation is common with CNNs even on large datasets. Augmented images are typically generated on the fly during training to further help the model extract robust features. Because of limited computing resources, we instead generated the augmented data before fitting the model, expanding the dataset from 332 images to 10,292 images (Fig. 1e).
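The augmentation step can be sketched as follows. This is a minimal pure-Python illustration, not the pipeline used in this study: an image is modeled as a nested list of (r, g, b) tuples, and the helper names (`hflip`, `vflip`, `rot90`, `pad`, `color_jitter`, `augment`), the 1-pixel padding, and the ±20 brightness shift are hypothetical choices. Generating 30 random variants per image and keeping the original gives 31 images per input, which is consistent with 332 × 31 = 10,292.

```python
import random

# Hypothetical image model: a list of rows, each row a list of (r, g, b)
# tuples with channel values in 0..255.

def hflip(img):
    """Mirror the image left-to-right."""
    return [row[::-1] for row in img]

def vflip(img):
    """Mirror the image top-to-bottom."""
    return img[::-1]

def rot90(img):
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]

def pad(img, width, fill=(0, 0, 0)):
    """Add a constant-color border of `width` pixels on every side."""
    w = len(img[0]) + 2 * width
    blank = [fill] * w
    body = [[fill] * width + list(row) + [fill] * width for row in img]
    return [list(blank) for _ in range(width)] + body + [list(blank) for _ in range(width)]

def color_jitter(img, rng, max_shift=20):
    """Shift all channels by one random offset (brightness-like jitter), clamped to 0..255."""
    shift = rng.randint(-max_shift, max_shift)
    clamp = lambda v: max(0, min(255, v + shift))
    return [[tuple(clamp(c) for c in px) for px in row] for row in img]

def augment(img, n_variants, rng):
    """Return the original image plus n_variants randomly transformed copies."""
    ops = [hflip, vflip, rot90, lambda im: pad(im, 1)]
    out = [img]
    for _ in range(n_variants):
        new = img
        # Apply a random subset of geometric transforms, then color jitter.
        for op in rng.sample(ops, k=rng.randint(1, len(ops))):
            new = op(new)
        out.append(color_jitter(new, rng))
    return out
```

In practice the same transforms are available in libraries such as torchvision (for example `RandomHorizontalFlip`, `RandomRotation`, `Pad`, and `ColorJitter`). Generating the variants once up front trades the extra diversity of on-the-fly augmentation for lower compute per epoch.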

After augmentation, we applied color quantization to all images (Fig. 1f). Quantization reduced noise and reduced the number of image colors to the manageable number needed to extract features for the tree-based algorithms. Color quantization algorithms use pixel-wise vector quantization to reduce the colors in an image to a desired number while preserving the original quality.16 We used K-means clustering to identify the required number of color cluster centers, representing each pixel as a point in 3D (RGB) space with 1-byte channel values. The K-means model is trained on a small sample of images, predicts the color indices of the remaining images, and recreates them with the specified number of colors (see Supplementary Methods). We recreated the original MoSe2 images with 5, 20, and 256 colors to determine which color resolution produced the most effective and generalizable model. Images were not recreated with fewer than 5 colors because the result consisted only of the background color and did not show the small flakes of the original image. The 20-color images looked almost indistinguishable from the originals, but with greatly reduced noise. We recreated the images with 256 color clusters to mimic unquantized images. We compare the accuracy of tree-based algorithms and CNNs on datasets of images recreated with 5 and 20 colors. We also compare the performance of tree-based algorithms on images recreated with 256 colors to that of CNNs on unquantized images (CNN classification does not require quantization).
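The quantization step can be illustrated with plain Lloyd's K-means on RGB pixels. This pure-Python sketch assumes squared Euclidean distance in RGB space and random initialization from the sample pixels; the function names are hypothetical, and a practical implementation would more likely use a library such as scikit-learn's `KMeans`. The centers are fit on a sample of pixels, and `quantize` then maps every pixel of any image to its nearest center, recreating the image with k colors.

```python
import random

def sq_dist(a, b):
    """Squared Euclidean distance between two RGB points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def nearest(px, centers):
    """Index of the center closest to pixel px."""
    return min(range(len(centers)), key=lambda i: sq_dist(px, centers[i]))

def kmeans(points, k, rng, iters=20):
    """Plain Lloyd's k-means on RGB points; returns k cluster centers."""
    centers = rng.sample(points, k)
    for _ in range(iters):
        groups = [[] for _ in range(k)]
        for p in points:
            groups[nearest(p, centers)].append(p)
        new_centers = []
        for c, g in zip(centers, groups):
            if g:
                # Move the center to the mean color of its assigned pixels.
                new_centers.append(tuple(sum(ch) / len(g) for ch in zip(*g)))
            else:
                new_centers.append(c)  # keep an empty cluster's center unchanged
        if new_centers == centers:
            break
        centers = new_centers
    return centers

def quantize(img, centers):
    """Recreate the image by replacing each pixel with its nearest center color."""
    palette = [tuple(round(c) for c in ctr) for ctr in centers]
    return [[palette[nearest(px, centers)] for px in row] for row in img]
```

Fitting on a sample and then calling `quantize` on every other image mirrors the fit-on-a-subset, predict-on-the-rest workflow described above, and keeps the expensive clustering step small.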

After processing the optical images, we classify them with tree-based machine learning algorithms. Tree-based algorithms are a family of supervised machine learning methods that perform classification or regression based on feature values in a constructed tree-like structure. The tree consists of an initial root node, decision nodes that split on feature values to determine whether the input image contains 2D flakes, and childless leaf nodes (or terminal nodes) to which target classes or values are assigned.25 Advantages of decision trees include their ability to model complex interactions among discrete and continuous attributes, high generalizability, robustness to outliers in the predictors, and an easily interpretable decision-making process.26,27 These attributes facilitate coupling tree-based algorithms with optical microscopy to accelerate 2D material identification. In particular, decision trees serve as the building blocks of ensemble classifiers such as random forests and gradient-boosted decision trees, which improve prediction accuracy and smooth the classification boundaries.28,29,30
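To make the notion of a decision node concrete, the sketch below searches for a single split of the form `x[f] <= t` that minimizes the weighted Gini impurity of the two child nodes. Gini impurity is the default criterion in scikit-learn's `DecisionTreeClassifier`, but the criterion used in this study is an assumption here, as are the function names. A full tree applies this search recursively to each child until a stopping rule is met; random forests average many such trees grown on bootstrap samples with random feature subsets, and gradient boosting fits trees sequentially to the errors (loss gradients) of the current ensemble.

```python
def gini(labels):
    """Gini impurity of a list of class labels: 1 - sum_c p_c^2."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = {}
    for y in labels:
        counts[y] = counts.get(y, 0) + 1
    return 1.0 - sum((c / n) ** 2 for c in counts.values())

def best_split(X, y):
    """Try every (feature, threshold) pair for the rule x[f] <= t and return
    (feature_index, threshold, weighted_child_impurity) for the best one.
    If no split improves on the parent node, feature and threshold are None."""
    best = (None, None, gini(y))
    n = len(y)
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            left = [yi for row, yi in zip(X, y) if row[f] <= t]
            right = [yi for row, yi in zip(X, y) if row[f] > t]
            if not left or not right:
                continue  # a split must produce two non-empty children
            score = (len(left) * gini(left) + len(right) * gini(right)) / n
            if score < best[2]:
                best = (f, t, score)
    return best
```

For example, with hypothetical feature rows `[[5, 0, 2], [4, 1, 3], [6, 3, 2], [5, 4, 1]]` (e.g. counts in three contrast ranges) and labels `[0, 0, 1, 1]` (1 = contains flakes), `best_split` returns `(1, 1, 0.0)`: splitting on feature 1 at threshold 1 yields two pure children.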

The single-tree and ensemble-tree features mimic the physical method of identifying graphene crystallites against a thick background using color contrast: the flakes are thin enough that interference colors create a visible optical contrast relative to an empty wafer, which makes them identifiable.11 Our method computes a similar color contrast for each input image, and the tree-based methods use these color-contrast data to make decisions and classify images.

The color contrast used by the tree-based methods is computed as follows. A 2D matrix representation of the input image is fed to the quantization algorithm, which recreates the image with a specified number of colors. Then, based on the RGB color codes, the color differences between all pairs of color clusters are computed to model the optical contrast. These differences fall into color-contrast ranges that span the extreme values of the data. For the ensemble classifiers specifically, only three relevant color-contrast ranges were selected for model training and testing to prevent overfitting: the lowest range, a middle range representing the color contrast between the flakes and the background material, and the highest range (see Supplementary Methods). The list of the number of color differences in each range is the feature vector the tree-based methods use for classification.
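The color-contrast features can be sketched as follows. The sketch assumes that the color difference between two clusters is the Euclidean distance between their RGB codes and that the ranges are given by explicit bin edges; both the metric and the edge values in the example are hypothetical, since the actual ranges are described in the Supplementary Methods. The output is the list of counts per range that a tree-based classifier would receive as its feature vector.

```python
import math
from itertools import combinations

def color_differences(palette):
    """Euclidean RGB distance between every pair of cluster colors (assumed metric)."""
    return [math.dist(a, b) for a, b in combinations(palette, 2)]

def contrast_features(palette, edges):
    """Count how many pairwise color differences fall in each range.

    `edges` = [e0, e1, ..., em] defines the ranges [e0, e1), [e1, e2), ...,
    and a closed last range [e_(m-1), e_m]. Differences outside all ranges
    are ignored.
    """
    diffs = color_differences(palette)
    counts = [0] * (len(edges) - 1)
    for d in diffs:
        for i in range(len(counts)):
            last = i == len(counts) - 1
            if edges[i] <= d < edges[i + 1] or (last and d == edges[i + 1]):
                counts[i] += 1
                break
    return counts
```

For example, the palette `[(0, 0, 0), (3, 4, 0), (0, 0, 100), (255, 255, 255)]` with hypothetical edges `[0, 50, 150, 442]` gives `[1, 2, 3]`: one low-contrast pair, two mid-range pairs, and three high-contrast pairs. The largest possible RGB distance is 255√3 ≈ 441.7, so an upper edge of 442 covers the full range.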

Once these features were computed, a grid search with k-fold cross-validation was used to determine the optimal hyperparameter values for each estimator. K-fold cross-validation divides the training data into k partitions; in each iteration, one partition is used for validation (testing) and the remaining k − 1 are used for training.31 For each tree-based method, the estimator with the hyperparameter combination that produced the highest accuracy on the test data was selected (see Supplementary Methods). We employed 5-fold cross-validation with a standard 75/25 training/test split. After tuning the hyperparameters of the decision tree with k-fold cross-validation, we visualized the fitted estimator to evaluate the physical basis of its decisions. Because gradient-boosted decision trees and random forests are ensembles of decision trees, the overall properties of their decisions can be inferred from a single decision-tree visualization.
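A grid search with k-fold cross-validation can be sketched in pure Python as follows. `fit_stump` is a hypothetical toy estimator standing in for a tree-based classifier, and the other function names are illustrative; in practice scikit-learn's `GridSearchCV` performs this search. Each hyperparameter combination is scored by its mean validation accuracy over the k folds, using the same fold assignment for every combination so that the scores are comparable. In the setting described above, the data passed to `grid_search` would be the training portion of the 75/25 split.

```python
import random
from itertools import product

def k_fold_indices(n, k, rng):
    """Shuffle 0..n-1 and deal the indices into k nearly equal folds."""
    idx = list(range(n))
    rng.shuffle(idx)
    return [idx[i::k] for i in range(k)]

def cross_val_accuracy(fit, X, y, params, k, rng):
    """Mean validation accuracy: each fold is held out once while the model
    is fit on the remaining k - 1 folds."""
    folds = k_fold_indices(len(y), k, rng)
    scores = []
    for i, val in enumerate(folds):
        train = [j for m, f in enumerate(folds) if m != i for j in f]
        predict = fit([X[j] for j in train], [y[j] for j in train], **params)
        correct = sum(predict(X[j]) == y[j] for j in val)
        scores.append(correct / len(val))
    return sum(scores) / k

def grid_search(fit, X, y, grid, k=5, seed=0):
    """Score every hyperparameter combination in `grid`; keep the best one."""
    names = sorted(grid)
    best_params, best_score = None, -1.0
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        # Same seed for every combination, so all candidates see the same folds.
        score = cross_val_accuracy(fit, X, y, params, k, random.Random(seed))
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score

def fit_stump(X, y, feature):
    """Hypothetical toy estimator: on one feature, pick the threshold t that
    maximizes training accuracy for the rule 'predict 1 if x[feature] > t'."""
    best_t, best_acc = None, -1
    for t in sorted({row[feature] for row in X}):
        acc = sum((row[feature] > t) == bool(yi) for row, yi in zip(X, y))
        if acc > best_acc:
            best_t, best_acc = t, acc
    return lambda x: int(x[feature] > best_t)
```

For example, if feature 1 cleanly separates the two classes and feature 0 is constant, `grid_search(fit_stump, X, y, {"feature": [0, 1, 2]})` selects `{"feature": 1}`.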

In addition to tree-based methods, we also examined deep learning algorithms. Deep neural networks, which use successive layers of abstraction to learn more flexible latent representations, have recently achieved great success in various tasks, including object recognition.32,33 A deep convolutional neural network takes an image as input and outputs class labels or another kind of result, depending on the task. In the feedforward step, a series of convolution and pooling operations is applied to the image to extract visual features. The CNN model we employ is ResNet18.34 We train the network from scratch, initializing its parameters with uniform random variables,35 because no public neural networks pre-trained on similar data are available. ResNet18 was trained as follows. 75% of the original images and all augmented images were used for training; this set can be further split into training and validation sets when tuning the hyperparameters. We used a small batch size of 4 and ran 50 epochs of stochastic gradient descent with momentum,36 with a learning rate of 0.01 and a momentum factor of 0.9. Various efforts have been made to generate accurate visualizations of the inner layers of CNNs, including Grad-CAM, which we employ. Grad-CAM cannot fully visualize a CNN because it only uses information from the last convolutional layer. However, this last convolutional layer is expected to offer the best trade-off between high-level semantics and detailed spatial information, so Grad-CAM can successfully visualize what the CNN uses to make decisions.22
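The core Grad-CAM computation can be sketched as follows. Each channel k of the last convolutional layer receives a weight α_k equal to the spatial average of the gradient of the class score with respect to that channel's activations, and the heat map is the ReLU of the weighted sum of the activation maps. This pure-Python sketch assumes the activations and gradients have already been extracted (in PyTorch this is typically done with hooks), omits upsampling the map to the input resolution, and adds a normalization to [0, 1] for display that is a common convention rather than part of the core method.

```python
def grad_cam(activations, gradients):
    """Combine last-layer activations and gradients into a Grad-CAM map.

    activations, gradients: lists of K feature maps (H x W nested lists);
    gradients[k][i][j] is d(class score) / d(activations[k][i][j]).
    Returns an H x W heat map, ReLU-ed and scaled so its peak is 1.
    """
    K = len(activations)
    H, W = len(activations[0]), len(activations[0][0])
    # alpha_k: global-average-pooled gradient for channel k.
    alphas = [sum(sum(row) for row in g) / (H * W) for g in gradients]
    # Weighted sum of activation maps, keeping only positive evidence (ReLU).
    cam = [[max(0.0, sum(alphas[k] * activations[k][i][j] for k in range(K)))
            for j in range(W)] for i in range(H)]
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]
    return cam
```

For example, with activations `[[[1, 0], [0, 0]], [[0, 0], [0, 2]]]` and gradients `[[[1, 1], [1, 1]], [[-1, -1], [-1, -1]]]`, the channel weights are 1 and −1 and the map is `[[1.0, 0.0], [0.0, 0.0]]`: the ReLU suppresses the negatively weighted channel.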