“AI (artificial intelligence) videos are scary.”

Aubrey Lim, who works in the tourism industry, doesn’t remember the account behind the first AI video that hooked her, but the content is etched in her mind.

The video shows a cat being coaxed out of a typical Japanese train station by a security guard. For cat lovers like her, it’s catnip. She didn’t think much of it until she went to the comments and saw a few users flagging it as AI-generated.

Once the 38-year-old realized that what she had just been watching was AI, she immediately expressed on Instagram that she had “no interest” in viewing such content.

“These videos are becoming too real,” Lim lamented.

There has been a lot of discussion about the malicious use of AI to create deepfakes. In some cases, this technology is being used to spread misinformation. Senior Minister Lee Hsien Loong, Prime Minister Lawrence Wong and former President Halimah Yacob have all been deepfaked in the past few years.

Just last November, the police investigated deepfake nude photos of female students that were created and shared by other students at the Singapore Sports School.

What’s less discussed is the proliferation of AI-generated videos depicting surreal everyday scenarios, such as the cat video Lim saw.

“What’s the point in doing this?” Ms Lim asked.

From cute cats to angry bait

Videos of cats riding trains or gathering in a circle at a Yishun void deck may seem harmless, whether wholesome, humorous, or cute, but some are designed to provoke a stronger reaction.

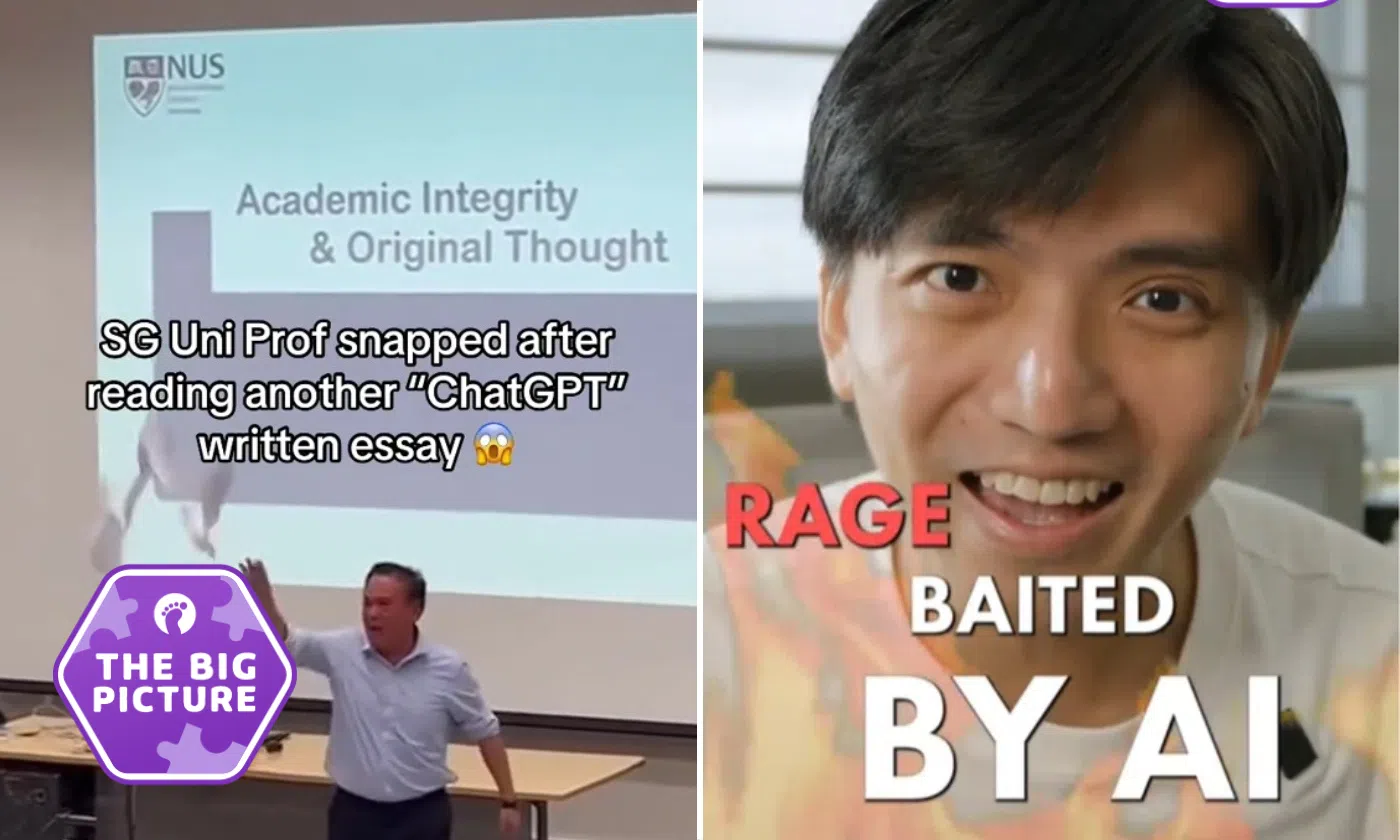

Recently, an AI-generated video purportedly showed a professor at the National University of Singapore using abusive language during a class on the use of ChatGPT.

A similar video posted by TikTok user @Attap.Kia, in which a professor slams students who rely on ChatGPT for their assignments, garnered at least 6.3 million views, 214,600 likes, 8,356 comments, and 22,200 saves.

The video, labeled as AI-generated by the account, sparked a number of emotional reactions.

While some commenters expressed support for instructors, others offered advice on how students can leverage AI to help with their schoolwork without relying too heavily on it. Notably, an overwhelming number of the comments are written in Malay, suggesting that the video has made ripples beyond our borders.

“I never thought the professor’s video would go viral, especially not in Malaysia,” Attap Kia, who wanted to be known only by his TikTok handle, told Stomp.

Still, the content creator wasn’t particularly surprised by the huge response. “When a topic is about an issue that is close to people’s hearts, it’s natural for people to be passionate about it,” Attap Kia explained.

Billy Heng, 31, who works in video production and social media, echoed this view.

In October, Mr Heng posted a video about getting enraged by an AI clip: a video of a woman ranting about how most hawker centers sell the same food. In his video, he explained how he was angry at the woman’s “bad view” and wanted to “correct” it.

He soon discovered that the video, from the account @HDB Life, was marked as AI-generated content.

Many AI content creators clearly label their videos. Some still have watermarks from the AI software used, making it clear that the footage is not real.

When asked why he and others continue to “fall prey” to such videos, Mr Heng told Stomp: “The tags at the bottom of the video, coupled with the realism of the visuals and tonality of the voice, are very hard to notice when scrolling mindlessly, which is why people continue to be fooled by such videos.”

Associate Professor Elmy Nekmat, from the National University of Singapore, suggested that the term “AI-generated” itself is “vague”, regardless of where it appears on the screen.

Dr Elmy explained that the term can mean anything from AI editing and AI styling to content created and posted entirely by AI. This ambiguity often causes viewers to ignore the labels instead of stopping to understand which parts were created by AI.

Mr Heng and Ms Lim both said they are now more cautious when approaching AI content. Mr Heng added that he would “never” engage with such content, as interacting with robots “means nothing” to his “existence”.

Is it just for fun?

Stomp reached out to several AI content creators, including The HDB Life, @elonmuskgives and @daily_mrt_. Attap Kia was the only creator to respond.

“I have always wanted to explore content creation, and AI tools have made it easier to do so,” says Attap Kia.

The content creator sees the videos as a way to “move beyond realism to short fictional stories and microdramas” and professes to be “interested in using these tools to tell stories that reflect Singaporean life and issues that people care about.”

For Attap Kia, these videos are satirical storytelling meant to be entertaining, with humor forming the “core” of the work.

“Social commentary naturally emerges from the scenarios I create, but I leave much of that discussion to the audience and social media engagement.”

Attap Kia acknowledged that people can sometimes mistake these videos for reality, but insisted that all content is clearly tagged as being generated by AI.

“In some cases, we may exaggerate elements or add captions to emphasize that the content is inspired by real issues but is not real footage,” Attap Kia said.

Mr Heng remains perplexed as to why creators would “make such nonsense”.

“I don’t understand why someone would willingly choose to produce five to 10 videos a day when they are of virtually no benefit to society,” the 31-year-old said.

Where does the law draw the line?

AI videos like Attap Kia’s may not appear to be breaking the law, but that doesn’t absolve creators of legal liability.

“If a video makes it seem like a real person said or did something that they did not actually do, that could be defamation,” Jen Guan Lam, a lawyer at Clyde & Company, told Stomp.

The lawyer added that defamation law, copyright law, and the Protection from Online Falsehoods and Manipulation Act, along with voluntary frameworks such as the Model AI Governance Framework, can help manage the risks of AI content.

That being said, gaps “probably” exist.

Lam noted that there is no comprehensive law specifically targeting synthetic media and deepfakes, and questions continue to arise over liability when AI content is harmful.

Furthermore, governance frameworks provide guidance and are not strictly legally binding, leaving enforcement and accountability “potentially uncertain.”

The lawyer shared some tips for creators of AI-generated satire and commentary.

- Don’t present false facts about real people

- Be careful with copyrighted material. Get permission or use original material.

- Don’t make it seem like someone is endorsing your work without their consent

- Avoid harassment and leaking personal information

- Take extra care during elections

- Label your content as AI-generated, keep records of the creative process, and follow privacy rules when using data from real people

Lam warned that these measures do not eliminate all legal risks, but demonstrate “responsible intentions” and a “narrow scope of risk” under current law.

Stay safe in the age of AI content

Mr Heng accepts AI videos as a form of “creative expression” but feels they cross the line when they “send certain harmful messages that are not true,” such as inciting racial tensions or impersonating officials.

Although he is not a strong advocate of reporting content, he believes social media platforms should regulate AI content to prevent it from going “out of control.”

Attap Kia agreed, saying, “We support increased labeling of AI-generated content to promote transparency and help viewers understand what they are watching.”

However, Attap Kia warned that prominent watermarks can add “clutter” to videos and can “hinder” visual appeal and storytelling.

Omar Dapur, CEO of Deepfaic, a Singapore-based company that develops tools for enterprises to detect AI threats, said AI has advanced to the point where some videos are undetectable by deepfake detection platforms, including Deepfaic.

“There is no doubt that motivated actors with sufficient resources can already create these types of videos at scale,” Dapur said.

He said it’s “much harder now” to tell what’s real and what’s fake. He cited Denmark’s move to give people a copyright in their likenesses as an example of authorities clamping down on deepfakes, and argued for regulations to penalize the malicious use of AI.

That said, he advocates for individuals to adopt a “critical mindset” as their “best defense” when it comes to day-to-day social media use.

Social media users need to understand that “the main purpose of social media is engagement”, not necessarily truth. They need to be careful about what they consume and be aware of their own cognitive biases.

Dr Elmy said: “People tend to be biased and pay attention to information that is negative, threatening, emotional, strange, or unconventional.”

“When AI is used to create content, such biases can be exploited in more extreme ways to evoke feelings of fear, injustice, disgust, and anger in viewers.”

Ultimately, he feels that AI videos like this are here to stay because the tools are cheap or free, social platforms reward high-arousal content, and creators rely on AI to quickly create material.

“It will not only grow, but may even become a core feature of the online media landscape, and we must all be prepared to adapt as quickly and safely as possible.”
