Variational mode decomposition

Variational mode decomposition (VMD)23 is an innovative, fully non-recursive and adaptive signal decomposition method. It decomposes the original signal \(x\) into a set of intrinsic mode functions (IMFs) by finding the optimal solution of a constrained variational problem:

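$$ \left\{ \begin{array}{l} \min\limits_{\{\mu_{k}\},\{\omega_{k}\}} \sum\limits_{k=1}^{K} \left\| \partial_{t} \left[ \left( \delta(t) + \frac{j}{\pi t} \right) \otimes \mu_{k}(t) \right] e^{-j\omega_{k} t} \right\|_{2}^{2} \\ \text{s.t.} \quad \sum\limits_{k=1}^{K} \mu_{k} = x \end{array} \right. $$

(1)

where \(K\) is the number of IMFs; \(\{\mu_{k}\} = \{\mu_{1}, \ldots, \mu_{K}\}\) is the set of IMF components; \(\{\omega_{k}\} = \{\omega_{1}, \ldots, \omega_{K}\}\) is the set of their center frequencies; \(\delta(t)\) is the Dirac delta function; \(j\) is the imaginary unit; \(\otimes\) denotes convolution; and \(\partial_{t}\) is the partial derivative with respect to time. To turn the constrained problem into an unconstrained one, a quadratic penalty term and a Lagrangian multiplier are introduced, giving the augmented Lagrangian

$$ L\left(\{\mu_{k}\},\{\omega_{k}\},\lambda\right) = \alpha \sum\limits_{k=1}^{K} \left\| \partial_{t} \left[ \left( \delta(t) + \frac{j}{\pi t} \right) \otimes \mu_{k}(t) \right] e^{-j\omega_{k} t} \right\|_{2}^{2} + \left\| x(t) - \sum\limits_{k=1}^{K} \mu_{k}(t) \right\|_{2}^{2} + \left\langle \lambda(t),\, x(t) - \sum\limits_{k=1}^{K} \mu_{k}(t) \right\rangle $$

(2)

where \(\alpha\) is the quadratic penalty factor, \(\lambda\) is the Lagrangian multiplier, \(\lambda(t)\) is the value of \(\lambda\) at time \(t\), and \(x(t)\) is the value of \(x\) at time \(t\). The saddle point of Equation (2), which gives the solution of Equation (1), is found iteratively with the alternating direction method of multipliers (ADMM).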
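To make the ADMM solution concrete, here is a minimal NumPy sketch of the VMD iteration. It is a simplified illustration, not the implementation used in this study: it works on the one-sided FFT spectrum (standing in for the analytic signal produced by the \(\delta(t) + j/(\pi t)\) term), skips the boundary mirroring of the original VMD formulation, uses normalised frequency in cycles per sample, and initialises the centre frequencies evenly. The function name `vmd` and the defaults for `alpha` (playing the role of \(\alpha\)), `tau` (the dual-ascent step for \(\lambda\)), `tol` and `max_iter` are illustrative assumptions.

```python
import numpy as np

def vmd(x, K=3, alpha=2000.0, tau=0.0, tol=1e-7, max_iter=500):
    """Simplified VMD via ADMM on the one-sided spectrum (no boundary mirroring)."""
    x = np.asarray(x, dtype=float)
    N = len(x)
    f_hat = np.fft.rfft(x)                         # spectrum at non-negative frequencies
    freqs = np.fft.rfftfreq(N)                     # normalised frequency, 0 ... 0.5 cycles/sample
    u_hat = np.zeros((K, freqs.size), dtype=complex)
    omega = np.linspace(0.0, 0.5, K + 2)[1:-1]     # assumed: evenly spaced initial centre frequencies
    lam_hat = np.zeros(freqs.size, dtype=complex)  # Lagrangian multiplier in the frequency domain
    for _ in range(max_iter):
        u_prev = u_hat.copy()
        for k in range(K):
            # Wiener-filter update of mode k around its centre frequency omega_k
            rest = u_hat.sum(axis=0) - u_hat[k]
            u_hat[k] = (f_hat - rest + lam_hat / 2) / (1 + 2 * alpha * (freqs - omega[k]) ** 2)
            # new centre frequency = power-weighted mean frequency of mode k
            power = np.abs(u_hat[k]) ** 2
            omega[k] = np.sum(freqs * power) / (np.sum(power) + 1e-12)
        # dual ascent on the constraint sum_k mu_k = x (no update when tau = 0)
        lam_hat = lam_hat + tau * (f_hat - u_hat.sum(axis=0))
        change = np.sum(np.abs(u_hat - u_prev) ** 2) / (np.sum(np.abs(u_prev) ** 2) + 1e-12)
        if change < tol:
            break
    modes = np.fft.irfft(u_hat, n=N, axis=-1)      # back to the time domain, one row per IMF
    return modes, omega

# Usage: a test signal made of two sinusoids at 0.05 and 0.2 cycles/sample
n = np.arange(1000)
x = np.cos(2 * np.pi * 0.05 * n) + 0.5 * np.cos(2 * np.pi * 0.2 * n)
modes, omega = vmd(x, K=2)
```

On this two-tone signal the two centre frequencies should settle near 0.05 and 0.2. Setting `tau=0` leaves the multiplier at zero, a common choice for noisy signals that relaxes the exact-reconstruction constraint.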

Improved particle swarm optimization

In the basic particle swarm optimization (PSO) algorithm, the inertia weight \(\omega\) and the learning factors \(c_{1}\) and \(c_{2}\) are constants. As a result, the search easily becomes trapped in local optima, and its optimization capability is relatively poor for problems with multiple objective functions and constraints. An improved PSO algorithm, in which these parameters change with the iteration number, is therefore proposed to make it better suited to multi-objective problems.

$$ \left\{ \begin{array}{l} \omega^{\prime} = \omega_{\min} + \left( \omega_{\max} - \omega_{\min} \right) \left( \frac{t_{cur}}{t_{\max}} \right)^{2} \\ c^{\prime}_{1} = c_{1i} + \left( c_{1f} - c_{1i} \right) \sqrt{\frac{t_{cur}}{t_{\max}}} \\ c^{\prime}_{2} = c_{2i} + \left( c_{2f} - c_{2i} \right) \left( \frac{t_{cur}}{t_{\max}} \right)^{2} \end{array} \right. $$

(3)

where \(\omega^{\prime}\) is the improved inertia weight coefficient; \(\omega_{\max}\) and \(\omega_{\min}\) are set to 0.9 and 0.2, respectively; \(c^{\prime}_{1}\) and \(c^{\prime}_{2}\) are the improved learning factors; \(t_{cur}\) is the current iteration number; \(t_{\max}\) is the maximum number of iterations; \(c_{1f}\) and \(c_{2f}\) are the final values of \(c_{1}\) and \(c_{2}\), set to 0.5 and 2, respectively; and \(c_{1i}\) and \(c_{2i}\) are their initial values, set to 2 and 0.5, respectively.
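The parameter schedules of Equation (3) can be evaluated with a short Python sketch. The defaults are the values stated above; the function name `pso_schedule` is hypothetical. With these settings \(\omega^{\prime}\) grows from 0.2 to 0.9 over the run, \(c^{\prime}_{1}\) falls from 2 to 0.5, and \(c^{\prime}_{2}\) rises from 0.5 to 2.

```python
import math

def pso_schedule(t_cur, t_max, w_min=0.2, w_max=0.9,
                 c1_i=2.0, c1_f=0.5, c2_i=0.5, c2_f=2.0):
    """Return (w', c1', c2') from Eq. (3) for iteration t_cur of t_max (hypothetical helper)."""
    s = t_cur / t_max                          # progress through the run, 0 ... 1
    w = w_min + (w_max - w_min) * s ** 2       # inertia weight, quadratic in s
    c1 = c1_i + (c1_f - c1_i) * math.sqrt(s)   # first learning factor, square-root schedule
    c2 = c2_i + (c2_f - c2_i) * s ** 2         # second learning factor, quadratic schedule
    return w, c1, c2

for t in (0, 50, 100):
    print(t, pso_schedule(t, 100))
```

The square-root schedule makes \(c^{\prime}_{1}\) change quickly early in the run, while the quadratic schedules keep \(\omega^{\prime}\) and \(c^{\prime}_{2}\) near their initial values until later iterations.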

$$ \overline{P} = \frac{1}{N}\sum\limits_{i = 1}^{N} {P_{ij}^{t} } , $$

(4)

where \(\overline{P}\) is the mean of the personal best positions of all particles, \(N\) is the number of particles, and \(P_{ij}^{t}\) is the personal best position of particle \(i\) in dimension \(j\) at iteration \(t\). The velocity update of the improved algorithm is:

$$ \upsilon_{ij}^{t+1} = \omega^{\prime} \upsilon_{ij}^{t} + c^{\prime}_{1} r_{1} \left( \overline{P} - x_{ij}^{t} \right) + c^{\prime}_{2} r_{2} \left( P_{gj}^{t} - x_{ij}^{t} \right), $$

(5)

where \(\upsilon_{ij}^{t+1}\) is the velocity of particle \(i\) in dimension \(j\) at iteration \(t+1\); \(t\) is the current iteration number; \(r_{1}\) and \(r_{2}\) are random numbers in the interval [0, 1]; \(x_{ij}^{t}\) is the position of particle \(i\) at iteration \(t\); and \(P_{gj}^{t}\) is the best position found so far by the whole swarm (the global best).
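Equations (3) to (5) can be combined into a compact optimiser. The NumPy sketch below is an illustration under stated assumptions, not the authors' code: the function name `improved_pso`, the swarm size, the search bounds, the random seed and the sphere test function are hypothetical, and the position update \(x^{t+1} = x^{t} + \upsilon^{t+1}\) (clipped to the bounds) is the standard PSO rule, which this section does not state explicitly.

```python
import numpy as np

def improved_pso(objective, dim, bounds, n_particles=30, t_max=100,
                 w_min=0.2, w_max=0.9, c1_i=2.0, c1_f=0.5, c2_i=0.5, c2_f=2.0, seed=0):
    """Minimise `objective` with the mean-best PSO variant of Eqs. (3)-(5) (sketch)."""
    rng = np.random.default_rng(seed)
    lo, hi = bounds
    x = rng.uniform(lo, hi, size=(n_particles, dim))   # particle positions
    v = np.zeros_like(x)                                # particle velocities
    pbest = x.copy()                                    # personal best positions
    pbest_val = np.array([objective(p) for p in x])
    g = pbest[np.argmin(pbest_val)].copy()              # global best position
    for t_cur in range(t_max):
        s = t_cur / t_max
        w = w_min + (w_max - w_min) * s ** 2            # Eq. (3)
        c1 = c1_i + (c1_f - c1_i) * np.sqrt(s)
        c2 = c2_i + (c2_f - c2_i) * s ** 2
        p_mean = pbest.mean(axis=0)                     # Eq. (4): mean of personal bests
        r1 = rng.random(x.shape)
        r2 = rng.random(x.shape)
        v = w * v + c1 * r1 * (p_mean - x) + c2 * r2 * (g - x)   # Eq. (5)
        x = np.clip(x + v, lo, hi)                      # assumed standard position update
        vals = np.array([objective(p) for p in x])
        improved = vals < pbest_val                     # update personal bests
        pbest[improved] = x[improved]
        pbest_val[improved] = vals[improved]
        g = pbest[np.argmin(pbest_val)].copy()
    return g, pbest_val.min()

# Usage on a hypothetical test function (2-D sphere)
best_x, best_val = improved_pso(lambda p: float(np.sum(p ** 2)), dim=2, bounds=(-5.0, 5.0))
```

Because \(\overline{P}\) replaces each particle's own best in the second term of Eq. (5), every particle is pulled toward the swarm's average experience as well as toward the global best.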

Bidirectional long short-term memory neural network

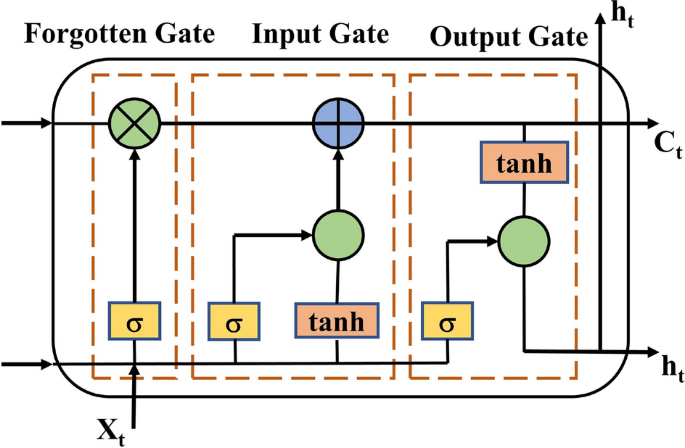

Long short-term memory (LSTM)24 is a deep neural network that learns long-term dependencies accurately and efficiently. Its gating mechanism lets the model selectively retain and pass on information across long time series25. As shown in Figure 1, an LSTM unit consists of three gates (an input gate, an output gate, and a forget gate) and one core computation node, the memory cell. The forget, input, and output gates jointly control the cell state, selectively adding information to it or removing information from it.
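This section describes the gates only in words. For reference, a minimal NumPy sketch of one step of a standard LSTM cell follows; the gate equations are the common textbook formulation rather than ones given in this paper, and the stacked-weight layout and gate order (input, forget, output, candidate) are assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One step of a standard LSTM cell.

    W: (4H, D) input weights, U: (4H, H) recurrent weights, b: (4H,) bias,
    with rows stacked in the assumed order input, forget, output, candidate.
    """
    H = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b
    i = sigmoid(z[0:H])           # input gate: how much new information to write
    f = sigmoid(z[H:2 * H])       # forget gate: how much of the old cell state to keep
    o = sigmoid(z[2 * H:3 * H])   # output gate: how much of the cell state to expose
    g = np.tanh(z[3 * H:4 * H])   # candidate values for the cell state
    c_t = f * c_prev + i * g      # cell state update
    h_t = o * np.tanh(c_t)        # hidden state (unit output)
    return h_t, c_t
```

Setting the forget-gate bias strongly negative, for example, drives \(f\) toward 0 so the previous cell state is discarded, which is the "remove information" role described above.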

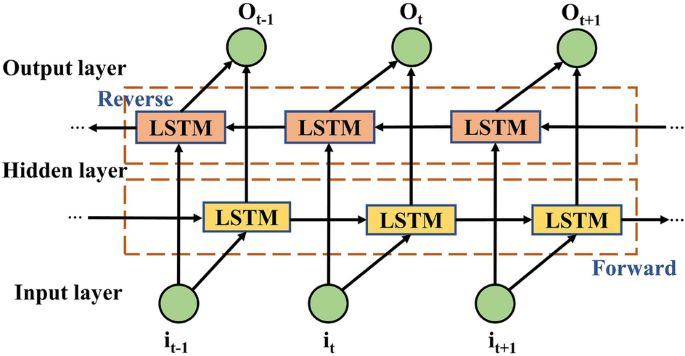

The BiLSTM26 network consists of a forward LSTM and a backward LSTM, which process the time series in the forward and reverse directions, respectively. Training in both directions makes the extracted features more comprehensive and complete. The structure of the BiLSTM27 is shown in Figure 2.

In Figure 2, the output \(\vec{h}_{t}\) of the forward LSTM layer and the output \(\mathop{h}\limits^{\leftarrow} _{t}\) of the backward LSTM layer are weighted and fused to obtain the final predicted power \(O_{t}\). The BiLSTM equations are:

$$ \vec{h}_{t} = \overrightarrow{L_{LSTM}}\left( \vec{h}_{t-1}, i_{t} \right),\quad t = 1, 2, \ldots, n $$

(6)

$$ \mathop{h}\limits^{\leftarrow}_{t} = \overleftarrow{L_{LSTM}}\left( \mathop{h}\limits^{\leftarrow}_{t+1}, i_{t} \right),\quad t = 1, 2, \ldots, n $$

(7)

$$ O_{t} = f\left( W_{\vec{h}} \vec{h}_{t} + W_{\mathop{h}\limits^{\leftarrow}} \mathop{h}\limits^{\leftarrow}_{t} + b_{t} \right), $$

(8)

where \(i_{t}\) is the input feature vector; \(\vec{h}_{t}\) and \(\mathop{h}\limits^{\leftarrow}_{t}\) are the forward and backward power predictions; \(\overrightarrow{L_{LSTM}}(\cdot)\) and \(\overleftarrow{L_{LSTM}}(\cdot)\) denote the forward and backward LSTM computations of the network; \(W_{\vec{h}}\) and \(W_{\mathop{h}\limits^{\leftarrow}}\) are the weight matrices connecting the forward and backward outputs to the output layer; \(b_{t}\) is the output-layer bias; \(f(\cdot)\) is the activation function of the output layer; and \(O_{t}\) is the final predicted output power of the network.
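The forward pass, backward pass and weighted fusion can be sketched in NumPy as follows. This is an illustration with random weights, not the trained model from this study: the cell is the standard LSTM formulation, the helper names `run_lstm` and `bilstm_predict` are hypothetical, the output activation \(f\) defaults to the identity (an assumption suited to regression), and the fusion combines \(\vec{h}_{t}\) and \(\mathop{h}\limits^{\leftarrow}_{t}\) at the same time step, as described in the text.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h, c, W, U, b):
    # Standard LSTM cell; assumed gate order: input, forget, output, candidate
    H = h.shape[0]
    z = W @ x_t + U @ h + b
    i, f, o = sigmoid(z[:H]), sigmoid(z[H:2 * H]), sigmoid(z[2 * H:3 * H])
    c = f * c + i * np.tanh(z[3 * H:])
    return o * np.tanh(c), c

def run_lstm(xs, params, reverse=False):
    """Run one LSTM over xs (n x D); return hidden states in original time order."""
    W, U, b = params
    H = U.shape[1]
    h, c = np.zeros(H), np.zeros(H)
    out = np.zeros((len(xs), H))
    order = range(len(xs) - 1, -1, -1) if reverse else range(len(xs))
    for t in order:
        h, c = lstm_step(xs[t], h, c, W, U, b)
        out[t] = h
    return out

def bilstm_predict(xs, fwd, bwd, W_f, W_b, b_o, f=lambda z: z):
    h_fwd = run_lstm(xs, fwd)                        # Eq. (6): forward pass
    h_bwd = run_lstm(xs, bwd, reverse=True)          # Eq. (7): backward pass, t = n ... 1
    return f(h_fwd @ W_f.T + h_bwd @ W_b.T + b_o)    # Eq. (8) at every time step

# Usage with random (untrained) weights
rng = np.random.default_rng(0)
D, H, n = 3, 4, 5
def random_params():
    return rng.normal(size=(4 * H, D)), rng.normal(size=(4 * H, H)), rng.normal(size=4 * H)
fwd, bwd = random_params(), random_params()
W_f, W_b, b_o = rng.normal(size=(1, H)), rng.normal(size=(1, H)), np.zeros(1)
xs = rng.normal(size=(n, D))
y = bilstm_predict(xs, fwd, bwd, W_f, W_b, b_o)      # one predicted value per time step
```

Because the backward pass starts at the last time step, the prediction at step \(t\) depends on later inputs as well as earlier ones, which a single forward LSTM cannot provide.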