A creepy account that almost certainly uses AI to generate videos of fictitious New Yorkers criticizing Mayor-elect Zohran Mamdani raises the frightening prospect that deepfakes could be used to impersonate not just politicians but voters.

Accounts on several social media platforms, which use similar profile pictures and appear to be linked, call themselves “Citizens Against Mamdani.” In recent days, these accounts have filled up with confessions and rants from “New Yorkers” accusing Mamdani of anti-Americanism, plans to raise taxes, and false promises on rent and transportation. They seem to be trying to emulate New York’s diversity, and many of their videos feature classic New York accents.

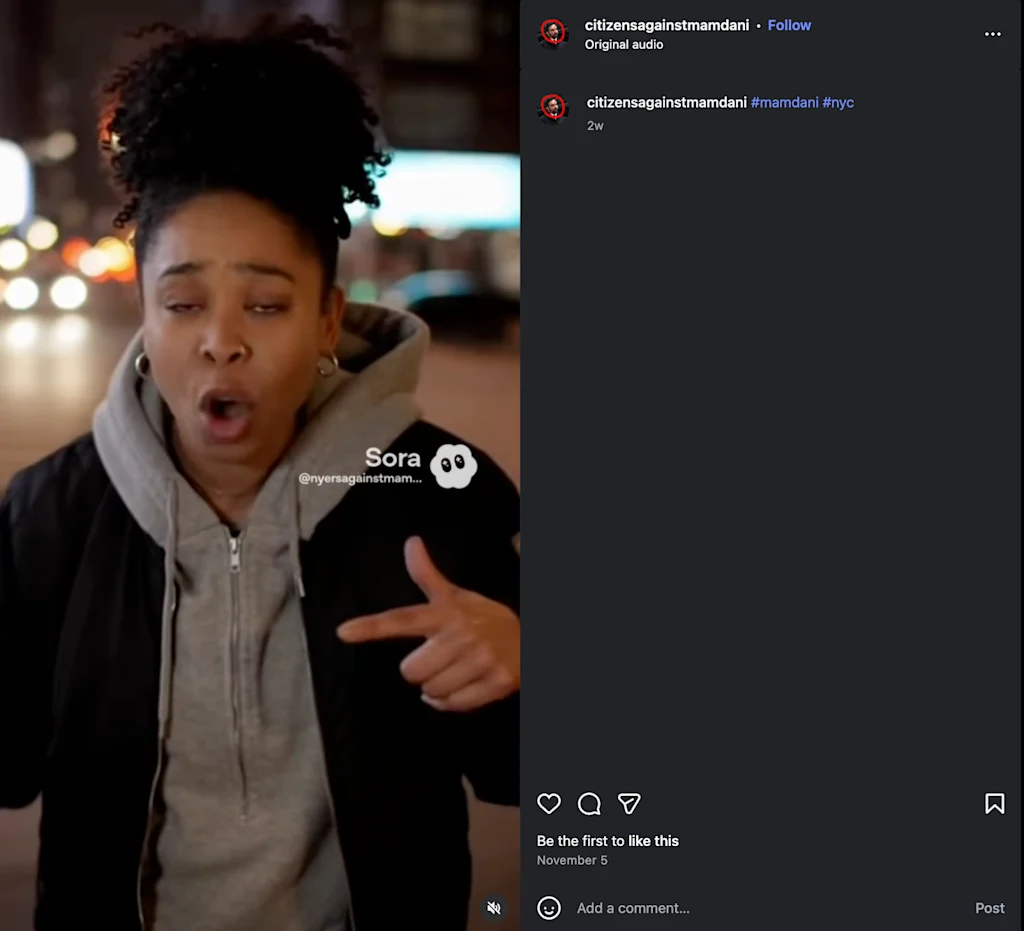

None of the videos went viral, but some of those posted to TikTok, Twitter, and Instagram racked up tens of thousands of views. The TikTok account itself has about 30,000 likes. Fast Company reached out to the accounts' Instagram and TikTok pages but did not receive a response by the time of publication.

“Last election cycle, hiring human influencers to spread certain messages was all the rage. Now you don’t need someone like that on your team,” explains Emmanuel Saliba, principal research officer at GetReal Security, a cybersecurity firm that analyzes deepfakes. “GenAI has made such great strides that campaigns and activists will be able to use text-to-video conversion to create hyper-realistic videos of their supporters and detractors, and online consumers will also become increasingly savvier,” she added.

This online campaign shows how generative AI has essentially democratized astroturfing. “Astroturfing is automated and almost undetectable without technology,” Saliba said. This is a notable evolution from previous election cycles, when it was more common for political operatives to hire influencers, she added.

Using online tools to create a false impression of support for or opposition to a cause or candidate is not new. For example, in 2017, bots were deployed to submit comments to the Federal Communications Commission, which was considering new rules on net neutrality at the time. However, these types of campaigns typically required at least some human effort, such as running a network of social media accounts and hiring influencers.

The rise of generative AI has made it much easier to create the illusion of political popularity online. Now, with just a few prompts and access to the right platform, you can easily generate videos of realistic groups of people.

Mirage

Of course, one of the challenges of deepfake detection is that there is no completely reliable way to confirm that a video was generated by AI. But the evidence in the anti-Mamdani videos is overwhelming.

In addition to some videos displaying the Sora watermark (a label created by OpenAI to indicate content created with the company’s technology), these accounts publish many similar videos around the same time.

Another big clue is the objects in the background of the image, said Shiwei Liu, a computer science professor at the University at Buffalo who studies deepfakes.

Reality Defender, another company that investigates AI-generated content, analyzed some of the videos using its platform, RealScan, and found that they were highly likely to have been manipulated. The company rated one video, which shows a man in a blue hat shouting, “We all got fooled by Mamdani,” as having a 99% chance of being a deepfake. (A score of 100% is not possible, because there is no way to truly verify how a piece of content was created.)

It’s unclear how many people actually bought into the videos, but the comments on them suggest that at least some online users are taking them seriously. “The videos present the illusion of widespread support for and against an issue, and the people in the videos are ordinary people, so it’s difficult to verify their existence,” Liu said. “This is yet another dangerous form of AI-driven disinformation campaign.”

Large-scale astroturfing

These accounts are a reminder that the cost of producing disinformation has never been lower. In the past, manufacturing support for a specific objective required real effort, such as investing in content that looked authentic and realistic, explains Alex Lisle, chief technology officer at Reality Defender.

“Now you can ask your LLM to come up with what to say, defining the emotion or message you’re trying to give them,” says Lisle. “And now we can do it at a scale that previously would have taken hours and create hundreds of different citations, thousands of different citations, very quickly,” he added.

By combining deepfakes with large language models, political operatives can generate not only countless scripts for what the deepfakes say, but also videos of realistic-looking people speaking in convincing voices to spread those stories. “You’re doubling down on power right now,” Lisle continued. “It used to take multiple people and hours of effort to do this. Now it just costs more computers.”

GetReal’s Saliba emphasized that the problem extends beyond politics. While Mamdani is one example of a target, the low cost of producing this type of content means that businesses and loved ones could be targeted by similar disinformation campaigns in the future.
