Summary: Loneliness, especially among older people, is a growing public health crisis. Researchers have discovered a way to make robot companions more “human” and emotionally resonant: combining empathic AI-powered speech with music. The study found that when a robot uses music alongside sensitive dialogue, it creates a stronger emotional connection and increases the machine’s perceived empathy.

However, the effect may fade as users become accustomed to the music, suggesting that the next generation of social robots will have to be “quantum-inspired,” meaning they will need to be able to adapt to the fluid, ambiguous, and ever-changing nature of human emotions to provide continuous companionship.

Key facts

- Multifaceted empathy: Combining music with empathetic speech significantly increases a person’s perception that the robot is lifelike and socially present.

- The “counselor” effect: The robot's use of music mirrors the way human therapists play music to comfort clients, making the interaction feel like a real conversation with a personality.

- Overcoming familiarity: The emotional impact of music may diminish over time, so robots should learn to personalize and vary their music and conversation choices.

- Quantum-inspired models: Researchers are exploring quantum-inspired models to capture the ambiguity of human emotions, treating emotions as probabilistic states that change depending on the situation.

- Real world application: The technology is specifically designed for mental health support, elderly care and education, providing meaningful connections for isolated people.

Source: PolyU

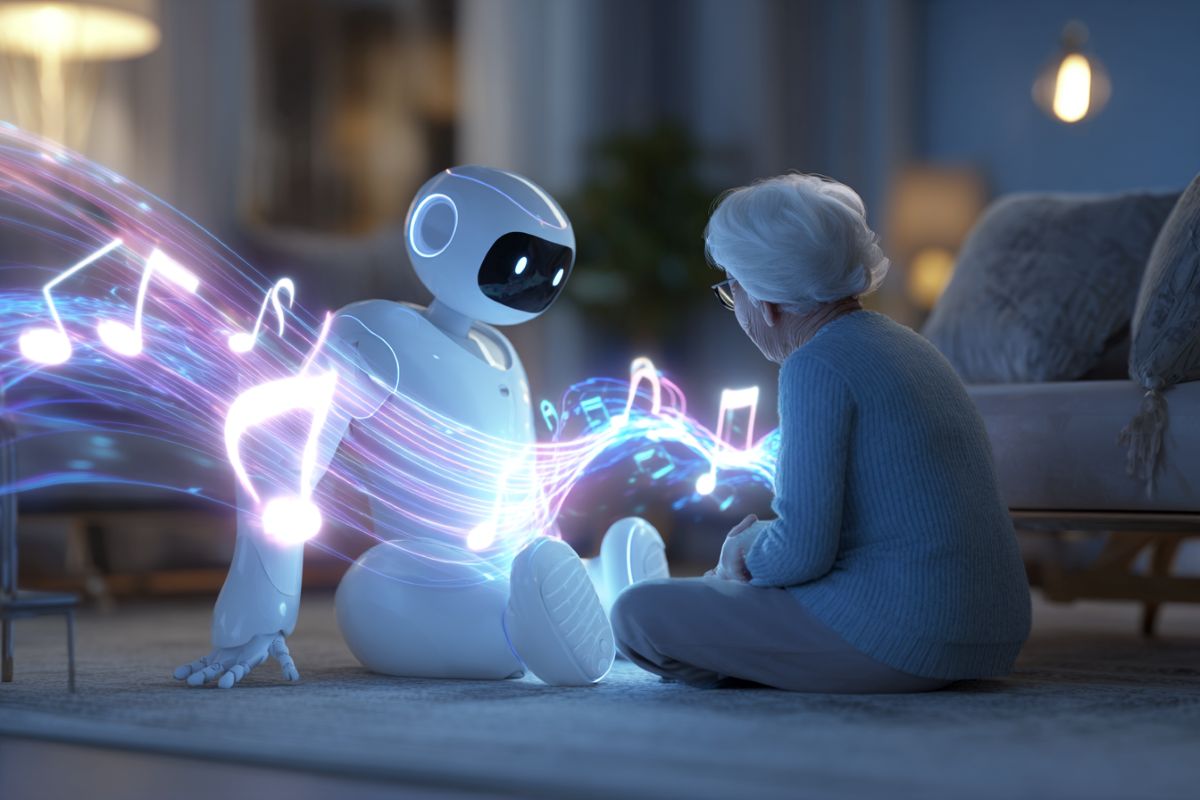

Loneliness has a significant impact on the mental health of the population, especially the elderly. Robots that can recognize and respond to human emotions can serve as uplifting and heartwarming companions.

A research team at the Hong Kong Polytechnic University (PolyU) has discovered that combining the power of music and empathetic speech in artificial intelligence (AI)-powered robots could foster stronger bonds between humans and machines.

These findings highlight the importance of a multimodal approach in the design of empathic robots and have important implications for robot applications in health assistance, elderly care, education, etc.

The research project, “Speaking musical robots in multiple interactions,” was led by Professor Johan HOORN, Interfaculty Full Professor of Social Robotics in PolyU’s School of Design and School of Computing, in collaboration with Dr. Ivy HUANG from the Chinese University of Hong Kong.

This study investigated how music and empathetic speech can enhance the emotional resonance of on-screen robots, revealing that music can act as a powerful adjunct to empathetic speech.

As part of the study, the team investigated how Cantonese-speaking participants interacted with an empathetic robot across three interactive sessions. The results showed that combining music with empathetic speech significantly increased how empathic participants perceived the machine to be.

“Our data show that the presence of music continued to increase the robot’s human likeness in subsequent sessions,” Professor Hoorn explained.

“One interpretation is that the music made the interaction feel like an actual conversation with a personality, similar to what a human counselor might do by playing music to comfort a client. As a result, the robot seemed more alive or socially present.”

However, the study notes that the influence of music can fade over time as participants grow accustomed to it across repeated sessions, highlighting the importance of tailoring interaction strategies to the needs of individual users to maintain effective human-robot interaction.

The study suggests that empathic robots should be designed to adapt their responses to user feedback and context, for example by varying musical elements or gradually personalizing the content of interactions, so that they remain empathically relevant over time.
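The adaptation described above can be pictured with a small sketch. It is purely illustrative and is not the researchers' method: the class name `MusicSelector`, the track labels, and the `habituation` penalty are all hypothetical. The idea is that a robot scores each track by the listener's average feedback, subtracts a penalty that grows each time the track is repeated, and so drifts toward fresh material as familiarity sets in.

```python
import random


class MusicSelector:
    """Epsilon-greedy track choice with a habituation penalty (illustrative only)."""

    def __init__(self, tracks, epsilon=0.1, habituation=0.5, seed=0):
        self.tracks = list(tracks)
        self.epsilon = epsilon          # chance of trying a random track
        self.habituation = habituation  # assumed penalty per repeat play
        self.rng = random.Random(seed)
        self.mean_rating = {t: 0.0 for t in self.tracks}
        self.plays = {t: 0 for t in self.tracks}

    def score(self, track):
        # Average feedback so far, minus a penalty that grows with repetition.
        return self.mean_rating[track] - self.habituation * self.plays[track]

    def choose(self):
        # Occasionally explore; otherwise pick the best-scoring track.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.tracks)
        return max(self.tracks, key=self.score)

    def update(self, track, rating):
        # Incremental running mean of the listener's ratings for this track.
        self.plays[track] += 1
        n = self.plays[track]
        self.mean_rating[track] += (rating - self.mean_rating[track]) / n


# Hypothetical usage: six sessions in which the listener always rates 1.0.
selector = MusicSelector(["piano", "strings", "ambient"], epsilon=0.0)
history = []
for _ in range(6):
    track = selector.choose()
    selector.update(track, rating=1.0)
    history.append(track)
print(history)
```

Even with uniformly positive feedback, the penalty eventually pushes the selector away from a track it has played repeatedly, which is one simple way to model varying the music before its effect wears off.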

“Our research shows the importance of multimodal communication, including music and speech, in empathic robots. This holds great promise for applications in real-world settings, particularly in the fields of mental health support and elderly care,” Professor Hoorn emphasized.

“The integration of empathic robots that can provide customized musical experiences and conduct sensitive conversations could provide meaningful companionship and emotional support to individuals who may experience loneliness and social isolation.”

Professor Hoorn is also leading another project, “Social Robots with Embedded Large Language Models to Relieve Stress in the Hong Kong Population”, which has received over HK$40 million in funding from the Research Grants Council’s Theme-based Research Scheme.

Professor Hoorn, who also serves as Deputy Director of the PolyU Institute for Quantum Technology, will explore quantum-inspired models of human emotion to better capture and address the ambiguity inherent in emotional experiences.

Unlike traditional computational systems, which struggle with the fluid and context-dependent nature of emotional responses, quantum models can represent emotional states as probabilistic superpositions, reflecting the true uncertainty and complexity of human emotions.
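To make the idea of a “probabilistic superposition” concrete, here is a minimal sketch. It is an illustration under stated assumptions, not the team's model: the two-emotion basis (`calm`, `anxious`), the function names, and the choice to represent context as a rotation are all hypothetical. An emotional state is stored as a pair of amplitudes, the probability of each emotion is the squared magnitude of its amplitude, and a change of context shifts those probabilities without ever forcing the state to be purely one emotion.

```python
import math

# Hypothetical two-emotion basis; the labels are illustrative, not from the study.
BASIS = ("calm", "anxious")


def normalize(amplitudes):
    """Scale the amplitudes so the squared magnitudes sum to 1."""
    norm = math.sqrt(sum(abs(a) ** 2 for a in amplitudes))
    return [a / norm for a in amplitudes]


def probabilities(amplitudes):
    """Probability of each basis emotion = squared magnitude of its amplitude."""
    return [abs(a) ** 2 for a in normalize(amplitudes)]


def rotate(amplitudes, theta):
    """Model a change of context as a rotation that mixes the two emotions."""
    a, b = amplitudes
    return [
        math.cos(theta) * a - math.sin(theta) * b,
        math.sin(theta) * a + math.cos(theta) * b,
    ]


# An ambiguous state: equally "calm" and "anxious" until the context resolves it.
state = normalize([1.0, 1.0])
print(dict(zip(BASIS, probabilities(state))))

# An assumed context shift (for example, a stressful topic) tilts the balance.
shifted = rotate(state, math.pi / 8)
print(dict(zip(BASIS, probabilities(shifted))))
```

Because a rotation preserves the length of the amplitude vector, the probabilities still sum to 1 after the context shift; only their balance changes.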

“What excites me most is the possibility of developing social robots that not only recognize but embrace the complexity of human emotion. These robots have the potential to provide support that is as adaptable, free, and compassionate as the individuals they are designed to assist,” added Professor Hoorn.

Answers to key questions:

answer: Music is a universal emotional language. When a robot combines this with empathetic speech, it sends a signal to the human brain that the machine “understands” the mood. It turns mechanical interactions into shared emotional experiences.

answer: That is the current challenge. The study found that “empathy fades” as people become accustomed to the robot’s patterns. To solve this, researchers are developing AI that can sense when a user is bored or accustomed to a particular song and switch to a new, more relevant song.

answer: Human emotions are not just “happy” or “sad” but are complexly layered. Quantum-inspired models allow robots to process emotions as complex, changing states rather than just binary data, making their responses more compassionate and less robotic.

Editorial note:

- This article was edited by the editors of Neuroscience News.

- Journal articles were reviewed in full text.

- Additional context added by staff.

About this robotics and neurotechnology research news

Author: Iris Rai

Source: PolyU

Contact: Iris Rai – PolyU

Image: The image is credited to Neuroscience News

Original research: Open access.

“Speaking musical robots in multiple interactions: After bonding and empathy disappear, relevance and realism emerge” by Johan Hoorn and Ivy Huang. ACM Transactions on Human-Robot Interaction

DOI:10.1145/3758102

Abstract

Speaking musical robots in multiple interactions: After bonding and empathy disappear, relevance and realism emerge

This study investigates the evolution of the user experience over three repeated interactions with an on-screen NAO robot designed to express artificial empathy through verbal communication and music.

The numbers of participants in the three interactions were N1 = 139, N2 = 129, and N3 = 121, with 121 participants completing all sessions. During the interaction, the robot gave empathic feedback to the participants and played music as a sign of empathy.

Repeated-measures MANCOVA and structural equation modeling revealed that participants’ initial tendency to bond with the robot and their perception of it as empathic faded over time. Instead, a trend emerged in which the robot was seen as more personally relevant and, surprisingly, its design as more human-like.

When the robot offered only empathic conversation or only music, participants were disappointed in its abilities, as evidenced by increased levels of negative valence. When the robot played music while also speaking empathetically, perceptions of bonding and empathy increased, creating a mutually reinforcing effect.

Initially, for the loneliest people, the mere presence of the robot was more influential in determining the robot’s relevance to their concerns than the robot’s empathic behavior.

These results highlight the importance of a multimodal approach in the design of empathic robots.