An AI startup has introduced what it says is the world's first video-to-video model that can transform live footage in real time.

Mirage is made by the startup Decart, which aims to make a big splash in the live-streaming industry by building on the booming AI video market led by Google's Veo and OpenAI's Sora.

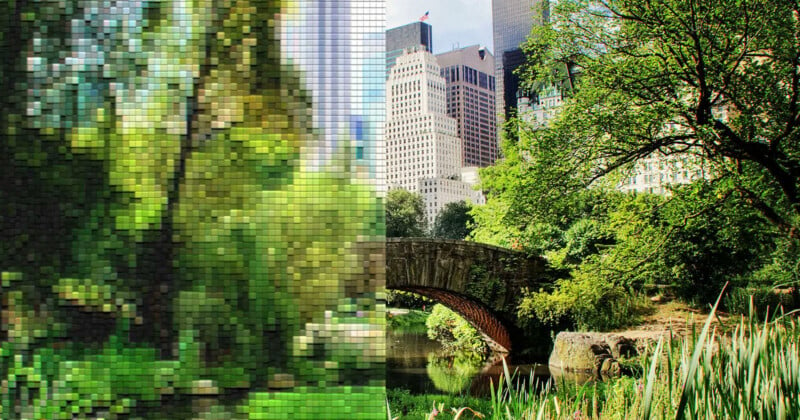

This week, Decart launched its Mirage website and app, which let users transform YouTube clips. The service offers preset themes such as “comic book”, “K-Pop”, “Egyptian Pyramids” and “Steampunk”.

According to Decart, Mirage can operate at 20 frames per second at standard web resolution, with a processing delay of approximately 100 milliseconds per frame. Given sufficient hardware and cloud infrastructure, the system could enable new uses for live streaming, video conferencing, and other real-time applications. The company also plans to extend the model to support full HD and 4K resolution.

Wired notes that transforming live video in real time requires a lot of computational power. Decart optimized low-level code to maximize performance on NVIDIA hardware, allowing Mirage to generate video at 20 frames per second at a resolution of 768 x 432, with a latency of 100 milliseconds per frame.
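To put the reported figures in perspective, the short Python sketch below works through the arithmetic. It is only an illustration: the `StreamSpec` class and `pixel_scale` helper are hypothetical names, the "frames in flight" reading assumes the 100-millisecond figure is an end-to-end delay for each frame, and raw pixel count is at best a rough proxy for how much extra work full HD or 4K would require.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StreamSpec:
    """Hypothetical container for the figures quoted in the article."""
    width: int
    height: int
    fps: int
    latency_ms: float

    @property
    def frame_interval_ms(self) -> float:
        # Time between consecutive output frames.
        return 1000.0 / self.fps

    @property
    def pixels_per_frame(self) -> int:
        return self.width * self.height

    @property
    def pixels_per_second(self) -> int:
        return self.pixels_per_frame * self.fps

    @property
    def frames_in_flight(self) -> float:
        # Assumption: latency is the end-to-end delay of one frame, so this
        # is how many frame intervals pass before a frame comes out.
        return self.latency_ms / self.frame_interval_ms


def pixel_scale(target_width: int, target_height: int, base: StreamSpec) -> float:
    """Ratio of pixels per frame at a target resolution versus the base."""
    return (target_width * target_height) / base.pixels_per_frame


# Figures as reported: 768 x 432 at 20 fps with 100 ms latency per frame.
reported = StreamSpec(width=768, height=432, fps=20, latency_ms=100.0)

print(f"frame interval:   {reported.frame_interval_ms} ms")
print(f"pixels per frame: {reported.pixels_per_frame}")
print(f"frames in flight: {reported.frames_in_flight}")
print(f"full HD factor:   {pixel_scale(1920, 1080, reported)}x")
print(f"4K factor:        {pixel_scale(3840, 2160, reported)}x")
```

At 20 frames per second a new frame is due every 50 milliseconds, so a 100-millisecond delay corresponds to roughly two frame intervals. Full HD carries 6.25 times as many pixels per frame as 768 x 432, and 4K carries 25 times as many.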

Introducing MirageLSD: The First Live-Stream Diffusion (LSD) AI Model

Input any video stream, from a camera or video chat to a computer screen or game, and transform it into any world you want, in real time (<40ms latency).

Here's how it works (with a demo you can try!): pic.twitter.com/1o5ggspbz6

– Decart (@decartai) July 17, 2025

Unlike previous image and video generation models that struggled with instability and visual artifacts, Mirage generates video frame by frame, conditioning each frame on the previous frame and on a text prompt in which the user describes the desired visual style. This autoregressive approach aims to maintain consistency of movement, an area where diffusion-based models often encounter limitations.
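The frame-by-frame conditioning described above can be pictured as a simple loop that carries the last generated frame forward. The Python sketch below is a toy illustration, not Decart's implementation: `stylize_stream` and `toy_model` are hypothetical names, frames are reduced to short lists of numbers, passing the incoming frame to the model is an assumption based on the video-to-video description, and the stand-in model ignores the prompt and merely averages the incoming frame with the previous output.

```python
from typing import Callable, Iterable, Iterator, List, Optional

Frame = List[float]  # toy frame: a flat list of pixel intensities

# A model takes (incoming frame, previous output or None, prompt).
Model = Callable[[Frame, Optional[Frame], str], Frame]


def toy_model(frame: Frame, prev_output: Optional[Frame], prompt: str) -> Frame:
    """Hypothetical stand-in for a real generator.

    It ignores the prompt and averages the incoming frame with the previous
    output, which is enough to show how state flows between frames.
    """
    if prev_output is None:
        return list(frame)
    return [0.5 * new + 0.5 * old for new, old in zip(frame, prev_output)]


def stylize_stream(frames: Iterable[Frame], prompt: str, model: Model) -> Iterator[Frame]:
    """Autoregressive loop: each output is conditioned on the previous output."""
    prev_output: Optional[Frame] = None
    for frame in frames:
        output = model(frame, prev_output, prompt)
        yield output  # emit immediately, as a live stream would
        prev_output = output  # carry the generated frame into the next step


# A three-frame "video" with one pixel per frame.
incoming = [[0.0], [1.0], [1.0]]
styled = list(stylize_stream(incoming, "steampunk", toy_model))
print(styled)
```

Because every output depends on the one before it, an abrupt change in the input is smoothed across successive frames in this toy version, which hints at why carrying the previous frame forward can help keep motion consistent.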

“The content is no longer modified, but it's adaptive,” Dean Leitersdorf, founder and CEO of Decart, told CTech. He noted that Mirage could allow viewers to influence how video content looks, in line with the company's broader vision of making video a more interactive and customizable medium.

You can try Mirage now by visiting the Decart website.