Want to scroll through an endless feed of soulless AI-generated video content?

Well, here’s the good news. Following the success of its AI-powered “Vibes” video feed (showing only AI-generated clips) built into the Meta AI app, Meta announced that it is testing a standalone Vibes app, with initial versions currently available in Brazil and Mexico.

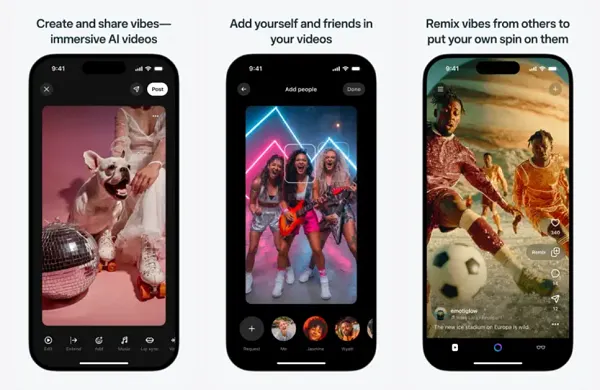

The standalone, full-screen video app separates the Vibes AI video feed from the other elements of the Meta AI app, which mainly relate to pairing Meta’s AI glasses with your device.

So it’s essentially like TikTok, but all the clips are AI.

According to Meta (via Platformer):

“Following the strong traction of Meta AI’s early vibes, we’re testing a standalone app to build on that momentum. We’re seeing users increasingly turning to this format to create, discover, and share AI-generated videos with friends. This standalone app provides a dedicated home for that experience, giving people a more focused and immersive environment.”

As you can see from these examples, Meta has added a variety of editing and customization options to Vibes since its initial release last September, which the company says has helped it gain traction.

In fact, last week, as part of its fourth quarter earnings report, Meta reported that content generated through Meta AI apps tripled year-over-year in Q4 2025.

That last statistic is a bit skewed, though. As mentioned earlier, Meta only released the Vibes feed in the Meta AI app in September, meaning there was no easy way to generate video content like this until Q4. I don’t know, it seems a little misleading to say, “Usage increased rapidly after we made it available to users.” Either way, it’s clear that Meta is happy with the momentum of its AI-generated feed, which is why it’s putting extra emphasis on it right now.

However, a feed made up entirely of AI clips feels a little defeatist, and a little counter to the real purpose of social media, which is to foster human connection.

Generative AI tools are undoubtedly an interesting technological achievement, offering a way for people to explore ideas in entirely new ways, and potentially helping some people realize fascinating concepts.

However, what they produce is not human, and in most cases not original, which limits their creative capacity.

By their very nature, generative AI tools are derivative, regurgitating other content that already exists, and most often lack the humanity, or soul, that makes human-created content truly compelling.

But in reality, it mostly comes down to the concept. You can have AI tools that do everything for you, but if your idea is terrible, the end result will be terrible, no matter how good it looks. This is where AI slop becomes an issue, because most ideas are not good, and most people don’t have the ability to come up with great, engaging, creative videos. Now that content can be created at such scale, people can keep throwing every concept at the wall. Most of it won’t stick, and it will eventually form a giant pile that we all have to sift through.

Fundamentally, I feel that Meta’s approach here should be one that encourages the use of generative AI in conjunction with human input, rather than focusing on producing purely AI-generated content. A dedicated AI content feed seems like the opposite direction to where we should be heading in terms of creating tools that facilitate human interaction. Then again, Meta has hundreds of billions of dollars invested in AI development, so it’s no wonder that Zuck and his team want to say, “Hey, check out all the cool things you can do with this.”

Still, I feel like non-creative engineers are trying to make creativity more codified and more accessible to everyone. Creativity is not rooted in raw logic. It is an expression of the human soul, a translation of people’s lived experience that lets readers and viewers see and feel things from a different perspective.

True creativity is the closest thing to experiencing the world inside someone else’s mind. Can AI reproduce that?