Rather than existing only in research labs, artificial intelligence now shapes the tools people use to connect, work, and create. Meta AI, formerly Facebook AI, drives this transformation by linking research in vision, language, audio, and robotics with applications that reach billions across its platforms.

We analyzed key Meta AI applications and research projects, ranging from personal AI assistants and translation systems to models for media generation, perception, and safety, and selected the following examples to illustrate how Meta leverages research projects for practical use.

Meta AI’s purpose is to advance AI technology and integrate it into Meta’s products, including Facebook, Instagram, WhatsApp, and Messenger. The artificial intelligence division develops models and tools that enable people to interact with personal AI assistants, enhance content across platforms, and create a safer user experience.

Meta AI focuses on natural language processing, reasoning, and multimodal learning, which combines inputs such as text, images, and video. To support these areas, Meta AI has released the LLaMA family of models, which underpin features that enhance content, improve efficiency in online advertising, and strengthen information management across Meta products.

1. Meta AI app

The Meta AI app serves as both a standalone app and an integrated feature within core Meta products. It enables users to chat with their personal AI, ask questions, and generate content using natural language.

Projects such as Seamless Interaction and Seamless Communication support this app by modeling conversational dynamics and improving multilingual communication, allowing more natural and context-aware interactions.

2. Facebook, Instagram, WhatsApp, and Messenger

Within these social media apps, Meta AI offers features such as chat, content generation, and reasoning support.

Models such as Movie Gen and Audiobox enhance media creation by generating images, videos, and audio content. At the same time, Video Seal supports these apps by embedding watermarks in the generated videos, thereby protecting authenticity and enhancing content safety.

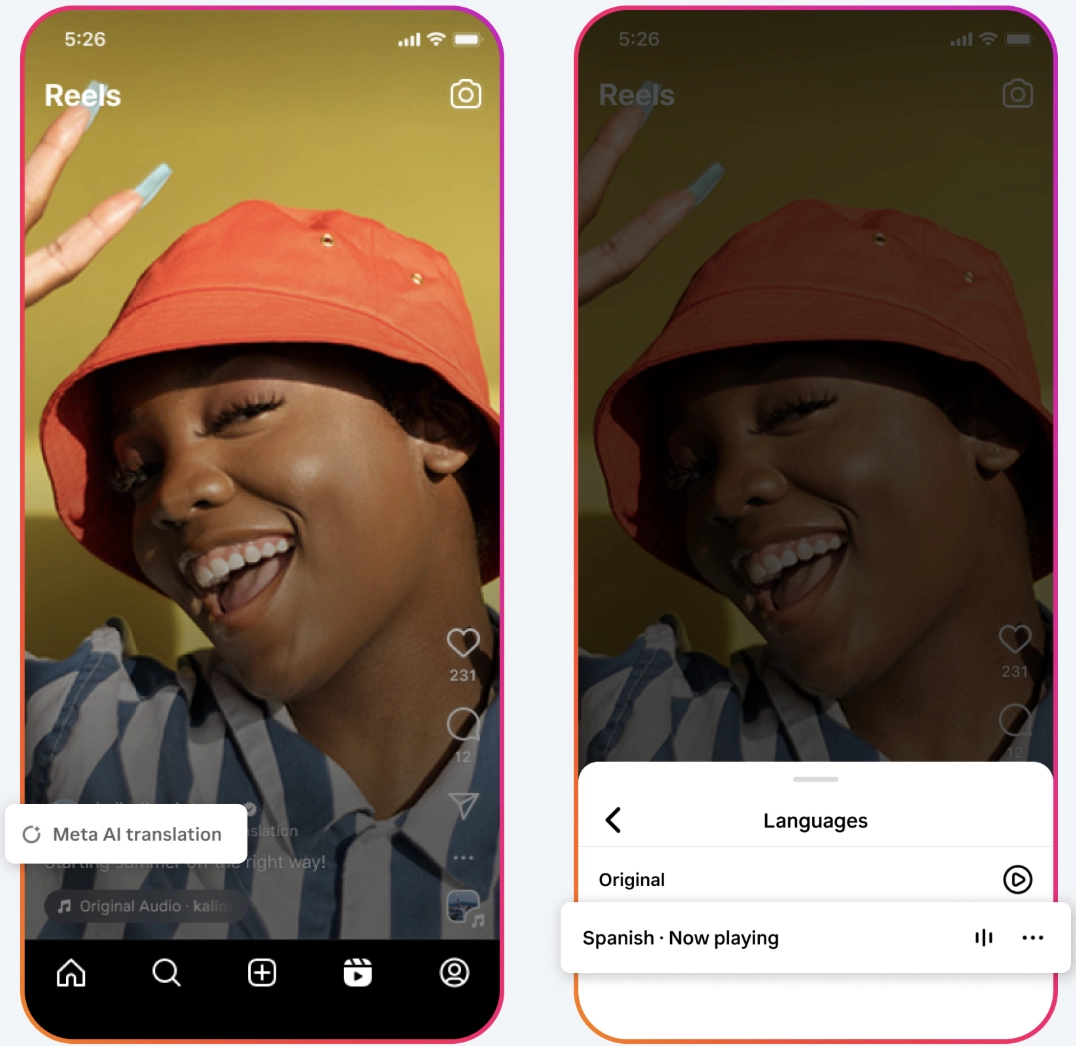

For example, Meta AI is testing a translation tool for Reels that automatically dubs audio and syncs lip movements, allowing viewers to watch content in different languages. The first trials on Instagram and Facebook focus on English and Spanish videos from creators in Latin America and the US, with plans to expand to more languages and regions.

Figure 1: Meta AI translation feature on Instagram Reels.

3. Ray-Ban Meta glasses

Ray-Ban Meta glasses bring Meta AI into a wearable form factor, pairing everyday eyewear design with advanced AI features. They allow users to stay connected while keeping their hands free.

The glasses integrate Meta AI directly, enabling people to ask questions, set reminders, translate text, capture media, and interact with their personal AI assistant naturally.

Across all models, users can:

- Take and make calls hands-free.

- Send and receive messages through integrated apps.

- Ask Meta AI questions with voice prompts, such as “Hey Meta.”

- Get personalized recommendations, such as the best time to take photos.

- Translate text in real time, with the translation shown directly on the built-in screen of the display model.

- Capture Ultra HD photos and videos with hands-free voice controls.

- Listen to music while staying aware of their surroundings through open-ear Bluetooth speakers.

The glasses feature privacy enhancements, including built-in capture LEDs that indicate when recording is active. They also integrate with various apps and account settings, enabling users to manage their preferences and maintain control over their data.

Ray-Ban Meta glasses and their variations highlight how Meta is combining AI research in vision and perception, such as DINOv3 and Segment Anything 2, with consumer devices. This integration brings advanced computer vision and natural interaction into people’s daily lives, making the glasses both a fashion accessory and an advanced AI device.

4. Meta AI on the web

Meta AI is also accessible through the web, offering tools that help users boost productivity and develop creative projects directly in the browser. The web experience is designed to combine advanced AI features with an intuitive desktop interface.

- Video restyling: Users can transform or adjust videos by entering simple prompts. Preset options for style, lighting, and effects make editing fast and approachable without specialized skills.

- AI-powered writing: Meta AI can generate complete documents that include both text and visuals from a single prompt. This capability supports drafting, revising, and enhancing content, enabling faster completion of writing tasks.

- Image creation and editing: Users can generate images or modify existing ones through customizable presets. Adjustments in style and lighting allow ideas to be turned into polished visuals with minimal effort.

- Productivity support: The enhanced desktop version provides features to help organize and execute projects more efficiently. Whether brainstorming, drafting content, or refining media, users can manage their work with greater ease.

Meta AI on the web extends the reach of Meta products by making AI technology available on any desktop. It complements other platforms, such as Ray-Ban Meta glasses and Meta Quest devices.

5. Meta Quest devices

Meta Quest represents Meta’s line of mixed reality headsets, which combine performance and natural interaction in all-in-one devices. Powered by Meta Horizon OS, these headsets enable users to transition between the physical and virtual worlds, supporting both entertainment and productivity.

- Meta Quest 3: The most advanced model in the lineup, Meta Quest 3 offers twice the GPU power and 30% more memory compared to Quest 2. It delivers 4K resolution with a wider field of view, making experiences sharper and more immersive. Equipped with advanced passthrough and sensors, Quest 3 enables applications that blend real and virtual environments with high fidelity.

- Meta Quest 3S: Designed to make mixed reality more accessible, Quest 3S provides the performance and features of Quest 3 at a lower price point. By using the proven optical stack of Quest 2, it ensures compatibility with the latest Quest 3 apps while maintaining reliable performance for a larger audience.

Meta Quest devices support developers through a shared ecosystem. Every headset is effectively a developer kit, allowing apps to be built once and scaled across multiple devices. Performance guidelines ensure that apps optimize GPU and CPU resources, minimize latency, and maintain consistent frame rates.

Using cameras and depth sensors, the devices create a 3D scan of the environment, allowing virtual objects and scenes to align naturally with the physical space. APIs such as Passthrough, Scene, and Anchor enable spatially aware applications.

Figure 2: Meta Quest 3 headset example.

6. Accounts Center and payment services

Beyond apps and devices, Meta AI supports account settings, payment services, and online advertising.

AI features provide companies with tools to create personalized ad experiences, while also allowing users to manage cookie choices and opt out of optional cookies in the Accounts Center. Research into alignment and safety expands this work into scientific domains, demonstrating how Meta AI is intended not only to enhance content and manage information but also to extend AI technology into new industries.

Meta AI organizes its research into several areas: core learning and reasoning, communication and language, perception, embodiment and actions, alignment, and coding. Within these areas, the team develops projects that test new models, explore applications, and improve Meta products.

Core learning and reasoning

DINOv3

Meta AI has released DINOv3, a self-supervised learning (SSL) model designed to advance computer vision research. It is trained on 1.7 billion images and scales up to 7 billion parameters, making it one of the largest vision models available. Its main objective is to produce high-resolution image features that can be reused across tasks without requiring labeled datasets or extensive fine-tuning.

Key characteristics of DINOv3:

- Performance across domains: The model demonstrates strong results in image classification, semantic segmentation, object detection, and video tracking. For the first time, a single frozen backbone outperforms task-specific supervised systems on several dense prediction benchmarks.

- Scalability: DINOv3 employs SSL methods that eliminate reliance on human-annotated metadata. This reduces training costs and enables scaling both the dataset size and the model size.

- Model family: To address varied computing requirements, DINOv3 includes smaller distilled versions and ConvNeXt alternatives. These variants allow deployment in resource-constrained environments.

- Open resources: Meta AI has released training code, pre-trained backbones, evaluation heads, and documentation under a commercial license to support research and development in computer vision and multimodal applications.
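The "single frozen backbone" idea can be illustrated with a linear probe: features are computed once by an encoder that is never updated, and only a small head is trained on top. The sketch below is a toy illustration under stated assumptions. `frozen_backbone` is a hand-written stand-in (not DINOv3 and not its API), and the dataset is synthetic.

```python
import math
import random

def frozen_backbone(image):
    """Hypothetical stand-in for a pre-trained encoder; it is never updated."""
    mean = sum(image) / len(image)
    spread = max(image) - min(image)
    return [mean, spread]

def train_linear_head(features, labels, epochs=200, lr=0.5):
    """Fit logistic-regression weights on top of the frozen features."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            logit = sum(w * v for w, v in zip(weights, x)) + bias
            pred = 1.0 / (1.0 + math.exp(-logit))
            err = pred - y  # gradient of the log loss w.r.t. the logit
            weights = [w - lr * err * v for w, v in zip(weights, x)]
            bias -= lr * err
    return weights, bias

def predict(weights, bias, x):
    """Return class 1 when the linear score is positive, else class 0."""
    return 1 if sum(w * v for w, v in zip(weights, x)) + bias > 0 else 0

def make_toy_dataset(n=40, seed=0):
    """Synthetic 'images': bright ones are class 1, dark ones are class 0."""
    rng = random.Random(seed)
    images, labels = [], []
    for i in range(n):
        label = i % 2
        base = 0.8 if label else 0.2
        images.append([base + rng.uniform(-0.1, 0.1) for _ in range(16)])
        labels.append(label)
    return images, labels

images, labels = make_toy_dataset()
# Features are extracted once; only the head below is trained.
features = [frozen_backbone(img) for img in images]
weights, bias = train_linear_head(features, labels)
accuracy = sum(predict(weights, bias, f) == y
               for f, y in zip(features, labels)) / len(labels)
```

Because the backbone stays fixed, the same cached features could feed several lightweight heads for different tasks, which is the practical appeal of this setup.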

Applications and early use cases:

- Environmental analysis: The World Resources Institute applies DINOv3 to satellite imagery for monitoring deforestation and restoration projects. Tests show improved canopy height estimation accuracy, with error reduced from 4.1 meters in DINOv2 to 1.2 meters in DINOv3.

- Robotics: NASA’s Jet Propulsion Laboratory is using the model to support robotic exploration, where efficient computing is necessary for running multiple vision tasks simultaneously.
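To put the canopy height figures above in perspective, the short calculation below converts them into an absolute and a relative error reduction. It uses only the two reported numbers; the variable and function names are illustrative.

```python
def relative_reduction(before, after):
    """Fraction by which an error shrinks when going from `before` to `after`."""
    return (before - after) / before

dinov2_error_m = 4.1  # canopy height error reported for DINOv2, in meters
dinov3_error_m = 1.2  # canopy height error reported for DINOv3, in meters

absolute_drop_m = dinov2_error_m - dinov3_error_m
relative_drop = relative_reduction(dinov2_error_m, dinov3_error_m)

# Prints: Absolute drop: 2.9 m, relative drop: 71%
print(f"Absolute drop: {absolute_drop_m:.1f} m, relative drop: {relative_drop:.0%}")
```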

DINOv3 highlights the role of SSL in bridging the gap between large language models and computer vision systems. By removing dependence on labeled data and offering deployment-ready model families, it provides a foundation for research and applied work in fields ranging from healthcare and environmental monitoring to autonomous systems and manufacturing.

Figure 3: DINOv3 performance benchmark results.

V-JEPA 2

V-JEPA 2 (Video Joint Embedding Predictive Architecture 2) is a self-supervised foundation model trained on video data. It is designed to improve visual understanding, prediction, and planning, and demonstrates advanced performance in tasks that require reasoning about motion and future outcomes.

Core capabilities:

- V-JEPA 2 learns from natural videos to understand physical dynamics, enabling it to anticipate actions and environmental changes without explicit supervision.

- The model supports planning for robotic control. By training on 62 hours of robot data from the DROID dataset, it can perform tasks such as reaching, grasping, and pick-and-place in new environments without task-specific training.

- When combined with language modeling, V-JEPA 2 enhances its ability to reason about events and contexts, thereby linking visual and textual information for more accurate predictions.

Architecture and training:

- The model follows a two-phase training process. First, its encoder and predictor are pre-trained through self-supervised learning on large-scale video data.

- It is then fine-tuned on a small set of robot interactions, which enables efficient deployment in robotic systems without requiring extensive demonstrations.
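One generic way to plan with a learned predictor is model-predictive control by random shooting: imagine many candidate action sequences, score each imagined rollout against a goal embedding, execute the first action of the best sequence, and repeat. The sketch below is a toy illustration of that general idea, not necessarily the procedure Meta uses. The `predictor` is a hand-written stand-in, and embeddings are plain 2D points.

```python
import random

def predictor(embedding, action):
    """Hypothetical stand-in for a learned predictor: next embedding given an action."""
    return (embedding[0] + action[0], embedding[1] + action[1])

def distance(a, b):
    """L1 distance between two 2D embeddings."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])

def plan_first_action(current, goal, horizon=3, candidates=200, rng=None):
    """Random shooting: imagine rollouts and keep the first action of the best one."""
    rng = rng or random.Random(0)
    best_cost, best_action = float("inf"), (0.0, 0.0)
    for _ in range(candidates):
        seq = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(horizon)]
        emb, cost = current, 0.0
        for action in seq:
            emb = predictor(emb, action)
            cost += distance(emb, goal)  # cumulative cost favors fast approaches
        if cost < best_cost:
            best_cost, best_action = cost, seq[0]
    return best_action

def run_episode(start, goal, steps=10, seed=0):
    """Re-plan at every step and apply only the first planned action."""
    rng = random.Random(seed)
    state = start
    for _ in range(steps):
        action = plan_first_action(state, goal, rng=rng)
        state = predictor(state, action)  # a real robot would act and re-observe here
    return state

start, goal = (0.0, 0.0), (2.0, -1.5)
final_state = run_episode(start, goal)
```

Re-planning at every step keeps the controller robust to prediction errors, since only the first action of each imagined sequence is ever executed.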

Potential applications:

- Robotics: V-JEPA 2 can be applied to robotic assistants capable of operating in unfamiliar physical environments and performing a variety of tasks.

- Assistive technology: World models like V-JEPA 2 may support wearable devices that provide users with real-time alerts about obstacles or environmental hazards.

Figure 4: V-JEPA 2 benchmark results.

Communication and language

Seamless Interaction

Seamless Interaction is a family of audiovisual motion models designed to capture the dynamics of two-party conversations. These models focus on generating realistic gestures, expressions, and reactions that align with natural human dialogue.

Core capabilities:

- The models are trained on paired conversations and can reproduce behaviors such as nodding, hand gestures, eye contact, and backchanneling.

- They offer controllability in expression, allowing variation in the degree of facial and body gestures.

- Outputs are compatible with both 2D video renderings and 3D avatars, including Codec Avatars.

Training dataset:

- The Seamless Interaction Dataset contains over 4,000 hours of high-resolution recordings of face-to-face interactions.

- It includes contributions from more than 4,000 participants across 65,000 interactions, ranging from casual exchanges to highly expressive scenarios.

- The dataset comprises 5,000 annotated samples that describe emotional states and visual behaviors, along with 1,300 scripted prompts grounded in psychological research.

Applications: The research demonstrates how dyadic motion models can support more natural and expressive AI communication systems. By modeling conversational cues and synchrony, the technology has potential applications in avatars, assistive tools, and other areas where human-like interaction enhances the user experience.

Seamless Communication

Seamless Communication is a suite of research models designed to improve translation across languages while maintaining expression, speed, and accuracy. The goal is to facilitate natural and authentic communication in real time.

Model family:

- SeamlessExpressive: Focuses on preserving vocal style, tone, pauses, and speech rate, aiming to retain emotional expression in translations rather than producing flat, monotone output.

- SeamlessStreaming: Delivers translations with approximately two seconds of latency. It supports speech recognition, speech-to-text, and speech-to-speech translation for nearly 100 input languages and 36 output languages.

- SeamlessM4T v2: An updated foundational model that supports both speech and text translation. It introduces a new architecture for improved consistency between spoken and written outputs.

- Seamless: Combines the capabilities of SeamlessExpressive, SeamlessStreaming, and SeamlessM4T v2 into a single model.

Research and data:

- The models are built on multilingual training and multitask learning, enabling wide language coverage.

- The release includes metadata, tools, and documentation to support open research and collaboration.

Safety measures:

- The models incorporate strategies to reduce hallucinated toxicity in outputs.

- A watermarking system has been added for expressive audio translations to improve accountability.

Figure 5: Seamless, a unified system that combines the multilingual strength of SeamlessM4T v2, the low latency of SeamlessStreaming, and the expressive capabilities of SeamlessExpressive.

Perception

Segment Anything Model 2

Segment Anything Model 2 (SAM 2) is a segmentation system designed to identify and track objects in both images and videos. Building on the first Segment Anything Model, SAM 2 expands capabilities from static images to video streams while maintaining fast inference and interactive performance.

Key capabilities:

- Universal segmentation: Allows users to select objects with simple prompts, such as a click, box, or mask, applicable across both images and video frames.

- Video tracking: Incorporates a memory module that stores object context, enabling the model to follow objects across frames, even when they are partially occluded or disappear temporarily.

- Zero-shot performance: Performs accurately on unfamiliar images and videos without prior task-specific training.

- Efficiency: Designed with streaming inference for real-time interaction, supporting applications that require immediate segmentation results.
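The value of keeping object context across frames can be shown with a deliberately simple tracker. The sketch below is a toy illustration only: it remembers recent positions and extrapolates at constant velocity while the object is hidden. SAM 2's memory module works on learned features rather than coordinates, and the `ToyMemoryTracker` class is hypothetical.

```python
from collections import deque

class ToyMemoryTracker:
    """Remembers recent object positions (hypothetical; not SAM 2's architecture)."""

    def __init__(self):
        self.memory = deque(maxlen=2)  # the last two observed positions
        self.missed = 0                # consecutive frames without a detection

    def update(self, detection):
        """`detection` is an (x, y) position, or None when the object is occluded."""
        if detection is not None:
            self.memory.append(detection)
            self.missed = 0
            return detection
        if not self.memory:
            return None  # nothing seen yet, so nothing to recall
        self.missed += 1
        if len(self.memory) < 2:
            return self.memory[-1]  # no velocity estimate: reuse the last position
        (x0, y0), (x1, y1) = self.memory
        # Occluded: extrapolate from the remembered positions at constant velocity.
        return (x1 + (x1 - x0) * self.missed, y1 + (y1 - y0) * self.missed)

tracker = ToyMemoryTracker()
frames = [(10, 5), (12, 5), None, None, (16, 6)]
track = [tracker.update(d) for d in frames]
# track == [(10, 5), (12, 5), (14, 5), (16, 5), (16, 6)]
```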

Training and dataset:

- SAM 2 was trained using the Segment Anything Video (SA-V) dataset, which includes over 600,000 object mask sequences across 51,000 videos from 47 countries.

- The dataset captures full objects, parts, and complex occlusions, with an emphasis on geographic diversity.

- Meta AI has released the model, training code, and dataset for the research community, along with a fairness evaluation to support transparency.

Applications:

- SAM 2 can be applied in media editing, video generation, and real-time object tracking.

- Its outputs can be integrated with other AI models to enable advanced video manipulation and creative workflows.

- Future extensions may allow for more interactive inputs, supporting broader use in live and streaming environments.

Embodiment and actions

Meta Motivo

Meta Motivo is a behavioral foundation model developed to control virtual humanoid agents across a wide range of full-body tasks. The model is trained with a novel unsupervised reinforcement learning method and demonstrates zero-shot capabilities.

Core capabilities:

- Zero-shot task performance: The model can handle unseen tasks, such as motion tracking, pose reaching, and reward optimization, without requiring fine-tuning.

- Physics-based environment: Behaviors are trained and tested in a simulated environment governed by physical constraints, making them robust to variations and perturbations.

- Prompt flexibility: Tasks can be prompted using different goals, such as tracking a specific motion, achieving a target pose, or optimizing for rewards.

Algorithm and architecture:

- The model uses Forward-Backward Conditional Policy Regularization (FB-CPR), which aligns states, motions, and rewards into a shared latent space.

- It includes an embedding network to represent the agent’s state and a policy network that generates actions based on this representation.

- Training combined large-scale motion capture data with 30 million simulated interaction samples, enabling generalization across diverse behaviors.
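A shared latent space means that each kind of prompt (a target pose, a reference motion, or a reward signal) is turned into a vector of the same shape, which the policy then consumes. The sketch below follows the general forward-backward representation recipe as commonly described, under stated assumptions: `backward_embedding` is a hand-written toy rather than a learned network, and the unit-length normalization is an illustrative choice.

```python
import math

def backward_embedding(state):
    """Hypothetical stand-in for a learned state embedding."""
    x, y = state
    return [x, y, x * y]

def normalize(vec):
    """Scale a vector to unit length (a zero vector is returned unchanged)."""
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

def prompt_from_pose(goal_state):
    """Pose reaching: embed the target state directly."""
    return normalize(backward_embedding(goal_state))

def prompt_from_motion(states):
    """Motion tracking: average the embeddings of the upcoming reference states."""
    embs = [backward_embedding(s) for s in states]
    return normalize([sum(col) / len(embs) for col in zip(*embs)])

def prompt_from_reward(states, rewards):
    """Reward task: reward-weighted average of the state embeddings."""
    embs = [backward_embedding(s) for s in states]
    weighted = [[r * v for v in e] for e, r in zip(embs, rewards)]
    return normalize([sum(col) / len(weighted) for col in zip(*weighted)])

z_pose = prompt_from_pose((3.0, 4.0))
z_motion = prompt_from_motion([(1.0, 0.0), (1.0, 0.0)])
z_reward = prompt_from_reward([(1.0, 0.0), (0.0, 1.0)], [1.0, 0.0])
```

All three prompts land in the same three-dimensional space here, so a single policy conditioned on that vector could in principle serve every task type without retraining.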

Evaluation and results:

- A new humanoid benchmark was developed for assessment, covering motion tracking, pose reaching, and reward-based tasks.

- Meta Motivo reached 61% to 88% of the performance of top retrained task-specific methods and outperformed other unsupervised reinforcement learning and model-based baselines.

- Human evaluations indicated that while some high-performance methods produced less natural behaviors, Meta Motivo generated more human-like movements.

Limitations:

- Fast motions and ground-level activities remain difficult for the model to reproduce accurately.

- Some behaviors exhibit unnatural jittering, showing the need for further refinement.

Figure 6: Motion embeddings grouped by activity type, including jumping, running, and crawling, alongside reward-based tasks.

Media and audio generation

Movie Gen

Movie Gen is a family of foundation models for media creation. These models generate high-quality 1080p videos in multiple aspect ratios with synchronized audio, and they also support instruction-based video editing and personalization from user images.

Core capabilities:

- Text-to-video synthesis, video personalization, and video editing.

- Video-to-audio and text-to-audio generation.

- Personalized outputs, including custom videos created from user-provided images.

Model design:

- The largest model is a 30B parameter transformer with a context length of 73,000 video tokens, capable of generating 16-second videos at 16 frames per second.

- Technical developments include advances in model architecture, latent spaces, training objectives, and inference optimization.

- Scaling in model size, training compute, and pre-training data contributes to state-of-the-art performance across multiple benchmarks.
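The clip length, frame rate, and context length quoted above can be related with a quick calculation. The per-frame figure below is only an average token budget, since video models of this kind typically operate on compressed latent tokens rather than on individual output frames. The variable names are illustrative.

```python
clip_seconds = 16        # maximum clip length stated for the largest model
frames_per_second = 16   # stated frame rate
context_tokens = 73_000  # stated context length in video tokens

total_frames = clip_seconds * frames_per_second
avg_tokens_per_frame = context_tokens / total_frames

# Prints: 256 frames, about 285 tokens per output frame on average
print(f"{total_frames} frames, about {avg_tokens_per_frame:.0f} "
      "tokens per output frame on average")
```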

Audiobox

Audiobox is a foundation model designed for generating and editing audio. Building on earlier work with Voicebox, Audiobox integrates speech, sound effects, and soundscapes into a single system.

Core capabilities:

- Describe-and-generate: Users can provide text prompts to generate speech, voices, or soundscapes, such as “a young woman speaks with a high pitch” or “a running river with birds chirping.”

- Dual input control: Audiobox supports combining a recorded voice sample with a text prompt, enabling restyling of speech to reflect environments (e.g., “in a cathedral”) or emotions (e.g., “sad and slow”).

- Editing through infilling: The model can modify audio by inserting or replacing sounds within an existing clip, such as adding a barking dog into a rain recording.

Responsible release:

- Audiobox includes safeguards against misuse, such as automatic audio watermarking that traces generated audio to its origin and a voice authentication feature in demos to deter impersonation.

- Meta AI has released the model under a research-only license, initially to selected researchers and academic institutions focused on speech and safety studies.

Future applications: Audiobox highlights progress toward general-purpose audio generation. Potential uses include content creation, podcasting, video game design, narration, and integration into conversational AI systems.

Alignment and integrity

Video Seal

Video Seal is a framework for open and efficient video watermarking designed to address the challenges posed by AI-generated content and advanced editing tools. The system embeds imperceptible signals into videos, enabling reliable identification while maintaining visual quality.

Core approach:

- Video Seal jointly trains an embedder and extractor by applying transformations such as video compression during training.

- The method uses a multistage process including image pre-training, hybrid post-training, and extractor fine-tuning.

- Temporal watermark propagation enables the adaptation of any image watermarking model for video without requiring the embedding of watermarks in every frame, thereby improving efficiency.
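Temporal propagation can be pictured as running the image watermarker on only some frames and reusing its output for the frames in between. The sketch below is a minimal illustration under stated assumptions: `toy_embedder` is a hypothetical additive watermarker, frames are flat lists of pixel values, and the step of 4 is an arbitrary choice rather than a value from the Video Seal release.

```python
def toy_embedder(frame, strength=0.01):
    """Hypothetical image watermarker: returns a small additive residual per pixel."""
    return [strength if i % 2 == 0 else -strength for i in range(len(frame))]

def watermark_video(frames, step=4):
    """Compute the residual on every `step`-th frame and reuse it for the frames between."""
    watermarked, residual, embedder_calls = [], None, 0
    for index, frame in enumerate(frames):
        if index % step == 0:
            residual = toy_embedder(frame)  # the expensive call happens only here
            embedder_calls += 1
        watermarked.append([p + r for p, r in zip(frame, residual)])
    return watermarked, embedder_calls

video = [[0.5] * 8 for _ in range(12)]  # 12 toy frames of 8 pixels each
marked, calls = watermark_video(video, step=4)
# Every frame carries the watermark, but the embedder ran on only 3 of the 12 frames.
```

The trade-off in this toy setup is that a larger step saves more compute, while the reused residual becomes a poorer fit as the scene changes between key frames.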

Open resources:

- Meta AI has released the codebase, trained models, and a public demo under permissive licenses to encourage further research and development in video watermarking.

Figure 7: Video Seal optimization pipeline.

💡Conclusion

Meta AI connects foundational research in language, vision, audio, and robotics to applications that are already in use across Meta’s platforms. Its initiatives include personal assistants, translation tools, and media generation systems, which are reinforced by projects such as DINOv3 for vision, V-JEPA 2 for video understanding, SAM 2 for segmentation, and Seamless Communication for multilingual interaction.

These research efforts extend into consumer devices, including Ray-Ban Meta glasses and Quest headsets, as well as web-based tools, enabling AI to function across multiple environments.

By integrating research with practical deployment, Meta AI demonstrates how large-scale models and multimodal systems can enhance communication, improve accessibility, and offer new ways for users to interact with digital content.

Principal Analyst

Cem Dilmegani

Cem’s work has been cited by leading global publications, including Business Insider, Forbes, and the Washington Post; global firms such as Deloitte and HPE; NGOs such as the World Economic Forum; and supranational organizations such as the European Commission.

Throughout his career, Cem served as a tech consultant, tech buyer and tech entrepreneur. He advised enterprises on their technology decisions at McKinsey & Company and Altman Solon for more than a decade. He also published a McKinsey report on digitalization.

He led technology strategy and procurement at a telco while reporting to the CEO. He also led the commercial growth of deep tech company Hypatos, which went from zero to seven-digit annual recurring revenue and a nine-digit valuation within two years. Cem’s work at Hypatos was covered by leading technology publications such as TechCrunch and Business Insider.

Cem regularly speaks at international technology conferences. He graduated from Bogazici University as a computer engineer and holds an MBA from Columbia Business School.

Researched by

Sıla Ermut

Industry Analyst

Sıla Ermut is an industry analyst at AIMultiple focused on email marketing and sales videos. She previously worked as a recruiter in project management and consulting firms. Sıla holds a Master of Science degree in Social Psychology and a Bachelor of Arts degree in International Relations.
