Anthropic's Claude Code large language model has been abused by threat actors who used it in data extortion campaigns and to develop ransomware packages.

The company says the tool has also been used in fraudulent North Korean IT worker schemes, to distribute lures for Contagious Interview campaigns, in Chinese APT campaigns, and by a Russian-speaking developer to create malware with advanced evasion capabilities.

AI-created ransomware

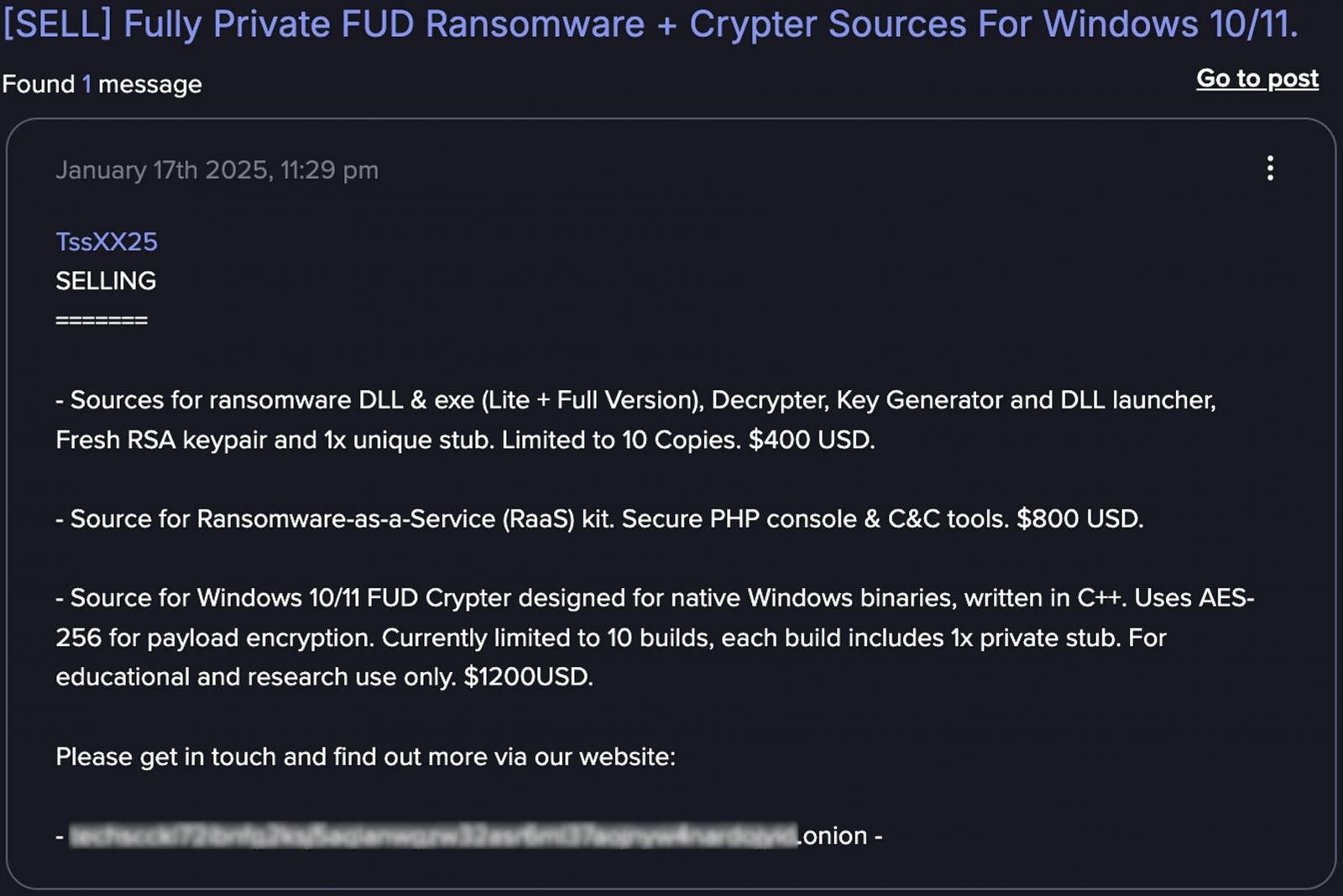

In one example, tracked as “GTG-5004,” a UK-based threat actor used Claude Code to develop and commercialize a ransomware-as-a-service (RaaS) operation.

The AI utility helped create all the tools required for the RaaS platform, implementing the ChaCha20 stream cipher with RSA key management in the modular ransomware, along with shadow copy deletion, options for targeting specific files, and the ability to encrypt network shares.

On the evasion side, the ransomware loads via reflective DLL injection and features syscall invocation techniques, API hooking bypass, string obfuscation, and anti-debugging.

Anthropic says the threat actor relied almost entirely on Claude to implement the most knowledge-demanding parts of the RaaS platform, noting that without AI assistance they would most likely have failed to produce working ransomware.

“The most striking finding is the actor's seemingly complete dependency on AI to develop functional malware,” the report reads.

“This operator does not appear capable of implementing encryption algorithms, anti-analysis techniques, or Windows internals manipulation without Claude's assistance.”

After creating the RaaS operation, the threat actor offered ransomware executables, kits with PHP consoles and command-and-control (C2) infrastructure, and Windows crypters for $400 to $1,200 on dark web forums such as Dread, CryptBB, and Nulled.

Source: Anthropic

AI-operated extortion campaign

In one of the analyzed cases, which Anthropic tracks as “GTG-2002,” a cybercriminal used Claude as an active operator to conduct a data extortion campaign against at least 17 organizations in the government, healthcare, financial, and emergency services sectors.

The AI agent performed network reconnaissance and helped the threat actor achieve initial access, then generated custom malware based on the Chisel tunneling tool for use in exfiltrating sensitive data.

After the attack failed, Claude Code was used to make the malware hide itself better by providing techniques for string encryption, anti-debugging code, and filename masquerading.

Claude was subsequently used to analyze the stolen files and set the ransom demands, which ranged from $75,000 to $500,000, and even to generate custom HTML ransom notes for each victim.

“Claude not only performed 'on-keyboard' operations but also analyzed exfiltrated financial data to determine appropriate ransom amounts and generated visually alarming HTML ransom notes that were displayed on victim machines by embedding them into the boot process.” – Anthropic.

Anthropic called this attack an example of “vibe hacking,” reflecting the use of AI coding agents as partners in cybercrime rather than as tools employed outside the context of the operation.

Anthropic's report includes additional examples of Claude Code being put to illegal use, albeit in less complex operations. The company says its LLM assisted a threat actor in developing advanced API integration and resilience mechanisms for a carding service.

Another cybercriminal leveraged AI for romance scams, generating “high emotional intelligence” replies, creating images to improve profiles, and developing emotional manipulation content to target victims, as well as providing multi-language support for wider targeting.

For each case presented, the AI developer provides tactics and techniques that could help other researchers uncover new cybercriminal activity or make connections to known illegal operations.

Anthropic has banned all accounts linked to the malicious operations it detected, built tailored classifiers to detect suspicious usage patterns, and shared technical indicators with external partners to help defend against these cases of AI misuse.
