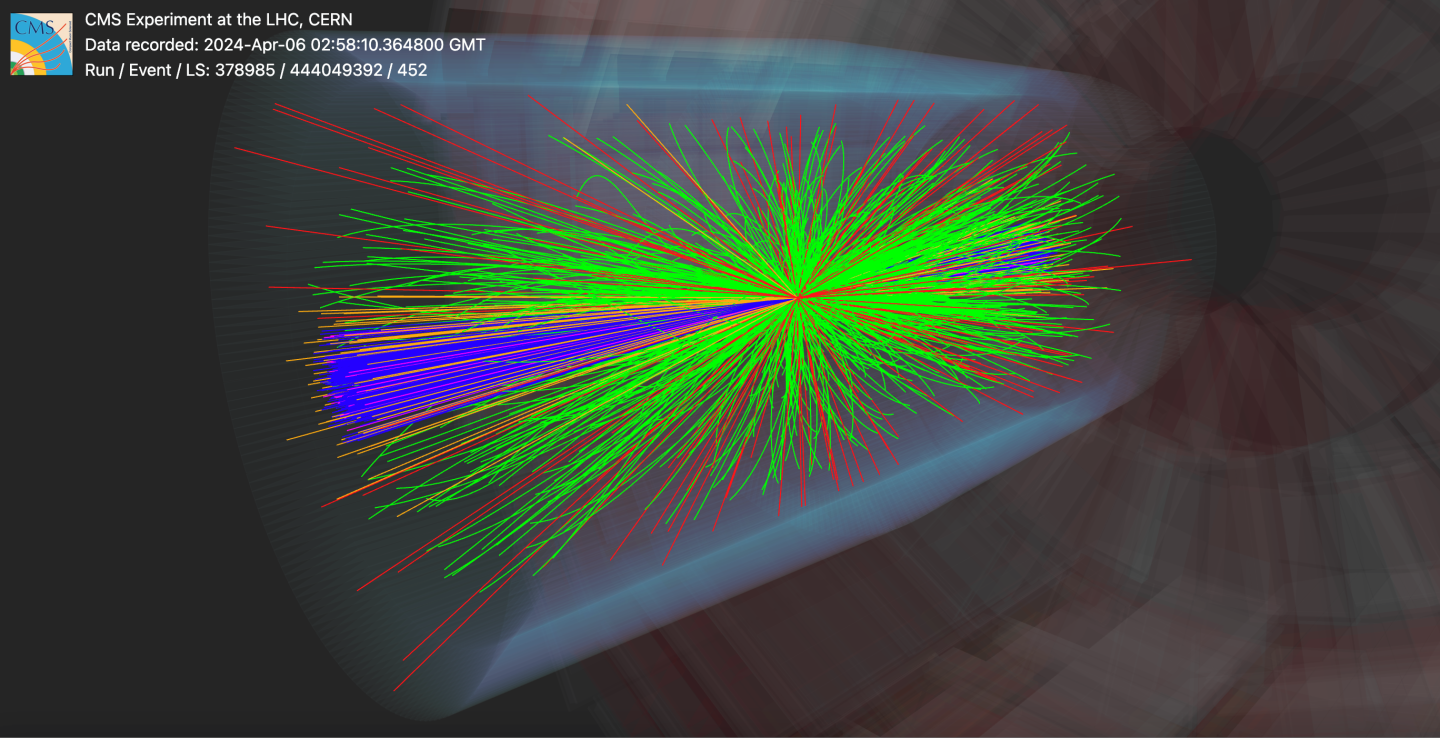

Particle collisions reconstructed using the new CMS machine learning-based particle flow (MLPF) algorithm. The HFEM and HFHAD signals come from the forward calorimeters, which measure the energy of particles travelling close to the beamline. (Image: CMS)

The CMS collaboration has shown for the first time that machine learning can be used to fully reconstruct particle collisions at the LHC. This new approach can reconstruct collisions more quickly and accurately than traditional methods, giving physicists a deeper understanding of LHC data.

Every time protons collide at the LHC, they produce a complex pattern of particles. This pattern must be carefully reconstructed before physicists can study what actually happened. For more than a decade, CMS has used a particle flow (PF) algorithm that combines information from the experiment’s various detectors to identify each particle produced in a collision. This method works very well, but it relies on a long chain of hand-crafted rules designed by physicists.

The new CMS machine learning-based particle flow (MLPF) algorithm approaches the task in a fundamentally different way, replacing much of the rigid hand-crafted logic with a single model trained directly on simulated collisions. Rather than being told how to reconstruct particles, the algorithm learns what particles look like in the detector, much like humans learn how to recognize faces without memorizing explicit rules.

When benchmarked on data that mimics current LHC runs, the new machine learning algorithm matched, and in some cases exceeded, the performance of the traditional algorithm. For example, when tested on simulated events in which top quarks are produced, the algorithm improved the accuracy with which sprays of particles, known as jets, are reconstructed by 10 to 20 percent in key particle momentum ranges.

The new algorithm can also run more efficiently on modern electronic chips known as graphics processing units (GPUs), allowing collisions to be completely reconstructed much more quickly than before. Traditional algorithms typically need to run on a central processing unit (CPU), which is often slower than a GPU for such tasks.

“New uses of machine learning can lead to more accurate data reconstruction and directly benefit CMS measurements, from precision tests of the Standard Model to searches for new particles,” said Joosep Pata, lead developer of the new MLPF algorithm. “Ultimately, our goal is to extract the most information from experimental data as efficiently as possible.”

The new algorithm was tested under current LHC data conditions but is expected to be even more useful with data from the High-Luminosity LHC. This upgrade of the LHC, scheduled to start operating in 2030, will generate about five times as many particle collisions, posing major challenges for the LHC experiments. By teaching detectors to learn directly from data, physicists are not only improving performance but also redefining what is possible in experimental particle physics.
