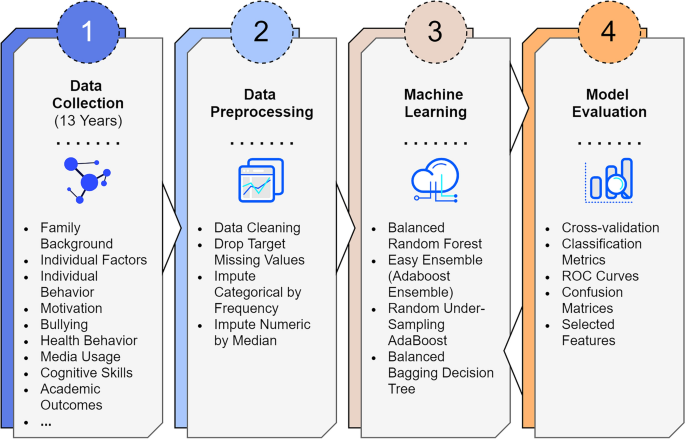

We trained and validated machine learning models on a 13-year longitudinal dataset to classify upper secondary school dropout. Four supervised classification algorithms were utilized: Balanced Random Forest (B-RandomForest), Easy Ensemble (Adaboost Ensemble), RSBoost (Adaboost), and the Bagging Decision Tree. Performance was evaluated with six-fold cross-validation, and confusion matrices were calculated for each classifier. The methodological research workflow is presented in Fig. 1.

Fig. 1. Proposed research workflow. Our process begins with data collection over 13 years, from kindergarten to the end of upper secondary education (Step 1), followed by data processing which includes cleaning and imputing missing feature values (Step 2). We then apply four machine learning models for dropout and non-dropout classification (Step 3), and evaluate these models using 6-fold cross-validation, focusing on performance metrics and ROC curves (Step 4).

Sampling

This study used existing longitudinal data from the “First Steps” follow-up study [40] and its extension, the “School Path: From First Steps to Secondary and Higher Education” study [41]. The entire follow-up spanned a 13-year period, from kindergarten to the third (final) year of upper secondary education. In the “First Steps” study, approximately 2,000 children born in 2000 were followed 10 times from kindergarten (age 6–7) to the end of lower secondary school (Grade 9; age 15–16) in four municipalities around Finland (two medium-sized, one large, and one rural). The goal was to examine students’ learning, motivation, and problem behavior, including their academic performance, motivation and engagement, social skills, peer relations, and well-being, in different interpersonal contexts. Depending on the town or municipality, between 78% and 89% of the contacted parents agreed to participate in the study. Ethnically and culturally, the sample was very homogeneous and representative of the Finnish population, and parental education levels were very close to the national distribution in Finland [42]. In the “School Path” study, the participants of the “First Steps” follow-up study and their new classmates (\(N = 4160\)) were followed twice after the transition to upper secondary education: in the first year (Grade 10; age 16–17) and in the third year (Grade 12; age 18–19).

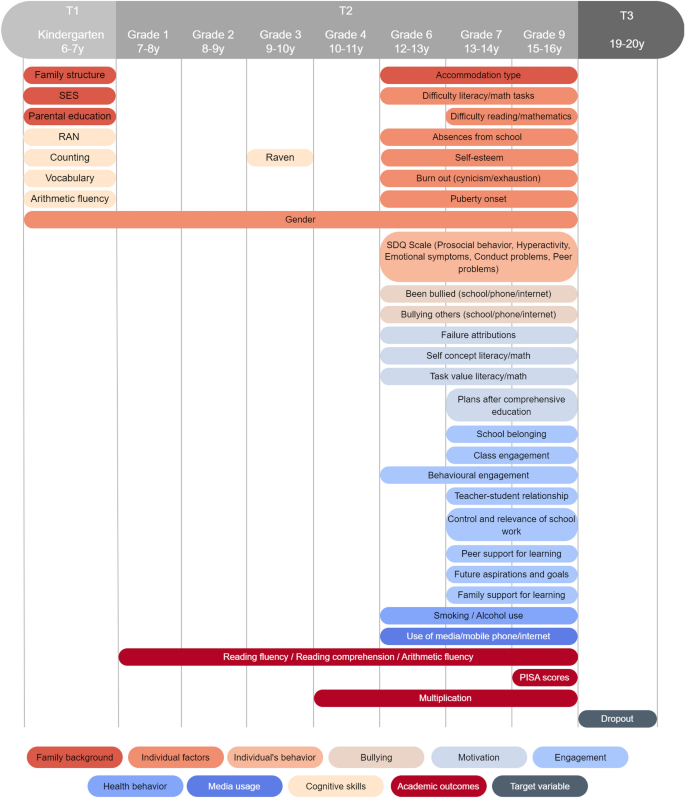

The present study focused on those participants who took part in both the “First Steps” study and the “School Path” study. Data from three time points across three phases of the follow-up were used. Data collection for Time 1 (T1) took place in Fall 2006 and Spring 2007, when the participants entered kindergarten (age 6–7). Data collection for Time 2 (T2) took place during comprehensive school (ages 7–16), which extended from the beginning of primary school (Grade 1; age 7–8) in Fall 2007 to the end of the final year of the lower secondary school (Grade 9; age 15–16) in Spring 2016. For Time 3 (T3), data were collected at the end of 2019, 3.5 years after the start of upper secondary education. We focused on students who enrolled in either general upper secondary school (the academic track) or vocational school (the vocational track) following comprehensive school, as these tracks represent the most typical choices available for young individuals in Finland. Common reasons for not completing school within 3.5 years included students deciding to discontinue their education or not fulfilling specific requirements (e.g. failing mandatory courses) during their schooling.

At T1 and T2, questionnaires were administered to the participants in their classrooms during normal school days, and their academic skills were assessed through group-administered tasks. Questionnaires were administered to parents as well. At T3, register information on the completion of upper secondary education was collected from school registers. In Finland, the typical duration of upper secondary education is three years. For the data collection in comprehensive school (T1 and T2), written informed consent was obtained from the participants’ guardians. In the secondary phase (T3), the participants themselves provided written informed consent to confirm their voluntary participation. The ethical statements for the follow-up study were obtained in 2006 and 2018 from the Ethical Committee of the University of Jyväskylä.

Measures

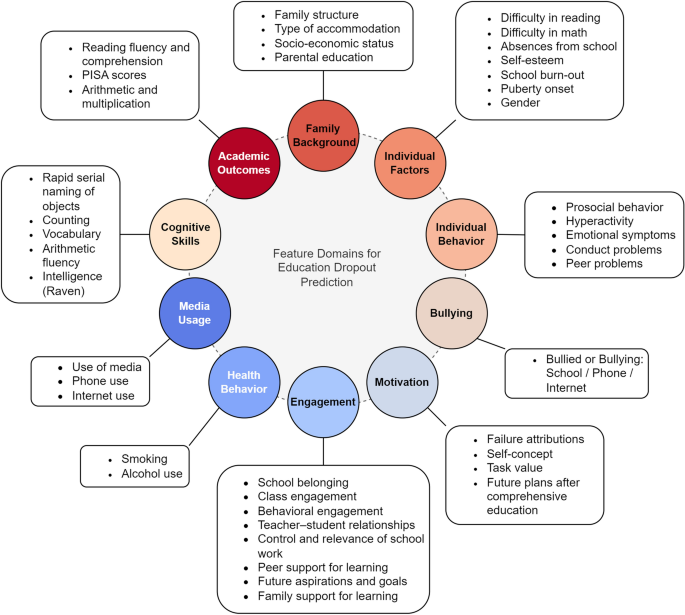

The target variable in the 13-year follow-up was the participant’s status 3.5 years after starting upper secondary education, as determined from the school registers. Participants who had not completed upper secondary education by this time were coded as having dropped out. We initially considered 586 features. However, as is common in longitudinal studies, missing values were identified in all of them. Features with more than 30% missing data were excluded from the analysis, and a total of 311 features were used (with one-hot encoding) (see Supplementary Table S3). These features covered family background (e.g. parental education, socio-economic status), individual factors (e.g. gender, absences from school, school burn-out), the individual’s behavior (e.g. prosocial behavior, hyperactivity), motivation (e.g. self-concept, task value), engagement (e.g. teacher-student relationships, class engagement), bullying (e.g. bullied, bullying), health behavior (e.g. smoking, alcohol use), media usage (e.g. use of media, phone, internet), cognitive skills (e.g. rapid naming, Raven), and academic outcomes (i.e. reading fluency, reading comprehension, PISA scores, arithmetic, and multiplication). Figure 2 presents an overview of the features used, while Fig. 3 summarizes the features used in the models, the grades and the corresponding ages for each grade, and the time points (T1, T2, T3) at which different assessments were conducted. Supplementary Table S3 provides details about the features included.

Fig. 2. Feature domains used for the classification of education dropout and non-dropout. The model incorporated a set of 311 features, categorized into 10 domains: family background, individual factors, behavior, motivation, engagement, bullying experiences, health behavior, media usage, cognitive skills, and academic outcomes. Each domain encompassed a variety of measures.

Fig. 3. Gantt chart summarizing the features used in the models, the grades and the corresponding ages for each grade, and the time points (T1, T2, T3) at which different assessments were conducted. Assessments from Grades 7 and 9 were not included in the models predicting dropout with data up to Grade 6.

Data processing

In our study, we employed a systematic approach to address missing values in the dataset. Initially, the percentage of missing data was calculated for each feature, and features exhibiting more than 30% missing values were excluded. For categorical features, imputation was performed using the most frequent value within each feature, while a median-based strategy was applied to numeric features. To ensure unbiased imputation, imputation values were derived from a temporary dataset where the majority class (i.e. non-dropout cases) was randomly sampled to match the size of the positive class (i.e. dropout cases).
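The steps above can be illustrated with a minimal sketch. It is not the study's actual code: the function names, the toy columns (`absences`, `track`, `mostly_missing`), the use of `None` for missing values, and the fixed random seed are all assumptions made for illustration.

```python
import random
from collections import Counter
from statistics import median


def drop_sparse_features(columns, max_missing=0.30):
    """Keep only features whose share of missing values (None) is at most max_missing."""
    kept = {}
    for name, values in columns.items():
        missing_share = sum(v is None for v in values) / len(values)
        if missing_share <= max_missing:
            kept[name] = values
    return kept


def balanced_row_indices(labels, seed=0):
    """All dropout rows (label 1) plus an equally sized random sample of non-dropout rows."""
    rng = random.Random(seed)
    pos = [i for i, y in enumerate(labels) if y == 1]
    neg = [i for i, y in enumerate(labels) if y == 0]
    return pos + rng.sample(neg, k=min(len(pos), len(neg)))


def impute(columns, labels, categorical, seed=0):
    """Fill None with the mode (categorical) or the median (numeric).

    The fill value is computed on the temporary balanced subset only, then
    applied to every row. Assumes each feature has at least one observed
    value inside that subset.
    """
    rows = balanced_row_indices(labels, seed)
    filled = {}
    for name, values in columns.items():
        observed = [values[i] for i in rows if values[i] is not None]
        if name in categorical:
            fill = Counter(observed).most_common(1)[0][0]
        else:
            fill = median(observed)
        filled[name] = [fill if v is None else v for v in values]
    return filled


# Hypothetical toy data: 2 dropout cases, 4 non-dropout cases.
labels = [1, 1, 0, 0, 0, 0]
features = {
    "absences": [12, None, 3, 4, 2, 5],
    "track": ["voc", "voc", None, "gen", "gen", "gen"],
    "mostly_missing": [None, None, None, 1.0, None, 2.0],
}
kept = drop_sparse_features(features)
clean = impute(kept, labels, categorical={"track"})
```

Deriving the fill values from a class-balanced subset keeps the majority class from dominating the median or mode, which is the stated purpose of the temporary dataset.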

Machine learning

We benchmarked a range of balanced classifiers from the imbalanced-learn Python package [43], all with their default hyperparameter settings. Our selection included Balanced Random Forest, Easy Ensemble (Adaboost Ensemble), RSBoost (Adaboost), and Bagging Decision Tree. The Balanced Random Forest classifier played a pivotal role in our study; its performance, specific configuration, and effectiveness are discussed in the following section. Below are descriptions of each classifier:

1. Balanced random forest: This classifier modifies the traditional random forest [44] approach by randomly under-sampling each bootstrap sample to achieve balance. In our study, we refer to the classifier as “B-RandomForest”.

2. Easy ensemble (Adaboost ensemble): This classifier, known as EasyEnsemble [45], is a collection of AdaBoost [46] learners that are trained on differently balanced bootstrap samples. The balancing is realized through random under-sampling. In our study, we refer to the classifier as “E-Ensemble”.

3. RSBoost (Adaboost): This classifier integrates random under-sampling into the learning process of AdaBoost. It under-samples the sample at each iteration of the boosting algorithm. In our study, we refer to the classifier as “B-Boosting”.

4. Bagging decision tree: This classifier operates similarly to the standard Bagging [47] classifier in the scikit-learn library [48] using decision trees [49], but it incorporates an additional step to balance the training set by using a sampler. In our study, we refer to the classifier as “B-Bagging”.

Each of these classifiers was selected for their specific strengths in handling class imbalances, a critical consideration of our study’s methodology. The next section elaborates on the performance and configurations of these classifiers, particularly B-RandomForest.
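All four classifiers rely on random under-sampling to present each base learner with a balanced training set. The following sketch illustrates that shared idea for a single bootstrap draw. It is an illustration under stated assumptions, not the library's implementation: the function name `balanced_bootstrap_indices`, the 90/10 toy class split, and the choice to sample with replacement inside each class are hypothetical.

```python
import numpy as np


def balanced_bootstrap_indices(y, rng):
    """Draw row indices so that every class contributes as many rows as the minority class."""
    y = np.asarray(y)
    classes, counts = np.unique(y, return_counts=True)
    n_per_class = counts.min()
    picks = [
        rng.choice(np.flatnonzero(y == c), size=n_per_class, replace=True)
        for c in classes
    ]
    return np.concatenate(picks)


rng = np.random.default_rng(0)
y = np.array([0] * 90 + [1] * 10)   # toy labels: 90 non-dropout, 10 dropout
idx = balanced_bootstrap_indices(y, rng)
```

Each base learner (a tree in the forest, or a boosting round) would then be fitted on the rows in `idx`, so that the minority class is not swamped during training.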

Random forest

The Random Forest (RF) method, introduced by Breiman in 2001 [44], is a machine learning approach that employs a collection of decision trees for prediction tasks. This method’s strength lies in its ensemble nature, where multiple “weak learners” (individual decision trees) combine to form a “strong learner” (the RF). Typically, each decision tree in an RF makes its prediction through a series of binary splits on feature thresholds. The prediction of a single decision tree, \(T_d\), for an input vector \({\varvec{I}}\) is given by the following formula:

$$\begin{aligned} T_d({\varvec{I}}) = \sum _{i=1}^{n} v_i\delta (f_i({\varvec{I}}) < t_i) \end{aligned}$$

(1)

Here, n signifies the total nodes in the tree, \(v_i\) is the value predicted at the i-th node, \(f_i({\varvec{I}})\) is the i-th feature of the input vector \({\varvec{I}}\), \(t_i\) stands for the threshold at the i-th node, and \(\delta\) represents the indicator function.

In an RF, the collective predictions from D individual decision trees are aggregated to form the final output. For regression problems, these outputs are typically averaged, whereas a majority vote (mode) approach is used for classification tasks. The prediction formula for an RF (\(F_D\)) on an input vector \({\varvec{I}}\), is as follows:

$$\begin{aligned} F_D({\varvec{I}}) = \frac{1}{D} \sum _{d=1}^{D} T_d({\varvec{I}}) \end{aligned}$$

(2)

In this equation, \(T_d({\varvec{I}})\) is the result from the d-th tree for input vector \({\varvec{I}}\), and D is the number of decision trees in the forest. Random Forests are less prone to overfitting than individual decision trees because they average results across many trees. In our study, we utilized 100 estimators with default settings from the scikit-learn library [48].
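Equations (1) and (2) can be read literally as the short sketch below. The node encoding `(feature_index, threshold, value)`, the three toy trees, and the 0.5 voting cutoff are assumptions made for illustration; a fitted tree in scikit-learn routes each input along a single root-to-leaf path rather than summing over all nodes.

```python
from collections import Counter


def tree_predict(nodes, x):
    """Eq. (1) read literally: sum v_i over the nodes whose test f_i(x) < t_i holds."""
    return sum(value for feature, threshold, value in nodes if x[feature] < threshold)


def forest_average(trees, x):
    """Eq. (2): mean of the D tree outputs (regression-style aggregation)."""
    return sum(tree_predict(t, x) for t in trees) / len(trees)


def forest_vote(trees, x, cutoff=0.5):
    """Classification-style aggregation: each tree casts a 0/1 vote and the mode wins."""
    votes = [int(tree_predict(t, x) >= cutoff) for t in trees]
    return Counter(votes).most_common(1)[0][0]


# Hypothetical forest of D = 3 small trees over a two-feature input.
trees = [
    [(0, 5.0, 1.0)],
    [(1, 2.0, 1.0)],
    [(0, 3.0, 0.5), (1, 4.0, 0.5)],
]
x = [4.0, 3.0]
average = forest_average(trees, x)   # (1.0 + 0.0 + 0.5) / 3
vote = forest_vote(trees, x)         # votes are 1, 0, 1
```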

Figures of merit

To evaluate the efficacy of our classification models, we employed a set of essential evaluative metrics, known as figures of merit.

The accuracy metric reflects the fraction of correct predictions (encompassing both true positive and true negative outcomes) in comparison to the overall number of predictions. The formula for accuracy is as follows:

$$\begin{aligned} \text{Accuracy} = \frac{\text{TP} + \text{TN}}{\text{TP} + \text{TN} + \text{FP} + \text{FN}} \end{aligned}$$

(3)

Notably, given the balanced nature of our target data, the accuracy rate in our analysis equated to the definition of balanced accuracy.

Precision, or the positive predictive value, represents the proportion of true positive predictions out of all positive predictions made. The equation to determine precision is as follows:

$$\begin{aligned} \text{Precision} = \frac{\text{TP}}{\text{TP} + \text{FP}} \end{aligned}$$

(4)

Recall, which is alternatively called sensitivity, quantifies the percentage of actual positives that were correctly identified. The formula for calculating recall is as follows:

$$\begin{aligned} \text{Recall} = \frac{\text{TP}}{\text{TP} + \text{FN}} \end{aligned}$$

(5)

Specificity, also known as the true negative rate, measures the proportion of actual negatives that were correctly identified. The formula for specificity is as follows:

$$\begin{aligned} \text{Specificity} = \frac{\text{TN}}{\text{TN} + \text{FP}} \end{aligned}$$

(6)

The F1 Score is the harmonic mean of precision and recall, providing a balance between the two metrics. It is particularly useful when the class distribution is imbalanced. The formula for the F1 Score is as follows:

$$\begin{aligned} \mathrm {F1\ Score} = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} \end{aligned}$$

(7)

In these formulas, \(\text{TP}\) represents true positives, \(\text{TN}\) stands for true negatives, \(\text{FP}\) refers to false positives, and \(\text{FN}\) denotes false negatives.
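Equations (3) to (7) translate directly into code. The sketch below uses made-up confusion counts purely to show the arithmetic; the function name `classification_metrics` is hypothetical, and no guard against zero denominators is included.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, specificity and F1 from the four confusion counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "specificity": tn / (tn + fp),
        "f1": 2 * precision * recall / (precision + recall),
    }


# Illustrative counts only, not results from the study.
m = classification_metrics(tp=30, tn=50, fp=10, fn=10)
```

With these counts, accuracy is 80/100, precision and recall are both 30/40, and specificity is 50/60.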

The balanced accuracy metric, as referenced by Brodersen et al. in 2010 [50], is a crucial measure in the context of classification tasks, particularly when dealing with imbalanced datasets. This metric is calculated as follows:

$$\begin{aligned} BalAcc = \frac{1}{2}\left( \frac{TP}{TP+FN}+\frac{TN}{TN+FP}\right) \end{aligned}$$

(8)

Essentially, this equation is the average of the recall computed for each class. Balanced accuracy accounts for class imbalance because it is equivalent to conventional accuracy computed with class-balanced sample weights. When the two classes are equally prevalent, the metric coincides with conventional accuracy. When they are not, each sample is effectively weighted by the inverse prevalence of its true class. This adjustment makes the balanced accuracy metric a more robust and reliable measure in scenarios where the class distribution is uneven. In line with this approach, we also employed the macro average of F1 and Precision in our computations.
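A small numerical check makes the relationship between Eq. (3) and Eq. (8) concrete. The counts below are invented for illustration: the first case has equally sized classes, the second is imbalanced.

```python
def accuracy(tp, tn, fp, fn):
    """Eq. (3): share of correct predictions."""
    return (tp + tn) / (tp + tn + fp + fn)


def balanced_accuracy(tp, tn, fp, fn):
    """Eq. (8): mean of recall (TP rate) and specificity (TN rate)."""
    return 0.5 * (tp / (tp + fn) + tn / (tn + fp))


# Equal class sizes (50 positives, 50 negatives): the two metrics coincide.
equal = dict(tp=40, fn=10, tn=30, fp=20)
acc_equal = accuracy(**equal)
bal_equal = balanced_accuracy(**equal)

# Imbalanced classes (10 positives, 90 negatives): accuracy looks better
# than the per-class recalls justify.
skewed = dict(tp=5, fn=5, tn=85, fp=5)
acc_skewed = accuracy(**skewed)
bal_skewed = balanced_accuracy(**skewed)
```

In the imbalanced case accuracy is 0.90 while balanced accuracy is about 0.72, because only half of the positives are detected.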

A confusion matrix is a vital tool for understanding the performance of a classification model. In the context of our study, the performance of each classification model was encapsulated by binary confusion matrices. Each matrix is a \(2\times 2\) table categorizing the predictions into four distinct outcomes. The columns of the matrix represent the classifications predicted by the model, categorized as Predicted Negative and Predicted Positive. The rows signify the actual classifications, which are labeled as Actual Negative and Actual Positive.

- The upper-left cell is the True Negatives (TN), which are instances where the model correctly predicted the negative class.

- The upper-right cell is the False Positives (FP), which are cases where the model incorrectly predicted the positive class for actual negatives.

- The lower-left cell is the False Negatives (FN), where the model incorrectly predicted the negative class for actual positives.

- Finally, the lower-right cell is the True Positives (TP), where the model correctly predicted the positive class.

In our study, we aggregated the results from all iterations of the cross-validation process to generate normalized average binary confusion matrices. Normalization of the confusion matrix involves converting the raw counts of true positives, false positives, true negatives, and false negatives into proportions, which account for the varying class distributions. This approach allows for a more comparable and intuitive understanding of the model’s performance, especially when dealing with imbalanced datasets. By analyzing the normalized matrices, we obtain a comprehensive view of the model’s predictive performance across the entire cross-validation run, instead of relying on a single instance.
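One way to obtain such a matrix is sketched below. The text does not state the normalization axis or the order of operations, so two assumptions are made here: each fold's matrix is normalized by row (the actual class) and the normalized matrices are then averaged. The layout `[[TN, FP], [FN, TP]]` follows the cell description above; the two fold matrices are invented.

```python
import numpy as np


def normalized_mean_confusion(fold_matrices):
    """Row-normalize each fold's 2x2 count matrix, then average across folds."""
    mats = np.asarray(fold_matrices, dtype=float)   # shape (K, 2, 2)
    row_sums = mats.sum(axis=2, keepdims=True)      # actual-class totals per fold
    return (mats / row_sums).mean(axis=0)


# Illustrative counts for two folds, laid out as [[TN, FP], [FN, TP]].
folds = [
    [[80, 20], [5, 15]],
    [[90, 10], [10, 10]],
]
cm = normalized_mean_confusion(folds)
```

After row normalization the lower-right entry is the mean recall and the upper-left entry is the mean specificity, so each row sums to 1 regardless of how many dropout cases a fold contains.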

AUC score

The AUC score is a widely used metric in machine learning for evaluating the performance of binary classification models. Derived from the receiver operating characteristic (ROC) curve, the AUC score quantifies a model’s ability to distinguish between two classes. The ROC curve plots the true positive rate (TPR) against the false positive rate (FPR) at various threshold settings. By varying the threshold that determines the classification decision, the ROC curve illustrates the trade-off between sensitivity (TPR) and specificity (1 – FPR). The TPR and FPR are defined as follows:

$$\begin{aligned} \text{TPR} = \frac{\text{TP}}{\text{TP} + \text{FN}} \end{aligned}$$

(9)

$$\begin{aligned} \text{FPR} = \frac{\text{FP}}{\text{FP} + \text{TN}} \end{aligned}$$

(10)

The AUC score represents the area under the ROC curve and ranges from 0 to 1. An AUC score of 0.50 is equivalent to random guessing and indicates that the model has no discriminative ability. On the other hand, a model with an AUC score of 1.0 demonstrates perfect classification. A higher AUC score suggests a better model performance in terms of distinguishing between the positive and negative classes.
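The construction of the ROC curve and its area can be sketched as follows. This is a plain-Python illustration, not the plotting utility used in the study: the function names and the four-sample toy input are assumptions, and the area is obtained with the trapezoidal rule over the (FPR, TPR) points from Eqs. (9) and (10).

```python
def roc_points(labels, scores):
    """(FPR, TPR) pairs obtained by lowering the threshold over every distinct score."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]
    for thr in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if s >= thr and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= thr and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points


def auc(points):
    """Trapezoidal area under a curve given as (x, y) points ordered by x."""
    return sum(
        (x1 - x0) * (y0 + y1) / 2
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    )


# Toy example: one positive is ranked below one negative.
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
area = auc(roc_points(labels, scores))

# A classifier that ranks every positive above every negative reaches 1.0.
perfect = auc(roc_points([0, 1], [0.2, 0.9]))
```

In the toy example three of the four positive-negative pairs are ordered correctly, which gives an area of 0.75.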

Cross-validation

In this study, we employed the stratified K-fold cross-validation method with \(K=6\) to ascertain the robustness and generalizability of our approach [51]. This method partitions the dataset into K distinct subsets, or folds, with an even distribution of class labels in each fold to reflect the overall dataset composition. For each iteration of the process, one of these folds is designated as the test set, while the remaining folds collectively form the training set. This cycle is repeated K times, with a different fold used as the test set each time. This technique was crucial in our study to ensure that the model’s performance would be consistently evaluated against varied data samples. One formal representation of this process, with \(K=6\), is as follows:

$$\begin{aligned} \text{CV}({\mathscr {M}}, {\mathscr {D}}) = \frac{1}{K} \sum _{k=1}^{K} \text{Eval}({\mathscr {M}}, {\mathscr {D}}_k^\text{train}, {\mathscr {D}}_k^\text{test}) \end{aligned}$$

(11)

Here, \({\mathscr {M}}\) represents the machine learning model, \({\mathscr {D}}\) is the dataset, \({\mathscr {D}}_k^\text{train}\) and \({\mathscr {D}}_k^\text{test}\) respectively denote the training and test datasets for the \(k\)-th fold, and \(\text{Eval}\) is the evaluation function (e.g. accuracy, precision, recall). Our AUC plots were generated using the forthcoming version of utility functions from the Deep Fast Vision Python Library [52].
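Equation (11) and the stratified split can be sketched in plain Python as follows. This is an illustration rather than the study's pipeline: the round-robin fold assignment, the majority-class baseline standing in for \({\mathscr {M}}\), accuracy standing in for \(\text{Eval}\), and the 12/48 toy class split are all assumptions.

```python
import random
from collections import defaultdict


def stratified_folds(labels, k=6, seed=0):
    """Assign rows to k folds so that each fold keeps roughly the overall class mix."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, i in enumerate(indices):
            folds[position % k].append(i)   # deal rows round-robin within each class
    return folds


def cross_validate(fit, evaluate, X, y, k=6, seed=0):
    """Eq. (11): mean of Eval over the k train/test splits."""
    scores = []
    for test_idx in stratified_folds(y, k, seed):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(y)) if i not in held_out]
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        scores.append(evaluate(model, [X[i] for i in test_idx], [y[i] for i in test_idx]))
    return sum(scores) / k


def fit_majority(X_train, y_train):
    """Hypothetical baseline model: always predict the most common training label."""
    return max(set(y_train), key=y_train.count)


def accuracy(model, X_test, y_test):
    """Evaluation function for the baseline: share of test labels equal to its prediction."""
    return sum(model == t for t in y_test) / len(y_test)


y = [1] * 12 + [0] * 48                 # toy labels: 20% dropout
X = [[i] for i in range(len(y))]        # placeholder features
folds = stratified_folds(y)
score = cross_validate(fit_majority, accuracy, X, y)
```

Because every fold holds 2 dropout and 8 non-dropout cases, the baseline scores 0.8 in each fold, which shows why plain accuracy is a weak yardstick on imbalanced data.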

Ethics declarations

Ethical approval for the original data collection was obtained from the Ethical Committee of the University of Jyväskylä in 2006 and 2018, ensuring that all experiments were performed in accordance with relevant guidelines and regulations.