how do we Decision tree regressor The optimal partition is selected by minimizing . Mean squared error (MSE).

Today marks day 7 of our machine learning “advent calendar” and we continue with the same approach. Decision tree classifiercorresponds to the classification of yesterday's model.

Intuitive experiments using two simple datasets

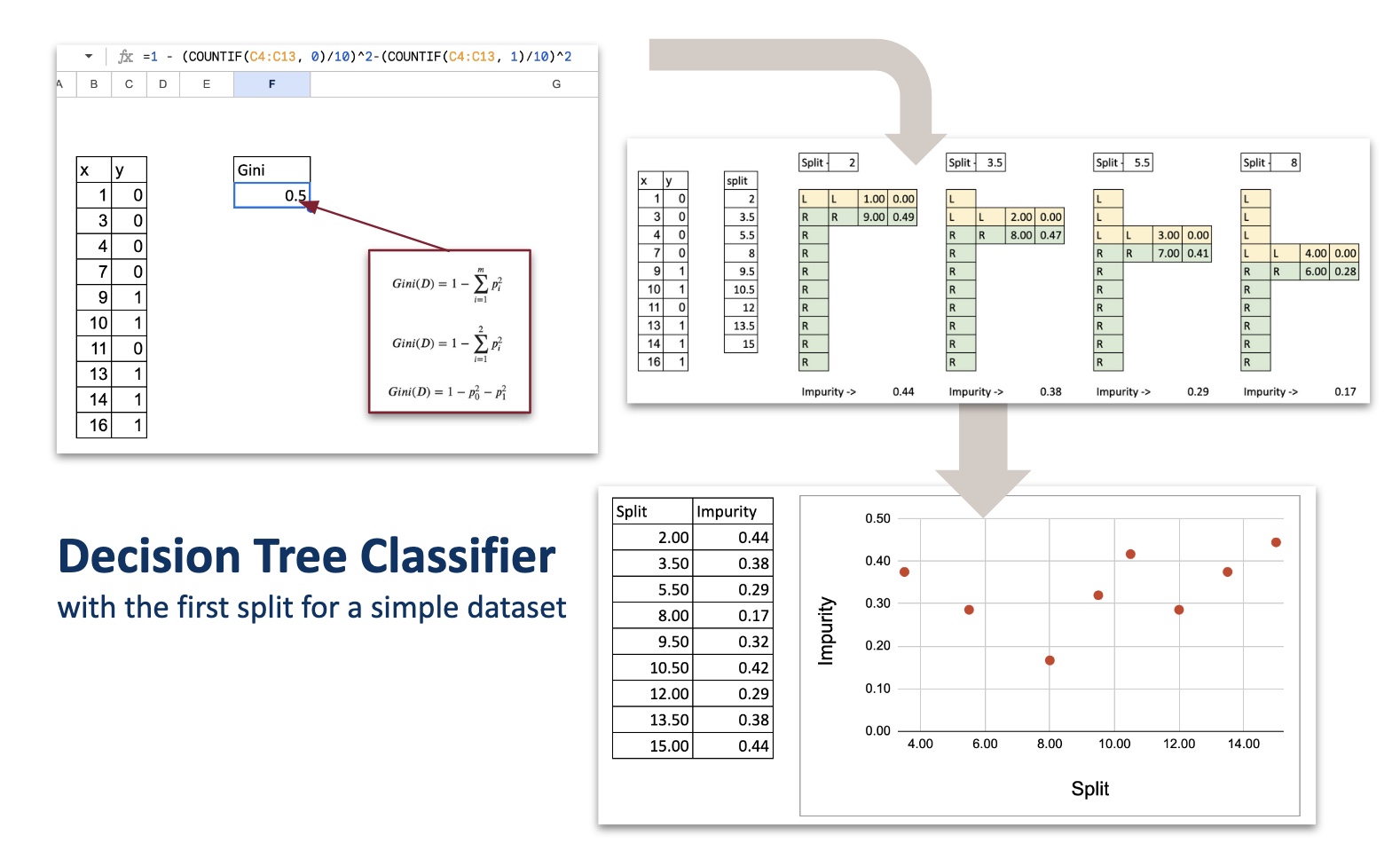

Let's start with a very small toy dataset that I generated that contains one numeric feature and one target variable with two classes (0 and 1).

The idea is to split the dataset into two parts based on one rule. But the problem is: What should this rule be? What are the criteria for determining which split is better?

Now, even if you don't know math yet, you can look at your data and deduce possible cut points.

And visually, 8 or 12right?

But the question is, which one is numerically better?

Intuitively, it looks like this:

- There is a division in 8:

- Left side: no misclassification

- Right side: 1 misclassification

- There is a division in 12:

- Right side: no misclassification

- Left side: two misclassifications

So clearly I feel that splitting by 8 is better.

Now let's take a look at an example 3 classes. I added more random data to create three classes.

Label them here 0, 1, 3Plot vertically.

But you have to be careful. These numbers are: just the category namenot a number. They should not be construed as “commanded”.

So the intuition is always: How homogeneous is each region after the split?

However, it is difficult to determine the optimal split visually.

Now we need a mathematical way to express this idea.

This is exactly the topic of the next chapter.

Measures against impurities as a basis for separation

The decision tree regressor already knows:

- Regional forecasts are average of the target.

- The quality of the split is measured as follows: MSE.

The decision tree classifier looks like this:

- Regional forecasts are majority class of the region.

- Split quality is measured in the following way: Impurity measures: Gini impurity or entropy.

Both are standard in textbooks and are also available in scikit-learn. By default, Gini is used.

But what exactly is this impurity countermeasure?

If you look at the curve, Gini and entropyboth work the same way.

- they are 0 The node is pure (All samples have the same class).

- they reach them maximum when there is a class evenly mixed (50 percent / 50 percent).

- The curve is smoothis symmetric and increases with disorder.

This is an essential property of everything Impurity measures:

If the groups are clean, the impurity is low; if the groups are mixed, the impurity is high.

Therefore, use these measures to decide which splits to create.

Split by one continuous feature

It follows the same structure as for decision tree regressors.

List of all possible splits

Just like the regressor version with one numeric feature, the only split we need to test is the midpoint between consecutive sorted x values.

For each split, calculate the impurities on each side.

For example, consider split values. x = 5.5.

Split the dataset into two regions.

- Area L: x < 5.5

- Region R: x ≥ 5.5

For each region:

- Count the total number of observations

- Calculate Gini impurities

- Finally, we calculate the weighted impurity of the split.

Select the split with the least impurities

Same as for regressors:

- List all possible splits

- Calculate each impurity

- The optimal division is minimal impurities

Composite table of all partitions

To automate everything in Excel,

organize all calculations 1 tablewhere:

- Each row corresponds to one split candidate,

- For each row, calculate:

- Gini left region,

- Gini right region,

- and Overall weighted Gini of division.

This table provides a short and concise overview of all possible splits.

And the best split is simply the one with the lowest value in the last column.

Multiple class classification

Up until now, I have been working in two classes. However, the impurity of Gini naturally extends to: 3 classesthe logic of the split remains exactly the same.

The structure of the algorithm remains unchanged.

- List all possible splits.

- Calculate the impurities on each side.

- Taking the weighted average,

- Select the split with the least impurities.

Only the Gini impurity formula is a little longer.

Gini impurity with three classes

If the region contains the ratios p1, p2, p3

For the three classes, the Gini impurities are:

Same idea as before:

A region is “pure” if one class dominates.

Mixing classes increases impurities.

left and right areas

For each split:

- Region L contains some observations of classes 1, 2, and 3

- Region R contains the remaining observations

For each region:

- Count the number of points belonging to each class

- Calculate the ratios p1, p2, p3

- Calculate the Gini impurity using the above formula

Everything is exactly the same as in the binary case, except for one term.

3 class division summary table

As before, collect all calculations into one table.

- Each row is one possible split

- Count from the left as class 1, class 2, and class 3.

- Count class 1, class 2, class 3 on the right side.

- Compute the Gini (left), Gini (right), and weighted Gini.

split with least weighted impurity The one selected by the decision tree.

The algorithm can be easily generalized to K classes by calculating the gini or entropy using the following formula:

In reality, how different are impurity countermeasures?

Now, we always refer to Gini or entropy as a criterion, but Is it really different??When you look at the formula, some people may say:

The answer is not very much.

In theory, in almost any real-world situation:

- Gini and entropy Select the same split

- The tree structure is almost the same

- The predictions are as follows same

why?

Because their curves are very similar.

Both peak at 50% mixing and decrease to zero purity.

The only difference is shape Curved:

- Gini is quadratic function Penalize misclassifications more linearly.

- entropy is logarithm Since it's a function, we penalize the uncertainty a little more strongly around 0.5.

But in reality the difference is small and you can do it in Excel.

What about other measures against impurities?

Another obvious question: Is it possible to devise/use other means?

Yes, you can invent your own functions as long as you meet the following conditions:

- it is 0 If the node is pure

- it is the biggest When classes are mixed

- it is smooth And strictly speaking, “disability” is increasing.

Example: impurity = 4*p0*p1

This is also a valid impurity measure. and it is actually equal to Gini If there are only two classes, multiply by a constant.

Again, it gives same division. If you are not satisfied,

Here are some other measures you can use.

Exercises in Excel

Testing with other parameters and features

After building the first split, you can expand the file.

- try entropy instead of Ginny

- try adding categorical features

- Try building next split

- try changing maximum depth Observe underfitting and overfitting

- Create a confusion matrix for prediction

These simple tests have already given us an intuition about how a real-world decision tree will behave.

Implementing rules for the Titanic Survival Dataset

A natural follow-up exercise is to recreate the well-known decision rule. Titanic survival dataset (CC0 / Public domain).

You can start with just two features: sex and year.

Implementing rules in Excel is long and a little tedious, but that's exactly the point. This will help you understand what the decision rules actually are.

They are just a series of things. IF/ELSE A statement repeated over and over again.

This is the essence of a decision tree. A stack of simple rules.

conclusion

Implementing a decision tree classifier in Excel is surprisingly easy.

With some formulas we can reveal the heart of the algorithm.

- list possible splits

- calculate impurities

- Choose the cleanest split

This simple mechanism is the basis for more sophisticated ensemble models such as: gradient boosted treeWe'll discuss this later in this series.

stay tuned 8th day tomorrow!