NVIDIA today introduced a wave of cutting-edge AI research that enables developers and artists to bring their ideas to life, whether still or in motion, in 2D or 3D, surreal or fantastical.

Nearly 20 NVIDIA research papers advancing generative AI and neural graphics, including collaborations with over 10 universities in the US, Europe, and Israel, will be presented at SIGGRAPH 2023, a leading computer graphics conference, taking place August 6-10 in Los Angeles.

These papers include generative AI models that transform text into personalized images; an inverse rendering tool that converts still images into 3D objects; neural physics models that use AI to simulate complex 3D elements with stunning realism; and neural rendering models that unlock new capabilities for generating real-time, AI-powered visual detail.

Innovations from NVIDIA researchers are regularly shared with developers on GitHub and built into NVIDIA products. These include the NVIDIA Omniverse platform for building and operating metaverse applications and the recently announced NVIDIA Picasso, a foundry for custom generative AI models for visual design. Years of NVIDIA graphics research also underpin the recently released Cyberpunk 2077 Ray Tracing: Overdrive Mode, the world’s first path-traced AAA title.

Research advances presented at this year’s SIGGRAPH will help developers and companies rapidly generate synthetic data to populate virtual worlds for robotics and self-driving car training. They will also enable creators in art, architecture, graphic design, game development, and film to more quickly produce high-quality visuals for storyboards, pre-visualization, and even production.

AI With a Personal Touch: Customized Text-to-Image Models

Generative AI models that convert text to images are powerful tools for creating concept art and storyboards for movies, video games, and 3D virtual worlds. Text-to-image AI tools can turn a prompt like “children’s toys” into nearly limitless visuals that creators can use for inspiration, generating images of stuffed animals, blocks, or puzzles.

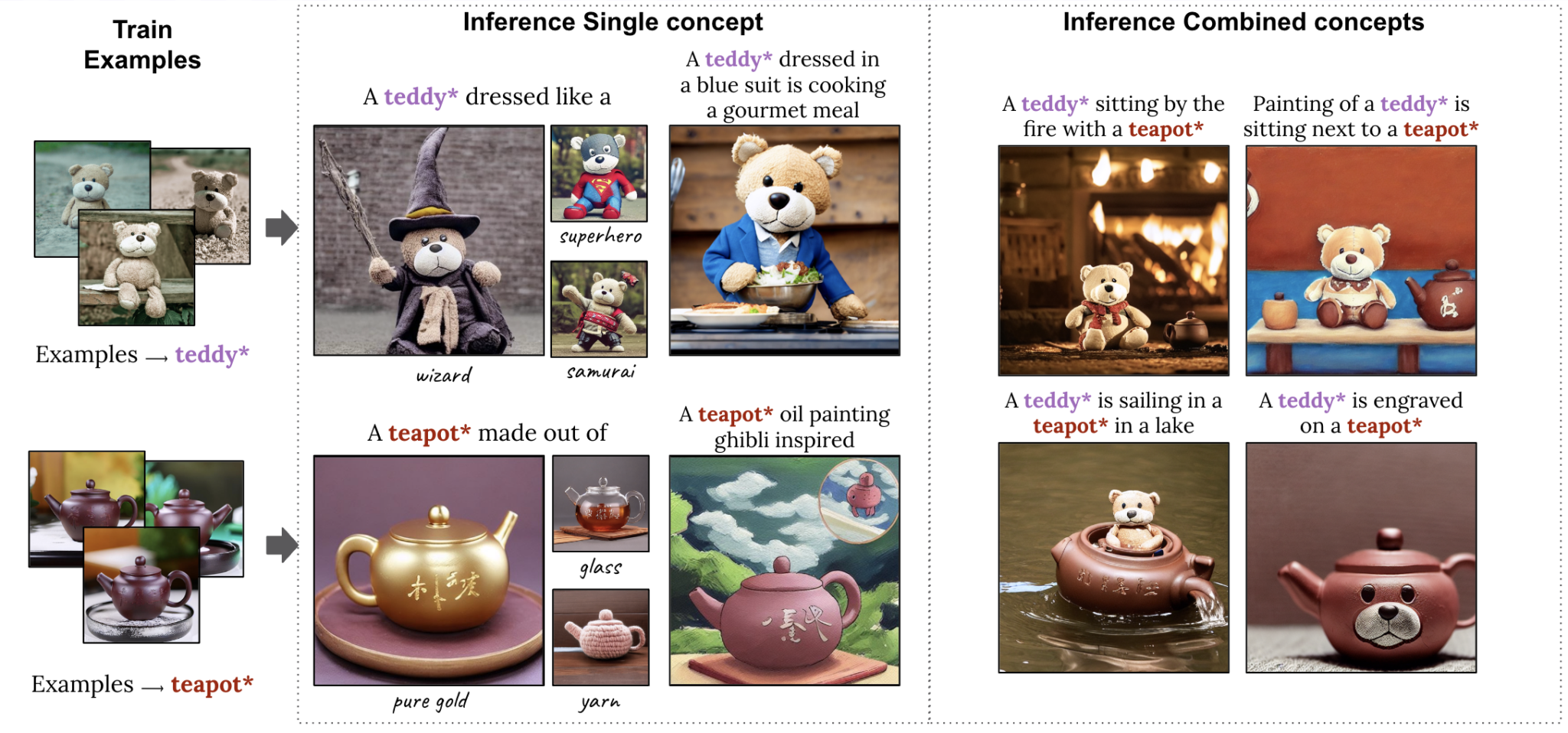

However, an artist may have a particular subject in mind. For example, the creative director of a toy brand might be planning an advertising campaign around a new teddy bear and want to visualize the toy in different situations, such as a teddy bear tea party. To achieve this level of specificity in the output of generative AI models, researchers from Tel Aviv University and NVIDIA have two SIGGRAPH papers that let users provide example images from which the model quickly learns.

One paper describes a technique that needs only a single example image to customize its output. It accelerates the personalization process from minutes to about 11 seconds on a single NVIDIA A100 Tensor Core GPU, more than 60x faster than previous personalization approaches.

A second paper presents a highly compact model called Perfusion. It uses a small number of concept images to let users combine multiple personalized elements, such as a specific teddy bear and teapot, into a single AI-generated visual.

Delivered in 3D: Advances in Inverse Rendering and Character Creation

Once a creator has come up with concept art for a virtual world, the next step is to render the environment and populate it with 3D objects and characters. NVIDIA Research is inventing AI techniques to accelerate this time-consuming process by automatically converting 2D images and videos into 3D representations that creators can import into graphics applications for further editing.

A third paper, written in collaboration with researchers at the University of California, San Diego, describes a technique that can generate and render a photorealistic 3D head-and-shoulders model from a single 2D portrait. This is a major breakthrough that makes 3D avatar creation and 3D video conferencing accessible with AI. The method runs in real time on a consumer desktop and can generate a photorealistic or stylized 3D telepresence using only a conventional webcam or smartphone camera.

A fourth project, a collaboration with Stanford University, brings lifelike motion to 3D characters. The researchers created an AI system that can learn a range of tennis skills from 2D video recordings of real tennis matches and apply these motions to 3D characters. The simulated tennis players can hit the ball precisely to target positions on a virtual court and even play extended rallies with other characters.

Beyond the tennis test case, this SIGGRAPH paper tackles the difficult task of creating a 3D character that can perform a range of skills with realistic movements without the use of expensive motion capture data.

No hair out of place: Neural physics enables realistic simulations

Once a 3D character is generated, artists can layer in realistic details such as hair, a complex and computationally expensive challenge for animators.

The human head has an average of 100,000 hairs, each of which responds dynamically to an individual’s motion and the surrounding environment. Traditionally, creators have used physics formulas to calculate hair movement, simplifying or approximating the motion based on the resources available. That’s why virtual characters in big-budget movies feature much more detailed hair than real-time video game avatars.

A fifth paper shows how neural networks can simulate tens of thousands of hairs in real time at high resolution using neural physics, an AI technique that predicts how objects will behave in the real world.

The team’s novel approach to accurate simulation of full-scale hair is specifically optimized for modern GPUs. It offers significant performance gains over state-of-the-art CPU-based solvers, reducing simulation times from days to just hours, while also raising the quality of hair simulations possible in real time. This technique finally enables accurate and interactive physically based hair grooming.

Neural Rendering Brings Cinematic Detail to Real-Time Graphics

After an environment is filled with animated 3D objects and characters, real-time rendering simulates the physics of light reflecting through the virtual scene. Recent NVIDIA research shows how AI models for textures, materials, and volumes can deliver film-quality, photorealistic visuals in real time for video games and digital twins.

NVIDIA invented programmable shading over 20 years ago, allowing developers to customize their graphics pipeline. In these latest neural rendering inventions, researchers augment programmable shading code with AI models running deep within NVIDIA’s real-time graphics pipeline.

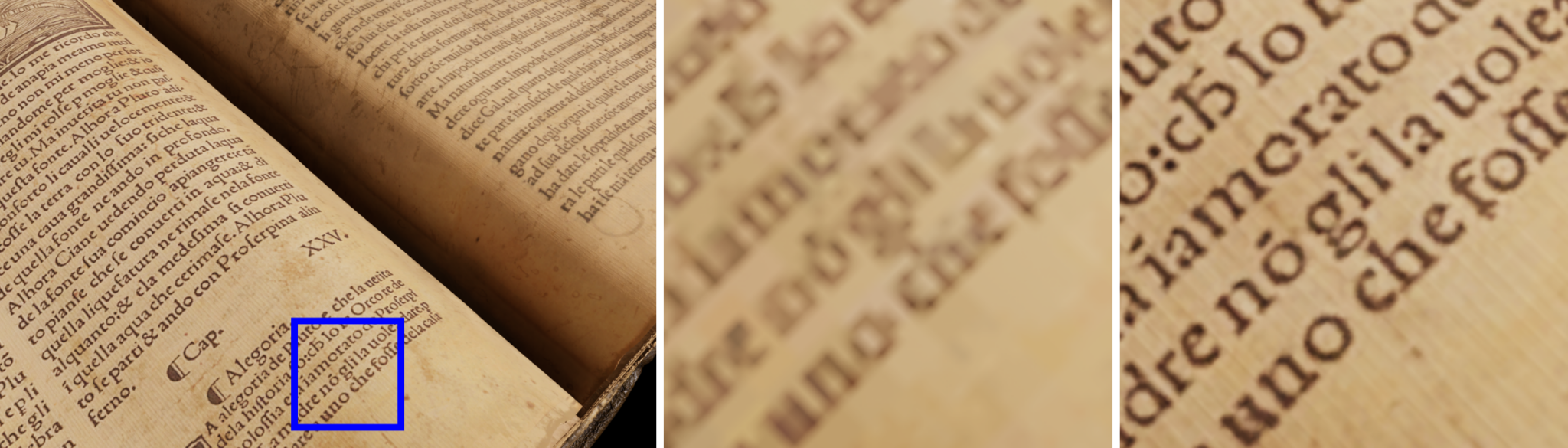

In a sixth SIGGRAPH paper, NVIDIA presents Neural Texture Compression, which delivers up to 16x more texture detail without requiring additional GPU memory. Neural Texture Compression can greatly improve the realism of 3D scenes, as the accompanying image shows: the neural-compressed texture (right) captures sharper detail than the previous format (middle), where the text remains blurry.

A related paper published last year is now available in early access as NeuralVDB, an AI-enabled data compression technique that reduces the memory required to represent volumetric data such as smoke, fire, clouds, and water by a factor of 100.

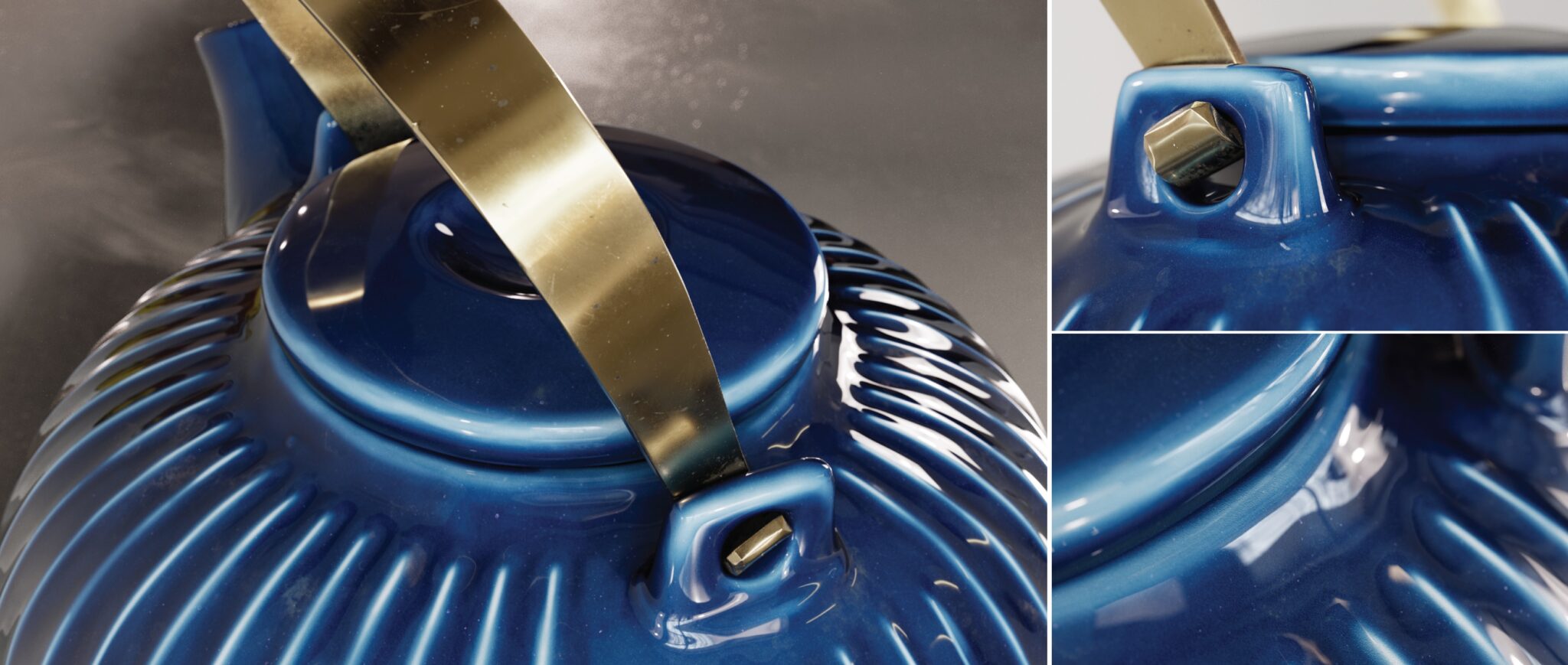

NVIDIA today also released more details about the neural materials research shown in the most recent NVIDIA GTC keynote. The paper describes an AI system that learns how light reflects from photorealistic, multi-layer materials and reduces the complexity of these assets to small neural networks that run in real time, enabling up to 10x faster shading.

You can see the level of realism in this neurally rendered teapot with accurate representations of ceramic, imperfect clear coat glaze, fingerprints, smudges and even dust.

More generative AI and graphics research

These are just the highlights. Read more about all the NVIDIA papers at SIGGRAPH. NVIDIA will also present six courses, four talks, and two Emerging Technologies demos at the conference, covering topics such as path tracing, telepresence, and diffusion models for generative AI.

NVIDIA Research has hundreds of scientists and engineers around the world, with teams focused on topics such as AI, computer graphics, computer vision, self-driving cars, and robotics.