Traditional knowledge graphs are static and thus have difficulty adapting to the rapidly changing conditions of production environments. To address this limitation, this section proposes a dynamic optimization framework that incorporates reinforcement learning. Specifically, the framework employs the Q-learning algorithm to evaluate inference results from the knowledge graph in real time. Based on this evaluation, it adjusts the weights within the graph structure, allowing for the adaptive and continuous updating of manufacturing knowledge.

Reinforcement learning is a machine learning method that enables intelligent systems to interact with their environment and learn through trial and error to maximize cumulative rewards. Unlike traditional supervised and unsupervised learning methods, reinforcement learning does not require labeled data but rather improves decision-making capabilities through iterative feedback [36].

The optimization process begins with the interaction between the intelligent mold system and the knowledge graph. Based on the current state, the system chooses an action, typically selecting new triples that involve a specific head entity from the knowledge graph. A retrieval function or a random selection function returns a series of triples related to the selected head entity, and a transformation model then computes an attribute vector for each retrieved triple. These attribute vectors capture semantic associations between entities and relations and form the basis for the subsequent evaluation. The system assesses the relevance of new triples to known triples by calculating the similarity between their attribute vectors. If the similarity between a new triple and the known triples is sufficiently high, the system adds the new triple to the candidate set. Based on the evaluation results, the system may also issue a positive reward to reinforce the strategy used for future action selection. As the system continues to interact with the knowledge graph, the Q-learning update rule improves the accuracy and efficiency of action selection. This iterative process deepens the system's understanding of complex knowledge graph structures and, through continuous evaluation and optimization, improves the quality and utility of the knowledge graph, giving users more precise and efficient knowledge acquisition.
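The candidate-selection step described above can be sketched as follows. This is a minimal illustration only: cosine similarity, the threshold of 0.8, the reward of +1 per accepted triple, the function names, and the example triples and vectors are all assumptions made for the sketch, since the text does not specify the similarity measure or the reward values. The attribute vectors stand in for the output of the transformation model.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (assumed measure)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def evaluate_triples(new_triples, known_vectors, threshold=0.8):
    """Keep new triples whose attribute vector is close to any known one.

    new_triples:   list of (triple, attribute_vector) pairs
    known_vectors: attribute vectors of triples already in the graph
    Returns (candidate_set, total_reward); the reward scheme is hypothetical.
    """
    candidates = []
    reward = 0.0
    for triple, vector in new_triples:
        best = max(
            (cosine_similarity(vector, known) for known in known_vectors),
            default=0.0,
        )
        if best >= threshold:
            candidates.append(triple)
            reward += 1.0  # positive reward for a sufficiently similar triple
    return candidates, reward

# Hypothetical attribute vectors for two known and two new triples.
known = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
new = [
    (("mold_base", "has_part", "guide_pin"), [0.9, 0.1, 0.0]),
    (("mold_base", "has_part", "unrelated"), [0.0, 0.0, 1.0]),
]
candidates, reward = evaluate_triples(new, known)
```

With these inputs only the first triple passes the threshold, so it alone enters the candidate set and contributes to the reward.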

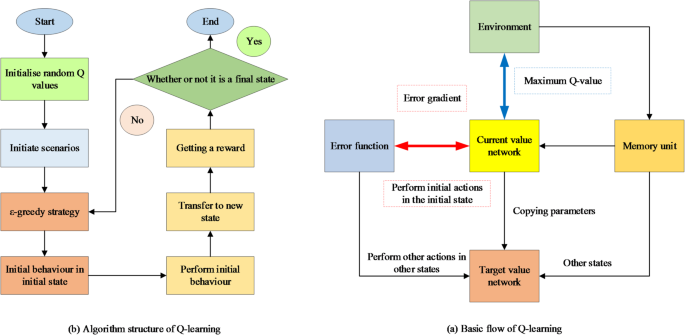

Q-learning is a classic reinforcement learning algorithm used to learn optimal policies for decision-making in unknown environments. Its core involves updating a state-action value function, known as the Q-value, to learn the optimal strategy. The basic process and algorithmic structure of Q-learning are depicted in Fig. 4 [37].

Fig. 4. Basic process and algorithm structure of Q-learning.

According to the Bellman equation, the Q-value update rule is shown in Eq. (3) [38]:

$$Q(n,m)\leftarrow Q(n,m)+\beta\left[\delta+\mu\max_{m'}Q(n',m')-Q(n,m)\right]$$

(3)

In Eq. (3), \(Q(n,m)\) represents the Q-value when action \(m\) is taken in state \(n\); \(\beta\) is the learning rate, controlling the magnitude of each update. \(\delta\) is the immediate reward obtained after taking action \(m\). \(\mu\) is the discount factor, measuring the importance of future rewards. \(n'\) is the next state transitioned to after taking action \(m\). \(\max_{m'}Q(n',m')\) denotes the maximum Q-value among all possible actions in state \(n'\).
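A single application of Eq. (3) can be sketched as a tabular update. The sketch below assumes a dictionary-backed Q-table initialized to zero, and the state labels, action names, and the values \(\beta=0.1\) and \(\mu=0.9\) are illustrative choices, not settings reported in this study.

```python
from collections import defaultdict

def q_update(Q, n, m, delta, n_next, actions, beta=0.1, mu=0.9):
    """Apply Eq. (3) once and return the updated Q(n, m).

    Q:       mapping (state, action) -> value, zero for unseen pairs
    delta:   immediate reward for taking action m in state n
    n_next:  state reached after the action
    actions: all actions available in n_next
    """
    best_next = max(Q[(n_next, a)] for a in actions)  # max over m' of Q(n', m')
    td_target = delta + mu * best_next
    Q[(n, m)] += beta * (td_target - Q[(n, m)])
    return Q[(n, m)]

# Hypothetical states and actions for illustration.
Q = defaultdict(float)
actions = ["add_triple", "skip"]
Q[("s1", "add_triple")] = 2.0

new_value = q_update(Q, "s0", "add_triple", delta=1.0, n_next="s1", actions=actions)
```

Here the target is \(1.0+0.9\times 2.0=2.8\), so the entry for `("s0", "add_triple")` moves from 0 to about 0.28, one tenth of the way toward the target.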

Data-driven system for mold design based on reinforcement learning knowledge graph recommendation algorithm

This section builds on the dynamic knowledge graph’s enhanced learning capabilities by introducing the LightGCN-Q recommendation algorithm. LightGCN-Q integrates the high-order feature propagation of graph convolutional network (GCN) with the real-time decision-making power of reinforcement learning. Together, these components form a closed-loop optimization system designed specifically for personalized mold production.

In mold design, geometric features represent key position constraints and design parameters. Through knowledge engineering, feature-driven parameters can be altered to achieve parametric modeling of parts, which covers feature selection, parametric modeling, and feature-centered storage of design knowledge. Based on the reinforcement learning knowledge graph recommendation algorithm, this study first uses the open application programming interface of the Nutanix nx platform to implement part modeling, ensuring the technical feasibility of the feature-driven knowledge modeling method. Design rules are then encoded in a programming language so that they are expressed precisely. Finally, changes to the key parameters of dedicated and associated modules are propagated through the model, driving the modeling process to generate dedicated modules that meet the requirements.

To further enhance the performance of the knowledge graph recommendation algorithm, this study integrates LightGCN into the core of the reinforcement learning system, optimizing the representation of user and module features and recommendation decisions. LightGCN is a lightweight GCN model specifically designed for recommendation systems to efficiently capture collaborative signals between users and items. Compared to traditional graph neural networks, LightGCN simplifies the model structure by removing unnecessary operations and retaining only the core neighbor information aggregation operation, significantly improving computational efficiency and representational ability. Its multi-layer nested graph convolution design can capture higher-order collaborative relationships between users and items in the interaction graph, enabling the recommendation system to achieve a balance between accuracy and efficiency.

In the mold digitization system, the interaction behavior between users and modules forms a sparse user-module interaction matrix. Based on this matrix, this study optimizes the construction of the user-module interaction graph using LightGCN, where the graph’s nodes include two categories: users and modules. The edges of the graph represent user operations on the modules, and the edge weights can be dynamically adjusted based on interaction frequency or importance. LightGCN aggregates neighborhood information of users and modules in the interaction graph through multiple layers of graph convolution, capturing higher-order collaborative signals. The specific calculation is as shown in Eqs. (4) and (5):

$$\mathbf{e}_u^{(l+1)}=\sum_{i\in\mathcal{N}_u}\frac{1}{\left|\mathcal{N}_u\right|}\mathbf{e}_i^{(l)}$$

(4)

$$\mathbf{e}_i^{(l+1)}=\sum_{u\in\mathcal{N}_i}\frac{1}{\left|\mathcal{N}_i\right|}\mathbf{e}_u^{(l)}$$

(5)

\(\mathbf{e}_u^{(l+1)}\) represents the embedding of user \(u\) at layer \(l+1\), and \(\mathbf{e}_i^{(l+1)}\) represents the embedding of module \(i\) at layer \(l+1\). \(\mathcal{N}_u\) represents the set of neighboring modules of user \(u\), and \(\mathcal{N}_i\) represents the set of neighboring users of module \(i\). \(\mathbf{e}_i^{(l)}\) and \(\mathbf{e}_u^{(l)}\) represent the embeddings of module \(i\) and user \(u\) at layer \(l\).

By stacking multiple layers of convolution, the representations of users and modules not only include information from direct neighbors but also capture second-order and higher-order collaborative signals.
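The propagation in Eqs. (4) and (5) can be sketched in plain Python as one averaging step per layer. The sketch follows the mean normalization written in those equations; the function name, the toy users and modules, and the initial embeddings are hypothetical, and a node without neighbors is assigned a zero vector as an assumption.

```python
def propagate(user_emb, module_emb, interactions):
    """One layer of Eqs. (4) and (5): each node averages its neighbors.

    user_emb, module_emb: dict id -> list of floats (layer l embeddings)
    interactions:         list of (user_id, module_id) edges
    Returns the layer l+1 embeddings as (new_user_emb, new_module_emb).
    """
    user_nbrs = {u: [] for u in user_emb}
    module_nbrs = {i: [] for i in module_emb}
    for u, i in interactions:
        user_nbrs[u].append(i)
        module_nbrs[i].append(u)

    dim = len(next(iter(user_emb.values())))

    def mean(vectors):
        if not vectors:
            return [0.0] * dim  # assumption: isolated nodes get a zero vector
        return [sum(column) / len(vectors) for column in zip(*vectors)]

    new_user = {u: mean([module_emb[i] for i in nbrs])
                for u, nbrs in user_nbrs.items()}
    new_module = {i: mean([user_emb[u] for u in nbrs])
                  for i, nbrs in module_nbrs.items()}
    return new_user, new_module

# Toy interaction graph: u1 used modules a and b, u2 used module b only.
users_l0 = {"u1": [1.0, 0.0], "u2": [0.0, 1.0]}
modules_l0 = {"a": [2.0, 0.0], "b": [0.0, 2.0]}
edges = [("u1", "a"), ("u1", "b"), ("u2", "b")]

users_l1, modules_l1 = propagate(users_l0, modules_l0, edges)
users_l2, modules_l2 = propagate(users_l1, modules_l1, edges)
```

After the second layer, the embedding of `u1` contains a contribution from `u2` through the shared module `b`, which is the second-order collaborative signal the stacking is meant to capture.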

In the reinforcement learning framework, the output of LightGCN is used as part of the state representation to improve the accuracy and generalization ability of policy decisions. The high-order user and module embeddings output by LightGCN, \(\mathbf{e}_u\) and \(\mathbf{e}_m\), are integrated into the state vector, and the state \(\mathbf{S}_t\) is calculated as shown in Eq. (6):

$$\mathbf{S}_t=\left[\mathbf{e}_u,\mathbf{e}_m,\mathbf{E}\right]$$

(6)

\(\mathbf{e}_u\) and \(\mathbf{e}_m\) are the final embedding representations of the user and module, which more precisely describe the latent relationship between user demands and module features. \(\mathbf{E}\) represents other modeling parameters.
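As a small illustration of Eq. (6), the state can be assembled by joining the three parts into one flat vector. Reading the brackets as concatenation is an assumption, as are the function name and the example values, including the two entries standing in for the other modeling parameters.

```python
def build_state(e_u, e_m, extra):
    """Assemble the state of Eq. (6), assuming plain concatenation."""
    return list(e_u) + list(e_m) + list(extra)

# Hypothetical embeddings and modeling parameters.
state = build_state(e_u=[0.75, 0.25], e_m=[0.5, 0.5], extra=[120.0, 3.0])
```

The resulting state has length equal to the sum of the three input lengths, here 6.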

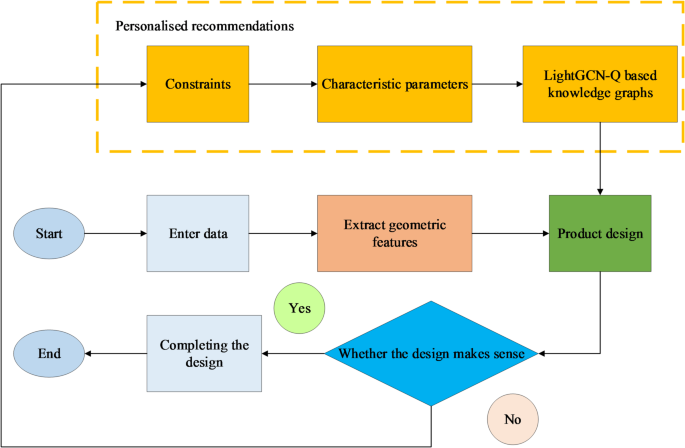

In the reinforcement learning algorithm, the core task of the policy network is to generate an action from the current state \(\mathbf{S}_t\). The action may be recommending a module or adjusting specific parameters to optimize the performance of the mold digitization system. Traditional policy networks might rely solely on simple historical behavior or static rules. With LightGCN integrated, the policy network aggregates collaborative signals from user and item nodes and so obtains richer, more accurate contextual information, which allows it to predict user preferences more accurately and select better actions. When the user accepts the chosen action, the system gives a positive reward, encouraging the network to learn similar behaviors. Conversely, when the recommended action is rejected or does not achieve the expected result, the system gives a negative reward to steer the policy network toward better decisions. Through this reward-based feedback, combined with update algorithms such as Q-learning, the policy network continuously adjusts its decision-making strategy and gradually improves the accuracy and efficiency of action selection. The collaborative signals from LightGCN also inform the reward mechanism, helping the system capture complex user behavior patterns and adapt to user demands, thereby optimizing the mold digitization system as a whole. The process of the mold digitization system under the LightGCN-optimized reinforcement learning knowledge graph recommendation algorithm is shown in Fig. 5.

Fig. 5. Process of mold digitization system under the knowledge graph recommendation algorithm based on LightGCN-Q.
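The accept/reject feedback described above can be sketched with a value table in place of the policy network. This is a deliberate simplification and not the method of this study: it uses a single state (so the next-state term of Eq. (3) drops out), epsilon-greedy selection, rewards of +1 and -1, and a simulated user who always prefers one module. The module names, the preferred module, the learning rate, and the exploration rate are all hypothetical.

```python
import random

def choose_module(q_values, epsilon, rng):
    """Epsilon-greedy selection over candidate modules."""
    names = sorted(q_values)  # fixed order so ties break deterministically
    if rng.random() < epsilon:
        return rng.choice(names)  # explore
    return max(names, key=q_values.get)  # exploit the best current estimate

def feedback_reward(accepted):
    """Positive reward when the user accepts, negative otherwise (assumed values)."""
    return 1.0 if accepted else -1.0

rng = random.Random(0)
q_values = {"ejector_module": 0.0, "cooling_module": 0.0, "slider_module": 0.0}
preferred = "slider_module"  # simulated user preference
beta = 0.2                   # learning rate

for _ in range(200):
    module = choose_module(q_values, epsilon=0.1, rng=rng)
    reward = feedback_reward(module == preferred)
    # Single-state form of the Q-learning update: move toward the reward.
    q_values[module] += beta * (reward - q_values[module])

best = max(q_values, key=q_values.get)
```

Rejected modules are pushed below zero while the accepted one rises toward 1, so the greedy choice settles on the module the simulated user prefers.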

Model prediction accuracy, F1 score, precision, and recall under the knowledge graph recommendation algorithm based on LightGCN-Q are calculated as shown in Eqs. (7)–(10):

$$\mathrm{Accuracy}=\frac{\mathrm{TP}+\mathrm{TN}}{\mathrm{TP}+\mathrm{TN}+\mathrm{FP}+\mathrm{FN}}$$

(7)

$$\mathrm{F1}=2\times\frac{\mathrm{Precision}\times\mathrm{Recall}}{\mathrm{Precision}+\mathrm{Recall}}$$

(8)

$$\mathrm{Precision}=\frac{\mathrm{TP}}{\mathrm{TP}+\mathrm{FP}}$$

(9)

$$\mathrm{Recall}=\frac{\mathrm{TP}}{\mathrm{TP}+\mathrm{FN}}$$

(10)

\(\mathrm{TP}\) is True Positive; \(\mathrm{TN}\) is True Negative; \(\mathrm{FP}\) is False Positive; \(\mathrm{FN}\) is False Negative.
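Eqs. (7)–(10) can be computed directly from the four confusion-matrix counts, as in the sketch below. The function name and the example counts are illustrative, and returning 0.0 when a denominator is zero is an assumed convention that the equations themselves do not define.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, and F1 from confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Hypothetical counts, not results from this study.
metrics = classification_metrics(tp=40, tn=45, fp=5, fn=10)
```

For these counts the accuracy is 0.85, the precision is 40/45, the recall is 0.8, and the F1 score is 80/95.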

The Area Under the Receiver Operating Characteristic Curve (AUC-ROC), mean absolute error (MAE), and root mean square error (RMSE) of the model under the knowledge graph recommendation algorithm based on LightGCN-Q are calculated as shown in Eqs. (11)–(13):

$$\mathrm{AUC}=\int_{0}^{1}\mathrm{TPR}(\mathrm{FPR})\,d\mathrm{FPR}$$

(11)

$$\mathrm{MAE}=\frac{1}{N}\sum_{i=1}^{N}\left|y_i-\hat{y}_i\right|$$

(12)

$$\mathrm{RMSE}=\sqrt{\frac{1}{N}\sum_{i=1}^{N}\left(y_i-\hat{y}_i\right)^{2}}$$

(13)

\(\mathrm{TPR}\) is True Positive Rate; \(\mathrm{FPR}\) is False Positive Rate; \(y_i\) is the actual value; \(\hat{y}_i\) is the predicted value; \(N\) is the total number of data points.
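Eqs. (11)–(13) can be sketched as follows. The integral in Eq. (11) is approximated here with the trapezoidal rule over a finite set of ROC points, which is a common numerical choice but an assumption of this sketch; the function names and the sample values are illustrative and are not results from this study.

```python
import math

def auc_trapezoid(fpr, tpr):
    """Approximate Eq. (11) by the trapezoidal rule.

    fpr, tpr: ROC points of equal length, sorted by increasing FPR.
    """
    area = 0.0
    for k in range(1, len(fpr)):
        width = fpr[k] - fpr[k - 1]
        area += width * (tpr[k] + tpr[k - 1]) / 2.0
    return area

def mae(y_true, y_pred):
    """Mean absolute error, Eq. (12)."""
    return sum(abs(y - p) for y, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root mean square error, Eq. (13)."""
    return math.sqrt(
        sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)
    )

# Hypothetical ROC points and predictions.
auc = auc_trapezoid(fpr=[0.0, 0.5, 1.0], tpr=[0.0, 1.0, 1.0])
y_true = [3.0, 5.0, 2.0]
y_pred = [2.0, 5.0, 4.0]
mae_value = mae(y_true, y_pred)
rmse_value = rmse(y_true, y_pred)
```

For these inputs the area is 0.75, the MAE is 1.0, and the RMSE is \(\sqrt{5/3}\), which is larger than the MAE because squaring weights the single error of 2 more heavily.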