Understanding how independent agents achieve coordination remains a central challenge in artificial intelligence, and recent research by Azusa Yamaguchi and colleagues at the University of Edinburgh sheds new light on the process. The team investigates fully independent reinforcement learning, running large-scale experiments to map the conditions under which cooperation emerges, fluctuates, or breaks down completely. Their experiments reveal a distinct three-phase structure: a coordinated stable phase, a fragile transition region, and a disordered phase, separated by sharp instability ridges. The finding indicates that emergent coordination in multi-agent systems behaves as a coherent phenomenon driven by the interaction of scale, density, and a previously unrecognized factor, kernel drift, and it points to general principles governing the behavior of these systems.

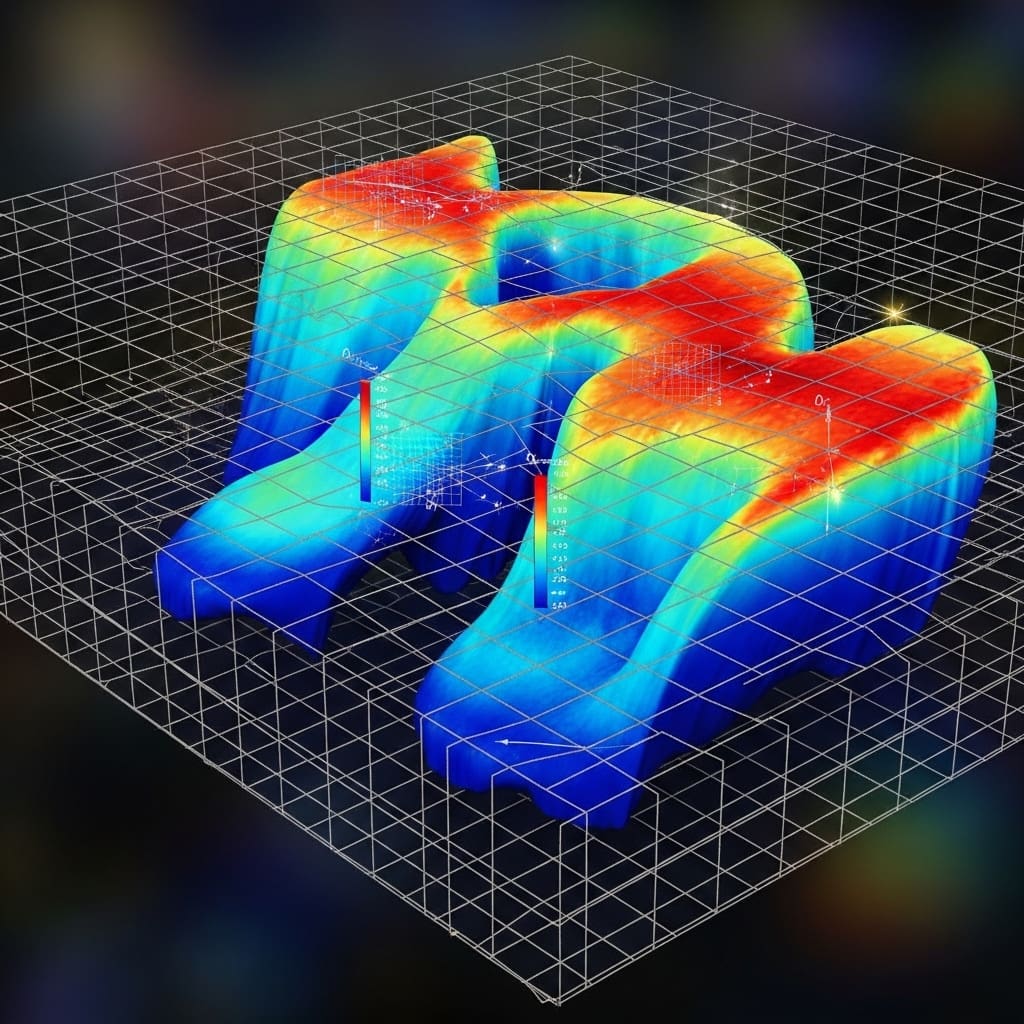

The team established a distributed testbed and ran large-scale experiments across environment sizes and agent densities. They constructed a phase map using stability metrics derived from cooperative success rates and learning errors, revealing three distinct regions: a coordinated stable phase, a fragile transition region, and a disordered phase. Sharp boundaries called instability ridges separate these regions and coincide with persistent kernel drift, the change in each agent's effective behavior over time caused by the learning of other agents. Synchronization analysis further showed that sustained cooperation requires temporal coordination and that drift undermines stability.
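To make the idea of a phase map concrete, here is a minimal sketch that labels each (environment size, agent density) condition from a cooperative success rate and a learning error. The article does not give the exact metric definitions or cut-offs, so the `stability_index` formula, the two thresholds, and the toy numbers are all assumptions for illustration, not the researchers' values.

```python
from typing import Dict, Tuple

# Hypothetical thresholds, chosen only for illustration (not from the paper).
STABLE_MIN = 0.8
DISORDERED_MAX = 0.4


def stability_index(learning_error: float) -> float:
    """Map a mean absolute learning error to (0, 1]; 1.0 means no error."""
    return 1.0 / (1.0 + abs(learning_error))


def classify_phase(success_rate: float, learning_error: float) -> str:
    """Label one experimental condition using the weaker of the two indicators."""
    score = min(success_rate, stability_index(learning_error))
    if score >= STABLE_MIN:
        return "coordinated"
    if score <= DISORDERED_MAX:
        return "disordered"
    return "fragile"


def phase_map(
    results: Dict[Tuple[int, float], Tuple[float, float]]
) -> Dict[Tuple[int, float], str]:
    """Turn {(size, density): (success_rate, learning_error)} into phase labels."""
    return {cond: classify_phase(sr, err) for cond, (sr, err) in results.items()}


# Toy measurements keyed by (environment size, agent density); invented data.
toy_results = {
    (8, 0.1): (0.95, 0.05),
    (16, 0.3): (0.60, 0.50),
    (32, 0.6): (0.20, 2.00),
}

for condition, label in phase_map(toy_results).items():
    print(condition, label)
```

In a sketch like this, an instability ridge would show up as the set of neighboring grid cells where the label changes abruptly.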

Breaking symmetry promotes the emergence of coordination

The study details how cooperative behavior emerges in multi-agent systems and how agents synchronize and coordinate under different conditions. It examines the effects of environment size and agent density, as well as what happens to system behavior when individual agent identities are removed. The results show that the system exhibits three distinct phases: a coordinated phase in which agents are well synchronized, a fragile phase that is subject to fluctuations, and a disordered phase in which coordination is minimal. Which phase appears depends strongly on environment size and agent density, with larger environments suppressing learning errors.

Importantly, removing agent identities dramatically changes the dynamics of the system: the coordinated, fragile, and disordered phases all disappear. The system becomes nearly homogeneous, eliminating the transitions observed when agents have distinguishing properties. This suggests that asymmetry between agents is essential to the observed behavior, including how learning errors and update noise develop. These findings highlight the importance of individual differences in shaping collective behavior.
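One way to picture the identity-removal experiment is as a change in what each agent observes. The sketch below is an assumption about how such an ablation could be encoded; the article does not describe the actual observation format, and `make_observation` and its parameters are hypothetical names.

```python
from typing import Hashable, Tuple


def make_observation(
    agent_id: int,
    local_view: Tuple[int, ...],
    include_identity: bool = True,
) -> Tuple[Hashable, ...]:
    """Build a hashable observation, optionally tagged with the agent's ID."""
    if include_identity:
        # With identity: two agents in the same situation see different keys.
        return (agent_id,) + tuple(local_view)
    # Without identity: agents in the same situation are indistinguishable.
    return tuple(local_view)


view = (0, 1, 0, 0)  # toy local neighborhood, invented for illustration
with_id = [make_observation(i, view) for i in (0, 1)]
without_id = [make_observation(i, view, include_identity=False) for i in (0, 1)]
print(with_id[0] != with_id[1], without_id[0] == without_id[1])
```

Dropping the identifier makes the agents symmetric by construction, which is the kind of homogeneity the paragraph above describes.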

The emergence, fluctuation, and collapse of coordination among agents

Through extensive experiments, the researchers built a comprehensive picture of how coordination arises, fluctuates, and breaks down in distributed multi-agent reinforcement learning systems. The study focused on completely independent learning and mapped the learning dynamics by systematically varying the size of the environment and the density of agents. The results demonstrated three distinct regions: a coordinated stable phase, a fragile transition region, and a disordered phase, revealing a coherent phase structure that governs emergent coordination. The research team characterized each experimental condition using stability metrics derived from cooperative success rates and learning errors.
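As a rough illustration of what completely independent learning means in practice, here is a minimal sketch of a tabular Q-learner that each agent could run on its own, with no shared parameters or communication. The article does not specify the algorithm or hyperparameters used, so the class name `IndependentQLearner` and the values of `alpha`, `gamma`, and `epsilon` are assumptions. The returned temporal-difference error stands in for the "learning error" mentioned above.

```python
import random
from collections import defaultdict


class IndependentQLearner:
    """One agent's private Q-table; other agents are just part of the environment."""

    def __init__(self, n_actions, alpha=0.1, gamma=0.95, epsilon=0.1, seed=None):
        self.n_actions = n_actions
        self.alpha = alpha      # learning rate (assumed value)
        self.gamma = gamma      # discount factor (assumed value)
        self.epsilon = epsilon  # exploration probability (assumed value)
        self.q = defaultdict(lambda: [0.0] * n_actions)
        self.rng = random.Random(seed)

    def act(self, obs):
        """Epsilon-greedy action selection over this agent's own Q-values."""
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(self.n_actions)
        values = self.q[obs]
        return max(range(self.n_actions), key=values.__getitem__)

    def update(self, obs, action, reward, next_obs, done):
        """Apply one Q-learning step and return the temporal-difference error."""
        bootstrap = 0.0 if done else self.gamma * max(self.q[next_obs])
        td_error = reward + bootstrap - self.q[obs][action]
        self.q[obs][action] += self.alpha * td_error
        return td_error


# Each agent owns a separate learner; nothing is shared between them.
agents = [IndependentQLearner(n_actions=4, seed=i) for i in range(3)]
```

Because every agent keeps updating its own table, each one effectively faces a moving environment, which is the setting in which the drift discussed in this article arises.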

Across conditions, both indicators were high at small scales and low densities, indicating that coordinated behavior can emerge even in the absence of central control. However, as either scale or density increased, both metrics collapsed rapidly, defining an "instability ridge" that corresponds to persistent kernel drift. The experiments show that as agent density increases, the cooperative success rate drops significantly and learning error rises, which suggests that congestion amplifies kernel drift and destabilizes learning. Further analysis showed that temporal synchronization is important for maintaining coordination and that poor synchronization is characteristic of the fragile transition region. Notably, removing the agent identifier eliminated kernel drift and collapsed the three-phase structure, showing that even small asymmetries between agents are a necessary ingredient for drift. These findings establish a clear link between scale, density, kernel drift, and the emergence of cooperative behavior.
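Kernel drift can be made tangible with a small estimator. The sketch below assumes one plausible way to quantify it: estimate an agent's empirical transition kernel in two time windows and average the total variation distance between them. The article does not say how the researchers measure drift, so this estimator and the names `empirical_kernel` and `kernel_drift` are hypothetical.

```python
from collections import Counter, defaultdict


def empirical_kernel(transitions):
    """Estimate P(next_obs | obs, action) from (obs, action, next_obs) triples."""
    counts = defaultdict(Counter)
    for obs, action, next_obs in transitions:
        counts[(obs, action)][next_obs] += 1
    kernel = {}
    for key, counter in counts.items():
        total = sum(counter.values())
        kernel[key] = {s: n / total for s, n in counter.items()}
    return kernel


def total_variation(p, q):
    """Total variation distance between two discrete distributions (dicts)."""
    support = set(p) | set(q)
    return 0.5 * sum(abs(p.get(s, 0.0) - q.get(s, 0.0)) for s in support)


def kernel_drift(window_a, window_b):
    """Mean total variation over (obs, action) pairs seen in both windows."""
    kernel_a = empirical_kernel(window_a)
    kernel_b = empirical_kernel(window_b)
    shared = set(kernel_a) & set(kernel_b)
    if not shared:
        return 0.0  # nothing comparable; zero is reported by convention here
    return sum(total_variation(kernel_a[k], kernel_b[k]) for k in shared) / len(shared)


# Toy data: early on, moving right always succeeds; later, the agent is
# blocked half the time (for example because other agents changed behavior).
early = [("s", "right", "t")] * 4
late = [("s", "right", "t")] * 2 + [("s", "right", "s")] * 2
print(kernel_drift(early, late))
```

Under this assumed measure, a drift near zero means the agent's world looks stationary, while persistently high values would match the instability ridge described above.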

Emergent coordination, fragility, and ridges of instability

The study shows that independent multi-agent reinforcement learning exhibits systematic patterns of emergent coordination, fragility, and breakdown in response to changes in agent density and environmental scale, despite the absence of centralized control. By combining a measure of cooperative success with a stability measure based on learning error, the scientists created a phase diagram that reveals three distinct regimes: a stable phase of coordination, a fragile region characterized by oscillating coordination, and a disordered phase in which coordination breaks down. A key finding is the identification of a "ridge of instability" that separates these regimes and marks shifts in learning dynamics caused by changes in the behavior of other agents. These observations suggest that coordination in multi-agent systems arises spontaneously from interactions rather than from an explicit coordination mechanism. Experiments that remove agent identifiers eliminate the observed phase structure, supporting the interpretation that small asymmetries between agents are an important driver of these dynamics. The researchers propose that kernel drift, the variation in agents' effective behavior, offers a unified view of instability in these systems and a basis for future stability analyses.

👉 More information

🗞 Emergent coordination and phase structure in independent multi-agent reinforcement learning

🧠ArXiv: https://arxiv.org/abs/2511.23315