We are excited to announce split cost allocation data support for accelerated workloads in Amazon Elastic Kubernetes Service (Amazon EKS). This expansion of split cost allocation data for EKS allows customers to track container-level resource costs for accelerator-powered workloads. Split cost allocation data now supports Trainium, Inferentia, and NVIDIA and AMD GPUs, complementing the existing CPU and memory cost tracking capabilities. This cost data is available in AWS Cost and Usage Reports (Legacy CUR and CUR 2.0) and gives organizations a unified view of cloud spending. The feature is now available in all AWS commercial Regions (except the China Regions) at no additional cost to customers.

Challenges in monitoring and allocating container costs for accelerated workloads

Organizations are increasingly running accelerator-powered workloads on Amazon EKS to power artificial intelligence (AI) applications, including machine learning (ML) and generative AI applications. These workloads typically run on multi-tenant clusters that host multiple application containers on shared Amazon Elastic Compute Cloud (Amazon EC2) instances, and the clusters serve multiple teams, departments, and environments. Accelerator resources are expensive and in high demand, so it is important to optimize their usage and maximize return on investment. Customers need detailed cost visibility and accountability to accurately allocate costs, set budgets, and promote efficient use of resources. Looking only at the CPU and memory of accelerated workloads gives an incomplete view of infrastructure usage and leads to misallocated costs. Customers want granular pod-level usage data for accelerator resources, alongside CPU and memory. Without it, they often rely on homegrown solutions or third-party products.

Get granular cost visibility for accelerated workloads running in EKS using split cost allocation data

Accelerator support in split cost allocation data for EKS gives customers a native AWS solution for visibility into the cost and usage of Kubernetes pods, based on the actual accelerator (Trainium, Inferentia, NVIDIA and AMD GPUs), CPU, and memory resources consumed by the pods. Customers can use cost allocation tags such as aws:eks:cluster-name, aws:eks:namespace, aws:eks:node, aws:eks:workload-type, aws:eks:workload-name, and aws:eks:deployment, which are automatically enabled for accelerator-powered pods, to get a unified view of the cost and usage of applications running in a shared multi-tenant environment. This feature allows customers to accurately allocate Inferentia, Trainium, and GPU costs to their respective cost centers, which promotes accountability for resource use and supports informed product prioritization decisions. Additionally, split cost allocation data helps customers identify unused compute resources and optimize cluster configuration and container reservations, minimizing inefficiencies. The feature also eliminates the need for customers to build their own custom cost management tools, which is resource intensive and adds operational overhead.

Customers running containerized machine learning workloads can opt in to split cost allocation data for Amazon EKS in the AWS Billing and Cost Management console. Once you opt in, split cost allocation data for EKS automatically scans the clusters across all accounts in your organization. It ingests the accelerator, CPU, and memory reservation data of the container workloads and prepares granular cost data for the current month. Split cost allocation data for EKS automatically calculates new split cost allocation metrics. For example, these include the GPU usage cost for each Kubernetes pod, taking into account the amortized Amazon EC2 cost and applicable discounts. Customers can use system-created Kubernetes primitives such as aws:eks:cluster-name, aws:eks:namespace, aws:eks:node, aws:eks:workload-type, aws:eks:workload-name, and aws:eks:deployment as cost allocation tags, which makes it easier to organize costs. Customers can analyze costs at hourly, daily, or monthly granularity and perform internal chargebacks using Cost and Usage Report data exports.

For a detailed explanation of how to enable split cost allocation data for EKS, see Understanding Split Cost Allocation Data.

How EKS split cost allocation works

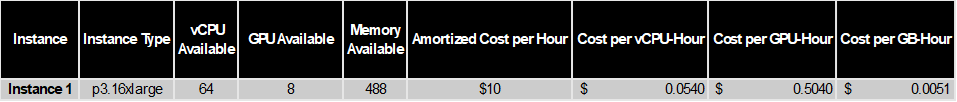

To use this feature, customers must first enable split cost allocation data. For customers who have already enabled split cost allocation data, this feature is enabled automatically. Split cost allocation data for EKS ingests accelerator, CPU, and memory reservations and utilization, and uses the greater of reservation and usage to calculate the amount of GPU, CPU, and memory allocated to each pod.

This concept is best explained by walking through an example. Suppose you have a single EC2 instance running four pods across two namespaces, and you want to understand the cost of each namespace. The EC2 instance is a p3.16xlarge with 8 GPUs, 64 vCPUs, and 488 GB of RAM. The total cost to run the instance is $10/hour, based on On-Demand rates. If a commitment such as a Savings Plan or Reserved Instance applies, the net amortized cost of the EC2 instance is used instead. Split cost allocation data normalizes the cost per resource using a relative GPU:(CPU and memory) ratio of 9:1. This means that one unit of GPU costs nine times as much as one unit of CPU and memory. CPU and memory are then assigned a weight of 9:1 relative to each other, which matches the current allocation weight. For non-accelerated EC2 instances, the current behavior is unchanged and the default CPU:memory weight continues to apply.

The calculation has four steps (see the documentation page for more detailed calculations):

Step #1 – Calculate unit cost

Based on the accelerator (Trainium, Inferentia, and GPU), CPU, and memory resources on the EC2 instance, we first calculate the unit cost per GPU-hour, vCPU-hour, and GB-hour using the ratios described above.

Table 1: Sample unit cost per GPU-hour, vCPU-hour, and GB-hour

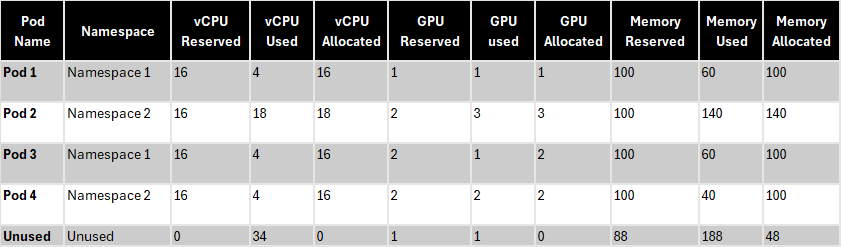
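The unit cost calculation can be sketched in a few lines of Python. This is a minimal illustration, not the service's implementation. The weights are an assumption based on one reading of the ratios above: a GPU weight of 9, and a combined CPU-and-memory weight of 1 that is split 9:1 into 0.9 for CPU and 0.1 for memory. The function and variable names are hypothetical.

```python
# Sketch of Step #1 under assumed weights (not an official formula):
# GPU weight 9; CPU and memory share a weight of 1, split 9:1 into 0.9 and 0.1.
HOURLY_INSTANCE_COST = 10.0  # USD per hour, from the example
CAPACITY = {"gpu": 8, "vcpu": 64, "memory_gb": 488}  # p3.16xlarge
WEIGHTS = {"gpu": 9.0, "vcpu": 0.9, "memory_gb": 0.1}  # assumed


def unit_costs(hourly_cost, capacity, weights):
    """Return the cost of one resource-hour for each resource type."""
    # Total number of weighted resource units on the instance.
    weighted_units = sum(weights[r] * capacity[r] for r in capacity)
    base_unit_cost = hourly_cost / weighted_units
    return {r: weights[r] * base_unit_cost for r in capacity}


costs = unit_costs(HOURLY_INSTANCE_COST, CAPACITY, WEIGHTS)
for resource, cost in costs.items():
    print(f"{resource}: ${cost:.4f} per hour")
```

With these assumed weights, the per-resource costs add back up to the $10 hourly instance cost, and 48 GB of memory costs about $0.27 per hour, which agrees with the unused instance cost quoted in Step #4.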

Step #2 – Calculate allocated and unused capacity

The following table lists the GPU, vCPU, and memory requests and actual usage across the four Kubernetes pods. Pod 2 used more GPU, CPU, and memory than it requested because it did not configure limits. Split cost allocation data calculates the allocated GPU, vCPU, and memory as the greater of the requested amount and the actual usage. In this example, there are no unused vCPUs or GPUs, but there are 48 GB of unused memory.

Table 2: Calculate allocated and unused capacity

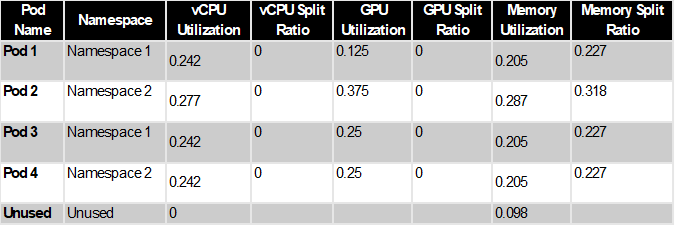
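As a rough sketch of this step, the allocation rule is a maximum over requested and used amounts, and the unused capacity is whatever the instance has left after all pods are accounted for. The pod figures below are hypothetical: they are chosen only to agree with the two values stated in the text (Pod 1 is allocated 100 GB and 48 GB of memory is unused) and are not copied from Table 2. The class and function names are also illustrative.

```python
from dataclasses import dataclass


@dataclass
class PodResource:
    """Requested and actually used amount of one resource for one pod."""
    requested: float
    used: float


def allocated(pod):
    """Allocated amount is the greater of the request and the actual usage."""
    return max(pod.requested, pod.used)


def unused_capacity(instance_capacity, pods):
    """Capacity on the instance that is not allocated to any pod."""
    return max(instance_capacity - sum(allocated(p) for p in pods), 0.0)


# Hypothetical memory figures in GB for four pods (not taken from Table 2).
memory = [
    PodResource(requested=100, used=80),   # pod 1: allocated 100
    PodResource(requested=120, used=140),  # pod 2: used more than requested
    PodResource(requested=100, used=90),   # pod 3
    PodResource(requested=100, used=100),  # pod 4
]

print([allocated(p) for p in memory])
print(unused_capacity(488, memory))
```

Taking the maximum means a pod without limits, such as Pod 2 here, is charged for what it actually consumed rather than for its smaller request.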

Step #3 – Calculate split usage and unused ratios

Split cost allocation data calculates the split usage ratio as the GPU, CPU, or memory allocated to the Kubernetes pod divided by the total GPU, CPU, or memory available on the EC2 instance. It also calculates the unused ratio as the GPU, CPU, or memory allocated to the Kubernetes pod divided by the total GPU, CPU, or memory allocated on the EC2 instance (that is, excluding the unallocated GPU, CPU, or memory on the instance). For example, the instance has 48 GB of unallocated memory, which is 48/488 = 0.098 of total instance memory. Pod 1 has been allocated 100 GB, which is 100/488 = 0.205 of the total memory of the instance. Therefore, the unused memory ratio for Pod 1 is 0.205/(1 - 0.098) = 0.227.
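The memory arithmetic for Pod 1 can be checked with a short Python sketch. It uses only the figures given in the example (488 GB on the instance, 48 GB unallocated, 100 GB allocated to Pod 1); the variable names are illustrative.

```python
INSTANCE_MEMORY_GB = 488     # total memory on the p3.16xlarge
UNALLOCATED_MEMORY_GB = 48   # memory not allocated to any pod
POD1_ALLOCATED_GB = 100      # memory allocated to Pod 1

# Share of the instance memory that no pod has been allocated.
instance_unused_ratio = UNALLOCATED_MEMORY_GB / INSTANCE_MEMORY_GB

# Pod 1's share of the total instance memory.
pod1_split_usage_ratio = POD1_ALLOCATED_GB / INSTANCE_MEMORY_GB

# Pod 1's share of the allocated memory only, used to spread unused cost.
pod1_unused_ratio = pod1_split_usage_ratio / (1 - instance_unused_ratio)

print(f"instance unused ratio: {instance_unused_ratio:.3f}")
print(f"pod 1 split usage ratio: {pod1_split_usage_ratio:.3f}")
print(f"pod 1 unused ratio: {pod1_unused_ratio:.3f}")
```

The unused ratio is equivalent to dividing Pod 1's 100 GB by the 440 GB that is allocated across all pods, so the unused ratios of all pods on the instance sum to 1.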

Table 3: Calculate usage rate

Step #4 – Calculate split and unused costs

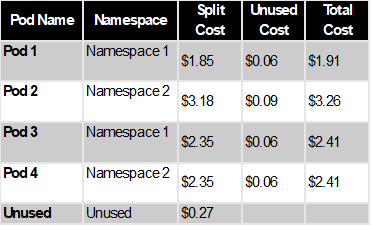

Pod-level split costs are calculated as the split usage multiplied by the cost per GPU-hour, vCPU-hour, and GB-hour. If there are unused resources (in this case, 48 GB of unused memory), the unused instance cost ($0.27) is distributed proportionally to each pod based on the unused ratio calculated for that pod. The total allocated cost for each pod is the sum of its split cost and its proportionally redistributed unused cost. Once the EC2 cost is available at the pod level, you can calculate aggregate namespace-level costs. In this example, the total allocated costs for namespace 1 and namespace 2 are $4.32 and $5.67, respectively. You can also aggregate costs using cost categories or the new EKS cost allocation tags added to Kubernetes pods, so that costs can be calculated at the desired business entity level.
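To make the last step concrete, the following Python sketch computes the memory portion of Pod 1's cost. It is an illustration under stated assumptions rather than the service's implementation: the cost per GB-hour is derived from the assumed weights GPU = 9, CPU = 0.9, memory = 0.1 (one reading of the 9:1 ratios in the example), and the function name is hypothetical. The GPU and vCPU portions would follow the same pattern with their own unit costs.

```python
# Assumed weights (not an official formula): GPU 9, vCPU 0.9, memory 0.1.
HOURLY_INSTANCE_COST = 10.0
WEIGHTED_UNITS = 9 * 8 + 0.9 * 64 + 0.1 * 488   # weighted units on a p3.16xlarge
COST_PER_GB_HOUR = 0.1 * HOURLY_INSTANCE_COST / WEIGHTED_UNITS


def pod_resource_cost(allocated, unit_cost, unused_ratio, unused_instance_cost):
    """Return (split cost, redistributed unused cost, total) for one resource."""
    split_cost = allocated * unit_cost
    unused_cost = unused_ratio * unused_instance_cost
    return split_cost, unused_cost, split_cost + unused_cost


# Memory figures from the example: 48 GB unused, Pod 1 allocated 100 GB of
# the 440 GB that is allocated across all pods.
unused_memory_cost = 48 * COST_PER_GB_HOUR
pod1_unused_ratio = 100 / (488 - 48)

split, unused, total = pod_resource_cost(
    allocated=100,
    unit_cost=COST_PER_GB_HOUR,
    unused_ratio=pod1_unused_ratio,
    unused_instance_cost=unused_memory_cost,
)
print(f"unused memory cost on the instance: ${unused_memory_cost:.2f}")
print(f"pod 1 memory: split ${split:.4f} + unused ${unused:.4f} = ${total:.4f}")
```

Because the unused cost is spread by each pod's share of the allocated memory, the pod totals add up to the full memory cost of the instance, and summing pod totals per namespace gives the namespace-level figures.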

Table 4: Calculate split and unused costs

What are the new Cost and Usage Report columns?

Existing split cost allocation data users will not see new columns, because accelerator support reuses the current column structure. New customers who enable this feature will see Kubernetes pod-level metrics in their CUR reports in columns such as “splitLineItem/SplitUsage”, which shows the GPU, vCPU, or memory allocated to a pod for a specific period. For a comprehensive description of all related columns and their definitions, see the CUR Data Dictionary.

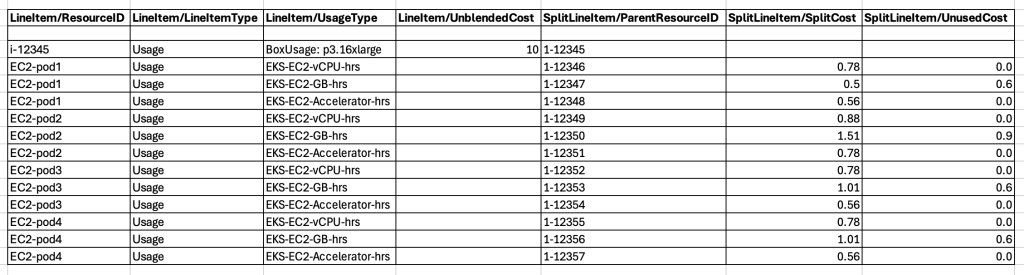

To conclude this example, here is a demo CUR report showing how the data is displayed in the new CUR columns.

Figure 1: Demo CUR report showing new columns.

You can also visualize EKS costs in Amazon QuickSight using the Containers Cost Allocation dashboard, and query EKS costs with Amazon Athena using the CUR Query Library.

Conclusion

Visibility into EKS cost data provides insights that help improve efficiency and optimize costs. Enable this granular cost data today and learn more about this feature.