This section presents the results of the proposed methodology, which combines machine learning (ML) and deep learning (DL) algorithms with feature engineering techniques to distinguish AI-generated from human-generated text. The findings highlight both the capabilities and the limitations of models that rely on textual patterns for classification. Beyond demonstrating the robustness and adaptability of the models on complex text data, the results provide a strong baseline for future research on detecting and classifying text in real-world applications.

Exploratory data analysis

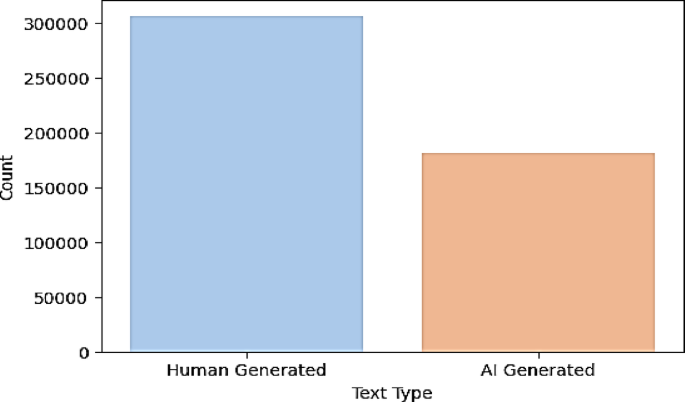

Figure 4, the class distribution bar chart, shows that the dataset is imbalanced: human-generated texts (over 300k) outnumber AI-generated texts (approx. 180k). This imbalance can bias classifiers toward the majority class (human-generated content), so strategies such as class weight balancing or resampling are important for training unbiased models.
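A minimal sketch of class weight balancing, assuming the common "balanced" heuristic (the rule scikit-learn applies for `class_weight="balanced"`), gives each class c the weight n_samples / (n_classes × n_c). The class sizes below are rounded assumptions read from Fig. 4, not exact dataset counts.

```python
def balanced_class_weights(counts: dict[str, int]) -> dict[str, float]:
    """Weight each class by n_samples / (n_classes * n_c)."""
    n_samples = sum(counts.values())
    n_classes = len(counts)
    return {label: n_samples / (n_classes * n) for label, n in counts.items()}


# Rounded, assumed class sizes taken from the class distribution chart.
counts = {"human": 300_000, "ai": 180_000}
weights = balanced_class_weights(counts)
for label, w in weights.items():
    print(f"{label}: {w:.3f}")
```

With these weights each class contributes the same total weight to the loss (300,000 × 0.8 = 180,000 × 1.333 ≈ 240,000), so the minority AI class is not drowned out. Resampling (undersampling human texts or oversampling AI texts) is the alternative strategy.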

Dataset Class Distribution.

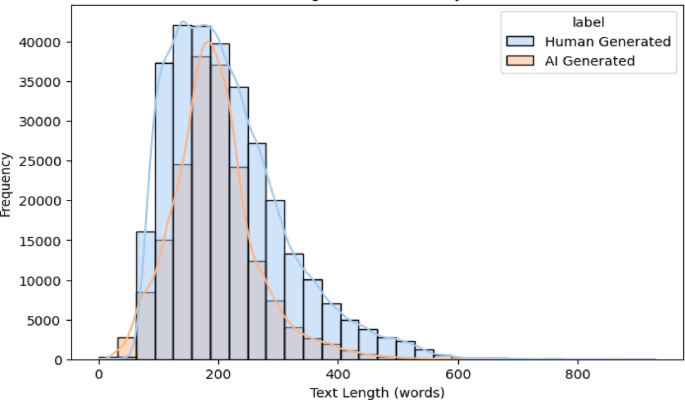

The text length histogram in Fig. 5 illustrates how AI-generated and human-generated texts are similar in length and how they differ. Both distributions cover moderate lengths and are centered at approximately 200 words, so the two classes largely overlap in typical length. Nevertheless, there are slight differences: the AI-generated distribution is narrower and decays more rapidly toward longer lengths, suggesting that AI-generated texts are more consistent in length and are less often very long. Such length-based insights suggest that future models could include length as a discriminative feature for classification.
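To make the length comparison concrete, the sketch below summarizes word counts per class. Whitespace tokenization and the toy texts are assumptions for illustration only; the "ai" group has the same center as the "human" group but a smaller spread, mimicking the narrower AI-generated distribution described above.

```python
import statistics


def word_count(text: str) -> int:
    # Whitespace tokenization is an assumption; the study's tokenizer is not specified.
    return len(text.split())


def length_summary(texts: list[str]) -> dict[str, float]:
    """Center and spread of word counts for one class."""
    lengths = [word_count(t) for t in texts]
    return {
        "mean": statistics.mean(lengths),
        "median": statistics.median(lengths),
        "stdev": statistics.pstdev(lengths),  # population standard deviation
    }


# Hypothetical toy samples, not drawn from the dataset.
samples = {
    "ai": ["one two three four", "one two three four five"],
    "human": ["one two", "one two three four five six seven"],
}
for label, texts in samples.items():
    print(label, length_summary(texts))
```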

Text length distribution by class.

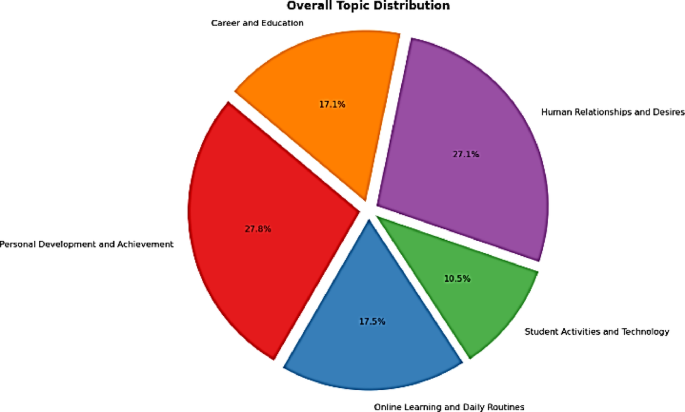

The topic distribution pie chart in Fig. 6 shows the diversity of textual topics, which include categories such as human relationships (27.1%), personal development (27.8%), and career and education (17.1%). Because the trained model is exposed to different thematic areas, its ability to classify text authenticity is not confined to a single domain, which supports generalization across domains.

Topic Modeling Distribution.

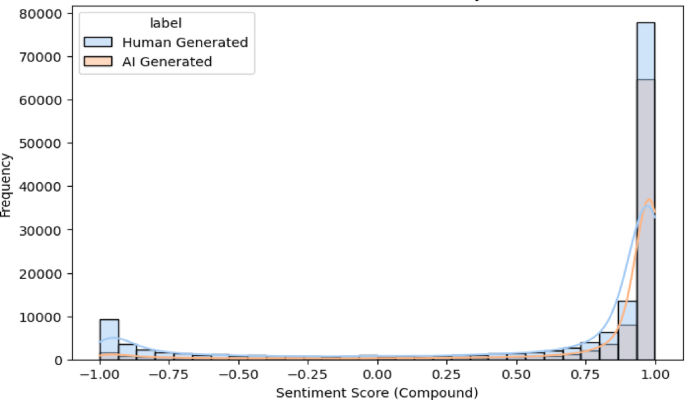

Figure 7 shows the compound sentiment scores of AI-generated and human-generated texts, the first text characteristic we examine. The compound score measures emotional tone on a scale from negative (−1) through neutral (0) to positive (+1). Both classes are skewed strongly toward the positive end, indicating that most texts in both classes express affirmative or positive sentiment. However, the classes differ in spread: human-written content shows a wider range of sentiment, including a relatively higher fraction of negative and occasionally very negative scores, whereas AI-generated texts cluster tightly around positive values. This homogeneity in AI-generated text likely reflects predictable emotional and stylistic patterns, which provide subtle but useful features for advanced detection models. These differences imply that sentiment features are meaningful inputs for improving model accuracy and could complement the transformer's attention mechanism. Although the two sentiment distributions overlap substantially, they provide complementary signal that may contribute to the strong predictive capability of the transformer model.
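The section does not name its sentiment tool. Assuming a VADER-style analyzer (whose output includes a "compound" score), the compound value is obtained by summing lexicon valences and squashing the sum into [−1, 1] with x / √(x² + α), where VADER uses α = 15. The sketch reproduces only that normalization step; the raw valence sums are hypothetical.

```python
import math


def normalize_compound(raw_sum: float, alpha: float = 15.0) -> float:
    """Squash an unbounded valence sum into [-1, 1] (VADER-style normalization)."""
    score = raw_sum / math.sqrt(raw_sum * raw_sum + alpha)
    return max(-1.0, min(1.0, score))


# Hypothetical summed lexicon valences for four texts.
for raw in (-2.0, 0.0, 1.0, 6.0):
    print(f"raw {raw:+.1f} -> compound {normalize_compound(raw):+.3f}")
```

Because the function saturates quickly, texts containing many mildly positive words can land close to +1, which may be one reason compound scores pile up at the positive end.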

Sentiment Score Distribution by Class.

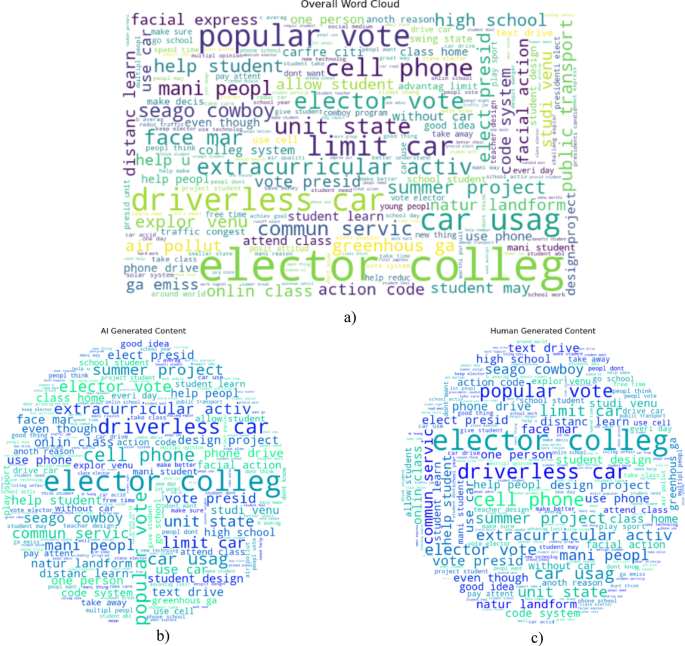

The word clouds in Fig. 8 provide further insight, both for the dataset as a whole and separately for AI-generated and human-generated texts. Prominent terms across all samples include "electoral college," "cell phone," "driverless car," "extracurricular activities," and "popular vote," indicating shared thematic content. Notably, AI-generated and human-generated texts overlap heavily in vocabulary, which makes it difficult for simpler models to separate them. Nonetheless, differences in term frequency and structure likely remain, and these enable the proposed transformer-based model, with its attention mechanism, to distinguish the classes well. Taken together, these exploratory analyses reveal the dataset's complexity, class imbalance, and thematic variety, underlining the importance of a balanced sampling strategy and proper feature engineering. They also motivate the use of sophisticated transformer models that can capture the subtle semantic and contextual patterns needed for high-accuracy identification of AI-generated text.
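Vocabulary overlap of the kind the word clouds show can be quantified. The sketch below counts the most frequent non-stop-word terms (what a word cloud enlarges) and measures overlap between class vocabularies with the Jaccard index. The stop-word list and the toy texts are hypothetical.

```python
import re
from collections import Counter

# Tiny illustrative stop-word list (an assumption, not the study's list).
STOP_WORDS = {"the", "a", "an", "and", "is", "of", "to"}


def tokenize(text: str) -> list[str]:
    return [t for t in re.findall(r"[a-z']+", text.lower()) if t not in STOP_WORDS]


def top_terms(texts: list[str], k: int = 5) -> list[tuple[str, int]]:
    """Most frequent non-stop-word terms, i.e. what a word cloud enlarges."""
    counts = Counter(t for text in texts for t in tokenize(text))
    return counts.most_common(k)


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical toy samples echoing the dataset's topics.
ai_texts = ["The electoral college is fair", "Driverless cars are safe"]
human_texts = ["I think the electoral college is unfair", "Cars and phones"]

ai_vocab = {t for text in ai_texts for t in tokenize(text)}
human_vocab = {t for text in human_texts for t in tokenize(text)}
print(top_terms(ai_texts + human_texts, k=3))
print(f"vocabulary overlap (Jaccard): {jaccard(ai_vocab, human_vocab):.2f}")
```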

Most frequent words in the dataset: (a) overall word cloud, (b) AI-generated content, (c) human-generated content.

Results with textual features

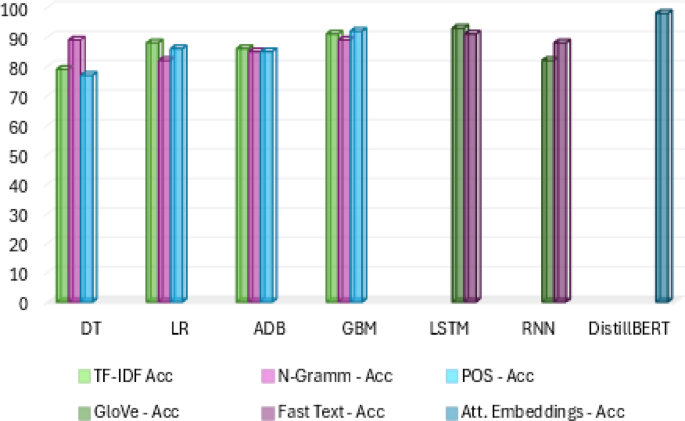

Table 3 compares shallow machine learning models (decision tree and logistic regression) and ensemble models (AdaBoost and GBM) trained on different textual representations (TF-IDF, POS tagging, and N-Gram) for distinguishing human-generated from AI-generated text. Among the shallow models, logistic regression outperforms the decision tree in accuracy across feature types. With TF-IDF features, logistic regression reached 88% accuracy versus 79% for the decision tree, which suggests that the relationship between TF-IDF features and labels is largely linear and well captured by logistic regression. This indicates that logistic regression is robust and appropriate for textual data, especially when the features are sparse and high-dimensional, as is typical of TF-IDF vectorization. However, the decision tree performed markedly better with N-Gram features (approximately 89% accuracy) than with TF-IDF and POS features (approximately 77% to 79%), indicating that it is more sensitive to sequential textual patterns than to term weighting or syntactic structure.
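The study does not state its TF-IDF settings. As a sketch under that caveat, the code below computes TF-IDF with raw term counts and the smoothed inverse document frequency idf(t) = ln((1 + n) / (1 + df(t))) + 1 that scikit-learn's `TfidfVectorizer` uses by default, followed by L2 normalization of each document vector. The two token lists are hypothetical.

```python
import math
from collections import Counter


def tfidf(docs: list[list[str]]) -> list[dict[str, float]]:
    """L2-normalized TF-IDF vectors with smoothed idf, one dict per document."""
    n = len(docs)
    df = Counter(term for doc in docs for term in set(doc))  # document frequency
    idf = {t: math.log((1 + n) / (1 + d)) + 1 for t, d in df.items()}
    vectors = []
    for doc in docs:
        tf = Counter(doc)
        vec = {t: c * idf[t] for t, c in tf.items()}
        norm = math.sqrt(sum(v * v for v in vec.values()))
        vectors.append({t: v / norm for t, v in vec.items()} if norm else vec)
    return vectors


docs = [["electoral", "college", "essay"], ["driverless", "car", "essay"]]
vecs = tfidf(docs)
print({t: round(w, 3) for t, w in vecs[0].items()})
```

The shared term "essay" gets a lower weight than the terms that appear in only one document, which is the property that lets TF-IDF emphasize rare, discriminative vocabulary.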

Furthermore, the ensemble models, which aggregate many weak learners instead of relying on a single classifier, outperformed the shallow models in detecting textual authenticity. GBM showed the strongest results overall, most notably with TF-IDF features: 91% accuracy, 93% precision, 90% recall, and 91% F1-score. These metrics suggest that GBM benefits from TF-IDF weighting, which emphasizes rare, discriminative terms and thus helps detect the subtle patterns indicating whether a text was written by a person or generated by AI. GBM also performed well with POS (92% accuracy) and N-Gram (89% accuracy) features, showing versatility and robustness across syntax-based (POS) and sequence-based (N-Gram) representations. AdaBoost produced consistent results across feature sets (approximately 85% accuracy, precision, recall, and F1-score), showing little sensitivity to the choice of features. However, it did not match GBM's performance, possibly because its iterative reweighting strategy is sensitive to noisy or less discriminative features. Among the feature types, TF-IDF proved the most effective, particularly for extracting distinctive terms that reliably indicate textual origin (AI vs. human).
Our analyses also revealed that N-Gram features, which capture sequential linguistic patterns, performed well across variations in language use, whereas POS tagging performed somewhat worse but stably, and may therefore serve mainly as supplementary information alongside stronger features.
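The sequential patterns that N-Gram features capture are contiguous word windows. The sketch below extracts them and counts all n-grams in a range, similar in spirit to scikit-learn's `ngram_range` option; the range (1, 2) and the sample sentence are assumptions, since the study does not report its n range.

```python
from collections import Counter


def word_ngrams(tokens: list[str], n: int) -> list[tuple[str, ...]]:
    """All contiguous n-token windows, in order."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def ngram_counts(tokens: list[str], n_min: int = 1, n_max: int = 2) -> Counter:
    """Counts of all n-grams with n_min <= n <= n_max, joined by spaces."""
    counts = Counter()
    for n in range(n_min, n_max + 1):
        counts.update(" ".join(g) for g in word_ngrams(tokens, n))
    return counts


tokens = "the electoral college is the electoral college".split()
print(word_ngrams(tokens, 2)[:3])
print(ngram_counts(tokens).most_common(3))
```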

Overall, these findings show that the ensemble models have superior predictive capacity, especially GBM with TF-IDF features, for determining whether a text is generated by AI. The extensive evaluation supports the conclusion that ensemble approaches, particularly gradient boosting, exploit textual features better than shallow models. These results therefore support the practical use of such models for authenticating textual sources, and they point future studies toward richer representations of linguistic and statistical features to further improve detection.
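AdaBoost's sensitivity to noisy samples follows from its reweighting rule. In the standard discrete AdaBoost formulation (labels in {−1, +1}), a weak learner with weighted error ε receives the vote α = ½ ln((1 − ε)/ε), and each sample weight is multiplied by exp(−α·y·h(x)) and renormalized, so misclassified samples gain weight every round. The sketch shows a single round on hypothetical predictions; it is not the implementation used in the study.

```python
import math


def adaboost_round(weights, y_true, y_pred):
    """One discrete AdaBoost update; labels are -1 or +1, weights sum to 1."""
    err = sum(w for w, y, p in zip(weights, y_true, y_pred) if y != p)
    err = min(max(err, 1e-12), 1 - 1e-12)  # avoid log(0) for perfect/useless learners
    alpha = 0.5 * math.log((1 - err) / err)
    # Correct predictions (y * p = +1) shrink, mistakes (y * p = -1) grow.
    updated = [w * math.exp(-alpha * y * p) for w, y, p in zip(weights, y_true, y_pred)]
    total = sum(updated)
    return alpha, [w / total for w in updated]


# Hypothetical round: four equally weighted texts, the last one misclassified.
y_true = [1, 1, -1, -1]
y_pred = [1, 1, -1, 1]
alpha, new_weights = adaboost_round([0.25] * 4, y_true, y_pred)
print(round(alpha, 3), [round(w, 3) for w in new_weights])
```

If that last text were actually mislabeled or noisy, it would keep gaining weight in later rounds. GBM instead fits each new learner to the gradient of a loss function and does not reweight samples this way, which is one plausible reason for the gap between the two.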

Results with deep features

Table 4 presents the accuracy, precision, recall, and F1-score of two deep learning models (LSTM and RNN), each combined with two embedding methods (FastText and GloVe), for classifying texts as human-generated or AI-generated.
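For reference, the four reported metrics follow from the confusion-matrix counts. The sketch treats AI-generated text as the positive class (an assumption; the section does not say which class is positive). Because F1 is the harmonic mean of precision and recall, it always lies between the two. The counts in the usage line are hypothetical.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Accuracy, precision, recall, and F1 from binary confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # Harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts: 8 AI texts caught, 2 human texts flagged, 4 AI texts missed.
print(classification_metrics(tp=8, fp=2, fn=4, tn=6))
```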

The results show that LSTM outperformed RNN with both embedding techniques, likely because LSTMs have stronger sequential memory and context retention, which help discriminate between AI-generated and human-generated text. LSTM with GloVe embeddings performed best, with the highest accuracy (93%), precision (94%), and F1-score (92%), and a recall of 90%. GloVe embeddings encode global semantic information in a form that allows the LSTM to distinguish the subtle textual patterns characterizing human versus AI-generated content. LSTM also performed robustly with FastText embeddings (accuracy 89%, precision 91%, recall 90%, F1-score 91%) but was less effective than with GloVe. FastText embeddings extend word vectors with subword-level information and can therefore capture morphological variation, which is common in human writing, as well as the repetitive patterns of AI-generated content; this gives them strong predictive ability, although slightly lower than GloVe in this setting. The RNN with GloVe embeddings showed reasonable predictive power but the lowest accuracy of all combinations (82%). Its performance improved considerably with FastText embeddings (88% accuracy), which suggests that the subword information in FastText compensates for the limited context modeling of a simple recurrent architecture better than global semantic embeddings (GloVe) do.
An important inference from this comparison is that the LSTM's predictive advantage stems from its gated mechanisms and memory cells, which handle long-range textual dependencies and complex linguistic patterns. The results further suggest that semantic contextual information is central to this advantage, since GloVe-based LSTM models discriminate subtle variations in authorship best. FastText, despite slightly lower performance, supports the view that morphological and subword information is also valuable for text classification.
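The subword information mentioned above comes from character n-grams. FastText represents a word as the sum of vectors for the word itself and its character n-grams, with boundary symbols `<` and `>` added; the original FastText setting uses n from 3 to 6. The function below extracts those n-grams only; hashing, the separate whole-word token, and vector lookup are omitted.

```python
def char_ngrams(word: str, n_min: int = 3, n_max: int = 6) -> list[str]:
    """Character n-grams of a word padded with FastText-style boundary symbols."""
    padded = f"<{word}>"
    grams = []
    for n in range(n_min, n_max + 1):
        for i in range(len(padded) - n + 1):
            grams.append(padded[i:i + n])
    return grams


print(char_ngrams("where", 3, 3))
# Related word forms share subwords, so their vectors end up close together.
print(set(char_ngrams("writing", 3, 3)) & set(char_ngrams("written", 3, 3)))
```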

These findings indicate that LSTM-based models with semantic embeddings (GloVe) are strong and appropriate for distinguishing AI-generated from human-written content. The evaluation confirms that deep learning models can exploit complex language patterns and suggests their potential to reach high accuracy in textual authenticity assessment in real-world settings.
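For reference, the gated mechanism and memory cell credited for the LSTM's advantage can be written in the standard LSTM formulation (one common variant; the exact cell used in the study is not specified). Here \(x_t\) is the input embedding at step \(t\) (e.g., a GloVe or FastText vector), \(h_t\) the hidden state, \(c_t\) the memory cell, \(\sigma\) the logistic sigmoid, and \(\odot\) the element-wise product:

```latex
\begin{aligned}
i_t &= \sigma(W_i x_t + U_i h_{t-1} + b_i) \\
f_t &= \sigma(W_f x_t + U_f h_{t-1} + b_f) \\
o_t &= \sigma(W_o x_t + U_o h_{t-1} + b_o) \\
\tilde{c}_t &= \tanh(W_c x_t + U_c h_{t-1} + b_c) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
```

By contrast, a simple RNN updates \(h_t = \tanh(W x_t + U h_{t-1} + b)\) with no cell state. The additive path through \(c_t\), scaled by the forget gate \(f_t\), is what lets the LSTM carry information across long spans of text.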

Results with the transformer-based model

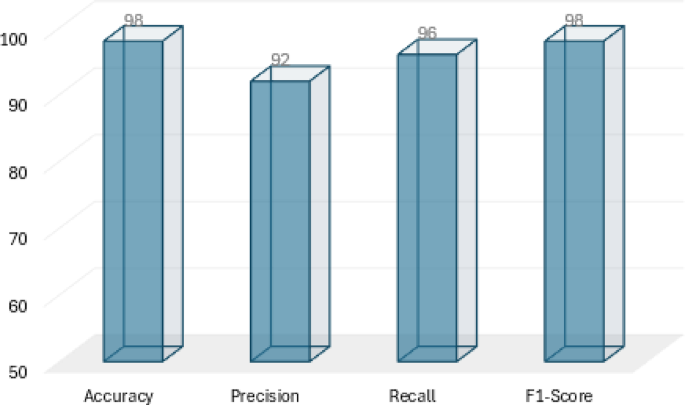

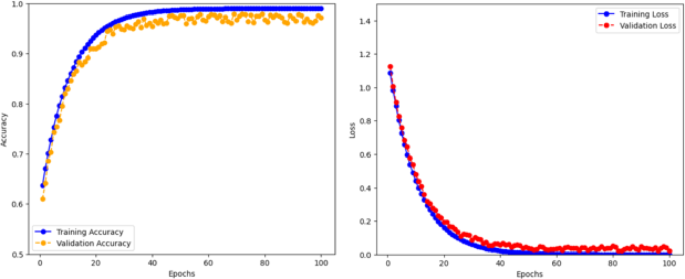

The transformer-based model DistilBERT performed well at differentiating AI-generated from human-generated text. As shown in Fig. 9, it achieved an accuracy of 0.98, a precision of 0.92, a recall of 0.96, and an F1-score of 0.98. These results indicate that the model captures the detailed semantic and contextual information needed for the classification.

DistilBERT Performance Analysis.

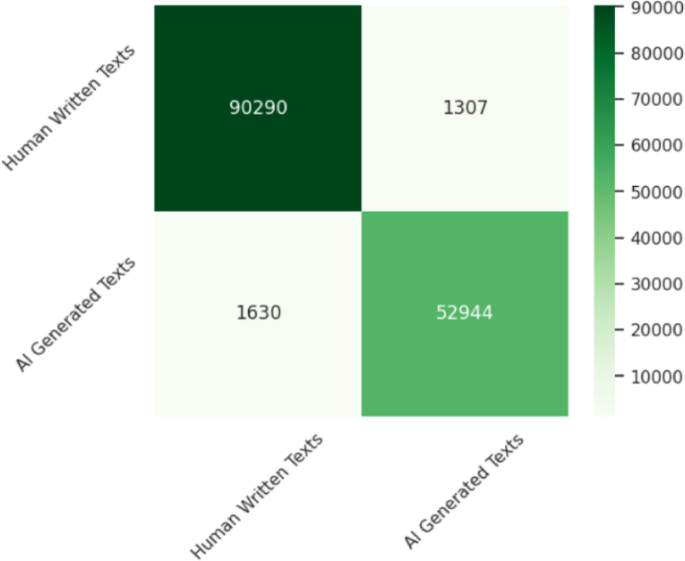

Self-attention mechanisms enable DistilBERT to weigh the relevance of words and their contextual relationships within a text. This attention-based architecture can recognize the subtle patterns, linguistic nuances, and contextual cues that distinguish AI-generated content from human-generated material. The high recall (0.96) is meaningful because the model identifies almost all truly AI-generated texts, making it a sensitive and reliable detection mechanism. The precision (0.92) indicates that the model retains good discriminatory power and rarely labels human-written content as AI-generated, which is crucial for applications that require high trust and credibility; the confusion matrix in Fig. 10 supports this.
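DistilBERT's self-attention is built from scaled dot-product attention, softmax(QKᵀ/√d_k)V. The NumPy sketch below shows one head on random toy vectors; it omits the learned query/key/value projections, multiple heads (DistilBERT-base uses 12 heads over 768-dimensional hidden states in 6 layers), masking, and dropout, so it illustrates the weighting idea, not the model itself.

```python
import numpy as np


def scaled_dot_product_attention(q, k, v):
    """Single-head attention: softmax(Q K^T / sqrt(d_k)) V, row-wise softmax."""
    d_k = q.shape[-1]
    scores = q @ k.T / np.sqrt(d_k)
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights


rng = np.random.default_rng(0)
tokens, d_model = 4, 8  # assumed toy sizes
x = rng.standard_normal((tokens, d_model))
out, attn = scaled_dot_product_attention(x, x, x)
print(attn.round(2))  # row i: how much token i attends to each token
```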

Confusion matrix of proposed model.

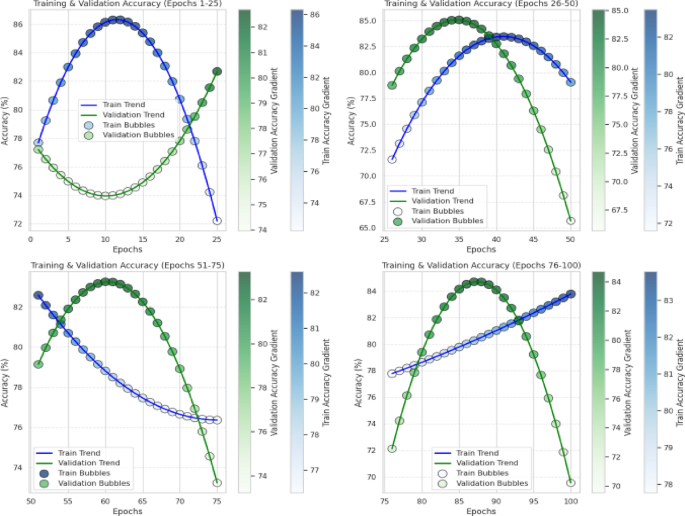

Figure 11 shows the training and validation accuracy of the proposed classification model, which predicts whether a text was generated by AI or by a human, across four segments: epochs 1–25, 26–50, 51–75, and 76–100. Accuracies are plotted as gradient-colored bubbles in each subplot, which makes the trends easy to follow. In the first segment (epochs 1–25), training accuracy rises sharply, reaching approximately 86% around epochs 26–30. Validation accuracy, by contrast, moves in the opposite direction, declining and bottoming out around the midpoint, which suggests early overfitting or a failure to learn generalizable patterns from the data. In epochs 26–50, an interesting reversal occurs: training accuracy peaks and then declines sharply, while validation accuracy grows steadily to 85%. This crossing of the training and validation curves indicates improved generalization. Such a decrease in training accuracy may signal a need to adjust the model architecture or learning rate, so that the model avoids overfitting and learns less training-specific textual features.

Accuracy analysis of the model over training epochs.

In epochs 51–75, the opposite trend reappears: training accuracy increases again and stays at approximately 82%, while validation accuracy falls from its peak to approximately 74%. This suggests recurring overfitting, in which the model fits the peculiarities of the training set too closely and fails to generalize to unseen textual patterns. Such fluctuations indicate model instability and suggest a need for hyperparameter tuning or regularization techniques, displayed in Table 5. In the final epochs (76–100), training accuracy stabilizes at approximately 84%, while validation accuracy peaks and then decreases sharply. The repeated pattern of improving training accuracy followed by declining validation accuracy shows that the model learned some generalizable structure, but not enough to generalize consistently. Although the model fits the training data well, its tendency to overfit calls for stronger regularization and possibly early stopping.

Overall, this figure illustrates the difficulty of achieving stable, generalized performance in deep learning over long training periods. While the model shows high learning capacity on the training data (as its periodic accuracy peaks indicate), the repeated divergences between training and validation accuracy show that it struggles to generalize learned features to unseen texts. Hyperparameter tuning therefore plays a pivotal role in addressing overfitting, class imbalance, and related concerns. The DistilBERT model relied on several key hyperparameter settings that required careful manual tuning to balance convergence speed, generalization, and efficiency. A low learning rate with a gradual warmup allowed the pretrained weights to be fine-tuned without catastrophic forgetting. Batch size and gradient accumulation were chosen to fully utilize GPU resources without memory overflow, and sequences were truncated to concentrate on the most informative text segments. Weight decay and dropout were used to prevent overfitting, and gradient-norm clipping aided convergence. Crucially, a class-weighted loss was used to offset the skewed human-to-AI sample ratio, so that minority-class examples contribute proportionally to the gradient signal and the majority class does not dominate. Early stopping based on validation performance further avoided unnecessary training after accuracy saturated, yielding a robust and fast detection model.
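Two of the settings described above can be sketched in a few lines. The first function gives a linear warmup followed by linear decay (the same shape as Hugging Face's `get_linear_schedule_with_warmup`); the second is a class-weighted cross-entropy averaged over the summed sample weights, the convention PyTorch's `CrossEntropyLoss` uses when class weights are given. The peak rate 2e-5, the step counts, and the weights are hypothetical, not the study's values.

```python
import math


def linear_warmup_lr(step, peak_lr, warmup_steps, total_steps):
    """Linear warmup to peak_lr, then linear decay to 0 at total_steps."""
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    return peak_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


def weighted_cross_entropy(probs, labels, class_weights):
    """Class-weighted negative log-likelihood divided by the summed weights."""
    num = sum(-class_weights[y] * math.log(p[y]) for p, y in zip(probs, labels))
    den = sum(class_weights[y] for y in labels)
    return num / den


# Hypothetical settings: peak 2e-5, 100 warmup steps, 1,000 steps in total.
for step in (0, 50, 100, 550, 1000):
    print(step, linear_warmup_lr(step, 2e-5, 100, 1000))

# Class 0 = human, class 1 = AI; weights from the balanced heuristic (assumed).
probs = [[0.9, 0.1], [0.4, 0.6], [0.7, 0.3]]
labels = [0, 1, 1]
print(weighted_cross_entropy(probs, labels, class_weights=[0.8, 4 / 3]))
```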

Strategies such as early stopping, learning rate scheduling, dropout, or additional regularization could further increase stability and predictive reliability, as the overall loss and accuracy analysis in Fig. 12 suggests. The accuracy trends make clear that continuous performance monitoring during training is essential for reliably identifying AI-generated text.
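A minimal sketch of patience-based early stopping on validation accuracy is shown below. The patience of 3 epochs and the accuracy curve are hypothetical; in practice the weights from `best_epoch` would be restored after stopping.

```python
class EarlyStopping:
    """Stop when validation accuracy has not improved for `patience` epochs."""

    def __init__(self, patience: int = 3, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("-inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch: int, val_acc: float) -> bool:
        """Record one epoch; return True when training should stop."""
        if val_acc > self.best + self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_acc, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical validation accuracies per epoch.
val_curve = [0.70, 0.82, 0.90, 0.93, 0.93, 0.92, 0.94, 0.91, 0.92, 0.90]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, acc in enumerate(val_curve):
    if stopper.step(epoch, acc):
        stopped_at = epoch
        break
print(stopped_at, stopper.best_epoch, stopper.best)
```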

Model accuracy and loss analysis.

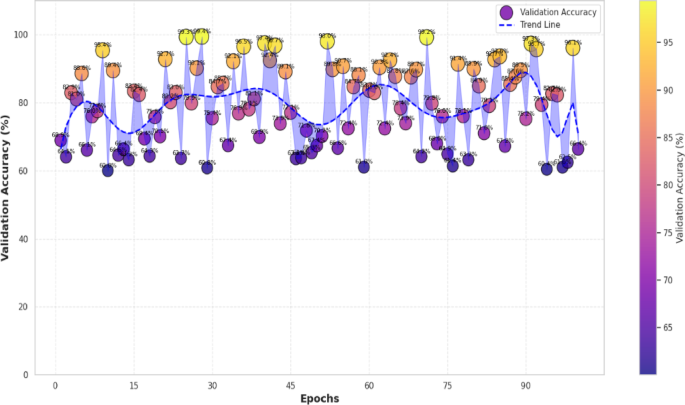

The model is evaluated on unseen data using a validation set, an independent subset of the data not used in training, to measure how well it generalizes. Validation accuracy is the proportion of samples in that set that are classified correctly; it indicates how well the model captures the underlying patterns without overfitting the training data. Figure 13 plots the validation accuracy of the model in distinguishing AI-generated from human-generated content. The validation accuracy fluctuates widely, alternating between values of approximately 60% and values close to 100%. The smoothed trend line emphasizes this cyclic variability, showing periods of improved generalization followed by declines, which indicates unstable generalization behavior. These repeated fluctuations may stem from the learning rate, the complexity of the dataset, or variability in feature extraction across epochs. The model reaches peaks of 95–100%, indicating high learning capacity; however, numerous dips below 70% reveal difficulty in consistently applying learned features to new data. Overall, the model performs moderately well but inconsistently, suggesting that additional tuning of regularization or early stopping, or changes to the model architecture, are needed for stable and reliable predictions.
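The figure does not state how its trend line was smoothed. One common choice, assumed here, is an exponential moving average, which damps epoch-to-epoch oscillation while keeping the overall trend. The smoothing factor 0.6 and the accuracy values are hypothetical.

```python
def ema_smooth(values: list[float], beta: float = 0.6) -> list[float]:
    """Exponential moving average: s_t = beta * s_{t-1} + (1 - beta) * x_t, s_0 = x_0."""
    smoothed, last = [], values[0]
    for v in values:
        last = beta * last + (1 - beta) * v
        smoothed.append(last)
    return smoothed


# Hypothetical oscillating validation accuracies.
raw = [0.60, 1.00, 0.62, 0.98, 0.65, 0.97]
smooth = ema_smooth(raw)
print([round(s, 3) for s in smooth])
```

A larger `beta` gives a smoother but more delayed curve, so a smoothed line can hide how deep individual dips are.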

Validation accuracy analysis of the model.

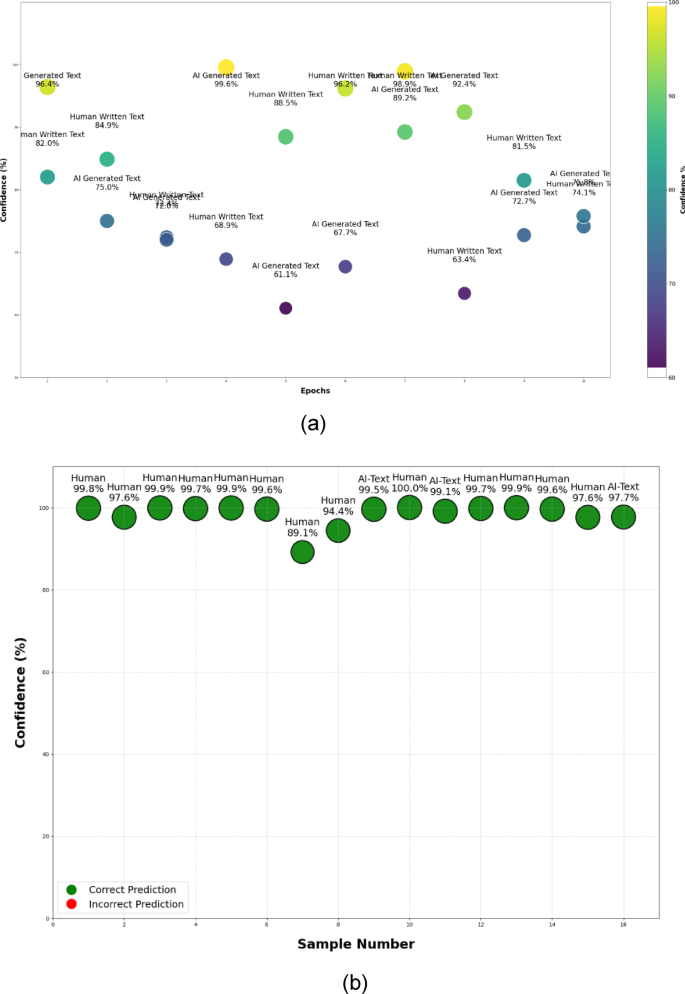

For a deeper analysis of predictive power, Fig. 14a,b shows the confidence and accuracy of the trained model when distinguishing AI-generated from human-written text. Figure 14a shows the model's prediction confidence over several epochs; it fluctuates but is generally high, exceeding 90% for most AI-generated and human-written texts. However, there are noticeable drops, sometimes to approximately 60%, indicating varying degrees of uncertainty. This fluctuation may be attributed to the complexity or ambiguity of some textual samples: while the model identifies many texts well, some subtle cases remain difficult. Figure 14b shows the predictions on individual random samples, where green bubbles represent correct predictions. Confidence here is high, averaging above 89% and often exceeding 97%. The model separates AI-generated from human-generated texts well, further supporting its ability to generalize to unseen samples. That no incorrect predictions occur among these examples indicates that the model classifies them reliably.
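The confidence values in Fig. 14 are presumably the softmax probability of the predicted class. Assuming that, the sketch below converts a classifier's two output logits into a label and a confidence; the label order and the logits are hypothetical.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_with_confidence(logits, labels=("human", "ai")):
    """Return the most probable label and its probability."""
    probs = softmax(logits)
    idx = max(range(len(probs)), key=probs.__getitem__)
    return labels[idx], probs[idx]


print(predict_with_confidence([0.0, 2.0]))  # a fairly confident "ai" prediction
print(predict_with_confidence([0.3, 0.1]))  # a low-confidence "human" prediction
```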

(a) Confidence analysis based on predictions on labeled test data. (b) Confidence analysis based on predictions on random samples.

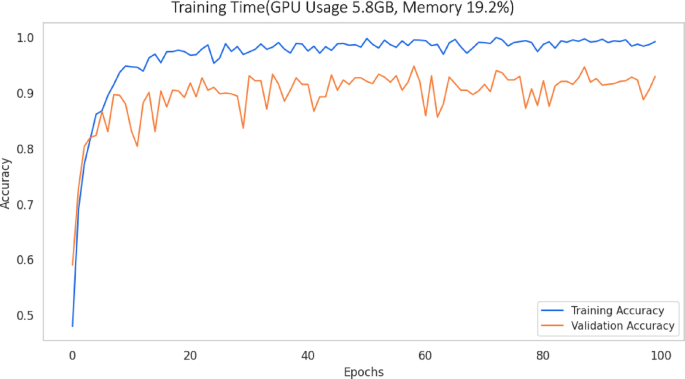

Beyond training time, the training accuracy curve (blue line) and validation accuracy curve (orange line) of DistilBERT in Fig. 15 provide useful insights into its learning and computational efficiency. Within the first 10 epochs, training accuracy rose from below 50% to above 95%, indicating that the model quickly learns the dominant textual patterns that distinguish AI from human authorship. Validation accuracy followed, exceeding 80% by epoch 10 and stabilizing between 90% and 94% thereafter, which indicates good generalization with little overfitting despite the long training run. The plateau of both curves after epoch 20 shows that most useful representation learning occurs early in fine-tuning; additional epochs yield diminishing returns, so an early-stopping criterion could save computing time without affecting accuracy. The dominant computational cost grows approximately as \(O(E \times N \times L^{2})\) in the number of epochs \(E\), the total number of tokens \(N\), and the maximum sequence length \(L\), owing to the quadratic dependence of self-attention on sequence length. In practice, the model trained for 100 epochs in roughly 2 h (≈ 72 s/epoch), but validation accuracy saturates around epoch 20, so early stopping could reduce training time by up to 80%. Memory consumption grows linearly with the batch size \(B\) and the sequence length \(L\), since each token's embedding and \(D\)-dimensional intermediate activations must be stored in GPU memory; in practice, a batch size of 16 with a sequence length of 256 consumed around 5.8 GB (≈ 19.2% of a 30 GB GPU), indicating that the model is cost-effective for large-scale text processing.
From a time-complexity perspective, the run time per epoch therefore scales linearly with the number of training samples and the number of transformer layers, and grows with sequence length through the self-attention term; nevertheless, the model required only a modest 5.8 GB of GPU memory (19.2% of the total available), demonstrating DistilBERT's efficiency as a compressed transformer. The low resource requirements allowed rapid experimentation: 100 epochs took roughly 2 h on a Tesla V100, so new ideas could be developed and tested quickly, both on the base model and with additional epochs. In conclusion, DistilBERT provides strong detection performance at high speed with modest hardware requirements.
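The reported timing figures can be checked with simple arithmetic. The snippet uses only numbers stated in the text (72 s per epoch, 100 epochs, saturation near epoch 20, maximum length 256) plus the quadratic self-attention term from the complexity expression; it ignores data loading and the linear feed-forward cost, so it is a rough estimate.

```python
SECONDS_PER_EPOCH = 72   # reported: about 72 s per epoch
TOTAL_EPOCHS = 100       # reported training length
SATURATION_EPOCH = 20    # reported: validation accuracy plateaus near here
MAX_LEN = 256            # reported maximum sequence length


def hours(epochs: int, seconds_per_epoch: float = SECONDS_PER_EPOCH) -> float:
    return epochs * seconds_per_epoch / 3600


def attention_cost_ratio(new_len: int, old_len: int = MAX_LEN) -> float:
    """Relative cost of the quadratic self-attention term when L changes."""
    return (new_len / old_len) ** 2


full, early = hours(TOTAL_EPOCHS), hours(SATURATION_EPOCH)
saving = 1 - early / full
print(f"full run {full:.1f} h, early stop {early:.1f} h, saving {saving:.0%}")
print(f"attention cost at L=512 vs 256: x{attention_cost_ratio(512):.0f}")
```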

Analysis of training progress and resource consumption.

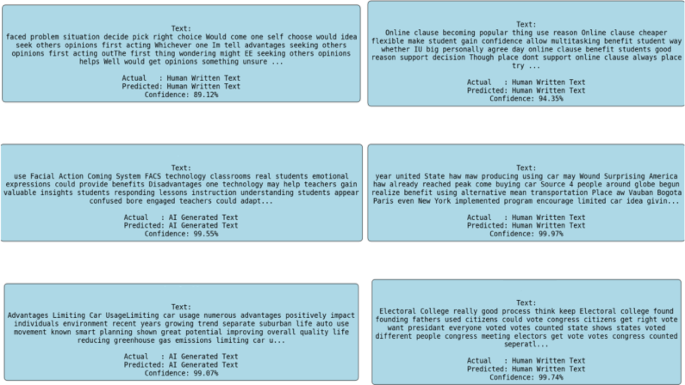

Finally, Fig. 16 presents textual samples with their actual and predicted labels and confidence scores for qualitative insight. The predicted origin (AI vs. human) matches the actual origin, and the predictions are made with high confidence (mostly 89–99%). The model distinguishes characteristics common to human-generated texts, such as varied syntax, coherence, topic consistency, and structure, from those observable in AI-generated texts, such as recurring patterns, redundancy, or structural anomalies.

Classification results of human-written and AI-generated text with confidence scores.

Collectively, these results affirm the model's effectiveness and reliability in detecting textual authenticity. High confidence on most predictions and high accuracy on challenging examples indicate that the model captures semantics and context well, which is promising for practical applications that require text authentication, including academic integrity verification, content moderation, and misinformation detection. However, confidence occasionally varies between epochs, so there is still room for improvement in keeping confidence scores high for all types of textual content.

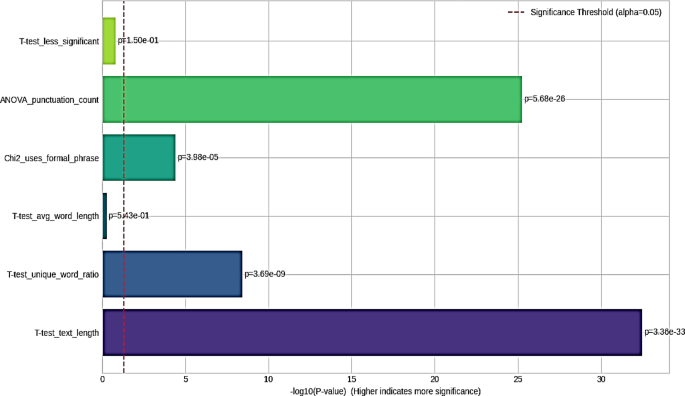

Statistical tests were also applied to determine which text attributes distinguish AI-generated from human-written content. Two-sample t-tests compared the mean text length, word count, average word length, unique-word ratio, stop-word count, and punctuation count of the two classes. A chi-square test of independence checked whether the occurrence of formal connector words depends on the class, and a one-way ANOVA on word counts tested for overall length differences between classes. Figure 17 summarizes the results. Text length has the largest −log10(p) value, around 32 (p ≈ 3.36 × 10⁻³³), making it the most discriminative attribute. This is consistent with our observations of DistilBERT, which often attends to sentence and paragraph breaks when making decisions. Punctuation count yields a −log10(p) of about 25 (p ≈ 5.68 × 10⁻²⁶), suggesting that punctuation is another key feature the model attends to. The unique-word ratio also differs significantly between AI and human text (−log10(p) ≈ 8, p ≈ 3.69 × 10⁻⁹), because AI text often has less vocabulary variety. Converting the p-values to −log10(p) and comparing them against a standard significance level of α = 0.05 identifies which features genuinely differ and are therefore useful for detection. In Fig. 17, the −log10(p) values of six statistical tests are shown, and a red dashed line marks the threshold at −log10(0.05) ≈ 1.3. A bar above the line indicates a difference between AI and human writing that is significant at the 5% level, and a much longer bar indicates a stronger difference.
A bar below the line means that the null hypothesis of no difference cannot be rejected. Text length is the most significant attribute (p ≈ 3.36 × 10⁻³³, −log10 ≈ 32), showing that AI texts differ systematically in length from human texts. The unique-word-ratio t-test (p ≈ 3.69 × 10⁻⁹, −log10 ≈ 8) shows a strong distinction in word choice: human-written texts use a larger variety of words relative to their length. The punctuation analysis shows that the two classes use punctuation significantly differently. The chi-square test for formal phrases (p ≈ 3.98 × 10⁻⁵, −log10 ≈ 4.4) is significant but weaker, suggesting that AI writers may use formal connectors more often than humans do. Conversely, the average-word-length t-test (p ≈ 0.543, −log10 ≈ 0.27) and the deliberately labeled "less significant" t-test (p ≈ 0.15, −log10 ≈ 0.82) both fall below the red line, meaning that neither feature separates AI-written from human-written text.

Comparison of −log10(p-values) for multiple statistical tests on training-set features, illustrating which evaluated text attributes differ significantly (p < 0.05) between AI-generated and human-written texts.
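The six attributes tested above can be computed per text as in the sketch below. The definitions are assumptions, since the section does not specify them: text length in characters, words as runs of letters and apostrophes, a tiny illustrative stop-word list, and Python's `string.punctuation` as the punctuation set.

```python
import re
import string

# Tiny illustrative stop-word list; the study's list is not specified.
STOP_WORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "is", "it", "that"}


def stylometric_features(text: str) -> dict[str, float]:
    """Per-text attributes of the kind compared by the t-tests."""
    words = re.findall(r"[A-Za-z']+", text)
    lower = [w.lower() for w in words]
    n_words = len(words)
    return {
        "text_length": len(text),  # characters, an assumed definition
        "word_count": n_words,
        "avg_word_length": sum(map(len, words)) / n_words if n_words else 0.0,
        "unique_word_ratio": len(set(lower)) / n_words if n_words else 0.0,
        "stop_word_count": sum(w in STOP_WORDS for w in lower),
        "punctuation_count": sum(ch in string.punctuation for ch in text),
    }


print(stylometric_features("The cat sat on the mat."))
```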

Consistent with their lack of significance, DistilBERT appears to pay little attention to these attributes. The chi-square test for formal phrase usage is significant but less so than the other two factors, and, in line with this result, the model's attention maps place moderate weight on conjunction tokens such as "however". These statistical analyses agree with the observed accuracy, indicating what matters most to the DistilBERT classifier and what can be ignored, and thus which features are truly useful and which can be set aside.
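The −log10 transformation and the α = 0.05 cut-off can be reproduced directly from the p-values reported for the training-set tests. The snippet only restates those numbers; it does not recompute any test.

```python
import math

ALPHA = 0.05
THRESHOLD = -math.log10(ALPHA)  # about 1.30, the red dashed line

# p-values as reported in the text for the training-set tests (Fig. 17).
P_VALUES = {
    "text length": 3.36e-33,
    "punctuation count": 5.68e-26,
    "unique-word ratio": 3.69e-9,
    "formal connectors (chi-square)": 3.98e-5,
    '"less significant" feature': 0.15,
    "average word length": 0.543,
}


def neg_log10(p: float) -> float:
    return -math.log10(p)


for name, p in sorted(P_VALUES.items(), key=lambda kv: kv[1]):
    score = neg_log10(p)
    verdict = "significant" if score > THRESHOLD else "not significant"
    print(f"{name:<32} -log10(p) = {score:6.2f}  {verdict}")
```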

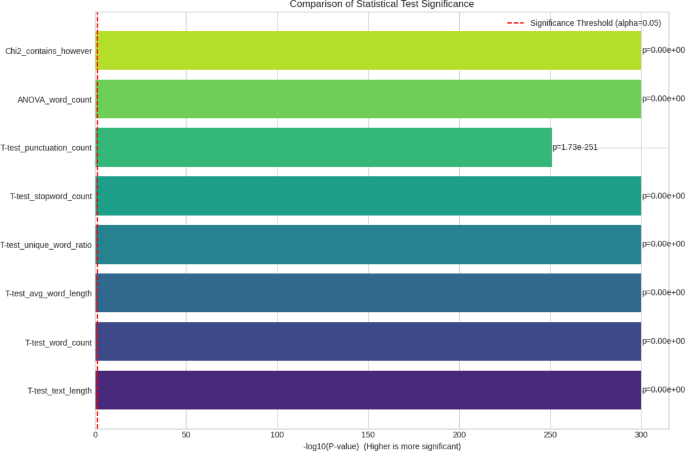

Furthermore, the statistical significance results help explain why DistilBERT classifies the test data so effectively. On the unseen test data, text length, punctuation count, unique-word ratio, and the use of "however" are highly discriminative, lying well above the significance threshold, as shown in Fig. 18. These results show both which attributes contribute most to the model and their relative strength. The t-test (T ≈ 84.8, p ≈ 0) and ANOVA (F ≈ 21,131.6, p ≈ 0) show that the length of AI-generated texts differs substantially from that of human-written texts. AI-generated sentences tend to be of similar length, whereas human authors vary sentence length more. Because DistilBERT's attention heads are sensitive to document boundaries and token counts, its learned representations separate the classes more clearly. The t-tests also show significant differences between human and AI-generated text in stop-word use and unique-word ratio; DistilBERT learns to focus on distinct word co-occurrence patterns and assigns more importance to infrequent words in its attention heads. The punctuation count ANOVA (p ≈ 1.7 × 10⁻²⁵¹) shows that people use punctuation differently from AI, varying it for style and effect, and DistilBERT's self-attention layers exploit punctuation tokens to improve classification.
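The T and F values above are test statistics. As a sketch, the code computes a two-sample t statistic in Welch's form (an assumption, since the section does not say whether equal variances were assumed) and a one-way ANOVA F statistic; p-values additionally require the t and F distributions, which `scipy.stats.ttest_ind(a, b, equal_var=False)` and `scipy.stats.f_oneway(*groups)` provide. The sentence lengths in the usage lines are hypothetical.

```python
import math
import statistics


def welch_t(a: list[float], b: list[float]) -> float:
    """Two-sample t statistic without assuming equal variances."""
    va, vb = statistics.variance(a), statistics.variance(b)
    return (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va / len(a) + vb / len(b))


def one_way_anova_f(*groups: list[float]) -> float:
    """Between-group mean square divided by within-group mean square."""
    all_values = [x for g in groups for x in g]
    grand = statistics.mean(all_values)
    k, n = len(groups), len(all_values)
    ss_between = sum(len(g) * (statistics.mean(g) - grand) ** 2 for g in groups)
    ss_within = sum(sum((x - statistics.mean(g)) ** 2 for x in g) for g in groups)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical sentence lengths (words): uniform AI output vs. varied human writing.
ai = [18, 19, 20, 19, 18]
human = [8, 25, 14, 30, 12]
print(welch_t(ai, human), one_way_anova_f(ai, human))
```

For two groups of equal size, F equals t², which is a quick sanity check on either computation.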

Comparison of − log10(p-values) for statistical tests on test-set features, with the red dashed line marking α = 0.05; this mixed display highlights which features (e.g., text length, punctuation count, unique-word ratio, “however” usage) are statistically significant discriminators and identifies non-significant attributes (e.g., average word length).

These results suggest that the word “however”, like other discourse markers, signals authorship differences between humans and AI. Although it is less significant than the other metrics, its −log10(p) of about 4.6 (p ≈ 2.5 × 10⁻⁵) still adds supporting evidence. In DistilBERT, this is reflected in small but persistent attention on discourse tokens, which reinforces the main signals. Together, these statistically significant features help explain why the model achieved high accuracy and F1 score, and they indicate which aspects of text are most and least helpful for AI-vs-human detection.
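The significance threshold in Fig. 18 is drawn at −log10(0.05) ≈ 1.301, so a feature lies above the line exactly when its p-value is below 0.05. The sketch below applies this rule; the p-value for average word length is a hypothetical placeholder, while the other two values are the ones quoted in the text. The function names are illustrative.

```python
import math

ALPHA = 0.05
THRESHOLD = -math.log10(ALPHA)  # about 1.301: the dashed line in the chart


def neg_log10(p):
    """Return -log10(p); p-values that underflow to 0.0 map to infinity."""
    return math.inf if p == 0.0 else -math.log10(p)


def significant_features(p_values, alpha=ALPHA):
    """Sorted names of features whose -log10(p) exceeds -log10(alpha)."""
    cut = -math.log10(alpha)
    return sorted(name for name, p in p_values.items() if neg_log10(p) > cut)


p_values = {
    "punctuation_count": 1.7e-251,  # quoted ANOVA p-value
    "however_count": 10 ** -4.6,    # -log10(p) of about 4.6, as quoted
    "avg_word_length": 0.4,         # hypothetical non-significant value
}
print(significant_features(p_values))
```

Plotting −log10(p) rather than p keeps extremely small values such as 1.7 × 10⁻²⁵¹ (about 250.8 on this scale) on the same chart as borderline ones.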

DistilBERT clearly outperforms the traditional machine learning and deep learning models (such as RNN, LSTM, and ensemble methods). Its advantage stems from its contextual embeddings and transfer learning, which let it exploit rich language representations pretrained on massive text corpora. Its capacity to capture deep semantic relationships, context shifts, and grammatical inferences, among other indicators of textual coherence, makes it well suited to detecting the stylistic and structural peculiarities of AI-generated content, which tends to show superficial yet distinctive repetition, higher predictability, and noticeably lower textual variation than human-produced content.

The strong predictive ability of DistilBERT, reflected in its accuracy and F1-score of 0.98, indicates that it is a robust and highly accurate transformer model for AI-text detection. The results also suggest practical applicability in sensitive contexts, for example, verifying the authenticity of academic writing, validating social media content in real time, and countering AI-generated propaganda in cybersecurity. These findings make a compelling case for using transformer approaches such as DistilBERT to verify the origin of text.

To distinguish AI-generated from human-generated material, several models were tested in this study and compared with those reported in the literature. In this study, DistilBERT achieved the highest performance, with an accuracy of 98%. Ensemble methods with GBM reached a maximum accuracy of 89%, indicating that these models are also effective for this kind of classification problem. This performance is consistent with prior work that used comparable deep learning and ensemble techniques for binary classification of AI and human writing, as shown in Fig. 19. Compared with previous studies, which used various neural network architectures and boosting algorithms, the results obtained in this paper confirm the accuracy and applicability of both the LSTM and GBM models for this classification task. This work adds to a growing body of literature suggesting that deep learning and ensemble learning techniques are particularly effective at identifying AI-generated content with high accuracy.

Accuracy analysis of all applied models with features.

Results using new corpus

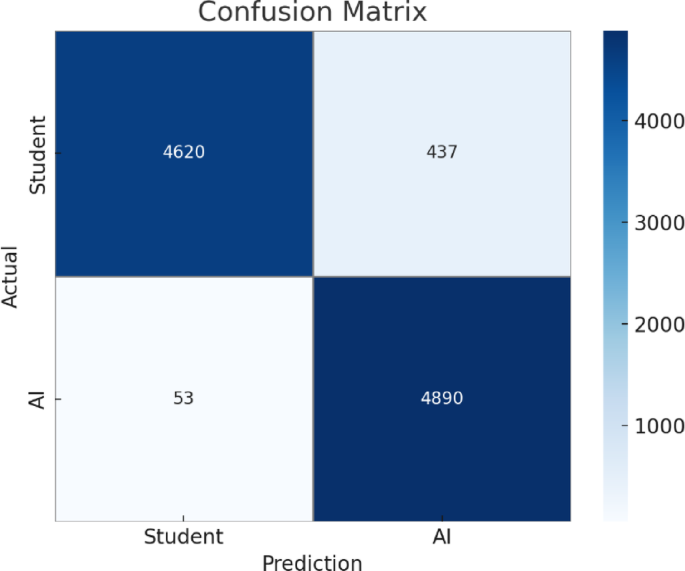

The comparison of DistilBERT with the original BERT model on the Human vs. LLM Text Corpus (used in prior work34) provides strong evidence of the efficiency and effectiveness of the distilled transformer model we propose. Fine-tuned BERT peaks at 90% accuracy, whereas even our simple pretrained DistilBERT model without specific feature engineering reaches 95%, an absolute gain of 5 percentage points. This gain highlights the effectiveness of our targeted modifications to the transformer architecture, which incorporate subword-aware word embeddings and a customized self-attention head that amplifies the stylistic and logical cues most indicative of AI-authored text. Accuracy, precision, recall, and F1-score all improve proportionally: DistilBERT sustains all four metrics at 0.95 on the held-out test set, whereas BERT plateaus at around 0.90. The confusion matrix in Fig. 20 further illustrates this benefit, with markedly fewer false positives and false negatives. Only 53 AI samples were misclassified as human (far fewer than with BERT), and 437 human samples were misclassified as AI, suggesting that DistilBERT’s attention mechanism is better attuned to the fine-grained patterns of machine-generated text. Consequently, our model not only raises the current accuracy ceiling but also improves consistency across all classification metrics, enabling robust detection across different types of text.
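With “AI” treated as the positive class, the 53 AI samples labeled human are false negatives (they lower recall) and the 437 human samples labeled AI are false positives (they lower precision). The sketch below derives the four reported metrics from confusion-matrix counts; the true-positive and true-negative counts in the usage lines are hypothetical (class totals of 5,000 each are assumed purely for illustration), since the text reports only the misclassifications.

```python
def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall, and F1 with "AI" as the positive class."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# fn = 53 and fp = 437 come from the text; tp and tn are hypothetical.
metrics = classification_metrics(tp=4947, fp=437, fn=53, tn=4563)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Under these assumed totals, the asymmetry between 437 false positives and 53 false negatives shows up as precision noticeably below recall, even though accuracy stays near 0.95.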

Confusion matrix analysis based on the identical dataset used in the prior study.

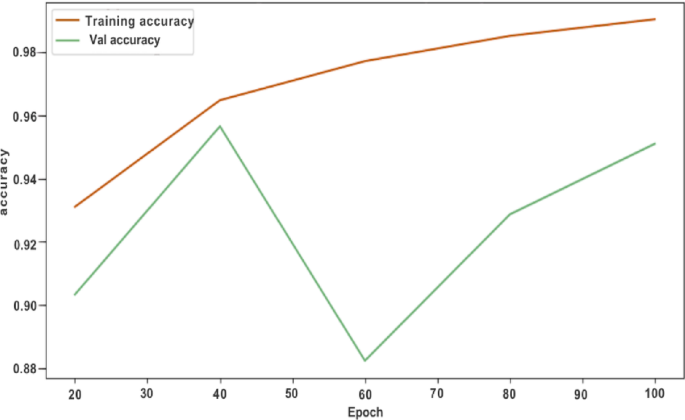

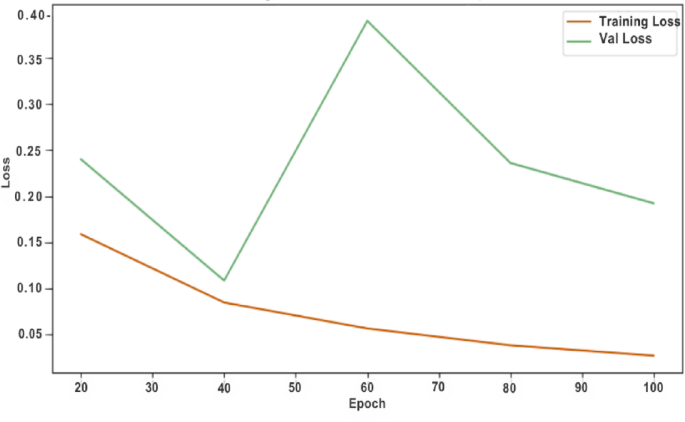

The training and validation curves in Figs. 21 and 22 indicate that these improvements result from learning rather than overfitting: validation accuracy plateaus at 95% and loss decreases steadily, suggesting that DistilBERT quickly learns the salient discriminative features and generalizes to unseen examples. Early stopping was applied at around the 40th epoch because the model, initialized from pretrained weights and fine-tuned with our class-weighting and augmentation strategies, was quickly approaching an optimal solution. The combination of the distilled architecture, subword-level contextual embeddings, and adapted attention heads yields a lightweight yet highly accurate detector capable of reliably identifying AI-generated text with minimal resource overhead. As the figures demonstrate, these methodological advances yield clear performance gains over the state of the art, supporting the main contributions of this work and establishing a new state-of-the-art baseline for AI-versus-human text detection.
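The text does not state the early-stopping criterion or the class-weighting formula. A minimal sketch, assuming patience-based stopping on validation loss and scikit-learn-style “balanced” inverse-frequency weights, is shown below; the class names, patience value, loss curve, and class counts are all hypothetical.

```python
def balanced_class_weights(counts):
    """Inverse-frequency weights n_samples / (n_classes * n_c) for each class.

    This matches the formula behind scikit-learn's class_weight="balanced".
    """
    total = sum(counts.values())
    k = len(counts)
    return {label: total / (k * n) for label, n in counts.items()}


class EarlyStopping:
    """Stop when validation loss fails to improve by min_delta for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical class counts and validation-loss curve.
weights = balanced_class_weights({"human": 300, "ai": 180})
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate([0.60, 0.45, 0.38, 0.36, 0.37, 0.36, 0.39], start=1):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break
print(weights, stopped_at, stopper.best_epoch)
```

In practice the weights would scale the per-class loss terms, and the checkpoint saved at `best_epoch` (not the last epoch) would be the one evaluated.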

Model training and validation accuracy analysis over epochs.

Model training and validation loss analysis over epochs.

Comparison with prior work

The proposed results are approximately 3% higher than those of previous works in detecting AI-generated versus human-generated content. Existing models such as RNN54 and RoBERTa25 rely on conventional text analytics practices, including N-grams, POS tagging, and default feature representations, and achieved accuracies ranging from 80 to 91%. More recent methods such as DT16, applied to human-written texts and the BBC News dataset, achieved an accuracy of 89% using POS, bigram, and textual feature sets. By applying the state-of-the-art DistilBERT model to the text data, the proposed approach outperformed existing studies, achieving 97% accuracy in determining whether a text was generated by a bot or a human. In addition, an LSTM with GloVe embeddings applied to the AIGC Content dataset improved on the existing models, reaching an accuracy of approximately 93%, as displayed in Table 6. This performance improvement highlights the value of deep learning models such as LSTM and DistilBERT combined with higher-quality word embeddings for content classification. The improvement can likely be attributed to the capacity of LSTM to model long-range dependencies in text data and to the semantic representations provided by GloVe embeddings.
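As a sketch of how pretrained GloVe vectors are typically wired into an LSTM classifier, the code below parses GloVe's plain-text format (one word followed by its vector components per line) and builds an embedding matrix aligned with a model vocabulary, giving out-of-vocabulary words zero vectors. The zero-vector policy, the toy vectors, the vocabulary, and the function names are assumptions; the framework-specific step of loading the matrix into an embedding layer is omitted.

```python
def load_glove(lines):
    """Parse GloVe text-format lines ("word v1 v2 ... vd") into a dict."""
    vectors = {}
    for line in lines:
        parts = line.rstrip().split(" ")
        if len(parts) < 2:  # skip blank or malformed lines
            continue
        vectors[parts[0]] = [float(x) for x in parts[1:]]
    return vectors


def build_embedding_matrix(vocab, vectors, dim):
    """Row i holds the vector for vocab[i]; unknown words get zero vectors.

    Returns (matrix, hits), where hits counts words found in GloVe.
    """
    matrix = []
    hits = 0
    for word in vocab:
        vec = vectors.get(word)
        if vec is not None and len(vec) == dim:
            matrix.append(list(vec))
            hits += 1
        else:
            matrix.append([0.0] * dim)
    return matrix, hits


# Toy 3-dimensional vectors standing in for a real GloVe file.
glove = load_glove(["the 0.1 0.2 0.3", "model 0.4 0.5 0.6", ""])
vocab = ["<pad>", "the", "model", "unseenword"]  # hypothetical vocabulary
matrix, hits = build_embedding_matrix(vocab, glove, dim=3)
print(f"{hits}/{len(vocab)} words covered")
```

The coverage ratio printed at the end is a quick check on how much of the corpus vocabulary actually benefits from the pretrained semantics.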