Google's Gemini Pro 1.5 marks a transformative moment for multimodal artificial intelligence. You can give it video, audio, or image files and ask questions about their content.

To see how well it works, I gave Gemini Pro 1.5 a silent video of the moment of totality during the recent total solar eclipse that was visible across North America.

Using Google Cloud's Vertex AI platform, I was also able to give Gemini instructions alongside the video clip. I had it write lyrics and a prompt for an AI music generator, so I could create a song inspired by the content of the video.

I then entered the prompt and lyrics into Udio to create the song, sent the finished track back to Gemini Pro 1.5, and asked it to listen to the track and plan out a music video.

What is Google Gemini Pro 1.5?

Google announced the Gemini family of models in December, starting with the smaller Nano, which runs on some Android smartphones. It then released Pro, which powered the Gemini chatbot, and finally, in February, Gemini Ultra, its GPT-4-class model.

Last month, the search giant released the first update to the Gemini family, Gemini Pro 1.5, with a massive 1-million-token context window, a mixture-of-experts architecture for improved responsiveness and accuracy, and true multimodal capabilities.

For now, Gemini Pro 1.5 is available to developers only through API calls or Google's Vertex AI cloud platform, but its more advanced features will soon come to the Gemini chatbot.

Its features include the ability to upload audio files, such as songs or speeches, and video files, such as workout clips or footage of a solar eclipse, and then ask Gemini questions about them.
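For developers, asking about a video or audio file comes down to a few API calls. The sketch below is a minimal example that assumes Google's `google-generativeai` Python SDK (`pip install google-generativeai`), its File API, and an API key in a `GEMINI_API_KEY` environment variable. The default model name `gemini-1.5-pro-latest` is an assumption, not a detail from this experiment.

```python
import os
import time


def ask_gemini_about_media(path: str, question: str,
                           model_name: str = "gemini-1.5-pro-latest") -> str:
    """Upload a video or audio file via the File API and ask Gemini about it.

    Assumes the google-generativeai SDK is installed and GEMINI_API_KEY is set;
    the default model name is an assumption and may need updating.
    """
    # Imported inside the function so this module loads even without the SDK.
    import google.generativeai as genai

    genai.configure(api_key=os.environ["GEMINI_API_KEY"])

    # Upload the file; video is processed asynchronously, so poll until ready.
    media = genai.upload_file(path=path)
    while media.state.name == "PROCESSING":
        time.sleep(5)
        media = genai.get_file(media.name)
    if media.state.name != "ACTIVE":
        raise RuntimeError(f"File processing ended in state {media.state.name}")

    # Send the uploaded file and the text question together in one request.
    model = genai.GenerativeModel(model_name)
    response = model.generate_content([media, question])
    return response.text
```

A call might look like `ask_gemini_about_media("eclipse.mp4", "Describe what happens in this video, moment by moment.")`, where `eclipse.mp4` is a hypothetical local file.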

Create songs from videos

Although you can't use Gemini Pro 1.5 to generate music directly (Google has other AI models for creating both music and video), you can use it to write prompts and lyrics for other tools.

I gave the AI model a short, 25-second clip showing the moment of totality from the recent total solar eclipse visible across the United States. I asked it to write both lyrics and a prompt I could give an AI music generator to create a song inspired by the video.

New @Google developer launch today: – Gemini 1.5 Pro is now available in 180+ countries via the Gemini API in public preview – Supports audio (speech) understanding and a new File API for easier file handling – New embedding model! https://t.co/wJk1e1BG1E April 9, 2024

It gave me this as a prompt for Udio: “A grand orchestral piece in three distinct movements: the first builds tension and anticipation as totality approaches, the second slows down during totality, becoming dreamy and mysterious, and the third builds to a triumphant crescendo as the sun emerges again from behind the moon.”

These were the lyrics for the chorus: “The solar eclipse has arrived, a celestial show. In the moment of darkness before light grows, the stars come out to play in the midday sky. A once-in-a-lifetime spectacle, as the world passes by.”

I don't think Gemini Pro 1.5 is as creative as Claude 3, ChatGPT, or Gemini Ultra, whose lyrics tend to be more original, but its ability to analyze video is very good. It picked out different moments in the video and captured the changes between them, and those changes were reflected in the lyrics.

Create music videos from songs

One of the latest updates to Gemini Pro 1.5 is the ability to take a song or other piece of audio and analyze its content. This is especially helpful when brainstorming ideas for a music video, particularly if you're in a hurry.

I took the song Udio generated from Gemini Pro 1.5's prompt and lyrics and asked the AI model to plan a shot-by-shot music video based on the audio file.

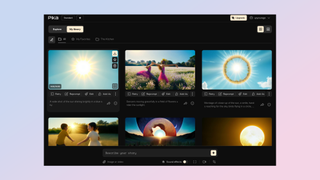

It returned a series of 5-second shots for each part of the song, including the intro and chorus. Each shot came with a prompt, such as “Generate a beautiful landscape with a sunrise or birds flying.” I then entered the prompts into Pika Labs.
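Gemini chose the shots itself, but the bookkeeping behind a shot list of fixed 5-second clips is easy to illustrate. The sketch below is purely hypothetical: it takes song sections, each with a start and end time in seconds and one text-to-video prompt, and splits every section into consecutive clips of at most 5 seconds. The result is the kind of list you could paste, shot by shot, into a tool like Pika Labs. The section timings and prompts in the example are made up.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    start: float   # seconds from the start of the song
    end: float
    section: str   # e.g. "intro" or "chorus"
    prompt: str    # text-to-video prompt for this clip


def plan_shots(sections, shot_length=5.0):
    """Split (name, start, end, prompt) sections into clips of at most shot_length seconds."""
    shots = []
    for name, start, end, prompt in sections:
        t = start
        while t < end:
            clip_end = min(t + shot_length, end)  # the last clip of a section may be shorter
            shots.append(Shot(t, clip_end, name, prompt))
            t = clip_end
    return shots


# Hypothetical section timings and prompts, for illustration only.
sections = [
    ("intro", 0, 12, "A beautiful landscape with a sunrise"),
    ("chorus", 12, 22, "Birds flying across a darkened midday sky"),
]
for shot in plan_shots(sections):
    print(f"{shot.start:5.1f}-{shot.end:5.1f}s  {shot.section}: {shot.prompt}")
```

Fixing the clip length, instead of asking for free-form timings, keeps every shot within the short clip lengths that AI video generators typically produce.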


This is an unusual use of Gemini Pro 1.5, but it shows what is possible and what third-party developers could build. For example, Gemini Pro 1.5 could act as a middle layer between an AI music generator and an AI video platform such as LTX Studio or Runway, creating a song and its music video in one click.
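As a rough illustration of that middle-layer idea, the sketch below chains the steps from this experiment into one call. It does not assume that Udio, LTX Studio, or Runway expose any particular API, so every stage is a hypothetical callable you would supply yourself: `write_song_brief` and `plan_music_video` stand in for Gemini Pro 1.5 requests, `generate_song` for the music generator, and `render_clip` for the video platform.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Pipeline:
    """Hypothetical one-click song-plus-video pipeline; every stage is injected."""
    write_song_brief: Callable[[str], Tuple[str, str]]  # video path -> (music prompt, lyrics)
    generate_song: Callable[[str, str], str]            # (prompt, lyrics) -> audio path
    plan_music_video: Callable[[str], List[str]]        # audio path -> one prompt per shot
    render_clip: Callable[[str], str]                   # shot prompt -> clip path

    def run(self, video_path: str) -> dict:
        music_prompt, lyrics = self.write_song_brief(video_path)
        audio_path = self.generate_song(music_prompt, lyrics)
        shot_prompts = self.plan_music_video(audio_path)
        clips = [self.render_clip(p) for p in shot_prompts]
        return {"audio": audio_path, "lyrics": lyrics, "clips": clips}


# Fake stages stand in for real services so the flow can be exercised offline.
pipeline = Pipeline(
    write_song_brief=lambda video: ("A grand orchestral piece", "The solar eclipse has arrived"),
    generate_song=lambda prompt, lyrics: "song.mp3",
    plan_music_video=lambda audio: ["sunrise over a landscape", "birds flying"],
    render_clip=lambda prompt: prompt.replace(" ", "_") + ".mp4",
)
result = pipeline.run("eclipse.mp4")
```

Injecting each stage as a plain function keeps the orchestration independent of any one vendor, so a service can be swapped out, or faked as above, without touching the pipeline itself.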

The real benefits of larger context windows will emerge as AI devices such as Meta's smart glasses and the Rabbit R1 begin to hit the market. If Google can solve the latency problem, AI will be able to analyze large amounts of real-world data and provide live feedback and information.

This could give visually impaired people spoken guidance, mark a first step toward truly self-driving cars, or let robots act completely independently using just a microphone and a camera.