A new AI system called StreamDiT generates livestream video from text descriptions, opening up new possibilities for games and interactive media.

Developed by researchers at Meta and the University of California, Berkeley, StreamDiT creates video at 16 frames per second on a single high-end GPU. The model has 4 billion parameters and outputs video at 512p resolution. Unlike previous methods, which generate a complete clip before playback, StreamDiT produces the video stream live, frame by frame.

Video: Kodaira et al.

The team showcased a variety of use cases. StreamDiT can generate minute-long videos on the fly, respond to interactive prompts, and edit existing videos in real time. In one demo, a pig in a video was transformed into a cat while the background stayed the same.

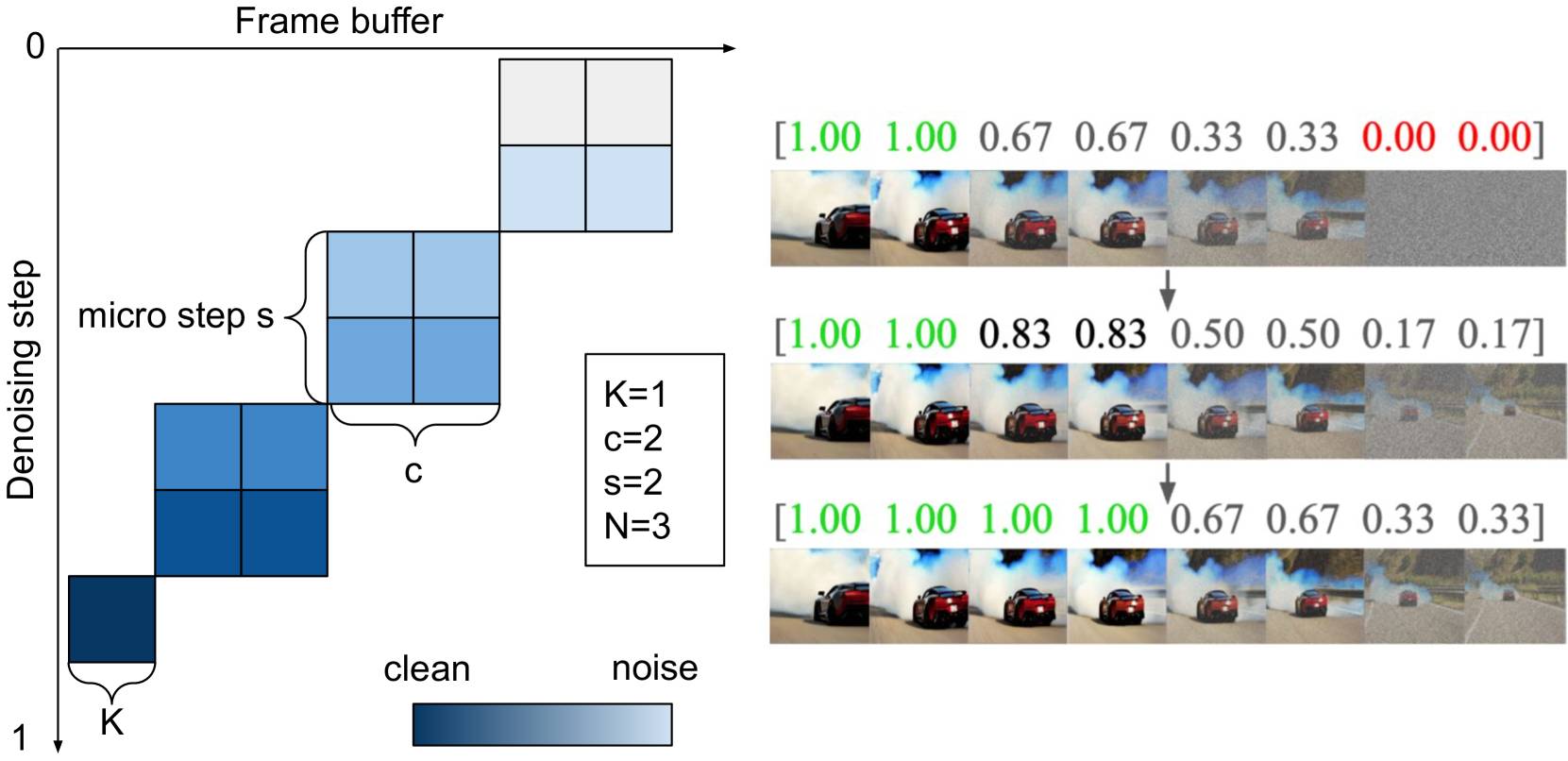

The system relies on a custom architecture built for speed. StreamDiT uses a moving buffer to process multiple frames simultaneously, working on the next frame while outputting the previous one. New frames start out noisy and are gradually refined until they are ready for display. According to the paper, the system takes about 0.5 seconds to produce two frames, which yield eight finished images after processing. That rate of eight images per half second matches the 16 frames per second quoted above.
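
The moving buffer can be pictured with a toy simulation. The sketch below is not StreamDiT's implementation: the function name `stream_frames`, the integer noise levels, and the buffer size of 4 are assumptions made for illustration. It only shows the scheduling idea, in which every pass refines all buffered frames by one level, the oldest frame is emitted once it is clean, and a fresh noisy frame joins the queue.

```python
from collections import deque


def stream_frames(num_frames, buffer_size=4):
    """Toy moving-buffer schedule (illustrative, not the paper's code).

    Returns the frame ids in the order they are emitted and the number of
    refinement passes that were needed.
    """
    buffer = deque()   # oldest (cleanest) frame sits at the left
    emitted = []
    next_id = 0
    passes = 0
    while len(emitted) < num_frames:
        # A new frame enters the buffer as pure noise.
        buffer.append({"id": next_id, "noise": buffer_size})
        next_id += 1
        # One pass refines every buffered frame by one noise level.
        for frame in buffer:
            frame["noise"] -= 1
        passes += 1
        # The oldest frame is displayed as soon as it is fully refined.
        if buffer[0]["noise"] == 0:
            emitted.append(buffer.popleft()["id"])
    return emitted, passes


if __name__ == "__main__":
    order, passes = stream_frames(num_frames=5)
    print(order, passes)
```

After a warm-up of `buffer_size` passes, each further pass emits one finished frame, so five frames take eight passes in this toy setup.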

Training for versatility

The training process was designed to increase versatility. Instead of focusing on a single video creation method, the model was trained with several approaches, using 3,000 high-quality videos and a larger dataset of 2.6 million videos. Training ran on 128 NVIDIA H100 GPUs. The researchers found that mixing chunk sizes from 1 to 16 frames yields the best results.

To achieve real-time performance, the team introduced an acceleration technique that cuts the number of required computation steps from 128 to just 8, with minimal impact on image quality. The architecture is also optimized for efficiency: rather than having every image element interact with all others, information is exchanged only between local regions.
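
A rough cost comparison illustrates why both optimizations matter. The sketch below assumes a one-dimensional sequence of 16 tokens split into non-overlapping windows of 4; the article does not describe the actual window layout, and `local_attention_mask` and `attended_pairs` are hypothetical helper names.

```python
def local_attention_mask(num_tokens, window):
    """mask[i][j] is True when tokens i and j share a window (assumed layout)."""
    return [
        [i // window == j // window for j in range(num_tokens)]
        for i in range(num_tokens)
    ]


def attended_pairs(mask):
    """Count the token pairs that exchange information under the mask."""
    return sum(sum(row) for row in mask)


if __name__ == "__main__":
    full = attended_pairs(local_attention_mask(16, 16))   # one window = full attention
    local = attended_pairs(local_attention_mask(16, 4))   # four windows of 4 tokens
    print(full, local)      # 256 64
    print(128 // 8)         # 16: factor by which the step count shrinks
```

In this toy setting, local windows cut the number of interacting token pairs from 256 to 64, and going from 128 to 8 steps means 16 times fewer sampling steps.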

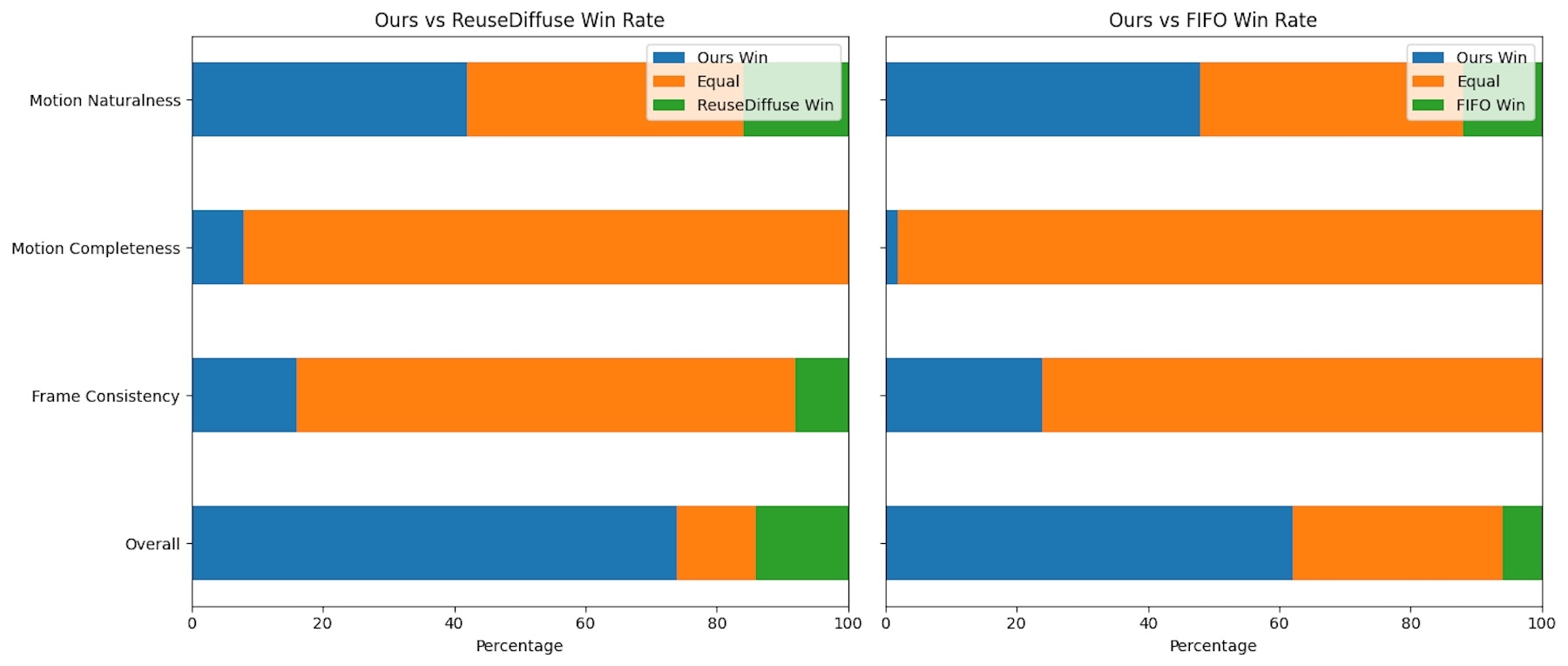

In head-to-head comparisons, StreamDiT outperformed existing methods such as ReuseDiffuse and FIFO-Diffusion, especially for videos with a lot of movement. Other models tended to create static scenes, while StreamDiT produced more dynamic and natural motion.

Human raters assessed the systems on motion fluidity, animation completeness, frame-to-frame consistency, and overall quality. StreamDiT came out on top in every category when tested on eight-second 512p videos.

Bigger model, better quality – but slower

The team also experimented with a much larger model with 30 billion parameters. It was not fast enough for real-time use, but it delivered even higher video quality. The results suggest that the approach can scale to larger systems.

Video: Kodaira et al.

Some limitations remain, including StreamDiT's limited ability to “remember” earlier parts of a video and occasional visible transitions between sections. The researchers say they are working on solutions.

Other companies are also exploring real-time AI video generation. Odyssey, for example, recently introduced an autoregressive world model that adapts video frame by frame in response to user input, making interactive experiences more accessible.