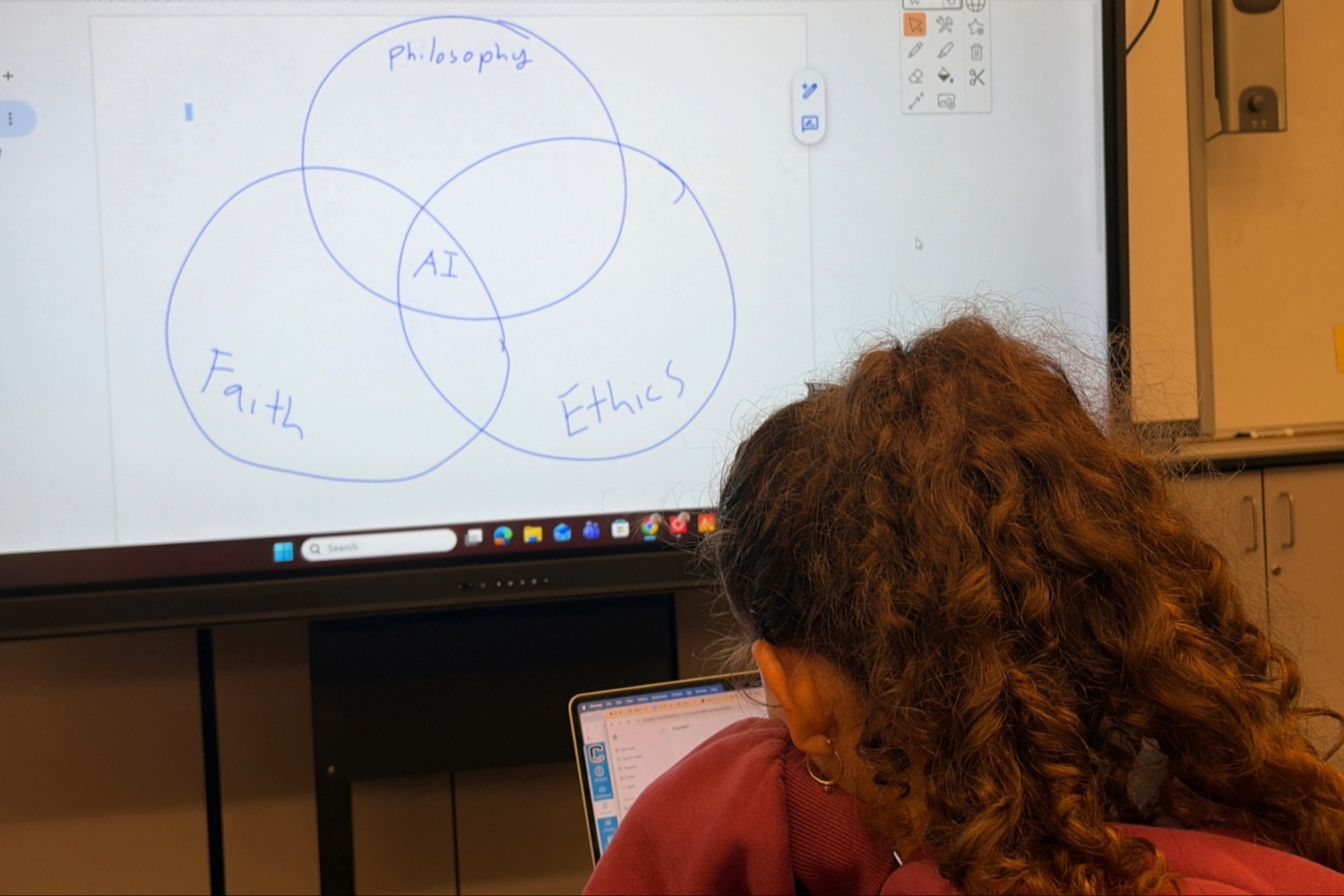

Carlmont High School students study a classroom projection of a Venn diagram showing the intersection of faith, philosophy, and ethics in the development of artificial intelligence. The diagram highlights the growing debate over the values embedded in AI tools used in education.

Anthropic consulted with Christian leaders to shape the ethical framework for its Claude chatbot, raising concerns about religious bias in artificial intelligence increasingly used in schools.

Brian Patrick Green, director of technology ethics at Santa Clara University, said Anthropic invited Christian leaders in March to discuss Claude’s moral formation. He attended the meeting and described the discussions to The Washington Post. Claude is used in educational tools such as MagicSchool, which the Sequoia Union High School District (SUHSD) adopted for educators and students at its March 4 board meeting. While current platforms are unaffected, Green’s account of Anthropic’s consultation has led educators to reconsider the principles that guide the software students use daily.

“We all have values. We all have how we were raised. We are all influenced by our community,” said Jadee Sun, a computer science teacher at Carlmont High School. “When chatbots are influenced and used to educate students, it can create bias and reduce people’s views and freedom of thought.”

As AI tools become more widely used in classrooms, the values embedded in those systems are under scrutiny, raising questions about neutrality in public education.

Anthropic positions itself as a leader in AI ethics through Constitutional AI, an approach that trains Claude on guidelines for being safe and helpful. The company reinforced this stance by refusing to relax safeguards even for national defense projects.

But critics say Anthropic’s recent talks with Christian leaders risk introducing bias by excluding other religious viewpoints.

“We need to get as many opinions as possible. AI should be a think tank for all different opinions, and the more diversity and knowledge, the more informed everyone will be,” Sun said.

As AI companies prioritize ethics, classrooms and organizations are debating what these principles should mean for students.

The College Board is one example, incorporating ethics-related learning objectives into its Advanced Placement (AP) computer science courses. AP Computer Science A (AP CSA) focuses primarily on Java programming while introducing the ethical and social implications of computing. AP Computer Science Principles (AP CSP), which Carlmont does not offer, emphasizes responsible computing.

But some AP CSA students say they have limited guidance on ethics, especially as AI becomes more central to their education and future careers.

“I think in AP CSA classes there is more emphasis on technical skills. We learn more about logic and problem-solving thinking,” said Gila Dremer, a sophomore at Carlmont High School.

Sun echoed that sentiment, noting that the course places little emphasis on ethics.

“It’s part of the AP CSA curriculum, but it’s not tested. If it’s not tested, students tend to deprioritize it,” Sun said.

Sun, who advises Carlmont High School’s student-led AI and Machine Learning Club, said that despite the gaps in the curriculum, students are actively exploring their interests in ethics.

“The club is researching machine learning and ways to improve the way AI systems learn,” Sun said.

Broader efforts to incorporate ethics-based approaches into AI education are beginning to emerge. In April, the University of Notre Dame’s Institute for Ethics and the Common Good (ECG) awarded up to $1 million in grants to develop a faith-based K-12 curriculum built on the DELTA framework. The effort raises questions about the place of a theology-influenced moral framework in secular public schools.

“In fact, we have not encountered any strong backlash against the faith angle that DELTA is taking. The core values of this framework are broadly agreed upon by everyone with whom we have shared this framework,” said ECG Program Manager Julia French.

French said the feedback is more focused on implementation.

“The bigger pushback we’ve received is that the framework doesn’t explicitly propose any concrete solutions,” French said. “Instead, it provides a lens through which to approach AI issues and the critical flexibility needed both for schools in Northern California to implement AI and for rural schools in Louisiana to implement AI.”

It remains unclear whether a faith-influenced framework is compatible with secular public schools.

“As with any resource or curriculum, districts will approach the implementation of the AI platform with an emphasis on transparency, continuous assessment, and alignment with educational goals,” said Barbara Reclis, director of instructional technology at SUHSD.

The marriage of faith and AI is not new. In February 2020, the Vatican-led Rome Call for AI Ethics outlined a human-centered approach to AI. Since then, the initiative has attracted more than 80 signatories, including religious leaders, technology companies, and major corporations.

Following the partnership with MagicSchool, SUHSD plans to establish guardrails that promote safe and responsible use of AI, in line with California Department of Education guidelines. The district will also host an AI listening session on April 28 to gather input from students, teachers, staff, parents, and community members about their priorities and concerns for AI integration in education.

“AI tools play an important role in education, and it’s important that they come from reputable companies,” Dremer said.

As AI becomes more integrated into everyday life, the debate about who defines its moral compass and whether that definition belongs in the classroom is only just beginning.

“Our top priority is to ensure our students are getting what they need to achieve academically while remaining safe,” Reclis said.