The quantum resource

The core quantum resource is a multimode optical network generated via parametric down-conversion (PDC) in a nonlinear waveguide pumped by a pulsed laser, as shown as in Fig. 1. The interaction Hamiltonian describing the generation of signal and idler fields of wavelengths λS, λI reads

$$\widehat{H}\propto \int \,d{\lambda }_{{\rm{S}}}d{\lambda }_{{\rm{I}}}\,J({\lambda }_{{\rm{S}}},{\lambda }_{{\rm{I}}})\,{\widehat{a}}^{\dagger }({\lambda }_{{\rm{S}}}){\widehat{b}}^{\dagger }({\lambda }_{{\rm{I}}})+{\rm{h}}.{\rm{c}}.,$$

(1)

where J(λS, λI), the so-called joint spectral amplitude (JSA), can be written as45,46,47

$$J({\lambda }_{{\rm{S}}},{\lambda }_{{\rm{I}}})=p({\lambda }_{{\rm{S}}},{\lambda }_{{\rm{I}}})\cdot \phi ({\lambda }_{{\rm{S}}},{\lambda }_{{\rm{I}}}).$$

(2)

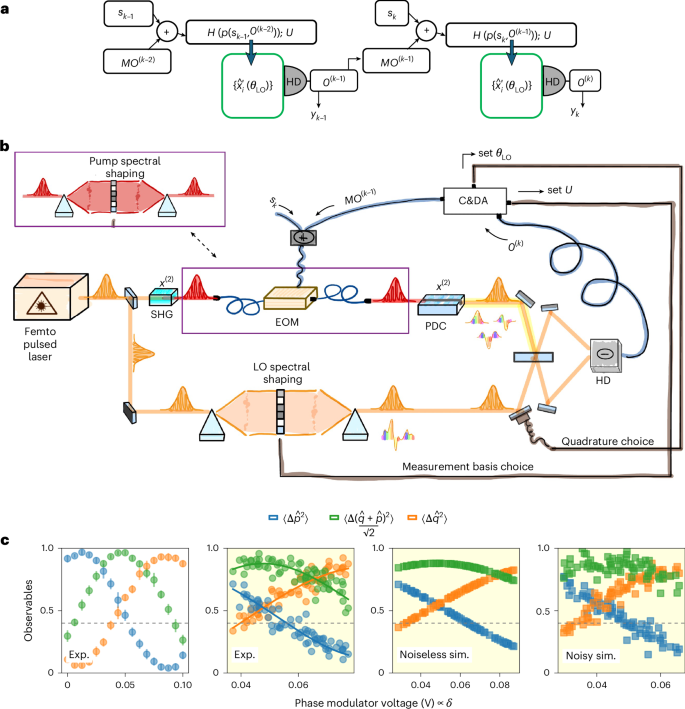

a, Quantum reservoir protocol. At each timestep k, the input sk is added to a feedback signal derived from the previous observables O(k−1). This sets the pump of the parametric process, determining the operators \({\widehat{x}}_{i}^{{\prime} }\) and their quantum correlations. The task output yk is obtained from the observables. b, Experimental set-up. Information is encoded in the global phase of the pump, generated via second harmonic generation (SHG) and modulated by an EOM. The more general encoding involves phase shaping of the pump spectrum. The multimode entangled state from PDC, and thus its observables, is tailored by this encoding. The control and data acquisition (C&DA) module includes electronics to set the measurement basis (U) via local oscillator shaping in homodyne detection (HD), measure the observables Om and generate the feedback. Phase locking is not shown. c, Nonlinear dependence of observables with the EOM voltage (proportional to the encoding phase δ, which is a linear combination of sk and O(k−1)). Left: experimental data (dots) in low- and average-noise regimes. The low-noise regime (first panel) is obtained by repeating the measurement ten times; the data are shown as mean values ± s.d. All the other panels present one-sample independent measurements and simulations to highlight the impact of the noise and therefore present no error bars. Right: simulated data (squares) using the Digital Twin model according to the experimental noise evaluation. The white panel spans a voltage range sufficient for observables to vary from 0 to 1 (phase difference slightly exceeding π/2). Yellow panels show observables recorded during training on a memory task.

The term p(λS, λI) is the complex pump spectral shape, while ϕ(λS, λI) is the phase-matching function, determined by the characteristics of the nonlinear medium. The multimode nature of the generated quantum system can be retrieved by performing a Schmidt decomposition of the JSA J(λS, λI) = ∑krkhk(λS)gk(λI), where hk(λS) and gk(λI) are two sets of orthonormal modes, spectral profiles of signal and idler channels, and rk are called the Schmidt coefficients. In case of degenerate signal and idler fields, as in our experimental set-up, hk = gk; and the Hamiltonian in equation (1) can be written as \(\widehat{H}\propto {{\rm{\sum }}}_{k}{r}_{k}\,{\widehat{A}}_{k}^{\dagger 2}+{\rm{h}}.{\rm{c}}.\), with \({\widehat{A}}_{k}^{\dagger }\) broadband creation operators associated to the modes hk. Acting on the vacuum, this Hamiltonian generates a tensor product of single-mode squeezed vacuum states, one in each mode hk(λS), also called supermodes. The used set-up can generate up to 40 Schmidt modes approximated by Hermite–Gauss polynomials as shown in Fig. 1.

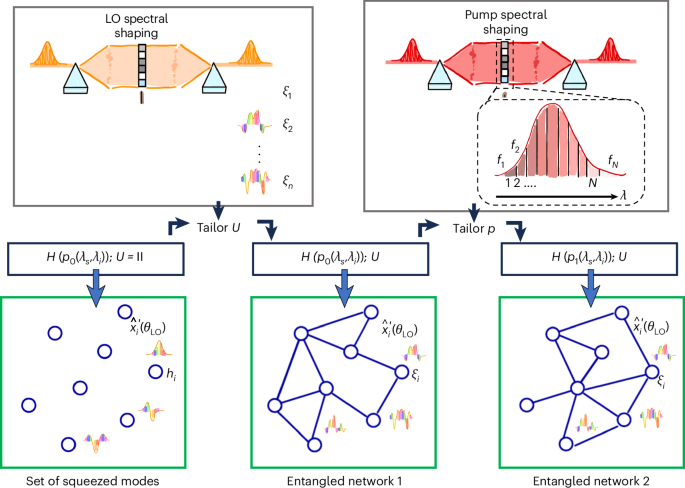

The generated quantum state is Gaussian and fully characterized by the quadrature covariance matrix σ, measured via homodyne detection. Mode-selective homodyne detection enables probing in arbitrary mode bases. In the supermode basis, σ is diagonal; in any other basis ξj(λS) related by a unitary U, it transforms as \({{\boldsymbol{\sigma }}}^{{\prime} }={{\boldsymbol{S}}}_{{\boldsymbol{U}}}^{T}{\boldsymbol{\sigma }}{{\boldsymbol{S}}}_{{\boldsymbol{U}}}\), where SU is the symplectic transformation induced by U (Supplementary Section B). The matrix \({{\boldsymbol{\sigma }}}^{{\prime} }\) is non-diagonal and the off-diagonal terms are signatures of entanglement correlations between the measured modes. Measuring on such basis allows to experimentally access entanglement correlations for quantum protocols35,48. The resource can then be pictured via an entangled network as shown in Fig. 2.

The nodes of the network are different spectro-temporal modes of the field. The set of modes are defined by the basis choice U. For convention when U = I, the supermode basis {hi} is chosen, and the modes are independently squeezed. In all the other mode bases {ξi}, the modes show entanglement correlations represented as links in a network. The general mode quadrature is \({\widehat{x}}_{i}^{{\prime} }({\theta }_{\mathrm{LO}})=\cos ({\theta }_{\mathrm{LO}}){\widehat{q}}_{i}^{{\prime} }+\sin ({\theta }_{\mathrm{LO}}){\widehat{p}}_{i}^{{\prime} }\) that corresponds to \({\widehat{q}}_{i}^{{\prime} }\) or \({\widehat{p}}_{i}^{{\prime} }\) if the local oscillator phase is set to be θLO = 0 or θLO = π/2. The correlations can be controlled by tailoring the pump of the process that modifies the Hamiltonian.

The phase-matching function term of the JSA in equation (2) is fixed and determined by the material and waveguide properties, and only the pump-envelope function can be modified in a reconfigurable way via mode-shaping techniques. This modifies the entanglement correlation between nodes as shown in Fig. 2. Therefore, we can explicitly write the dependence of the covariance matrix \({{\boldsymbol{\sigma }}}^{{\prime} }\) on the pump p and the chosen basis U as \({{\boldsymbol{\sigma }}}^{{\prime} }={{\bf{S}}}_{{\bf{U}}}^{T}\,{\boldsymbol{\sigma }}({\bf{H}}({\bf{p}}))\,{{\bf{S}}}_{{\bf{U}}}\).

In the following, we exploit the control on the pump-envelope function to encode information in the quantum system in the configuration of a fixed measurement basis U. The expectation value of general observables \({O}_{m}=\langle \Delta {\widehat{x}}_{i}\Delta {\widehat{x}}_{j}\rangle\) that will be used in the reservoir task can be retrieved from the covariance matrix \({{\boldsymbol{\sigma }}}^{{\prime} }(U,p)\). Given the pump shape, we have accurate numerical models of the process from the nonlinear interaction to the observables45,46,47, setting a Digital Twin of the experimental set-up as fully explained in Supplementary Section A.

Information encoding and memory control of the quantum reservoir

Tuning the pump phase profile, the pump-envelope function can be written as

$$p({\lambda }_{{\rm{P}}},{\boldsymbol{\delta }})=\mathop{\sum }\limits_{i=1}^{N}{f}_{i}({\lambda }_{{\rm{P}}}){e}^{i{\delta }_{i}},$$

(3)

where the fi(λP) are a set of N pump modes and the \({\boldsymbol{\delta }}={({\delta }_{i})}_{i=1}^{N}\in {{\mathbb{R}}}^{N}\) are tunable phase parameters that allow precise shaping of the JSA to easily encode information. The pump input modes fi that we consider are N frequency windows, delimiting a portion of our Gaussian pump profile f(λP), that we can precisely control with a spatial light modulator, for example. In the simple case of N = 1, when a unique global phase parameter is considered, an EOM is used as shown in Fig. 1. To perform the measurement, a set of n modes ξj(λS) is selected by the basis choice U via the local oscillator shaping in homodyne. The tailoring of N pump spectral components and the choice of the measurement basis composed by n elements are shown in the upper part of Fig. 2 (more details in Supplementary Section B).

Our system is endowed with memory through a real-time feedback mechanism: the results of previous measurements are fed back into the system to influence future states. Specifically, if we consider a discrete-time sequence of inputs {sk}, where k denotes the timestep, the pump parameters are updated according to

$${\delta }_{i}^{(k)}={\alpha }_{i}{s}_{k}+{\beta }_{i}+\mathop{\sum }\limits_{m}{M}_{im}{O}_{m}^{(k-1)}.$$

(4)

Here i ∈ 1, …, N, αi and βi are real-valued coefficients, and Mim is a feedback mask (a real matrix) that determines how previous measurement outcomes influence the current pump parameters. This determines the fading memory of the QRC. To summarize, N is the number of different input components that we encode in a single instance by manipulating several components of the pump spectrum: N > 1 can encode multidimensional inputs as well as enrich the encoding of a scalar input, repeated across the pump spectrum with different parameters (αi, βi, Mim). The quantities \({O}_{m}^{(k-1)}={\langle \Delta {\widehat{x}}_{i}\Delta {\widehat{x}}_{j}\rangle }^{(k-1)}\) are the values of the mth observable measured at timestep k − 1, and are derived from a subset of elements of the covariance matrix (i, j ∈ 1, …, 2n). These are measured by accumulating data on the homodyne signal. The number n of measured modes determines then the size of the output layer of our reservoir. The reservoir computing is described by

$$\left\{\begin{array}{l}{O}_{m}^{(k)}\propto {\sigma }_{ij}^{{\prime} (k)}| {{\bf{\sigma }}}^{{\prime} (k)}={{\bf{S}}}_{{\bf{U}}}^{T}\,{\bf{\sigma }}({\bf{H}}({\bf{p}}({{\boldsymbol{s}}}_{{\bf{k}}},{{\bf{O}}}^{({\bf{k}}-{\bf{1}})})))\,{{\bf{S}}}_{{\bf{U}}}\\ {y}_{k}={{\bf{w}}}^{T}{{\bf{O}}}^{(k)}+b\end{array}\right..$$

(5)

The reservoir is trained by adjusting the matrix w and parameter b to minimize the error in the chosen learning task. The scheme of the quantum reservoir with feedback is shown in the top part of Fig. 1. While we here focus on the case of feedback restricted to the last output at each cycle, the fading memory can be extended re-injecting a longer combination of past signals.

QRC tasks

We evaluate the performance of our system across a range of benchmark tasks considering two input encoding: the simple, experimentally implemented scheme with N = 1, and the more general encoding with N > 1. We begin with the XOR task, which assesses the system’s nonlinearity and short-term memory while requiring minimal resources. We then consider the memory task to characterize the system’s fading memory property. Finally, we turn to more complex benchmarks, including the double-scroll and parity check tasks, which challenge the system’s scalability and capacity to handle richer, higher-order nonlinear dynamics.

Experimental control of memory via global phase

The phase encoding via the EOM, as shown in Fig. 1, corresponds to N = 1, and the voltage applied changes linearly the pump phase δ:

$$p({\lambda }_{{\rm{P}}},\delta )=f({\lambda }_{{\rm{P}}}){e}^{i\delta },$$

(6)

where f(λP) is the natural Gaussian profile of the pump. As explained in Methods, with this type of encoding, the expressivity of the observables does not increase with the number of measured modes n; therefore, we use n = 1 as well. This means that we measure only the first supermode h1 and we can use as observables {O1, O2, O3} the set \(\{\langle \Delta {\widehat{q}}^{2}\rangle ,\langle \Delta {\widehat{p}}^{2}\rangle ,\langle \Delta \widehat{q}\Delta \widehat{p}\rangle \sim \langle \Delta {(\frac{\widehat{q}+\widehat{p}}{\sqrt{2}})}^{2}\rangle \}\). These three observables are sufficient for implementing several tasks, as they express three different nonlinear dependencies with respect to δ as shown in the lower part of Fig. 1. The first two panels show the three behaviours experimentally, in the case of a low-noise realization and a more noisy one, which is representative of the average noise in measurements. Assuming a Gaussian noise model, the typical variance of observables is recovered via a least square method. The result is used in the Digital Twin to simulate the expected behaviour as shown in the last two panels.

The unique non-zero term M that we use for the feedback mask is the one corresponding to \({\langle \Delta {\widehat{q}}^{2}\rangle }^{(k-1)}\). At each timestep k, we set the global phase depending on the input sk and the feedback as

$${\delta }^{(k)}=\alpha {s}_{k}+\beta +M{\langle \Delta {\widehat{q}}^{2}\rangle }^{(k-1)}.$$

(7)

M, α and β are randomly chosen within ranges that ensure both the input and the feedback contribute comparably to the phase and such to explore a nontrivial region of the observable space.

We begin with a binary task, the temporal XOR, where the target output is \({\widehat{y}}_{k}={s}_{k}\bigoplus {s}_{k-1}\) and the input is a random sequence of values sk ∈ {0, 1}. This task requires both nonlinearity and memory of the previous encoded input. The reservoir is trained on a set of inputs by applying linear regression to the measured observables to maximize accuracy (Supplementary Section C).

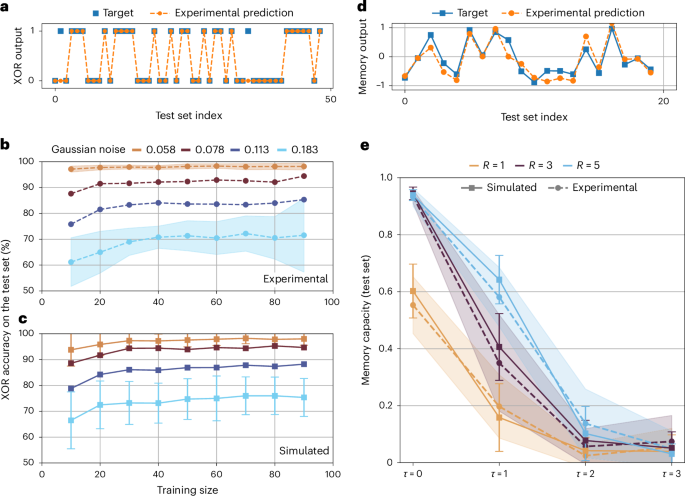

The performance of the reservoir is then evaluated using the accuracy again, but on a test sequence of new data. In Fig. 3a, we present an example of the test target values and the corresponding experimental prediction. In Fig. 3b,c, we show experimental and simulated accuracies as a function of training set size, for different levels of Gaussian noise. Experimental instabilities can introduce Gaussian noise in the measurement of the observables (Fig. 1), which can be mitigated by averaging over repeated measurements. These instabilities arise from mechanical and thermal fluctuations and are not intrinsic limitations of the protocol, but rather reflect the current performance of the experimental set-up. We see that the training saturates for set sizes around 100 and that noise affects the performance. Nevertheless, the reservoir could achieve experimentally test accuracies of 98 ± 1% for realistic levels of noise, with only 70 training steps. The Digital Twin behaves similarly to the experiment, as shown in Fig. 3c.

XOR task (experimental and simulated). a, Example of binary target (blue) and predicted (orange) outputs for the test set, with a test accuracy of 95.9% (correct predictions over total). Training and test set sizes are 99 and 49, respectively. b,c, Test accuracy versus training size: experimental data (dots) (b) and simulated data (squares) (c) under varying noise levels (light brown, brown, blue and light blue); these noise levels are determined by calculating the standard deviation around the expected behaviour of the observables (see the bottom of Fig. 1). Experimental error bars (shaded) represent mean ± s.d. over n = 50 independent reshufflings of the dataset (technical replicates). Simulated data points represent mean ± s.d. over n = 100 independent simulation runs (different random seeds; technical replicates). Error bars are shown only for the first and last noise levels for clarity. Larger training sets lead to smaller test sets, increasing variability owing to single misclassifications. Memory task (experimental and simulated). d, Continuous target (blue) and predicted (orange) output for a test set using 5 reservoirs with delay τ = 1 (capacity = 0.81). e, Capacities versus delay τ: experimental (dots) and simulated (squares) results for different reservoir numbers (light brown, dark purple and light blue). Experimental error bars represent mean ± s.d. over n = 100 reshufflings (technical replicates), and simulated error bars represent mean ± s.d. over n = 30 simulation runs (technical replicates). In both cases, simulations use a realistic experimental noise (for the memory task, we had a Gaussian noise of 0.057) model via the Digital Twin.

A way to further test the memory of the system is through the linear memory task, in which the reservoir is trained to reproduce past entries of the input series. The target function at timestep k is the input encoded τ steps in the past, \({\widehat{y}}_{k}={s}_{k-\tau }\), the input sk ∈ [−1, 1] being a random uniformly distributed sequence. Here the three previous observables are not sufficient. Therefore, we resort to spatial multiplexing, which is equivalent to use R different reservoirs at the same time. This is a classical reservoir computing technique used to enhance the expressivity of the system (see Methods for details), whereas the general encoding introduced in the next section leverages spectral multiplexing, an intrinsic resource of the quantum system. In Fig. 3d, we present an example of target and experimentally predicted values. In Fig. 3e, instead, we show the agreement between the experimental and simulated capacities (see Supplementary Section C for the definition) on test sets when varying τ and the number R of reservoirs. The simulations agree with the experimental results, confirming the advantage of multiplexing (better capacities for higher R) and the fading memory of the system (capacity gradually decreasing for higher delays).

Given the optimal agreement with the experiment, we use the Digital Twin to test the protocol on a more complex task. Concretely, we perform forecasting of chaotic time series such as the double-scroll electronic circuit49 and also (Supplementary Section H) the Lorenz system. Since the input vectors are three-dimensional, for the global phase encoding, we require at least three spatially multiplexed reservoirs (one per input signal) with additional reservoirs possible to enhance expressivity. The target function is the input one timestep in the future: \({\widehat{{\bf{y}}}}_{k}={{\bf{s}}}_{k+1}\). Concerning the double-scroll, in Fig. 4a, we present an example of target and predicted values, obtained with simulations with realistic noise values. In Supplementary Fig. 2, we present three plots (corresponding to V1, V2 and I) where the capacity is calculated while varying the training size and the number of reservoirs R. We prove that even with the experimental noise, we saturate the prediction capacity for relatively small values of R (below 15) and of training size (below 400).

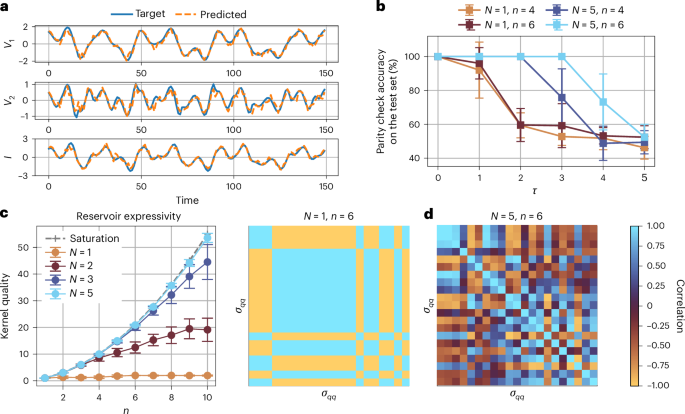

Double-scroll task (simulated). a, Target (blue) and predicted (orange) trajectories using a realistic experimental noise model (Gaussian noise = 0.057), with 15 reservoirs and a training size of 350. Prediction capacities for V1, V2 and I are 93.1%, 85.3% and 90.7%, respectively. General encoding (simulated). b, Parity check accuracy versus delay τ for different combinations of N (the number of phase segments that we imprint on the pump) and n (the number of measured output modes). Data points represent mean accuracy over n = 10 independent trials with different random seeds. Error bars denote ±s.d. across these trials. c, Kernel quality (rank of the observables matrix) versus number of measured modes n for different N values, measuring reservoir expressivity. Data points represent mean rank over n = 25 independent trials; error bars denote ±s.d. For N = 1 (light brown), increasing n does not improve expressivity, while for higher N values, the rank approaches the saturation predicted by a polynomial scaling (n(n + 1)/2). d, Correlation matrices between observables for N = 1 and N = 5 at n = 6 (\(n\,\widehat{q}\)-quadrature operators, yielding n(n + 1)/2 = 21 observables). For N = 1, we can see the high redundancy; for N = 5, correlations and thus observables behaviours are richer and more diverse. When the rank of the observables matrix is n(n + 1)/2, which is its dimension, it means that every observable is meaningful and not redundant.

Scaling the expressivity and boosting the memory with general encoding

In the global phase encoding, the expressivity is limited for any number of measured modes n > 1 (Methods). Therefore, to take advantage of the multimode nature of the system, we diversify the phase profile of the pump (N > 1). This means exploiting fully the phase encoding from equation (4), as shown in Fig. 4. In Fig. 4b, with this general encoding (N = 5), we achieve perfect capacities (100%) for a parity check task for τ ∈ {1, 2, 3} and prove the boost in memory when n, the number of measurement modes, is increased. Furthermore, as shown in Fig. 4c, the expressivity (see Supplementary Section C for definition) increases with n for N > 1. Specifically, for N > 5, we saturate a polynomial scaling with n as reported in ref. 25: the expressivity scales with n(n + 1)/2, which is also the dimension of the observables matrix (considering only \(\widehat{q}\) observables). This means that with a sufficiently large N, all the terms in our covariance matrix are independent. We highlight the increased diversity in behaviours of the observables in Fig. 4d. We also exploit the multidimensionality of the input (it can be a vector of size up to N), to implement again the double-scroll task, without multiplexing. The performances of the global phase encoding with multiplexing (R = 15 reservoirs) and the general encoding with N, n = 9, 9 are similar, but the gain in scalability is clear as with the general encoding use only one system, instead of multiplexing in 15 reservoirs.