Global shipping network representation

The global shipping network can be described as \(G=(V, E, W)\), where V represents the set of ports, E is the set of shipping routes connecting pairs of ports, and W is the collection of edge weights. In this context, each weight in W corresponds to the number of individual trips \(T_{ij}\) between ports i and j:

$$\begin{aligned} W = \{ w_{ij}: w_{ij} = \sum _{t} T_{ij}

(2)

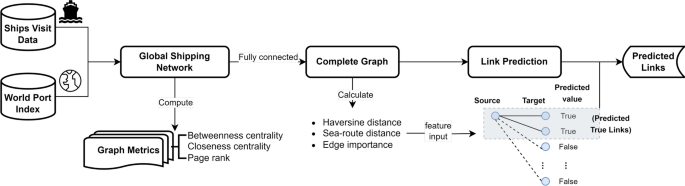

With the global shipping network defined, we performed network analysis to extract graph metrics as features for our proposed gravity-informed predictive model. We also conducted link prediction to identify potential origin-destination (OD) pairs within current shipping traffic, thus providing pre-knowledge to the predictive models. Figure 7 illustrates the pipeline of shipping network analysis and the link prediction process, which precedes the gravity-informed predictive models forecasting the ship traffic flows.

The experiment pipeline for analyzing and predicting links in the global shipping network from 2017 to 2019 includes constructing the global shipping network, calculating centrality and PageRank graph metrics, fully connecting the shipping network for disconnected and weakly connected components, and performing link prediction to identify potential real shipping connections.

Complex network metrics computation

We analyzed the shipping network by calculating various graph metrics for each node. These metrics included betweenness centrality, closeness centrality, and PageRank. The weights of the edges utilized in these metrics computations correspond to the number of trips along these individual shipping routes, as defined in Eq. 2. Subsequently, we introduced the graph metrics used for shipping network analysis:

Betweenness centrality \((C_B)\)

The Betweenness identifies how often a particular vertex is present in the shortest paths of a graph. A higher metric value indicates that the vertex is present in a larger number of shortest paths. In Eq. 3, \(\sigma (u,v)\) represents the number of shortest paths between vertices u and v, and \(\sigma (u,v|i)\) represents the number of those paths that pass through vertex i. The Edge-Betweenness, which assigns a Betweenness value to each edge in a graph, is worth noting. This variant considers \(\sigma (u,v|i)\) as the shortest paths between vertices u and v that pass through edge i. In the shipping network, betweenness centrality quantifies the frequency with which a port serves as an intermediary on the shortest paths between other ports; ports with high betweenness behave as bridges in the shipping network.

$$\begin{aligned} {C_B(i)} = \sum _{\left<u, v\right>\in V}{} \frac{\sigma (u,v|i)}{\sigma (u,v)}, ~ u \ne v \end{aligned}$$

(3)

Closeness centrality \((C_C)\)

The Closeness measures how closely a vertex is connected to all the other vertices in a network, as determined by the shortest paths between them (refer to Eq. 4). A vertex with higher centrality is considered more central and has a shorter average distance to all other vertices in the network43. In a port network, the closeness-central ports are easily accessible from all other ports in the shipping network. They are expected to have higher vessel traffic, providing insight into trading behavior and economic relationships inherent to their country and cities.

$$\begin{aligned} C_C(i) = \frac{|V|-1}{\sum _{j=1}^{|V|} d_{ij}^{N}}, ~ i \ne j \end{aligned}$$

(4)

PageRank \((P_{ij})\)

The PageRank44 is based on a mathematical model known as the stochastic Markovian process. This model defines a probability distribution over a set of states, where the probability of transitioning from one state to another is solely correlated with the state immediately preceding it:

$$\begin{aligned} P_{ij} = {\textbf{P}}(X_{n+1}=j\mid X_n=i) \end{aligned}$$

(5)

The algorithm assesses the significance of a vertex concerning the significance of the vertices linked to it. This way, it measures the vertex contribution based on the number of outgoing edges each adjacent vertex has, ensuring the uniqueness of the edge. The algorithm calculates the stationary transition probability matrix to determine vertex importance. The values obtained from this calculation indicate the significance of the vertices based on their access probability. Equation 6 illustrates the stationary matrix, where \(\pi ^{(n)}\) denotes the probability matrix at time n, and \({\textbf{P}}\) is the transition probability matrix.

$$\begin{aligned} \pi ^{(n-1)}{\textbf{P}} = \pi ^{(n)} \end{aligned}$$

(6)

As PageRank identifies essential nodes in a graph—ports with high metric values are more influential and likely to be frequently visited by ships from other important ports.

After calculating three different graph metrics, we noticed that they all showed positive non-linear correlations with each other. This means that as one metric increased, the others also tended to increase. Although each metric has a distinct interpretation, we found five ports from different bodies of water that had high values in all three metrics (see Table 2). These ports are particularly noteworthy because they excel in multiple areas. Many of the most influential ports are located at crucial marine traffic junctions that connect different oceans, such as the Gulf of Suez, the Gulf of Panama, and the Strait of Malacca.

Given that betweenness and closeness centrality measures involve the calculation of shortest distances, we also applied the reciprocals of the weights defined in Eq. 2 to calculate the graph metrics. This transformation aligns with interpreting weights as distances. However, the centrality rankings of the ports listed in Table 2 remained largely unchanged even after applying the reciprocal weights. This suggests that the topology and connectivity patterns are more critical attributes in the real-world shipping network characterized by sparsity and a highly skewed degree distribution. Furthermore, our shipping network modeling strategy aligns with the principles of gravity models. The strength of relationships is determined by the masses of sources and destinations, with larger objects driving more gravitational pull.

Fully connected shipping network

A component is a group of vertices connected to each other, and a network with more than one group of vertices that are not connected is called a non-connected graph. In an arbitrary graph, vertices i and j are in the same component \(G’=\{V’,E’\}\), \(V’ \subseteq V\), if and only if \(\{\forall i \in V’, \forall j \in V’| d_{ij} < \infty \}\), meaning that it is possible to travel from any vertex i to any vertex j in a finite number of steps, where \(d_{ij}:V \times V \rightarrow {\mathbb {R}}\) is a function that returns the distance between any two vertices45. Connected components for directed graphs are divided into weakly and strongly connected components, both of which identify absorbing regions.

When analyzing the shipping network, we observed the presence of 2 disconnected components and 13 components weakly connected (i.e., these subgraphs are unilaterally connected). These disconnected and weakly connected segments pose a challenge for our proposed framework, which relies on integrating features across all possible destinations from each source port. Such disconnections can compromise the model’s ability to generate accurate or well-defined predictions for shipping flows between isolated areas. Thus, we transformed the original network into a complete graph by connecting each pair of nodes \(V_i\) and \(V_j\) in the shipping network G. We assigned a small weight, \(w’=0.1\), to these newly established links and denoted the complete graph as \(G’\). More specifically, we set the weights of these non-existent edges to be inversely proportional to the distance. This approach considers the low probability of vessels leaving one port from a component and directly reaching another, ensuring an accurate representation of the network’s connectivity. This action mitigates the issues arising from data sparsity and establishes a uniform data structure, thereby enhancing the robustness of the flow estimation framework. With the fully connected shipping network \(G’\), we proceed with the flow estimation framework by forecasting whether a trajectory exists by solving a link prediction problem in \(G’\), which can be framed as a binary classification task within machine learning models. This action provides concrete source-destination pairs well-prepared for building feature sets and modeling the gravity force.

Network feature extraction, representation, and purpose

To perform link prediction, we first separated the shipping data from 2019 for testing and retained the data from 2017 and 2018 for training and validation. Then, we prepared features for this binary classification task. We calculated the Haversine distances between every pair of ports, which is defined in Eq. 7, measures the shortest distance between two vertices \(i \in V\) and \(j \in V\) on a sphere’s surface46. This metric incorporates the latitudes \(\phi _{i}\) and \(\phi _{j}\) of vertices i and j, the difference \(\bigtriangleup ^{\phi }_{ij}\) between \(\phi _{i}\) and \(\phi _{j}\), the difference \(\bigtriangleup ^{\lambda }_{ij}\) between their longitudes \(\lambda _{i}\) and \(\lambda _{j}\), and the Earth’s radius \({\textbf{R}}\) (6,371 km) — all values are in radians.

$$\begin{aligned} d_{ij}^{E} = 2 \times arcsin\left( {\text{sin}}^2\left( {\bigtriangleup ^{\phi }_{ij}}\right) + {\text{cos}}\left( \phi _{i}\right) \times {\text{cos}}\left( \phi _{j}\right) \times {\text{sin}}^2\left( {\bigtriangleup ^{\lambda }_{ij}}\right) \right) \times {\textbf{R}} \end{aligned}$$

(7)

However, Haversine distances only provide the geodesic approximation and cannot capture the real sea routes that the ships have traveled. Therefore, we also computed the sea-route distances between port pairs. We obtained a more accurate representation using a Python package that models the shortest routes and calculates the sea route distances using historical trajectories47. Finally, we used these distances to evaluate the importance level \(I_{ij}\) for each edge \(\langle i, j \rangle \) in the complete graph \(G’\). Inspired by straightness centrality measuring the node connectivity by the straightness of the shortest distance48, this metric combines the normalized flow size \(\hat{w}_{ij}\) and the normalized Haversine distance \(\hat{d_{ij}^E}\), using a small constant \(\epsilon \) to prevent division by zero. Connections with high shipping flows and shorter distances are deemed more important:

$$\begin{aligned} I_{ij} = \frac{\hat{w}_{ij}}{\hat{d_{ij}^E}+\epsilon },~ i, j \in V’, ~i \ne j \end{aligned}$$

(8)

Following is an explanation of the shortest distance and straightness centrality concepts in network analysis, from which we drew inspiration for our edge importance metric (Eq. 8):

Shortest distance \((d_{ij}^{N})\)

A path S between two vertices i and j is a sequence of connected vertices \(S^n = \left<v_1, v_2, \text {…}, v_{q-1}, v_q\right>\), where each consecutive vertex is connected through an edge \(\left<S^n_m, S^n_{m+1}\right> \in E\) for all \(m \in [1,|S^n|[\). The shortest directed path \(d_{ij}^{N}\) is obtained by minimizing the weight function \({ f :E^n \rightarrow {\mathbb {R}}}\), which describes the cost of the paths among all possible paths \({\mathbb {S}} = \{S^{1}, S^{2}, \ldots , S^{n}\}\) between vertices i and j49. The goal is to find the path with the minimum cost, which is determined by the sum of the weights of the edges. The weight is the straight-line distance between the vertices, such as in Eq. 9.

$$\begin{aligned} d_{ij}^{N}= min\left( \sum _{m=1}^{|S|-1} f (\left<S^n_m, S^n_{m+1}\right>), \forall S^n \in {\mathbb {S}} \right) \end{aligned}$$

(9)

Straightness centrality \((C_S)\)

This metric measures the straightness of paths connecting vertices i and j. It does so by comparing the deviation of the geodesic distance \(d_{ij}^{E}\) and the shortest path distance \(d_{ij}^{N}\) that links them50. A high centrality value indicates the existence of connections with distances close to the geodesic one. When the two distances match, it is the optimal scenario for communication between vertices.

$$\begin{aligned} C_S(i) = \frac{1}{|V|-1}\sum _{j=1}^{|V|} \frac{d_{ij}^{E}}{d_{ij}^{N}},~i \ne j \end{aligned}$$

(10)

Next, we incorporated the Haversine distance (Eq. 7), sea route distance47, and edge importance (Eq. 8) into the feature set. We then employed machine learning classification models, including Logistic Regression, KNeighbors, Decision Tree, XGBoost, and Random Forest, to determine the existence of a link between two ports. Upon labeling the real (true class) and pseudo (false class) links in the complete network \(G’\), we observed a significant class imbalance (2.3% real links and 97.7% pseudo links); consequently, we employed stratified sampling of the pseudo links based on their spatial distribution to balance the number of examples in each class in a manner that preserved the data’s characteristics. The models were engaged in a binary classification task, where we utilized 75% of data from 2017 and 2018 for training and 25% for validation. During the training phase, a 5-fold grid search cross-validation was implemented to fine-tune the hyperparameters of each model. Finally, we tested the models on unseen data from 2019, reinforcing the validity of our approach.

Features used with gravity-informed models

Based on predicted shipping trajectories, we have origin-destination pairs for preparing gravity model features. Features incorporated in gravity-informed models are listed in Table 3, including shipping fluxes, distances, international bilateral trade volume33, and the centrality graph metrics extracted from the global shipping network. In particular, the bilateral trade volume data is country-based. Therefore, we used the source and destination countries to extract trade volume data and integrate it into the feature set.

Transformer gravity model

In this study, we incorporate the self-attention mechanism of the Transformer architecture34 into our proposed framework. Compared to the conventional MLPs structure, the self-attention mechanism can inspect the input sequence and weigh to identify and prioritize the most relevant elements for generating the output. This characteristic enables our model to capture crucial dependencies in vessel mobility effectively flows. Additionally, the self-attention mechanism accomplishes high performance with fewer parameters, making it a computationally efficient model. This section shows how we model the Transformer Gravity, combining the characteristics of the Gravity Model and the self-attention mechanism.

Problem definition

Based on the pairs of source-destination ports obtained from the previous link prediction step, we encode the destination ports into 17 geographical regions according to the ISO-3166 standard51, where ports within regions are expected to have a similar set of organisms and, therefore, share similar habitat. Given the limitations in the granularity of our data, which does not extend to cover all geographic areas, this regional categorization makes it feasible to model and incorporate all intra-region locations within the landscape. Over the encoded representation of regions, we now aim to estimate the sizes of shipping mobility flows between each source-destination pair \((P_i, P_j)\), where \(P_i\) is the source port and \(P_j\) is the destination port that pertains to a unique geographical region. A ship departing from a source port may have one or more destination ports in the same or different regions, and following the Deep Gravity29 method, the goal is to estimate probabilities of the ships traveling to these geographical regions, becoming a multiclass classification task.

Predict target: We compile a set of 10 features from various sources for each pair \((P_i, P_j)\), such as shipping fluxes at ports, geodesic distances between source and destination, bilateral trade volume source from The Atlas of Economic Complexity33, the graph metrics computed with the global shipping network; detailed information about these extracted features is provided in Table 3 in the Methods. We represent the feature vector for each source-destination pair as \(x_{ij} = \left<m_1, m_2, \ldots , m_{10}\right>\). Given the ships from each source port travel to multiple destination regions, these feature vectors are aggregated into a single data sample \(X_i = \{x_{i1}, x_{i2}, \ldots , x_{iN}\}\), where N is the number of destination regions in the data sample, and \(1 \le N \le 17\). Each destination region is represented as a class, so the prediction has N classes for a sample. Since samples of varying lengths cannot be wrapped to a tensor for batch processing, we set the batch size to 1 for model input. Using \(\hat{y}_{ij}\) to represent the estimated size of the mobility flow between \((P_i, P_j)\), which is the target of the prediction, we have:

$$\begin{aligned} \hat{y}_{ij} = O_i \cdot p_{ij} \equiv O_i \frac{e^{f(x_{ij})}}{\sum ^{N}_{k=1} e^{f(x_{ik})}} \end{aligned}$$

(11)

where \(O_i\) represents the total number of ships departing from source port i. \(p_{ij}\) is the probability of ships traveling from source port i to the destination region j among all the possible destinations \(1 \sim N\), and \(f(x_{ij})\) is the model output of feature vector \(x_{ij}\).

Loss function: We used the cross-entropy loss function for the model optimization process, defined as:

$$\begin{aligned} L\left( \hat{y}_{ij}, y_{ij}\right) = -\sum ^{M}_{i=1}\sum ^{N}_{j=1} y_{ij} \cdot \ln \left( \frac{e^{f(x_{ij})}}{\sum ^{N}_{k=1}e^{f(x_{ik})}}\right) = -\sum ^{M}_{i=1}\sum ^{N}_{j=1} y_{ij} \cdot \ln \left( \frac{\hat{y}_{ij}}{O_i}\right) \end{aligned}$$

(12)

The function presents the total loss between the predicted flows \(\hat{y}_{ij}\), and the real flows \(y_{ij}\) for all the N destination regions from M source ports. The log-softmax function is applied to the model output \(f(x_{ij})\), and the loss function in terms of \(p_{ij}\) is obtained by replacing the log-term by Eq. 11 divided by \(O_i\).

Evaluation metric: The Common Part of Commuters (CPC)52,53,54 is designed to measure the similarity between two sets of data, which could represent various aspects such as the volume of commuters, traffic, or trade between different locations. The metric calculates how much overlap there is between the predicted values \(\hat{y}_{ij}\) and the actual values \(y_{ij}\). In Eq. 13, M represents the number of source ports and N the number of destination regions. The values \(\hat{y}_{ij}\) and \(y_{ij}\) correspond to the flow of vessels from source port i to destination region j, based on a model’s prediction and the actual observed values, respectively. In this case, a high value of the CPC means a significant overlap between the predicted and actual datasets. Specifically, it would indicate that the predictions accurately capture the true data distribution patterns, with most predictive quantities closely matching the actual quantities. Contrarily, a low value of the CPC would suggest that there is little overlap between the predictions and the actual data, indicating that the model’s predictions diverge significantly from the observed data, which could be due to underprediction or overprediction in various parts or a general misalignment of the model with the reality. Accordingly, CPC considers the minimum common value between the predicted and actual data for each pair of source and destination ports, measuring the intersection over the values union:

$$\begin{aligned} CPC(\hat{y}_{ij}, y_{ij}) = \sum ^{M}_{i=1}\frac{2\sum ^{N}_{j=1} \min (\hat{y}_{ij}, y_{ij})}{\sum ^{N}_{j=1} \hat{y}_{ij} + \sum ^{N}_{j=1} y_{ij} } \end{aligned}$$

(13)

To evaluate the model’s performance comprehensively, besides the CPC, we included the Normalized Root Mean Square Error (NRMSE)—lower is better—and Pearson Correlation Coefficients (Corr.)—higher is better—to measure the normalized errors and the correlation between the predictions and observations, and to provide a multi-faceted assessment of the model’s accuracy and reliability, defined as:

$$\begin{aligned} NRMSE(\hat{y}_{ij}, y_{ij}) = \sum ^{M}_{i=1} \frac{\sqrt{\frac{1}{N}\sum _{j=1}^{N}(y_{ij} – \hat{y}_{ij})^2}}{\max (y_{ij}) – \min (y_{ij})} \end{aligned}$$

(14)

$$\begin{aligned} Corr.(\hat{y}_{ij}, y_{ij}) = \sum ^{M}_{i=1} \frac{\sum _{j=1}^{N} (y_{ij} – \overline{y}_{ij})(\hat{y}_{ij} – \overline{\hat{y}}_{ij})}{\sqrt{\sum _{j=1}^{N} (y_{ij} – \overline{y}_{ij})^2 \sum _{j=1}^{N} (\hat{y}_{ij} – \overline{\hat{y}}_{ij})^2}} \end{aligned}$$

(15)

Model framework

Our proposed Transformer Gravity model is composed of three main components: (1) the input embedding layer, which maps the input feature vectors to a higher-dimensional space that is compatible with the Transformer architecture; (2) the multilayer Transformer encoder, which involves the self-attention and feed-forward blocks that process the embeddings to capture complex relationships between input features; and, (3) the output linear layer, which maps the processed embeddings to the target flow predictions, computes loss and CPC and performs backpropagation based on the loss values. The input embedding layer (1) helps us organize and make sense of that data by putting it into a format that is easier for a machine to understand. This format is represented as vectors of numerical values, where each dimension captures specific input data characteristics and is fed into subsequent layers of the model for further processing until achieving the output layer. The output represents the predicted value, which, in our case, is the inflow or outflow of vessels between a pair of ports. The multilayer Transformer encoder (2) looks at all the different parts of information organized in the first component and finds out how they relate. Transformers are known for spotting patterns and connections between different parts of the training data. This helps our model understand the complex relationships between various aspects of the shipping data, like which routes are busiest, or ports are most important. The output linear layer (3) helps us make predictions based on the patterns and connections found by the previous layer. This layer will predict how much shipping traffic will be in a particular area. If the predictions are not entirely correct, this layer also helps us learn from our mistakes and improve our future guesses due to the backpropagation mechanism. Backpropagation is a process where the model adjusts its internal parameters based on the difference between its predictions and the actual outcomes, gradually refining its understanding of the data and improving its predictive power over time. Figure 5 presents the model pipeline using two stacked Transformers modules and provides a glance at the layer’s relationships.

Linear embedding: The embedding layer takes the input sample, which is a sequence of feature vectors represented as \(\{x_{i1}, x_{i2}, \ldots , x_{iN}\}\). It then maps each vector into a higher-dimensional space using a linear transformation that involves a weights matrix and a bias vector. The result of this transformation is the feature embedding \({{\textbf {z}}}_0\) for each vector \(x_{ij}\), which can be obtained following the subsequent calculation:

$$\begin{aligned} {{\textbf {z}}}_0 = x_{ij} \cdot {W_0}^{\top } + b_0, ~ x_{ij} \in {\mathbb {R}}^{1 \times n}, ~ W_0 \in {\mathbb {R}}^{d \times n}, ~ b_0 \in {\mathbb {R}}^{1 \times d} \end{aligned}$$

(16)

The input vector \(x_{ij}\) with 10 features is represented by \(W_0\) (the weight matrix) and \(b_0\) (the bias vector). This input vector is then embedded into a 64-dimensional space, resulting in an embedded output \({{\textbf {z}}}_0 \in {\mathbb {R}}^{1 \times d}\). Subsequently, the embedded output is passed to the multi-head attention encoder layers as the input.

Multi-head attention: A multi-head attention encoder comprises a multi-head self-attention mechanism and a feed-forward network, followed by layer normalization (as illustrated in Fig. 5). Within each self-attention head, the input \({{\textbf {z}}}_0\) is transformed into queries \(Q_h\), keys \(K_h\) and values \(V_h\) using the weight matrices \(W_Q\), \(W_K\) and \(W_V\), respectively. Self-attention then calculates \(Head_h = softmax\left( \frac{Q_h \cdot {K_h}^{\top }}{\sqrt{d_k}}\right) \cdot V_h\), where \(d_k = \frac{d}{h}\) is the dimension of the queries \(Q_h\) and keys \(K_h\) and is used to scale the product \(Q_h \cdot {K_h}^{\top }\). Multi-head attention combines all heads and linearly transforms the concatenation to produce \({{\textbf {z}}}_1 \in {\mathbb {R}}^{1 \times d}\):

$$\begin{aligned} {{\textbf {z}}}_1 = Concat(Head_1, \ldots , Head_h) \cdot W_C, ~~ W_C \in {\mathbb {R}}^{h d_v \times d} \end{aligned}$$

(17)

Our experiment defines the number of heads as \(h=2\), and the heads run operations in parallel.

Layer normalization: After the multi-head attention layer, a dropout layer randomly sets a certain percentage of elements to 0. The dropout ratio p is set to 0.1 as per Eq. 18. Next, a skip connection is applied to add the input features \({{\textbf {z}}}_0\) to the output of the dropout layer \({{\textbf {z}}}_{dropout}\) before the self-attention block. This helps retain the input features’ information and prevents vanishing gradients during backpropagation. The output of this connection, \({{\textbf {z}}}_{skip}\), is then normalized using Eq. 18, where \(\mu \) is the mean and \(\sigma \) is the standard deviation of \({{\textbf {z}}}_{skip}\) with a small bias. The affine parameters \(\alpha \) and \(\beta \) are initialized as 1 and 0, respectively, and can be optimized during training.

$$\begin{aligned} \begin{aligned} {{\textbf {z}}}_{skip}&= {{\textbf {z}}}_0 + {{\textbf {z}}}_{dropout} \equiv {{\textbf {z}}}_0 + Dropout({{\textbf {z}}}_1, p) \\ {{\textbf {z}}}_2&= LayerNorm({{\textbf {z}}}_{skip}) \equiv \frac{{{\textbf {z}}}_{skip}-\mu }{\sigma } \times \alpha + \beta \end{aligned} \end{aligned}$$

(18)

Feed-forward network: After the multi-head attention block processes the input, the resulting output is fed into a feed-forward neural network composed of an MLP structure. The connectivity of each layer in the feed-forward block’s structure is illustrated in Fig. 5. We formulate the output vectors from the layers using corresponding weight updates in Eq. 19. Similar to \({{\textbf {z}}}_{skip}\), a skip connection adds the vector \({{\textbf {z}}}_2\) to the output \({{\textbf {z}}}_4\) to preserve information from the self-attention block.

$$\begin{aligned} \begin{aligned} {{\textbf {z}}}_3&= Dropout \left( ReLU( {{\textbf {z}}}_2 \cdot W^{\top }_1 + b_1), p\right) \\ {{\textbf {z}}}_4&= Dropout \left( ({{\textbf {z}}}_3 \cdot W^{\top }_2 + b_2), p \right) \\ {{\textbf {z}}}_5&= LayerNorm({{\textbf {z}}}_4 + {{\textbf {z}}}_2) \end{aligned} \end{aligned}$$

(19)

Training and optimization: Our Transformer Gravity model has three transformer encoder layers stacked together to capture complex input embedding dependencies. Still, the number of stacked layers can be changed to match different requirements and needs. The output value \({{\textbf {z}}}_5\) is obtained by passing the output of Eq. 19 through a second and later third multi-head attention and feed-forward network block. The output value of the model is denoted as \(f(x_{ij})\), and it produces a sequence of output values for a single data sample with a length of N. This sequence is then applied to a softmax function to produce probabilities \(\{p_{i1}, p_{i2}, \ldots , p_{ij},\ldots , p_{iN}\}\) for N classes. The predicted flow sizes \(\{ \hat{y}_{i1}, \hat{y}_{i2}, \ldots , \hat{y}_{ij},\ldots , \hat{y}_{iN} \}\) for each destination are obtained by multiplying these probabilities with the total outflows \(O_i\) from the source port, as given in Eq. 11. The loss for every sample is computed using Eq. 12, and these losses are collected to derive the total loss. The model’s parameters are updated with each loss by processing a single sample. The summed CPC across all samples is calculated using Eq. 13. After each training epoch, the summed CPC is divided by the number of samples M (i.e., the number of source ports) to obtain the average CPC of that epoch. We used the Adam optimizer with \(L_2\) regularization on the weights during training. We reduced the learning rate by a factor of 0.1 when there was no improvement in the validation CPC after 10 epochs. We used early stopping when there was no improvement with the validation CPC after 20 epochs to prevent overfitting and improve training efficiency.