Let me tell you, I’m starting to feel like I’m riding the crest of a wave when it comes to the multifaceted glory of AI. Back in the 2010s, I thought it was amazing that AI could look at images of cats, dogs, chickens, and penguins and distinguish between these little villains. It may seem “outdated” now, but as recently as ten years ago, this was considered mind-boggling.

Since then, I’ve come to think of that as the true dawn of perceptual AI.

In the years that followed (and perceptual AI pales in comparison to everything that came after), we’ve been contending with augmentative AI, assistive AI, generative AI, and, more recently, agentic AI. Now we live in a dizzying age of perceptual, augmentative, assistive, generative, agentic, and physical AI (not to mention vibe coding).

Human-in-the-Loop (HITL) is also being mentioned increasingly often. This refers to an AI development approach in which humans interact with machine learning models during training to improve accuracy, safety, and ethical practices. The idea is that humans ensure the reliability of AI systems by providing feedback and training data and by monitoring the systems’ decisions. How long will it be before this term is replaced by Human-Out-of-the-Loop (HOOTL*)? (*In the days of darkness ahead, remember that you first heard these words here.)
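To make the HITL idea a little more concrete, here is a minimal sketch of one common pattern: confidence-based routing, where the model’s confident predictions are accepted and the uncertain ones are handed to a human, whose answers are kept as extra training data. The names `Prediction`, `route_predictions`, and `ask_human`, and the 0.9 threshold, are all hypothetical choices for illustration, not any particular product’s API.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Prediction:
    label: str
    confidence: float  # model's confidence in [0, 1]


def route_predictions(
    preds: List[Prediction],
    ask_human: Callable[[Prediction], str],  # hypothetical human-review hook
    threshold: float = 0.9,                  # hypothetical confidence cutoff
) -> Tuple[List[str], List[Tuple[Prediction, str]]]:
    """Accept confident predictions; send the rest to a human reviewer.

    Returns the final labels plus the (prediction, human label) pairs,
    which could be fed back into the next round of training.
    """
    final_labels: List[str] = []
    feedback: List[Tuple[Prediction, str]] = []
    for p in preds:
        if p.confidence >= threshold:
            final_labels.append(p.label)      # trust the model
        else:
            human_label = ask_human(p)        # a human makes the call
            final_labels.append(human_label)
            feedback.append((p, human_label))  # keep for retraining
    return final_labels, feedback


# Usage: the uncertain "chicken" is overruled by the (simulated) human.
preds = [Prediction("cat", 0.98), Prediction("chicken", 0.55)]
labels, feedback = route_predictions(preds, ask_human=lambda p: "penguin")
print(labels)  # ['cat', 'penguin']
```

Raising the threshold sends more decisions to humans (safer but slower); lowering it moves the system closer to HOOTL territory.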

It’s also fascinating to watch from ringside as new companies enter the AI space (where no one can hear you scream). Some of these entities are with us for only a short time, while others seem to hit the ground running and take off before I can catch my breath.

An example of such a fast-growing company is EnCharge, which officially launched in December 2022 (less than four years ago as I write these words). I spoke with two of the founders in early 2023, just over a month after the company came out of stealth mode (see Executing Extreme AI Analog Computing Without Semiconductors).

Simply put, AI can be broadly divided into training on the front end and inference on the back end. Training is performed once (or a relatively small number of times), but inference is run millions or billions of times. Approximately 95% to 99% of inference involves matrix operations, the core of which are multiply-accumulate (MAC) operations. The folks at EnCharge have come up with a new way to perform these multiply-accumulate operations in low-power, high-performance analog. The details are explained in my Executing Extreme AI Analog Computing Without Semiconductors article.
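To see why multiply-accumulate operations dominate, consider a single matrix-vector product, the workhorse of a neural-network layer. The sketch below (plain Python, purely illustrative) writes it out as explicit MACs and counts how many a modestly sized 4096 × 4096 layer needs for just one input vector; the layer size is an arbitrary example, not a figure from EnCharge.

```python
from typing import List


def matvec_mac(weights: List[List[float]], x: List[float]) -> List[float]:
    """Matrix-vector product written as explicit multiply-accumulate (MAC) steps."""
    outputs = []
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            acc += w * xi  # one MAC: multiply, then accumulate
        outputs.append(acc)
    return outputs


def mac_count(rows: int, cols: int) -> int:
    """Number of MACs for a (rows x cols) matrix times a vector."""
    return rows * cols


W = [[1.0, 2.0, 3.0],
     [0.5, -1.0, 4.0]]
x = [1.0, 1.0, 2.0]
print(matvec_mac(W, x))       # [9.0, 7.5]
print(mac_count(4096, 4096))  # 16777216
```

Roughly 16.8 million MACs for one layer and one input, multiplied by many layers and billions of inference runs, is why making each MAC cheaper pays off so handsomely.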

Now, just a few years later, EnCharge is going from strength to strength. I was just chatting with the company’s CEO, Naveen Verma, who was kind enough to bring me up to speed on the latest developments.

What’s particularly interesting about EnCharge is that it’s not just building another digital AI accelerator. The secret sauce lies in using analog techniques to perform the core multiply-accumulate operations directly in memory. Reducing the need to transfer data between memory and compute blocks, which is a major source of power consumption in traditional architectures, allows dramatically greater efficiency to be achieved.
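The article doesn’t say which analog mechanism EnCharge uses, so the following is a generic, textbook-style toy model of in-memory MAC, not EnCharge’s circuit. It assumes weights are stored in the memory array as conductances, inputs are applied as voltages, each cell contributes a current of G × V, and the currents on a shared column wire simply add up, so the sum happens without the weights ever leaving the array. An idealized ADC (8 bits here, an arbitrary assumption) then digitizes each column, and optional Gaussian noise stands in for analog non-idealities.

```python
import random
from typing import List


def adc(value: float, full_scale: float, bits: int) -> float:
    """Idealized ADC: clip to [-full_scale, full_scale], snap to one of 2**bits codes."""
    n_steps = 2 ** bits - 1  # 2**bits codes span 2**bits - 1 intervals
    step = 2 * full_scale / n_steps
    clipped = max(-full_scale, min(full_scale, value))
    return round((clipped + full_scale) / step) * step - full_scale


def analog_column_mac(
    conductances: List[List[float]],  # stored weights: conductances[row][col]
    voltages: List[float],            # one input voltage per row
    noise_std: float = 0.0,           # assumed analog noise (0 = ideal)
    adc_bits: int = 8,                # assumed ADC resolution
    full_scale: float = 10.0,         # assumed ADC input range
) -> List[float]:
    """Toy in-memory MAC: each column current is sum(G * V) by Ohm's and Kirchhoff's laws.

    Note: real cells cannot have negative conductance (designs typically use
    differential pairs); this toy model allows negative values directly.
    """
    n_rows, n_cols = len(voltages), len(conductances[0])
    outputs = []
    for c in range(n_cols):
        current = sum(conductances[r][c] * voltages[r] for r in range(n_rows))
        if noise_std > 0:
            current += random.gauss(0.0, noise_std)  # analog non-idealities
        outputs.append(adc(current, full_scale, adc_bits))
    return outputs


G = [[1.0, 0.5],
     [2.0, -1.0],
     [3.0, 4.0]]
V = [1.0, 1.0, 2.0]
# Ideal sums are 9.0 and 7.5; the ADC adds a small quantization error.
print(analog_column_mac(G, V))
```

The trade-off this model exposes is the essence of analog compute: the summation is nearly free, but precision is limited by noise and ADC resolution.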

Of course, smart silicon is only half the battle. Since we last spoke, EnCharge has also built a software stack, including quantization tools, compiler flows, runtime support, and firmware, to help real-world AI workloads take full advantage of all this analog wizardry.
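The article doesn’t describe how EnCharge’s quantization tools work. As a generic illustration of what “quantization” means here, the sketch below shows symmetric per-tensor int8 quantization, one widely used scheme for mapping floating-point weights onto the narrower numeric range that low-precision hardware handles efficiently. The int8 format and per-tensor scaling are assumptions chosen for simplicity.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8: scale = max|x| / 127, q = round(x / scale)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map integer codes back to approximate floating-point values."""
    return [qi * scale for qi in q]


weights = [0.12, -0.5, 0.33, 0.0]
q, scale = quantize_int8(weights)
print(q)  # [30, -127, 84, 0]
# Each reconstructed weight is within scale / 2 of the original.
```

Real toolchains add refinements such as per-channel scales and calibration on sample data, but the core idea of trading a little precision for much cheaper arithmetic is the same.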

EnCharge’s chaps and chapesses are still pursuing an edge-to-cloud strategy. Their target is outside the data center, close to the edge, but not at the very edge. Their initial focus was on large-scale automation tasks requiring cutting-edge AI/ML models in areas such as industrial automation, smart manufacturing, smart retail, warehouse logistics, and robotics.

While automation at scale remains a key focus, a lot has happened since 2023, resulting in an urgent need to bring serious AI capabilities to end users’ local computing platforms. Speaking for myself, I would love to be able to run my own high-end AI locally on my home or office workstation.

So you can only imagine my surprise and delight when I was introduced to the EN100. The folks at EnCharge describe the EN100 as “a revolutionary AI accelerator that delivers 200 TOPS of AI computing power in an ultra-efficient chip. Designed to bring advanced AI capabilities to laptops, workstations, and other computing appliances, the EN100 enables a new era of personalized AI at the edge.”

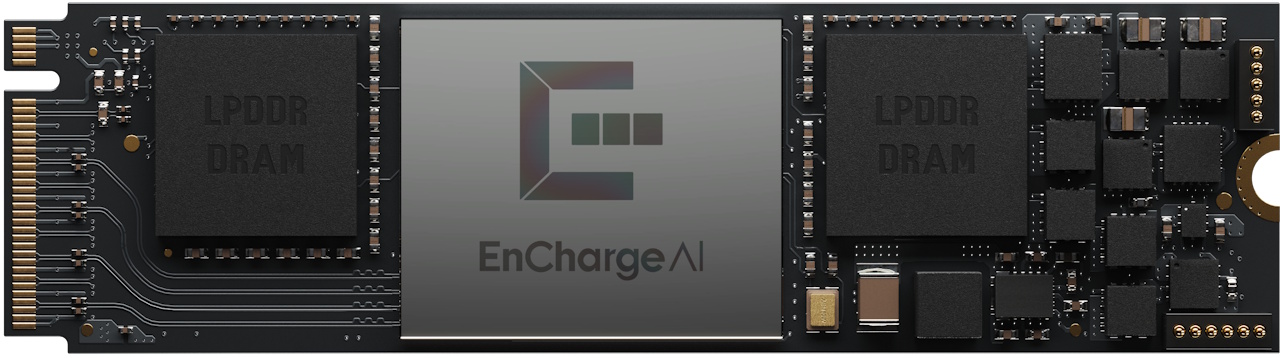

As shown below, a single EN100 device implemented in a 16nm CMOS process node is available on an M.2 2280 card for use in laptop computers. This bold beauty delivers over 200 TOPS while consuming only 8.25 W. It has 32 GB of onboard memory with up to 68 GB/s of bandwidth (amazing!).
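A quick way to put these numbers in perspective is to convert them into efficiency. The sketch below uses only the 200 TOPS and 8.25 W figures quoted above; the article doesn’t state the numeric precision behind the TOPS figure or whether a MAC is counted as one or two operations, so treat the results as rough, peak-based estimates rather than a like-for-like comparison with other chips.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Energy efficiency in TOPS/W (equivalently, tera-operations per joule)."""
    return tops / watts


def fj_per_op(tops: float, watts: float) -> float:
    """Energy per operation in femtojoules.

    J/op = W / (TOPS * 1e12); multiplying by 1e15 fJ/J gives W / TOPS * 1000.
    """
    return watts / tops * 1000


print(f"{tops_per_watt(200, 8.25):.1f} TOPS/W")  # 24.2 TOPS/W
print(f"{fj_per_op(200, 8.25):.0f} fJ/op")       # 41 fJ/op
```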

A single EN100 on an M.2 card for use in laptops (Source: EnCharge)

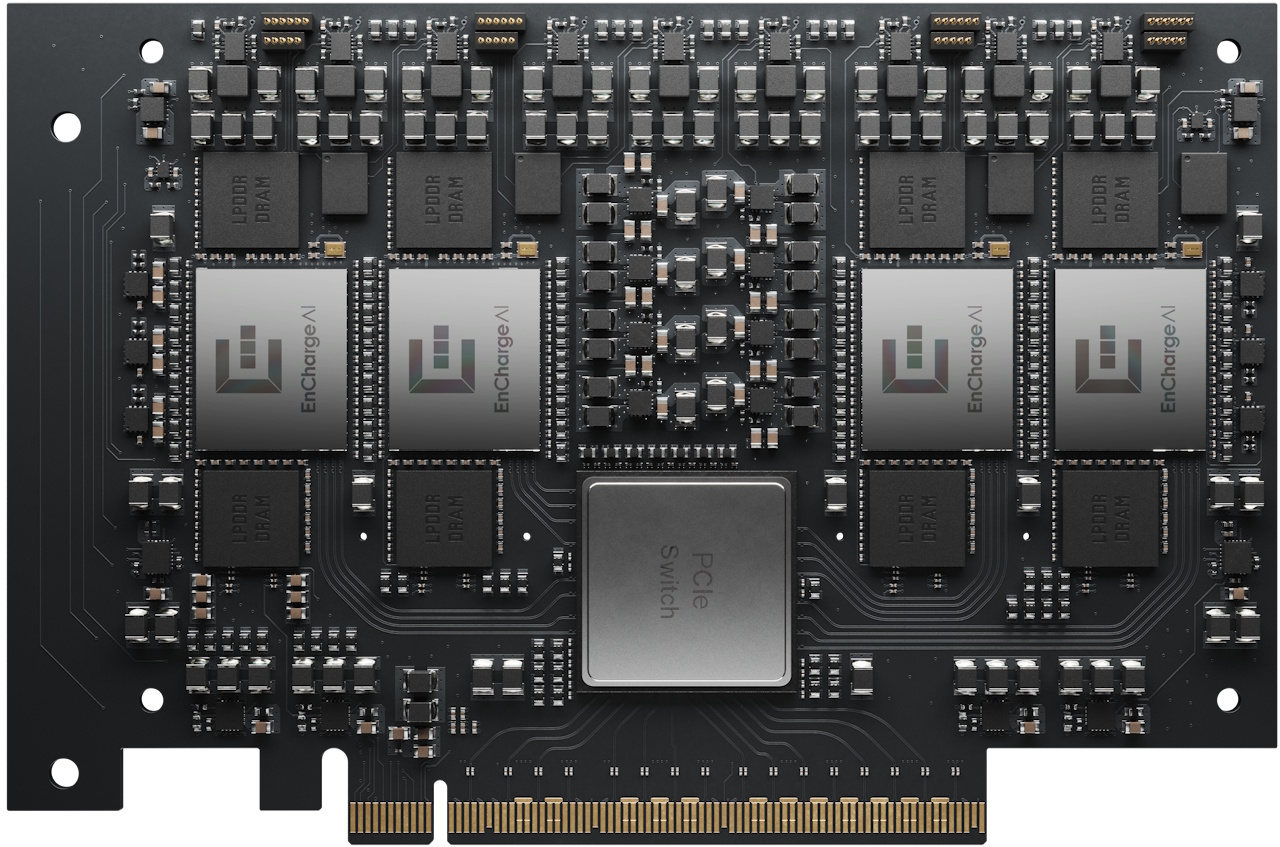

Alternatively, you can deploy four EN100 devices on a PCIe card as shown below. This amazing beast provides ~1 PetaOPS of peak performance while consuming only ~40 W of power. It has 128 GB of onboard memory and an aggregate bandwidth of 272 GB/s (Wow^2!).
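It’s instructive to compare the four-device card with four times the single-device M.2 figures quoted above. The sketch below does naive linear scaling (a simplifying assumption that ignores any card-level circuitry); `AcceleratorSpec` and `scale_out` are hypothetical helpers for illustration.

```python
from dataclasses import dataclass


@dataclass
class AcceleratorSpec:
    tops: float
    watts: float
    memory_gb: float
    bandwidth_gb_s: float


def scale_out(dev: AcceleratorSpec, n: int) -> AcceleratorSpec:
    """Naive linear scaling to n devices (ignores card-level overhead)."""
    return AcceleratorSpec(dev.tops * n, dev.watts * n,
                           dev.memory_gb * n, dev.bandwidth_gb_s * n)


en100 = AcceleratorSpec(tops=200.0, watts=8.25, memory_gb=32.0, bandwidth_gb_s=68.0)
card = scale_out(en100, 4)
print(card.tops, card.watts, card.memory_gb, card.bandwidth_gb_s)
# 800.0 33.0 128.0 272.0
```

Memory (128 GB) and bandwidth (272 GB/s) match the quoted card figures exactly. Compute and power don’t quite: 800 TOPS versus ~1 PetaOPS is consistent with each device delivering “over” 200 TOPS at peak, and 33 W versus ~40 W presumably leaves a few watts for whatever else is on the card, though the article doesn’t break that down.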

This brings data-center-class AI performance, with GPU-level computing power, to desktops, workstations, and on-premises servers at a fraction of the cost and power consumption. As the folks at EnCharge say, it’s “ideal for professional AI applications in secure, sovereign, multi-user environments.”

Four EN100s on a PCIe card for use in desktops, workstations, and on-premises servers (Source: EnCharge)

And this may be just the beginning. As Naveen pointed out, physical AI (robots, autonomous machines, and other systems that interact with the real world) is the logical next step. In many robotic platforms, AI computing can consume a surprisingly large portion of total system power. Significantly reducing the power consumed by computation can, in turn, reduce cooling, battery size, weight, and even motor requirements. In other words, AI efficiency is about more than just improving a robot’s brain; it can transform the robot’s whole body.
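A first-order calculation shows why compute power matters so much on a battery-powered robot. Every number in the sketch below (a 480 Wh battery, 150 W for motors and sensors, a 4-hour shift, and 100 W versus 10 W for AI compute) is hypothetical and chosen purely for illustration; none of them comes from EnCharge.

```python
def runtime_hours(battery_wh: float, compute_w: float, other_w: float) -> float:
    """Battery runtime, assuming compute and everything else draw constant power."""
    return battery_wh / (compute_w + other_w)


def battery_needed_wh(target_hours: float, compute_w: float, other_w: float) -> float:
    """Battery energy required to reach a target runtime at constant power."""
    return target_hours * (compute_w + other_w)


BATTERY_WH = 480.0  # hypothetical battery capacity
OTHER_W = 150.0     # hypothetical motors, sensors, and everything else
TARGET_H = 4.0      # hypothetical shift length

for label, compute_w in (("power-hungry compute", 100.0), ("efficient compute", 10.0)):
    print(label,
          runtime_hours(BATTERY_WH, compute_w, OTHER_W),
          battery_needed_wh(TARGET_H, compute_w, OTHER_W))
# power-hungry compute 1.92 1000.0
# efficient compute 3.0 640.0
```

This model deliberately ignores the knock-on effects described above (a smaller battery weighs less, which in turn eases the demands on the motors), so if anything it understates the benefit.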

When you stop and think about it, what’s really surprising is how quickly our expectations have changed. Just a decade ago, we marveled at how machines could tell the difference between a cat and a chicken (I don’t know about you, but I still do). Now we casually talk about putting full-fledged AI acceleration into laptops, workstations, robots, and more. If the past few years have taught us anything, it’s that tomorrow has a habit of arriving much earlier than expected. I think it’s safe to say (and feel free to quote me on that) that the future has the potential to be even more amazing and more exciting than we can currently imagine.
