Data monitoring and preprocessing

The monitoring dataset consists of 300 representative deformation measurements collected across various construction methods and monitoring sections during the construction of Tunnel G from April 2022 to March 2023. The data sources are summarized in Table 1.

Outliers in the monitoring data can reduce the accuracy of model training, weaken generalization, and degrade the quality of the data fit, so noise-reduction preprocessing is necessary. In this study, the data are preprocessed with quadratic exponential smoothing, a time series analysis and forecasting technique. The method reduces noise by assigning larger weights to recent observations and progressively smaller weights to older ones.

The smoothing equations are given in Eqs. (26) and (27):

$$ f_t = \alpha \cdot y_t + (1 - \alpha) \cdot l_{t-1} $$

(26)

$$ l_t = \alpha \cdot y_t + (1 - \alpha) \cdot l_{t-1} $$

(27)

where \(f_t\) is the predicted (smoothed) value at time \(t\); \(\alpha \in [0, 1]\) is the smoothing parameter; \(y_t\) is the observed value at time \(t\); \(l_{t-1}\) is the level value at the previous time step; and \(l_t\) is the level value at time \(t\).
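The recursion in Eqs. (26) and (27) can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: it assumes the level is initialized with the first observation (\(l_0 = y_1\)) and uses an illustrative \(\alpha = 0.3\) as the default; neither choice is stated in the text, and the function name `exp_smooth` is hypothetical.

```python
import numpy as np

def exp_smooth(y, alpha=0.3):
    """Smooth a 1-D series with l_t = alpha*y_t + (1 - alpha)*l_{t-1}.

    Assumption: the level is initialized with the first observation.
    Returns the smoothed series f_t, which equals l_t in Eqs. (26)-(27).
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    y = np.asarray(y, dtype=float)
    smoothed = np.empty_like(y)
    level = y[0]  # assumed initial level l_0
    for t, obs in enumerate(y):
        level = alpha * obs + (1.0 - alpha) * level  # Eq. (27)
        smoothed[t] = level                           # Eq. (26): f_t equals l_t
    return smoothed

print(exp_smooth([1.0, 2.0, 3.0], alpha=0.5))  # [1.   1.5  2.25]
```

A smaller \(\alpha\) smooths more strongly but makes the smoothed series lag behind sudden deformation changes; \(\alpha = 1\) returns the raw data unchanged.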

The monitoring data are normalized by linearly scaling them to the range [0, 1], as given in Eq. (28):

$$ x_n = \frac{x - x_{\min}}{x_{\max} - x_{\min}} $$

(28)

where \(x\) is the original value, \(x_n\) is the normalized feature value, and \(x_{\max}\) and \(x_{\min}\) are the maximum and minimum of the original data, respectively.
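Eq. (28) and its inverse are shown below as a minimal NumPy sketch. The inverse is included because predictions made in the normalized space must be mapped back to millimetres before RMSE is reported in physical units. The function names are hypothetical; computing \(x_{\min}\) and \(x_{\max}\) on the training subset only is a common practice that avoids leaking test information, but the text does not say which subset was used.

```python
import numpy as np

def minmax_scale(x):
    """Scale values linearly to [0, 1] as in Eq. (28); also return (x_min, x_max)."""
    x = np.asarray(x, dtype=float)
    x_min, x_max = x.min(), x.max()
    if x_max == x_min:
        raise ValueError("constant data cannot be min-max scaled")
    return (x - x_min) / (x_max - x_min), (x_min, x_max)

def minmax_inverse(x_n, bounds):
    """Map normalized values back to the original units (e.g. mm)."""
    x_min, x_max = bounds
    return np.asarray(x_n, dtype=float) * (x_max - x_min) + x_min

x_n, bounds = minmax_scale([2.0, 4.0, 6.0])
print(x_n)                          # [0.  0.5 1. ]
print(minmax_inverse(x_n, bounds))  # [2. 4. 6.]
```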

To prevent overfitting and improve the generalization and stability of the model, the normalized dataset is divided into training and test subsets at a predefined ratio: 70% of the data are used for training, and the remaining 30% are used to evaluate the performance and generalization capability of the model.
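The 70/30 split can be sketched as follows. The text does not state whether the records were shuffled before splitting; this sketch assumes a chronological split (the first 70% of the time-ordered records for training, the rest for testing), which is a common choice for time series forecasting because it prevents the model from seeing future values during training. The function name is hypothetical.

```python
import numpy as np

def chronological_split(data, train_ratio=0.7):
    """Split a time-ordered array into training and test parts without shuffling.

    Assumption: the first `train_ratio` share of the records is used for training
    and the remainder for testing, so the test set lies later in time.
    """
    data = np.asarray(data)
    n_train = int(round(len(data) * train_ratio))
    return data[:n_train], data[n_train:]

train, test = chronological_split(np.arange(300))
print(len(train), len(test))  # 210 90
```

With the 300 records of this dataset, the sketch yields 210 training and 90 test samples.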

Structure of the deep learning model

Deep learning architectures can be categorized by task, data type, network structure, and other criteria. By network structure, representative models include feedforward neural networks (FNN), convolutional neural networks (CNN), recurrent neural networks (RNN), generative adversarial networks (GAN), graph neural networks (GNN), and attention mechanisms. Table 2 summarizes the characteristics and typical application scenarios of CNN, RNN, GAN, GNN, and attention mechanisms.

This paper mainly uses the RNN, long short-term memory (LSTM), gated recurrent unit (GRU), and CNN-GRU deep learning models; the basic steps for building these models are outlined below.

The data preprocessing and model training pipeline can be summarized as follows. First, data preparation involves collecting representative, high-quality datasets, removing redundant or noisy entries, and extracting the relevant multidimensional features. Next, normalization is applied so that the input features are on a comparable scale. An appropriate model architecture is then selected and designed according to the requirements of the task, and suitable activation functions, loss functions, and optimizers are chosen to support effective learning. The dataset is divided into training, validation, and test subsets according to the task type, and mini-batch training is used to accelerate convergence. During model compilation and training, hyperparameters such as the learning rate, batch size, and number of epochs are set, and the training process is monitored through the loss function and evaluation metrics to prevent overfitting. The model is then evaluated on the test set using several metrics, and loss and accuracy curves are plotted to assess its performance. Finally, model performance and generalization can be further improved by refining the architecture, tuning hyperparameters, selecting appropriate optimizers, and applying normalization techniques.

Deep learning model structure combining bionic algorithms

Bionic algorithms are optimization methods that solve practical problems by mimicking biological processes, evolutionary mechanisms, or ecological behaviors observed in nature. Their core principle is to replicate the behavior, evolutionary patterns, and adaptation strategies of natural organisms to find an optimal or near-optimal solution.

Step 1: Construct a time series dataset by splitting the data into input sequences and the corresponding target values. Use the np.reshape function to add a time dimension, converting the data from “(samples, features)” to “(samples, features, time steps)” to meet the input requirements of the CNN-GRU model.
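Step 1 can be sketched with a sliding window over one smoothed, normalized series. The window length, the one-step-ahead target, and the function name `make_windows` are assumptions for illustration; the text does not give them. After `np.reshape`, the window values occupy the second axis and a trailing axis of length 1 is appended, which matches the three-dimensional input that Keras `Conv1D` and `GRU` layers expect.

```python
import numpy as np

def make_windows(series, window):
    """Build (inputs, targets): each input is `window` past values, the target is the next value."""
    series = np.asarray(series, dtype=float)
    if len(series) <= window:
        raise ValueError("series must be longer than the window")
    X = np.array([series[i:i + window] for i in range(len(series) - window)])
    y = series[window:]
    # Add a trailing axis: (samples, window) -> (samples, window, 1),
    # the 3-D layout expected by Keras Conv1D/GRU layers.
    X = np.reshape(X, (X.shape[0], X.shape[1], 1))
    return X, y

X, y = make_windows(np.arange(10.0), window=3)
print(X.shape, y.shape)  # (7, 3, 1) (7,)
```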

Step 2: Import the Sequential model from the Keras library; configure network parameters such as the number of convolution kernels, the kernel size, and the activation function; apply zero padding so that the output length matches the input length; and build the CNN-GRU model.
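The zero padding in Step 2 corresponds to the `padding="same"` option of the Keras `Conv1D` layer. To show its effect without a TensorFlow dependency, the NumPy sketch below pads the input with zeros and applies a single kernel as a cross-correlation (the operation convolutional layers compute), followed by a ReLU activation. The kernel values, the ReLU choice, and the function names are illustrative assumptions; a real `Conv1D` layer applies many learned kernels, one per output channel.

```python
import numpy as np

def conv1d_same(x, kernel):
    """1-D convolution (cross-correlation, as in CNN layers) with zero padding.

    For an odd kernel size k, (k - 1) // 2 zeros are added on each side so the
    output has the same length as the input.
    """
    x = np.asarray(x, dtype=float)
    kernel = np.asarray(kernel, dtype=float)
    k = kernel.size
    if k % 2 == 0:
        raise ValueError("this sketch assumes an odd kernel size")
    pad = (k - 1) // 2
    x_padded = np.pad(x, pad, mode="constant", constant_values=0.0)
    return np.correlate(x_padded, kernel, mode="valid")

def relu(z):
    """Rectified linear activation."""
    return np.maximum(z, 0.0)

out = conv1d_same([1.0, 2.0, 3.0, 4.0], [1.0, 0.0, -1.0])
print(out)        # [-2. -2. -2.  3.]
print(relu(out))  # [0. 0. 0. 3.]
```

Without padding ("valid" mode) the same kernel would return only two values for a four-step input, which is why padding keeps the sequence length intact for the following GRU layer.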

Step 3: Train the model: set the number of neurons and the activation function of the GRU layer, compute the error between the model output and the monitored values, and evaluate the current solution. Update the weights by gradient descent and check whether the maximum number of iterations (200) or the accuracy requirement has been reached; if not, continue training, otherwise stop and store the optimal weight matrix. The output sequence is then passed to the fully connected layer. The model is trained with the Adam optimizer, and its accuracy is evaluated with the mean squared error (MSE) loss function.
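The stopping logic of Step 3 (at most 200 iterations, early stop once an accuracy requirement is met, and keeping the best weights) can be sketched independently of the network. To keep the example short and dependency-free, the sketch substitutes a linear model for the CNN-GRU network; the Adam moment coefficients 0.9 and 0.999 are the commonly used values, while the learning rate of 0.05 and the MSE tolerance `tol` are hypothetical.

```python
import numpy as np

def train_with_adam(X, y, lr=0.05, max_iter=200, tol=1e-4, seed=0):
    """Fit y ~ X @ w + b by Adam on the MSE loss with the Step 3 stopping rules.

    Stand-in for the CNN-GRU network: a linear model keeps the example small.
    Training stops after `max_iter` iterations or when the MSE drops below `tol`;
    the parameters with the lowest loss seen so far are returned.
    """
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    params = rng.normal(scale=0.1, size=X.shape[1] + 1)  # weights + bias
    m = np.zeros_like(params)  # first-moment estimate
    v = np.zeros_like(params)  # second-moment estimate
    beta1, beta2, eps = 0.9, 0.999, 1e-8
    Xb = np.hstack([X, np.ones((X.shape[0], 1))])  # append bias column
    best_params, best_loss = params.copy(), np.inf
    for it in range(1, max_iter + 1):
        err = Xb @ params - y
        loss = float(np.mean(err ** 2))  # MSE loss
        if loss < best_loss:  # store the best weights so far
            best_params, best_loss = params.copy(), loss
        if loss < tol:  # accuracy requirement met: stop early
            break
        grad = 2.0 * Xb.T @ err / len(y)  # gradient of the MSE
        m = beta1 * m + (1 - beta1) * grad
        v = beta2 * v + (1 - beta2) * grad ** 2
        m_hat = m / (1 - beta1 ** it)  # bias-corrected moments
        v_hat = v / (1 - beta2 ** it)
        params = params - lr * m_hat / (np.sqrt(v_hat) + eps)
    return best_params, best_loss, it

X = np.linspace(-1.0, 1.0, 50)[:, None]
y = 2.0 * X[:, 0] + 0.5
params, loss, iters = train_with_adam(X, y)
print(params, loss, iters)
```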

Step 4: Define the whale optimization algorithm (WOA) and use it to optimize the hyperparameters or weights of the model. Then train the final CNN-GRU model with the WOA-optimized parameters, monitoring the loss function and accuracy to evaluate its performance.
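Step 4 does not spell out the WOA update rules. The sketch below implements the standard WOA moves for a generic minimization problem: shrinking encirclement of the best whale when \(|A| < 1\), exploration around a randomly chosen whale when \(|A| \ge 1\), and the logarithmic bubble-net spiral, each chosen with probability 0.5. The population size, iteration count, spiral constant \(b = 1\), the greedy update of the best solution after each move, and the function name `woa_minimize` are illustrative assumptions, not the settings listed in Table 3.

```python
import numpy as np

def woa_minimize(objective, lower, upper, n_whales=20, max_iter=100, seed=0):
    """Minimize `objective` within box bounds using the whale optimization algorithm."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    X = rng.uniform(lower, upper, size=(n_whales, lower.size))  # initial positions
    fitness = np.array([objective(x) for x in X])
    best_idx = int(np.argmin(fitness))
    best_x, best_f = X[best_idx].copy(), float(fitness[best_idx])
    b = 1.0  # spiral shape constant
    for t in range(max_iter):
        a = 2.0 - 2.0 * t / max_iter  # decreases linearly from 2 to 0
        for i in range(n_whales):
            A = 2.0 * a * rng.random() - a
            C = 2.0 * rng.random()
            p = rng.random()
            l = rng.uniform(-1.0, 1.0)
            if p < 0.5:
                if abs(A) < 1.0:
                    # Exploitation: shrink the circle around the best whale.
                    D = np.abs(C * best_x - X[i])
                    X[i] = best_x - A * D
                else:
                    # Exploration: move relative to a randomly chosen whale.
                    x_rand = X[rng.integers(n_whales)]
                    D = np.abs(C * x_rand - X[i])
                    X[i] = x_rand - A * D
            else:
                # Bubble-net attack: logarithmic spiral around the best whale.
                D = np.abs(best_x - X[i])
                X[i] = D * np.exp(b * l) * np.cos(2.0 * np.pi * l) + best_x
            X[i] = np.clip(X[i], lower, upper)  # keep the whale inside the bounds
            f = float(objective(X[i]))
            if f < best_f:  # greedy update of the best solution
                best_x, best_f = X[i].copy(), f
    return best_x, best_f

sphere = lambda x: float(np.sum(x ** 2))
best_x, best_f = woa_minimize(sphere, [-5.0] * 3, [5.0] * 3, seed=1)
print(best_x, best_f)
```

To tune the CNN-GRU hyperparameters with this routine, `objective` would decode a position vector into hyperparameters (for example, rounding to integer numbers of convolution kernels or GRU units), train the network, and return the validation RMSE. That wrapper is hypothetical, and the search space used in this study is not reproduced here.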

Step 5: Once training is complete, save the best model weights for future use.

Indicators for assessing the effectiveness of forecasts

This paper uses the root mean square error (RMSE) and the mean absolute percentage error (MAPE) to measure the error between predicted and actual values. RMSE has the same units as the target variable, which makes it easy to interpret, whereas MAPE measures the relative error. The two metrics are defined in Eqs. (29) and (30):

$$ \mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^{2}} $$

(29)

$$ \mathrm{MAPE} = \frac{1}{n}\sum_{i=1}^{n}\frac{\left|y_i - \hat{y}_i\right|}{y_i} \times 100\% $$

(30)

where \(n\) is the total number of samples, \(y_i\) is the actual value of the \(i\)th sample, and \(\hat{y}_i\) is the predicted value of the \(i\)th sample.
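Eqs. (29) and (30) translate directly into NumPy, as in the minimal sketch below. One detail is an assumption: the MAPE function divides by \(|y_i|\) so that negative displacement values (for example, settlement recorded as negative) still give a positive percentage; for positive \(y_i\) this is identical to Eq. (30). MAPE is undefined when an actual value is zero, so the sketch rejects that case.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean square error, Eq. (29); same units as the data (e.g. mm)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def mape(y_true, y_pred):
    """Mean absolute percentage error, Eq. (30), in percent.

    |y| is used in the denominator (assumption) so negative values are handled;
    for positive y this equals Eq. (30). Zero actual values are rejected.
    """
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    if np.any(y_true == 0):
        raise ValueError("MAPE is undefined when an actual value is zero")
    return float(np.mean(np.abs(y_true - y_pred) / np.abs(y_true)) * 100.0)

print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 4.0]))  # 0.5773502691896257
print(mape([1.0, 2.0, 3.0], [1.0, 2.0, 4.0]))  # about 11.11 (percent)
```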

Selection of comparison model parameters

To assess the performance of the WOA-CNN-GRU model in predicting construction monitoring data for high-speed railway tunnels, its results are compared with those of four neural network models (RNN, LSTM, GRU, and CNN-GRU). The parameter settings of the baseline models and the WOA-CNN-GRU model are listed in Table 3.


Model accuracy analysis

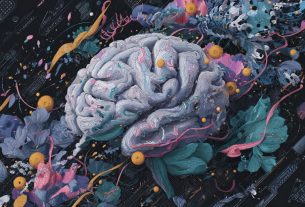

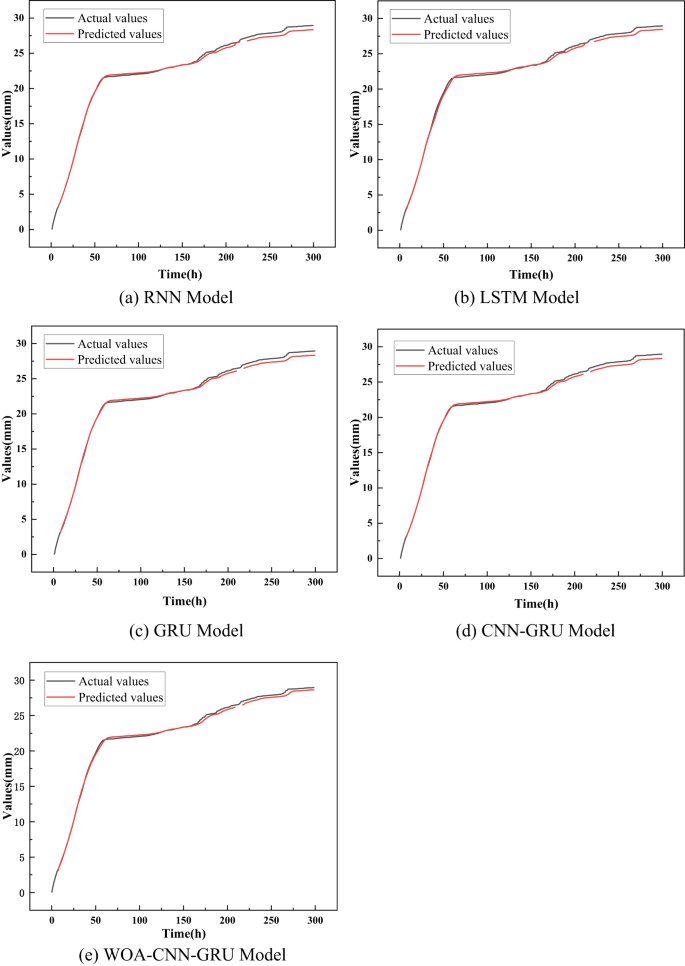

The surface settlement dataset DK24+985:Z1, collected in the step-method section, is used as input data to evaluate the training accuracy of the models. The training dataset is first fed to five models (RNN, LSTM, GRU, CNN-GRU, and WOA-CNN-GRU) to compare their training performance. The training results of each model are shown in Figure 11, and the corresponding loss curves are shown in Figure 12. As Figures 11 and 12 show, all models achieve a prediction accuracy above 95% for surface settlement in the step-method section. Although all models perform well, their accuracy still differs.

Training results for various models.

As shown in Figure 2, and as summarized in Figures 11 and 12 and Table 4, the WOA-CNN-GRU model achieves an RMSE of 0.1257 mm in predicting the structural deformation of high-speed railway tunnels, whereas the RMSE values of the RNN, LSTM, GRU, and CNN-GRU models are 0.9814 mm, 0.7629 mm, 0.4188 mm, and 0.2292 mm, respectively. Its MAPE is 0.51%, compared with 2.64%, 1.37%, 1.05%, and 0.86% for the RNN, LSTM, GRU, and CNN-GRU models, respectively. In addition, the WOA-CNN-GRU model has the lowest test and validation losses, \(0.8\times10^{\ldots}\) and \(2.1\times10^{\ldots}\), respectively, whereas the other models produce considerably higher values for these metrics.

Loss functions of the different predictive models during training.

In terms of absolute prediction accuracy (RMSE), the WOA-CNN-GRU model improves on the RNN, LSTM, GRU, and CNN-GRU models by factors of 6.8, 5.1, 2.3, and 0.8, respectively; in terms of relative prediction accuracy (MAPE), the corresponding factors are 4.2, 1.7, 1.1, and 0.7. These results show that the WOA-optimized CNN-GRU model clearly outperforms the baseline deep learning models in predictive performance. The WOA-CNN-GRU model can therefore accurately predict surface settlement and horizontal convergence in tunnel structures, contributing to improved safety in high-speed railway tunnel engineering.
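The improvement factors can be reproduced from the RMSE and MAPE values reported for Table 4 if each factor is read as the relative reduction (baseline − WOA-CNN-GRU) / WOA-CNN-GRU. This reading is inferred from the arithmetic rather than stated in the text; the short check below recomputes all eight factors under that assumption.

```python
# RMSE (mm) and MAPE (%) values as reported above for each baseline model.
rmse_mm = {"RNN": 0.9814, "LSTM": 0.7629, "GRU": 0.4188, "CNN-GRU": 0.2292}
mape_pct = {"RNN": 2.64, "LSTM": 1.37, "GRU": 1.05, "CNN-GRU": 0.86}
WOA_RMSE, WOA_MAPE = 0.1257, 0.51

def improvement(baseline, proposed):
    """Relative improvement (baseline - proposed) / proposed (assumed definition)."""
    return (baseline - proposed) / proposed

rmse_gain = {k: round(improvement(v, WOA_RMSE), 1) for k, v in rmse_mm.items()}
mape_gain = {k: round(improvement(v, WOA_MAPE), 1) for k, v in mape_pct.items()}
print(rmse_gain)  # {'RNN': 6.8, 'LSTM': 5.1, 'GRU': 2.3, 'CNN-GRU': 0.8}
print(mape_gain)  # {'RNN': 4.2, 'LSTM': 1.7, 'GRU': 1.1, 'CNN-GRU': 0.7}
```

Under this reading, a factor of 0.8 against CNN-GRU means the CNN-GRU RMSE is about 1.8 times that of WOA-CNN-GRU.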


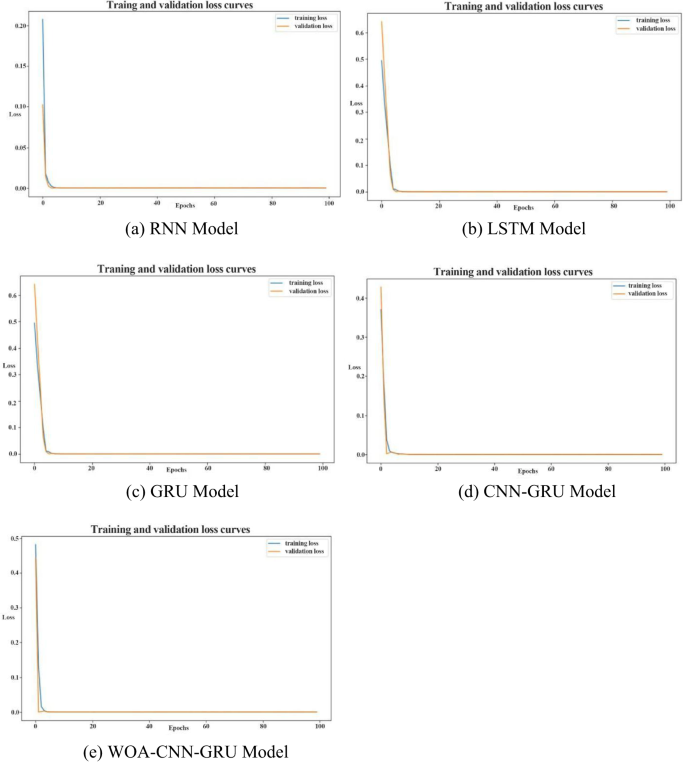

The trained WOA-CNN-GRU model is then applied to monitoring data from different construction methods and sections; the predicted and actual values are compared in Figure 13.

Comparison of actual and predicted values for different monitoring data.

As shown in Figure 13 and Table 5, the RMSE and MAPE between the actual and predicted monitoring data from different sources during the construction of high-speed railway tunnels are consistently below 1 mm and 1%, respectively. These results indicate that the WOA-CNN-GRU model predicts the deformation of the surrounding rock effectively and reliably, and its application can help improve construction efficiency and reduce project costs.