GenAI startup Decart has launched MirageLSD, an AI video model that transforms live video feeds in real time. The system aims to solve two major problems with previous AI video tools: slow rendering and a loss of image quality over time.

AI video models are often slow and can usually produce only short clips of 5 to 10 seconds before the visuals start to deteriorate. MirageLSD takes a different approach. Instead of generating the entire video sequence at once, the model creates each frame individually.

https://www.youtube.com/watch?v=s7wkoya0tg8

The system uses a window of recent frames, the current video input, and the user's prompt to predict the next frame as the stream unfolds. Each new frame is immediately fed back into the next calculation step, allowing the model to respond instantly to changes in the live feed. This setup allows continuous, real-time video transformation at 20 frames per second and a resolution of 768 x 432, keeping latency low enough for interactive applications.
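The frame-by-frame loop described above can be sketched in a few lines. This is only an illustration under stated assumptions: `toy_predict` is a hypothetical stand-in for the model (it simply blends the live frame with the average of recent outputs and ignores the prompt), frames are short lists of numbers rather than images, and the window size of 4 is arbitrary.

```python
from collections import deque
from typing import Callable, Deque, Iterable, Iterator, List

Frame = List[float]  # stand-in for an image; a real frame would be 768 x 432 pixels


def toy_predict(history: List[Frame], live: Frame, prompt: str) -> Frame:
    """Hypothetical placeholder for the model: blends the live frame with the
    mean of the recent output frames. The prompt is accepted but ignored."""
    if not history:
        return list(live)
    mean = [sum(px) / len(history) for px in zip(*history)]
    return [0.5 * l + 0.5 * m for l, m in zip(live, mean)]


def stream(
    live_feed: Iterable[Frame],
    prompt: str,
    window: int = 4,  # assumed window size, not a published value
    predict: Callable[[List[Frame], Frame, str], Frame] = toy_predict,
) -> Iterator[Frame]:
    """Generate one output frame per input frame, as the input arrives."""
    history: Deque[Frame] = deque(maxlen=window)  # only recent frames are kept
    for live in live_feed:
        out = predict(list(history), live, prompt)
        history.append(out)  # the new frame feeds into the next step
        yield out


outputs = list(stream([[0.0], [1.0], [1.0]], prompt="anime style"))
```

Because `stream` is a generator, each output frame is available as soon as its input frame has been processed, which is the property that makes a live feed possible.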

https://www.youtube.com/watch?v=ws8jagy5k9i

To stabilize video quality during long sessions, Decart uses two training techniques. The first, called “diffusion forcing,” teaches the model to add noise to each frame individually and to clean up the image without relying on the previous frame. This helps prevent errors from accumulating over time.

The second method, “history augmentation,” trains the model to find and correct recurring errors rather than simply passing them along.
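The per-frame noising idea behind the first technique can be illustrated with a small sketch. It covers only the data-corruption step, not the denoising model, and it rests on assumptions: the linear blend in `add_noise` is a simplified noise schedule chosen for readability, and both function names are hypothetical rather than taken from Decart's code.

```python
import random
from typing import List, Tuple

Frame = List[float]  # stand-in for an image


def add_noise(frame: Frame, level: float, rng: random.Random) -> Frame:
    """Blend a frame with Gaussian noise: level 0 keeps it clean, 1 is pure noise.
    The linear blend is an assumed, simplified noise schedule."""
    return [(1.0 - level) * px + level * rng.gauss(0.0, 1.0) for px in frame]


def noise_clip_per_frame(
    clip: List[Frame], rng: random.Random
) -> Tuple[List[Frame], List[float]]:
    """Draw an independent noise level for every frame, rather than one
    shared level for the whole clip."""
    levels = [rng.random() for _ in clip]
    noisy = [add_noise(f, lvl, rng) for f, lvl in zip(clip, levels)]
    return noisy, levels


rng = random.Random(0)
clip = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
noisy, levels = noise_clip_per_frame(clip, rng)
```

In training, a model would be asked to recover the clean frames from `noisy` given `levels`; that step is omitted here.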

https://www.youtube.com/watch?v=ctl2lxgz9ce

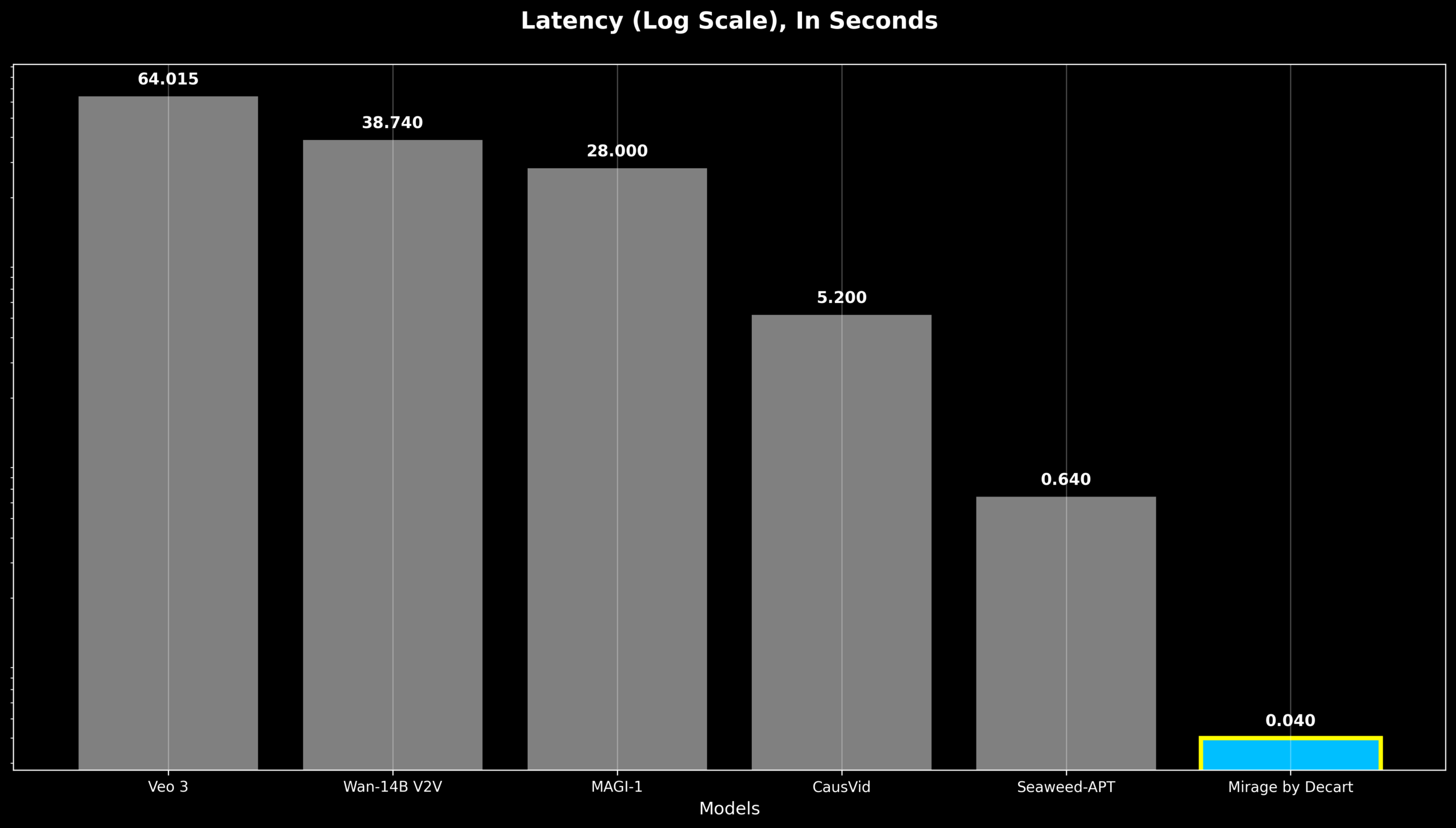

Decart has tailored MirageLSD specifically for Nvidia's Hopper GPUs and used “architecture-aware pruning” to trim less important parts of the model, increasing both speed and efficiency. The team also applies “shortcut distillation” to train a smaller model to replicate the results of the larger one, a process that leads to a 16x performance boost. As a result, each frame is processed in under 40 ms, keeping latency low enough that most viewers do not notice any significant delay.

MirageLSD has some limitations. Currently, it only handles a small window of previous frames, so long videos can become less consistent. The model also struggles with major style changes and precise control over individual objects.
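The timing figures in the article can be checked with simple arithmetic. The sketch below uses only the two numbers quoted here (20 frames per second and 40 ms per frame); the function names are illustrative.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def max_fps(frame_time_ms: float) -> float:
    """Highest frame rate sustainable at a given per-frame processing time."""
    return 1000.0 / frame_time_ms


budget = frame_budget_ms(20)  # time per frame at 20 frames per second
headroom = budget - 40.0      # slack left if a frame takes the full 40 ms
ceiling = max_fps(40.0)       # frame rate that a 40 ms frame time would allow
```

At 20 frames per second the budget is 50 ms per frame, so a processing time under 40 ms leaves at least 10 ms of slack per frame.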

Mirage platform is live, with more features on the way

Decart launched the Mirage platform alongside MirageLSD. The web version is already available, with mobile apps for iOS and Android on the way. The platform targets live streaming, video calls, and gaming. Decart plans regular updates over the summer, adding features such as improved facial consistency, voice control, and more precise object control.

This is Decart's second AI model, following the viral Minecraft project Oasis. It took about six months to build MirageLSD. Other systems, like StreamDiT, can achieve similar speeds (16 frames per second) and also offer interactive features, but lag behind top models like Google's Veo 3 in terms of image quality.
