A survey of 193 compliance, ethics, risk, and audit leaders found that 90% of organizations using AI have deployed generative AI tools such as ChatGPT and Claude. About 52 percent use agentic AI to perform tasks, 51 percent use large language models, and 42 percent use predictive analytics or machine learning tools.

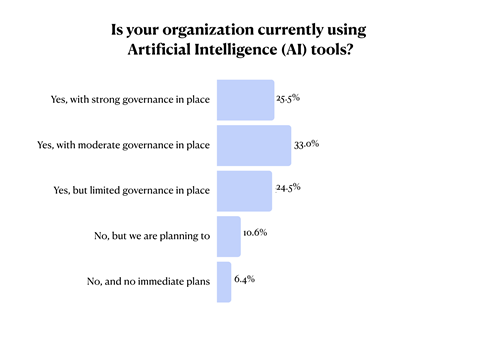

Vincent Walden, CEO of KonaAI, said a lack of governance is likely slowing the adoption of these tools.

“AI is relatively new, having only been around for two and a half years,” Walden said. Compliance departments are turning to AI because it can automate many of the manual, tedious processes that compliance requires, such as due diligence.

“This is an exciting time to be disrupted by AI,” Walden said.

Walden said that because of the efficiencies that AI brings, companies are using it to handle many of these labor-intensive compliance tasks.

The respondents' organizations varied in size and industry, and approximately 30% of respondents said they work in financial services. Slightly more than half said their companies are small, with fewer than 5,000 employees. Approximately 27% of organizations had between 5,000 and 50,000 employees, and approximately 18% had between 50,001 and 100,000 employees.

The leaders said the efficiencies of AI tools are drawing interest in several key compliance areas.

For example, nearly 40% said their organization has deployed AI for risk assessment and monitoring, and about 61% said they plan to use AI for that purpose in the near future.

Organizations using AI for compliance report significant benefits. Approximately 84% of leaders said AI has made their departments more efficient. About 54% said AI has improved analysis and monitoring, 49% said it has led to better and faster decision-making, and 41% said AI has led to cost savings.

Agentic AI, which not only automates tasks but also makes decisions, has the potential to be a game-changer in compliance. Walden said business processes that are repetitive and involve multiple people are ripe for automation by agents.

His company has had success, and fun, giving AI agents human personas.

One of them, named Eva, specializes in investigations and Department of Justice enforcement matters. If a whistleblower reports a suspected bribery scheme involving certain employees or officials, Eva can quickly determine that two of those employees have previously been warned about inappropriate behavior. She can then be asked to write a work plan based on that information, Walden said.

He said external pressures, such as economics and politics, are also driving the adoption of AI.

“As the political agenda changes, new priorities emerge and compliance changes accordingly,” Walden said. The regulatory environment and politics, he noted, are big factors.

“You have to be adaptable,” he added.

The findings reflect this. While nearly 80% of organizations plan to use AI for pricing, only about 20% currently do so.

Similarly, nearly one-third of organizations are using AI for regulatory reporting, while nearly 67% expect to use AI for regulatory work in the near future.

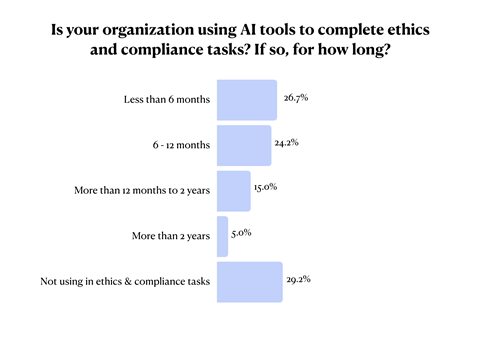

Although most organizations have adopted AI, the study found that their compliance teams may not be using it yet, and most of the teams that do use it are recent converts.

Approximately 30% of compliance teams are not yet using AI for their ethics and compliance tasks. Approximately 27% have been using AI for less than six months, 24% for six to 12 months, 15% for one to two years, and only 5% for more than two years.

Walden said compliance teams would be wise to embrace AI.

If teams keep spending valuable labor on tedious tasks that AI could handle, Walden said, “It’s only a matter of time before CEOs and CFOs start asking, ‘What the hell are you doing?’”

The leaders also reported challenges in using AI. Approximately 66 percent reported data quality or data access issues, 47 percent reported training issues, 46 percent had data privacy and security issues, and 42 percent saw employees using AI without supervision.

Getting help with AI risks and issues can itself be difficult. Approximately 54 percent of leaders cited a lack of AI expertise as a major issue.

Many compliance teams plan to devote a significant portion of their technology budget to AI-related projects and use cases. Approximately 41% of teams plan to spend up to 25% of their technology budget on AI, about 19% expect to spend 25% to 50%, and about 8% expect to spend 50% or more. Approximately 13% of survey respondents said their team has no technology budget at all.

Some teams plan to scale back AI in areas where they currently use it, such as training. Almost 58 percent of organizations are using AI for training, but only 42 percent plan to use AI for that purpose in the near future.

Companies also expect to reduce their use of AI for data analysis and communications. Nearly 60% currently use AI for data analysis, but only 41% plan to do so in the future.

These results suggest that AI is not suited to every job, especially those that require significant trust, such as training and communications, Walden said. Trust, he said, comes from authenticity, inspiration, and empathy.

Training videos featuring CEOs and training sessions with compliance and other experts are “much more inspirational, more relatable, and more authentic,” Walden said.

Approximately 63% of organizations currently use AI for communications, but only 37% plan to do so in the future.

The survey found that nearly one-third of companies have custom-built AI tools, and 43% use third-party compliance platforms with AI capabilities.