Throughout the rapid advances in AI over the past few years, one logic has dominated the industry almost entirely: computing power sets the upper limit, and the GPU is the core of computing power.

But in 2026, this logic started to change. Model inference is no longer the only bottleneck. System performance is increasingly determined by execution and scheduling capabilities. While GPUs remain important, the key factor in determining whether AI can actually run is gradually shifting to the long-overlooked CPU.

On April 9, US time, Google and Intel reached a multi-year agreement to deploy Intel’s Xeon processors widely in AI data centers around the world, specifically to address this bottleneck. Intel CEO Lip-Bu Tan made it clear that AI runs across the entire system, with the CPU and IPU being key to performance, efficiency, and flexibility. In other words, the CPU, which has been sidelined for the past two years, is now limiting the scale of AI.

Tan also said on social media that Intel is deepening its collaboration with Google, expanding from traditional CPUs to AI infrastructure (such as IPUs) and jointly advancing AI and cloud computing capabilities.

The CPU is no longer just a passive support component. It is becoming one of the key variables in AI infrastructure.

01

“Silent” supply crisis

While everyone is focused on GPU delivery times, the CPU market is already severely constrained.

According to the latest reports from multiple IT distributors, the average selling price of server CPUs increased by approximately 30% in the fourth quarter of 2025. Such an increase is extremely rare in a relatively mature CPU market.

Forrest Norrod, head of AMD’s data center business, revealed that CPU demand has increased at an unprecedented rate over the past three quarters. AMD’s delivery times have now been extended from eight weeks to more than 10 weeks, with some models experiencing delays of up to six months.

This shortage stems mainly from resource congestion created by “second-order effects.” Industry insiders say TSMC’s 3nm production lines are so stretched that wafer capacity originally allocated to CPUs is increasingly being displaced by orders for more profitable GPUs. The result is deeply ironic: AI labs have plenty of GPUs but are struggling to buy enough high-end CPUs to “drive” them.

Elon Musk has also joined this CPU buying frenzy.

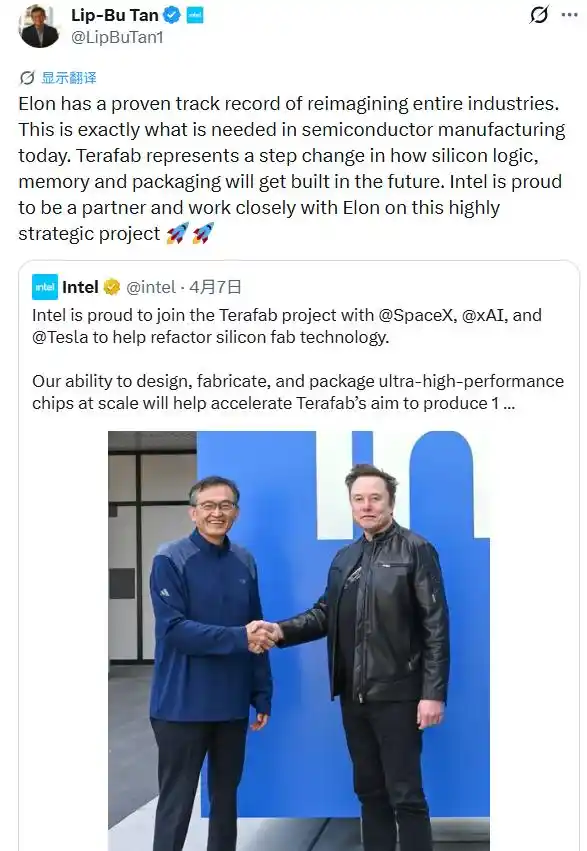

Intel CEO Lip-Bu Tan confirmed on social media that Musk had asked Intel to design and manufacture custom chips for the Terafab project in Texas. This large-scale effort aims to provide a unified computing foundation for xAI, SpaceX, and Tesla.

Musk’s confidence in Intel stems primarily from Intel’s efforts to establish a presence at every level of computing, from ground-based data centers to orbital computing.

This is certainly a boost for Intel. Although industry analysts predict that AMD’s revenue share in the server CPU market will surpass Intel’s by 2026, Intel’s deep ecosystem inertia and manufacturing capabilities in the x86 world remain important advantages that large customers like Musk cannot ignore.

This deep cross-industry integration is transforming competition in the CPU market from a simple parameter showdown to a battle over ecosystem and supply chain stability.

02

Why is the CPU the bottleneck?

The CPU has suddenly become the bottleneck because the workload it must handle has fundamentally changed in the agent era.

In traditional chatbot architectures, the CPU primarily handles scheduling and data processing, while the GPU performs the core inference computations. Because computationally intensive tasks are concentrated on the GPU side, the overall latency is typically dominated by the GPU, and the CPU is rarely the performance bottleneck.

However, the agent workload is completely different. Agents must perform multi-step inference, call APIs, read and write databases, orchestrate complex business flows, and integrate intermediate results into final output. Tasks such as searches, API calls, code execution, file I/O, and result orchestration primarily occur on the CPU and host system. The GPU handles the generation of tokens (or “thoughts”), and the CPU converts those “thoughts” into executable steps.
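The division of labor described above can be sketched as a toy agent loop. This is only an illustration under stated assumptions: `fake_model` is a hypothetical stand-in for GPU-side token generation, and the entries in `TOOLS` are made-up CPU-side tools, not any real agent framework’s API.

```python
from typing import Callable

# Hypothetical CPU-side tools; a real agent would call search APIs, databases, etc.
TOOLS: dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
    "upper": lambda text: text.upper(),
}


def fake_model(history: list[str]) -> str:
    """Stand-in for GPU token generation: request one tool, then give a final answer."""
    if not any(line.startswith("tool_result:") for line in history):
        return "call:word_count:the cpu turns thoughts into steps"
    return "final:" + history[-1].removeprefix("tool_result:")


def run_agent(task: str, max_steps: int = 5) -> tuple[str, list[str]]:
    """Run the loop and return the answer plus a trace of where each step ran."""
    history = [f"task:{task}"]
    trace: list[str] = []
    for _ in range(max_steps):
        thought = fake_model(history)      # GPU side in a real system
        trace.append("gpu:generate")
        if thought.startswith("final:"):
            return thought.removeprefix("final:"), trace
        _, tool_name, arg = thought.split(":", 2)
        result = TOOLS[tool_name](arg)     # CPU side: tool execution
        trace.append(f"cpu:{tool_name}")
        history.append(f"tool_result:{result}")
    raise RuntimeError("agent did not finish within max_steps")


if __name__ == "__main__":
    answer, trace = run_agent("count the words")
    print(answer, trace)
```

Even in this toy version, every generation step is bracketed by host-side work: parsing the request, dispatching the tool, and feeding the result back.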

Georgia Tech researchers quantified the latency distribution of agent workloads in their November 2025 paper, “A CPU-Centric Perspective on Agentic AI.” The study found that CPU-side tool processing accounted for 50% to 90.6% of total latency. In some scenarios, the GPU sits idle, ready to process the next batch of tasks, while the CPU is still waiting for a tool call to return.
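A back-of-the-envelope Amdahl’s law calculation shows why that split matters. The sketch below is illustrative only: it takes the 50% and 90.6% CPU-side shares quoted above as inputs, while the function name `end_to_end_speedup` and the GPU speedup factors are hypothetical choices, not figures from the paper.

```python
def end_to_end_speedup(cpu_fraction: float, gpu_speedup: float) -> float:
    """Amdahl's law: overall speedup when only the GPU share of latency gets faster."""
    if not 0.0 <= cpu_fraction <= 1.0:
        raise ValueError("cpu_fraction must be between 0 and 1")
    if gpu_speedup <= 0:
        raise ValueError("gpu_speedup must be positive")
    gpu_fraction = 1.0 - cpu_fraction
    # The CPU share is unchanged; the GPU share shrinks by the speedup factor.
    return 1.0 / (cpu_fraction + gpu_fraction / gpu_speedup)


if __name__ == "__main__":
    for cpu_fraction in (0.50, 0.906):      # shares quoted in the article
        for gpu_speedup in (2.0, 10.0):     # hypothetical GPU improvements
            s = end_to_end_speedup(cpu_fraction, gpu_speedup)
            print(f"CPU share {cpu_fraction:.1%}, GPU {gpu_speedup:.0f}x faster: {s:.2f}x overall")
```

Under these assumptions, when the CPU side holds 90.6% of the latency, even a 10x faster GPU improves end-to-end latency by less than 1.1x.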

Another important factor is the rapid expansion of the context window. In 2024, mainstream models generally supported 128,000 to 200,000 tokens. In 2025, models such as Gemini 2.5 Pro, GPT-4.1, and Llama 4 Maverick began supporting more than 1 million tokens. The key-value (KV) cache that Transformer models use to speed up inference grows linearly with the number of tokens and can reach approximately 200 GB at 1 million tokens. That is far more than the 80 GB of memory on a single H100 GPU.
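The linear scaling can be checked with a short calculation. The model shape below (48 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical configuration chosen only because it lands near the roughly 200 GB figure; it does not describe any of the models named above.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Size of a Transformer KV cache for one sequence.

    The leading factor 2 accounts for storing one key vector and one value
    vector per token, per layer, per KV head.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


if __name__ == "__main__":
    # Hypothetical model shape, for illustration only.
    shape = dict(layers=48, kv_heads=8, head_dim=128, bytes_per_value=2)
    h100_bytes = 80 * 10**9  # 80 GB, the capacity quoted in the article
    for tokens in (128_000, 1_000_000):
        size = kv_cache_bytes(tokens, **shape)
        print(f"{tokens:>9,} tokens: {size / 1e9:6.1f} GB "
              f"({size / h100_bytes:.2f}x one H100)")
```

With this assumed shape, each token costs 196,608 bytes, so a 1-million-token context needs about 197 GB, well beyond a single 80 GB device.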

One solution to this problem is to offload part of the KV cache to CPU memory. This means that the CPU must not only manage orchestration and tool calls, but also help store data that doesn’t fit in GPU memory. Therefore, CPU memory capacity, memory bandwidth, and interconnect speed between CPU and GPU are important for system performance.
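A minimal sketch of that split, assuming a simple policy in which the GPU keeps as many tokens of KV cache as fit in a fixed budget and the rest spills to host memory. The function name `split_kv_cache`, the 196,608 bytes-per-token cost, and the 40 GB budget are all hypothetical values for illustration; real serving systems use more elaborate paging and eviction schemes.

```python
def split_kv_cache(total_tokens: int, bytes_per_token: int,
                   gpu_budget_bytes: int) -> tuple[int, int]:
    """Return (tokens kept in GPU memory, tokens offloaded to CPU memory)."""
    if total_tokens < 0 or gpu_budget_bytes < 0:
        raise ValueError("token count and budget must be non-negative")
    if bytes_per_token <= 0:
        raise ValueError("bytes_per_token must be positive")
    # Keep as many whole tokens on the GPU as the budget allows.
    gpu_tokens = min(total_tokens, gpu_budget_bytes // bytes_per_token)
    return gpu_tokens, total_tokens - gpu_tokens


if __name__ == "__main__":
    bytes_per_token = 196_608       # hypothetical per-token KV cost
    gpu_budget = 40 * 10**9         # assumed: half of an 80 GB GPU left for KV cache
    on_gpu, on_cpu = split_kv_cache(1_000_000, bytes_per_token, gpu_budget)
    print(f"GPU holds {on_gpu:,} tokens; CPU memory holds {on_cpu:,} tokens "
          f"({on_cpu * bytes_per_token / 1e9:.1f} GB)")
```

Under these assumptions, most of a 1-million-token cache ends up in host memory, which is why CPU memory capacity and the CPU-GPU link become part of the inference path.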

A CPU suited to the agent era therefore needs lower latency, coherent memory access, and stronger system-level collaboration, rather than raw core scaling alone.

03

What are manufacturers doing? Some companies are competing for market share, while others are redesigning their products.

In the face of this surge in CPU demand, several major companies have adopted completely different strategies.

Intel has been the leader in traditional server CPUs for many years. According to Mercury Research data, in the fourth quarter of 2025, Intel still held a 60% share of the server CPU market, compared to AMD’s 24.3% and NVIDIA’s 6.2%. However, Intel has struggled for years to keep pace with new technology, so this surge in CPU demand is both an opportunity and a challenge for the company.

Intel’s current strategy has two prongs. On the one hand, the company continues to sell its Xeon processors through deep partnerships with hyperscale customers like Google. On the other hand, it is collaborating with SambaNova to launch a combined solution that pairs Xeon processors with SambaNova’s own RDU accelerators, emphasizing the selling point of “performing agent inference without GPUs.” The Xeon 6 Granite Rapids and the 18A process roadmap will be important in determining whether Intel can turn things around.

AMD is one of the biggest beneficiaries of this surge in CPU demand. In the fourth quarter of 2025, AMD’s data center revenue reached $5.4 billion, an increase of 39% year over year. 5th generation EPYC Turin accounted for more than half of server CPU revenue, and cloud instances running EPYC saw more than 50% year-over-year growth. AMD’s market share in server CPU revenue exceeded 40% for the first time.

AMD CEO Lisa Su says this growth is due to agent development, with agent workloads pushing tasks back to traditional CPUs.

In February 2026, AMD also announced a potential deal with Meta worth over $100 billion to supply MI450 GPUs and Venice EPYC CPUs.

However, AMD lacks mature, high-speed CPU-GPU interconnect capabilities like NVLink C2C, and there is still room for improvement in system-level collaboration. The importance of this aspect is steadily increasing as agent systems require efficiency in data exchange and coordination.

NVIDIA’s approach to CPU design is completely different from that of Intel and AMD.

NVIDIA Grace CPUs only have 72 cores, while AMD EPYC and Intel Xeon typically have 128 cores. Dion Harris, Head of AI Infrastructure at NVIDIA, explained, “If you’re a hyperscaler, you want to maximize the number of cores per CPU, which inherently reduces cost (in dollars per core). So this is a business model.”

NVIDIA’s priority is different. Within an AI computing architecture, the CPU is no longer the primary general-purpose processor but a “coordination hub” that serves the GPU. If the CPU can’t keep up, the expensive GPU has to wait, ultimately reducing overall efficiency.

Therefore, NVIDIA’s design prioritizes efficient cooperation between the CPU and GPU. For example, through the NVLink C2C interconnect, the bandwidth between the CPU and GPU is increased to approximately 1.8 TB/s, far more than traditional PCIe, and the CPU has direct access to GPU memory, greatly simplifying KV cache management.
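A rough calculation shows what that bandwidth gap means for moving a large KV cache between CPU and GPU memory. The 1.8 TB/s figure comes from the text above; the 64 GB/s value for a PCIe 5.0 x16 link is an assumed approximate per-direction peak, and both are treated as ideal rates with no protocol overhead. The function name `transfer_seconds` is a hypothetical helper.

```python
def transfer_seconds(size_gb: float, bandwidth_gb_per_s: float) -> float:
    """Ideal time to move `size_gb` gigabytes over a link at peak bandwidth."""
    if size_gb < 0:
        raise ValueError("size_gb must be non-negative")
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth_gb_per_s must be positive")
    return size_gb / bandwidth_gb_per_s


# Peak link rates in GB/s. The PCIe value is an assumption for illustration.
LINKS_GB_PER_S = {
    "PCIe 5.0 x16 (assumed ~64 GB/s)": 64.0,
    "NVLink C2C (~1.8 TB/s, as quoted)": 1800.0,
}

if __name__ == "__main__":
    kv_cache_gb = 200.0  # approximate KV cache size at 1 million tokens, per the article
    for name, rate in LINKS_GB_PER_S.items():
        print(f"{name}: {transfer_seconds(kv_cache_gb, rate):.2f} s to move {kv_cache_gb:.0f} GB")
```

Under these idealized assumptions, a 200 GB transfer drops from roughly three seconds to roughly a tenth of a second, which is the difference between an offloaded cache that stalls generation and one that is practical to page in and out.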

NVIDIA currently sells Vera CPUs as standalone products, and CoreWeave is its first customer. The deal with Meta is even more significant, as it marks the company’s first large-scale “pure Grace deployment,” meaning CPUs deployed independently at scale without being paired with GPUs.

Ben Bajarin, principal analyst at research firm Creative Strategies, noted that high-intensity system collaboration requires the processing power of the CPU to keep up with the iteration speed of the accelerator. Even a 1% delay in the data path can significantly reduce the economic efficiency of the entire AI cluster. This pursuit of system efficiency is forcing all major companies to re-evaluate their CPU performance metrics.

Holger Müller, vice president and principal analyst at Constellation Research, said the role of the CPU is becoming increasingly central as AI workloads move to agent-driven architectures. He said, “In the agent world, agents need to call APIs and various business applications, tasks that are best suited for the CPU.”

He continued, “At this point, there is no consensus on whether GPUs or CPUs are better suited for inference tasks. GPUs have advantages in model training, and custom ASICs like TPUs also have strengths. But one thing is clear: Google needs to embrace a hybrid processor architecture. So it makes sense for Google to partner with Intel.”

04

Conclusion: In the age of agents, the balance of computing power swings back

Among the latest industry developments, one data point is worth noting. In the $38 billion partnership between AWS and OpenAI, the official announcement specifically mentions the ability to expand to “tens of millions of CPUs.”

For the past few years, the industry has typically focused on “hundreds of thousands of GPUs.” Now, however, leading labs like OpenAI are actively treating CPU scale as a key planning variable, sending a clear signal that scaling agent workloads must be built on large-scale CPU infrastructure.

Bank of America predicts that the global CPU market could more than double from the current $27 billion to $60 billion by 2030, with almost all of this growth driven by AI.

We’re seeing a whole new infrastructure expansion. Leading enterprises are no longer just deploying GPUs; they are simultaneously scaling up entire layers of “CPU orchestration infrastructure” designed specifically to support AI agents.

Intel and Google’s partnership and Musk’s significant investment in custom chips both confirm one truth: progress is the key to winning the AI race. When computing power is no longer in short supply, only those who solve the system-level bottlenecks first will win this multitrillion-dollar game.

Special contribution to this article was provided by Jinlu.

This article is from the WeChat public account “Tencent Technology”, written by Li Helen, and edited by Xu Qingyang.