Researchers at Beijing University of Posts and Telecommunications have demonstrated that, contrary to previous assumptions, quantum machine learning (QML) models leak information about the data used to train them. The team confirmed this membership privacy leakage in both “basic QNNs” and “hybrid QNNs” (quantum neural networks) through simulations and, importantly, through tests on real cloud quantum devices. The result mirrors known vulnerabilities in classical machine learning and raises new questions about data security in the emerging field of quantum computing. To demonstrate the risk, the researchers developed a specialized “Membership Inference Attack (MIA)” tailored to QNN outputs and showed that it can identify whether a particular data point was used to train the model. The work also points to a potential pathway toward privacy-preserving QML.

Membership inference attack exposes leaked QNN training data

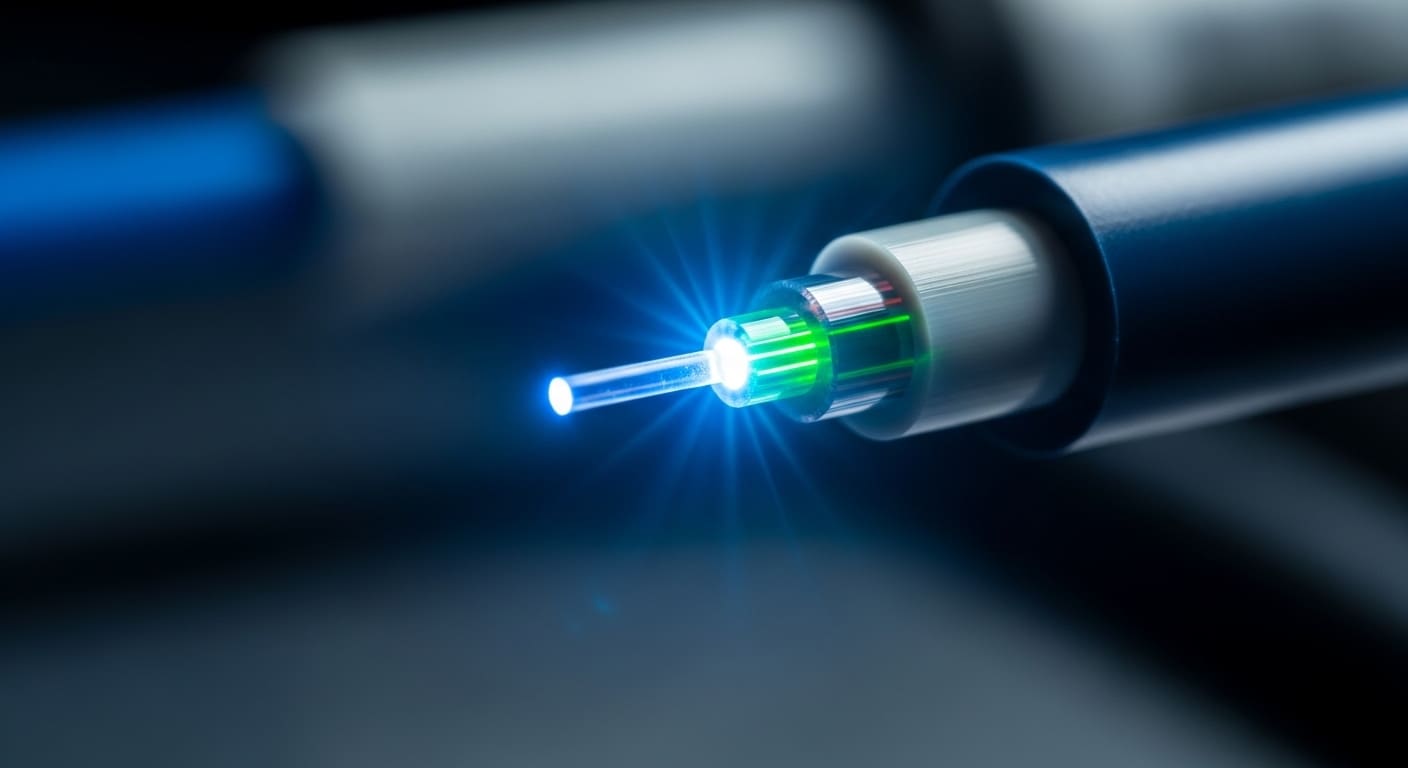

Quantum neural networks, once theorized to offer inherent privacy benefits, are vulnerable to information leaks about their training data, according to new research from the Beijing University of Posts and Telecommunications (BUPT). Researchers led by Fei Gao showed that these models leak “membership privacy,” meaning that an attacker can determine whether a particular data point was used during training. While this mirrors well-known weaknesses in classical machine learning, it is the first demonstration of its kind in the quantum realm. The team’s research, published in Physics Applied, goes beyond a theoretical risk assessment by confirming the leakage through both simulations and experiments on real cloud quantum devices. The study addresses two questions: whether QML models leak membership privacy about their training data, and whether that leakage can be mitigated.
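To make the idea of a membership inference attack concrete, here is a minimal sketch of the simplest textbook variant: a confidence-threshold attack. It is not the QNN-tailored attack from the study, whose details are not given here. The function names, the confidence values, and the 0.85 threshold are all hypothetical, chosen only to illustrate that models tend to be more confident on samples they were trained on.

```python
from typing import List, Sequence


def infer_membership(confidences: Sequence[float], threshold: float) -> List[bool]:
    """Guess 'member' when the model's confidence in the true label
    is at or above the threshold (generic attack, not the paper's)."""
    return [c >= threshold for c in confidences]


def attack_accuracy(predicted: Sequence[bool], actual: Sequence[bool]) -> float:
    """Fraction of samples whose membership status was guessed correctly."""
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


# Hypothetical confidences a model assigns to the true label.
# Assumption: training members tend to receive higher confidence.
member_conf = [0.97, 0.91, 0.88, 0.95]
nonmember_conf = [0.61, 0.74, 0.93, 0.55]

confidences = member_conf + nonmember_conf
truth = [True] * len(member_conf) + [False] * len(nonmember_conf)

guesses = infer_membership(confidences, threshold=0.85)
accuracy = attack_accuracy(guesses, truth)
print(f"attack accuracy: {accuracy:.3f}")
```

An accuracy clearly above 0.5 on a balanced set of members and non-members is the usual sign that a model leaks membership information. In this toy data, one confident non-member is misclassified, so the attack is good but not perfect.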

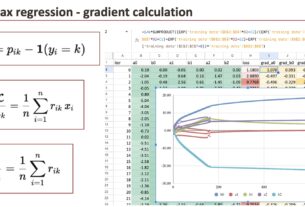

The researchers also explored “quantum machine unlearning” (QMU), a framework of three mechanisms designed to remove the influence of withdrawn data from a trained model while preserving accuracy on the remaining data. Evaluation on both QNN architectures showed that QMU effectively removes the influence of the withdrawn data. The team also investigated how the number of “shots” used in quantum measurements affects both the degree of membership leakage and the stability of the unlearning process, providing a potential path toward “privacy-preserving QML,” as stated in the published study.

Quantum machine unlearning framework with three MU mechanisms

Because this leakage allows an attacker to determine whether specific data influenced a model’s training, the team investigated whether QML inherently offers greater privacy than its classical counterpart. Their findings suggest it does not. As a remedy, the researchers evaluated QMU across both QNN architectures and found that it eliminates traces of deleted data while preserving the model’s accuracy on the data it retains, an important balance for real-world applications. In a comparative analysis, they characterized the three mechanisms and evaluated them in terms of data dependence, computational demands, and overall robustness.

Shot number affects quantum measurement leakage and stability

The research, detailed in Physics Applied, reveals that increasing the number of shots does not necessarily improve security. Instead, it may paradoxically enhance an attacker’s ability to infer membership in the training data. This finding challenges the assumption, carried over from classical machine learning, that more data inherently means more privacy in quantum systems. The team’s analysis focused on how quantum constraints specifically shape this leakage, leading to the formulation of a “realistic gray-box threat model” to assess risk. The study also found that the number of shots significantly affects the stability of quantum machine unlearning (QMU), the process of removing data from a trained model. Specifically, the effectiveness of a QMU mechanism designed to mitigate membership privacy leakage is sensitive to the number of shots used during quantum measurements, with certain configurations being more robust than others.
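One statistical intuition for why more shots could help an attacker can be sketched as follows. This is an illustration only, not the analysis from the study. It assumes a model output is a single probability estimated from repeated measurements, and the two output values for a member and a non-member are hypothetical. The sampling noise of such an estimate shrinks roughly as one over the square root of the shot count, so a fixed gap between member and non-member outputs becomes easier to resolve as shots increase.

```python
import math
import random


def estimate_probability(p: float, shots: int, rng: random.Random) -> float:
    """Estimate an outcome probability from a finite number of shots,
    modelling each shot as an independent Bernoulli trial."""
    hits = sum(1 for _ in range(shots) if rng.random() < p)
    return hits / shots


def standard_error(p: float, shots: int) -> float:
    """Standard deviation of the shot-based estimate of p."""
    return math.sqrt(p * (1.0 - p) / shots)


# Hypothetical ideal outputs for a training member and a non-member.
p_member, p_nonmember = 0.90, 0.80
gap = p_member - p_nonmember

rng = random.Random(0)  # fixed seed so the run is repeatable
for shots in (10, 100, 1000, 10000):
    noise = standard_error(p_member, shots) + standard_error(p_nonmember, shots)
    sample = estimate_probability(p_member, shots, rng)
    # When the gap is large relative to the noise, the two cases separate.
    print(f"shots={shots:>5}  sample={sample:.3f}  gap/noise={gap / noise:.2f}")
```

In this toy setting the gap-to-noise ratio grows with the shot count, which is consistent with the article's point that more measurements are not automatically safer.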