Dataset Description

Yang et al.4 proposed six benchmark datasets for MDLT learning: VLCS-MLT, PACS-MLT, OfficeHome-MLT, TerraInc-MLT, DomainNet-MLT, and Digits-MLT. Of these, Digits-MLT is a synthetic dataset created by combining two digit datasets, while the remaining five are real-world multi-domain datasets widely used in domain generalization studies. Following their setup, we use these datasets to assess the performance of the proposed method. Table 2 provides detailed information about the datasets used in the experiments, while Figure 3 displays the class distributions. In the figure, class labels are sorted in descending order of the number of samples per class to clearly show the long-tailed (LT) characteristics of each dataset. Note that the class and domain labels in the experimental data are reindexed in ascending alphabetical order, so each index corresponds to an original label of the respective dataset.

Distribution of training, validation, and test data for each MDLT dataset.

Experimental settings

Model Training Setup

All models were implemented in PyTorch and trained on an NVIDIA RTX A4000 GPU. Following the setup of Yang et al.4, we used the same CNN architecture for the Digits-MLT dataset and ResNet-50 as the backbone network for the remaining five datasets. To ensure a fair comparison, the following groups of methods were evaluated: (1) domain-invariant feature learning methods (IRM7, DANN13, CDANN39, CORAL35, MMD40); (2) imbalanced learning methods (Focal14, CBLoss41, LDAM42, BSoftmax15, CRT17, BoDA4); and (3) other baselines, including ERM43, GroupDRO44, Mixup45, SagNet46, MLDG47, MTL48, and Fish49. Furthermore, the algorithm implementations are based on the codebase provided by Yang et al.4, and their optimal parameter settings were used for all comparison algorithms.

Evaluation setup

Two widely used evaluation metrics for imbalanced learning were adopted: overall top-1 accuracy and the F1 score across all classes. Additionally, we calculated the accuracy on four disjoint subsets of classes: many-shot classes (more than 100 training samples), medium-shot classes (20-100 samples), few-shot classes (fewer than 20 samples), and zero-shot classes (no training samples). Yang et al.4 selected the best-performing model based on accuracy. In contrast, we selected the best model during training according to the F1 score for all algorithms, because the F1 score provides a more comprehensive assessment, especially for imbalanced datasets.
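The metrics described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the evaluation code used in the experiments: the function names, the use of macro averaging for the F1 score, and the `train_counts` dictionary (class index to number of training samples) are assumptions made for the example.

```python
from collections import Counter


def shot_bucket(n_train):
    """Map a class's training-sample count to its shot group.

    Thresholds follow the text: >100 many, 20-100 medium, <20 few, 0 zero.
    """
    if n_train == 0:
        return "zero"
    if n_train < 20:
        return "few"
    if n_train <= 100:
        return "medium"
    return "many"


def top1_accuracy(y_true, y_pred):
    """Fraction of samples whose predicted class equals the true class."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred, classes):
    """Unweighted mean of per-class F1 (macro averaging is an assumption)."""
    scores = []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def accuracy_by_shot(y_true, y_pred, train_counts):
    """Accuracy per shot group, grouping test samples by their true class."""
    hits, totals = Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        bucket = shot_bucket(train_counts.get(t, 0))
        totals[bucket] += 1
        hits[bucket] += int(t == p)
    return {bucket: hits[bucket] / totals[bucket] for bucket in totals}
```

Because every class contributes equally to the macro F1 score, a model that ignores tail classes is penalized more heavily than it would be under overall accuracy, which is the motivation given above for using F1 in model selection.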

Results and analysis

Quantitative results

Experiments on the MDLT image classification task were conducted on all selected benchmark datasets, and the results are shown in Tables 3, 4, 5, 6, 7, 8, and 9. It is important to note that in this study we did not perform dataset-specific tuning of the parameters \(\alpha\), \(\beta\), and \(\gamma\). Instead, we adopted a set of fixed values, \(1 \times 10^{-3}\), \(1 \times 10^{-2}\), and 10, respectively, chosen as the configuration that provides better average performance across datasets, as supported by the experiments described in the Parameter analysis section. As a result, the method may not achieve optimal performance on a particular dataset (e.g. VLCS-MLT), as shown in Tables 3, 4, 5, 6, 7, and 8. However, subsequent analyses show that these parameters have a significant, dataset-dependent impact on performance. For example, on Digits-MLT, when \(\gamma = 10\), \(\alpha = 1\), and \(\beta = 1 \times 10^{-7}\), the average F1 score and accuracy improve to 0.686 and 0.688, respectively, while the worst-case values remain relatively high at 0.644 and 0.646.

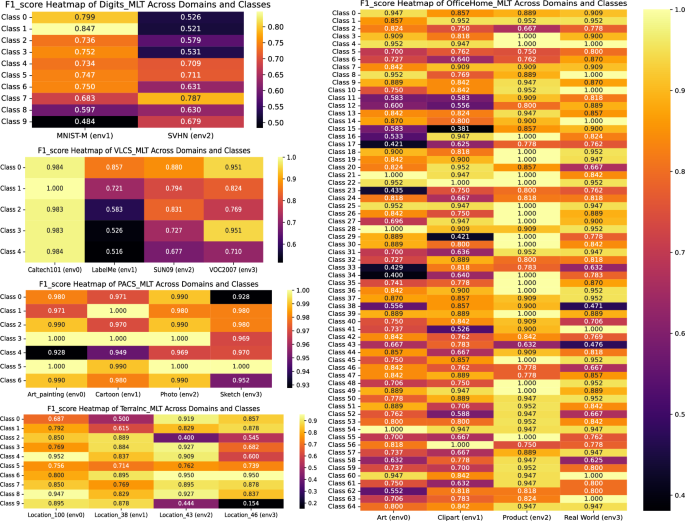

Overall, the proposed model performs well on most evaluation metrics, particularly on the "worst" metric (i.e. performance on the most difficult class), as shown in Table 9. This robustness is particularly pronounced on the Digits-MLT and TerraInc-MLT datasets, where the model shows strong recognition capability on hard classes. Moreover, the two-stage training approach is mostly superior to single-stage training. However, for few-shot or zero-shot classes, the limited number of samples can cause overfitting during two-stage training, resulting in slightly reduced results. Nonetheless, our method consistently outperforms the baseline algorithms. Figure 4 shows heatmaps of the per-class F1 scores in each domain for every dataset (except DomainNet-MLT, whose 345 classes limit visual clarity). Darker cells indicate lower F1 scores, highlighting more difficult domain-class combinations.
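The data behind a domain-by-class F1 heatmap of this kind can be sketched as follows. This is an illustrative sketch only: the `records` format (one `(domain, true_class, predicted_class)` tuple per test sample) and the function name `f1_grid` are assumptions, and cells with no true or predicted samples are left as `None` rather than assigned a score.

```python
def f1_grid(records, domains, classes):
    """Compute F1 for every (domain, class) cell.

    records: iterable of (domain, true_class, predicted_class) tuples.
    Returns a dict keyed by (domain, class); a cell is None when the class
    never appears as a label or a prediction within that domain.
    """
    records = list(records)
    grid = {}
    for d in domains:
        # Restrict to the test samples that belong to domain d.
        rows = [(t, p) for dom, t, p in records if dom == d]
        for c in classes:
            tp = sum(t == c and p == c for t, p in rows)
            fp = sum(t != c and p == c for t, p in rows)
            fn = sum(t == c and p != c for t, p in rows)
            denom = 2 * tp + fp + fn
            grid[(d, c)] = 2 * tp / denom if denom else None
    return grid
```

Each value in the returned dictionary corresponds to one heatmap cell, so low values directly identify the domain-class combinations that the text describes as more difficult.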

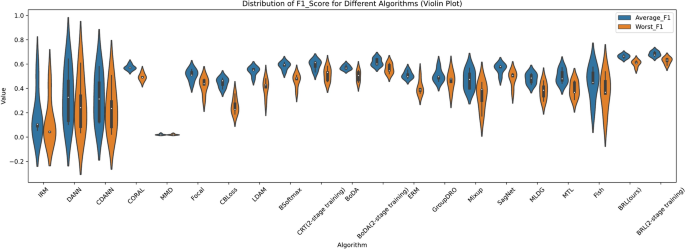

To further evaluate the stability of the algorithms' performance, five independent runs were performed for each method using different random seeds, resulting in five sets of experimental results per method. Analysis of variance (ANOVA) was used to calculate p-values for the different performance metrics. As shown in Table 10, the ANOVA results for the Digits-MLT dataset show that the p-values for all evaluated metrics are well below the standard significance threshold (e.g. 0.05). To visualize the distribution of F1 scores, violin plots were generated showing the spread of both the average and the worst F1 scores across algorithms (Figure 5). These plots show that the proposed method achieves F1 scores that are highly concentrated in the upper range, with a narrow gap between the mean and worst values and relatively low variance.
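For reference, the F statistic of a one-way ANOVA can be computed as sketched below. The grouping is an assumption made for illustration: each group is taken to hold one method's scores over its five seeds. The function name is hypothetical, and the sketch stops at the F statistic; the p-value is the upper-tail probability of the F distribution with the returned degrees of freedom, which a statistics library such as SciPy (`scipy.stats.f_oneway`) reports directly.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA.

    groups: list of lists, one list of scores per method (e.g. five seeds).
    Assumes at least two groups and non-zero within-group variance.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]

    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variation of individual runs around their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within
```

A large F value means that the differences between methods are large relative to the seed-to-seed variation within each method, which is what a small p-value in the ANOVA table reflects.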

In summary, the proposed model may not be the top performer on every dataset, but it remains close to optimal in most cases. Furthermore, compared with other algorithms, it shows a smaller gap between the "average" and "worst-case" results, indicating higher stability. These findings support the effectiveness of the proposed model in the MDLT image classification task, particularly in improving recognition performance on difficult classes.

F1 heatmaps of the proposed model on different datasets.

Distribution of F1 scores for different algorithms on Digits-MLT.

Qualitative analysis with visualization

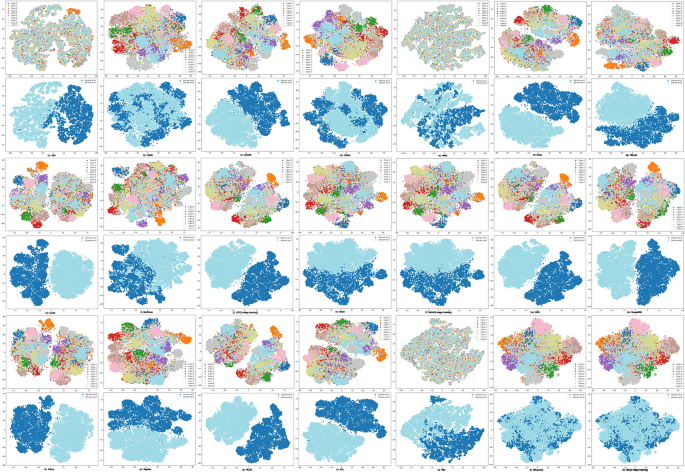

To further analyze the model's behavior, t-SNE visualization was performed on the test-set features after training, using both class labels and domain labels for interpretation. Figure 6 shows the t-SNE results for the Digits-MLT test set. For each model, the top row presents the t-SNE plot colored by class labels, and the bottom row shows the same features colored by domain labels. In the class-based visualizations, the feature clustering produced by our model is very similar to that of DANN and BoDA, consistent with the quantitative results in Table 3. However, the domain-based visualization reveals a major difference. In the context of domain-invariant representation learning, ideal feature representations should minimize differences between domains. Therefore, better alignment of feature distributions between domains reflects stronger generalization and reduced domain-specific bias. As shown in Figure 6, our model shows a larger overlap between domains, with data points from domain A closely aligning with data points from domain B. In this way, shortcut learning based on domain labels is effectively mitigated.

t-SNE visualizations of the Digits-MLT test set for different models.

Parameter analysis

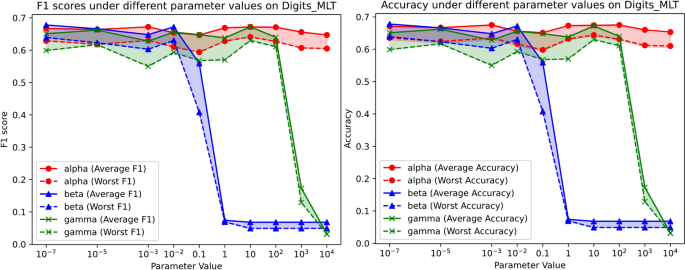

The proposed method incorporates three important parameters, \(\alpha\), \(\beta\), and \(\gamma\), which control the balance between classification accuracy and the correction of imbalance-related errors. In our main experiments, these parameters were fixed at \(\alpha = 1 \times 10^{-3}\), \(\beta = 1 \times 10^{-2}\), and \(\gamma = 10\). To analyze the effect of each parameter on model performance, a series of experiments was performed over a set of values for each parameter. In particular, \(\alpha\), \(\beta\), and \(\gamma\) were each set to values in \(\{1\times 10^{-7}, 1\times 10^{-5}, 1\times 10^{-3}, 0.01, 0.1, 1, 10, 100, 1\times 10^{3}, 1\times 10^{4}\}\), with one parameter changed at a time and the other two held fixed. Figure 7 shows the results, reporting the mean and worst F1 scores and accuracy on the Digits-MLT dataset. In the figure, solid lines represent average values, dashed lines represent worst-case values, colors distinguish the parameters, and shaded areas between lines of the same color indicate the difference between average and worst performance under different parameter values. The results show that the model maintains robust performance as \(\alpha\) varies, indicating resilience to the weight placed on the CB component. The best test performance is consistently achieved when \(\beta\) is set to approximately 0.01. However, increasing \(\beta\) further overcompensates through the RB component and leads to a rapid decline in performance. Within a certain range, the model's performance fluctuates only slightly as \(\gamma\) increases. However, when \(\gamma\) is excessively large (over 100), classification performance drops significantly.

Single-parameter analysis: results under different parameter values on Digits-MLT.
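The one-parameter-at-a-time protocol can be sketched as a small configuration generator. The defaults are the fixed values stated above; the grid shown is an illustrative logarithmic grid spanning \(10^{-7}\) to \(10^{4}\) and is not guaranteed to match the exact set of values used in the experiments, and the names `DEFAULTS`, `GRID`, and `one_at_a_time_configs` are hypothetical.

```python
# Fixed values used in the main experiments (from the text).
DEFAULTS = {"alpha": 1e-3, "beta": 1e-2, "gamma": 10.0}

# Illustrative logarithmic grid; the exact set of values is an assumption.
GRID = [1e-7, 1e-5, 1e-3, 1e-2, 1e-1, 1.0, 10.0, 100.0, 1e3, 1e4]


def one_at_a_time_configs(defaults, grid):
    """Yield (varied_name, config) pairs, changing one parameter per config.

    For each parameter, every grid value is tried while the other
    parameters stay at their default values.
    """
    configs = []
    for name in defaults:
        for value in grid:
            cfg = dict(defaults)  # copy so the defaults are never mutated
            cfg[name] = value
            configs.append((name, cfg))
    return configs


if __name__ == "__main__":
    for name, cfg in one_at_a_time_configs(DEFAULTS, GRID):
        # A real study would train and evaluate a model for each cfg here.
        print(name, cfg)
```

With three parameters and ten grid values this produces 30 training runs, compared with 1000 for a full grid search, at the cost of not capturing interactions between parameters.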

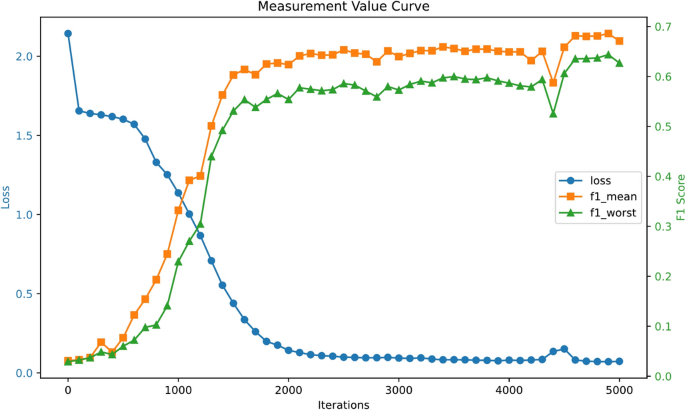

During the single-parameter analysis on the Digits-MLT dataset, the proposed algorithm achieved relatively high F1 scores and accuracy when the hyperparameters were set to \(\alpha = 1\), \(\beta = 1 \times 10^{-7}\), and \(\gamma = 10\). To further investigate the convergence behavior of the algorithm under this setting, both the loss and the F1 score were recorded every 100 iterations over a total of 5000 training iterations. The results are shown in Figure 8. The loss decreases consistently as the number of iterations increases, indicating progressive convergence. At the same time, the F1 score shows a steady upward trend, improving as the loss decreases and eventually stabilizing, which indicates favorable convergence.

Digits-MLT Iterative Training Results.

Based on the parameter analysis, relatively small hyperparameter values tend to yield higher F1 scores and accuracy in practice. This suggests that, during model optimization, smaller parameter ranges should be explored first to improve performance more efficiently.

Ablation study

To assess the contribution of the individual components of the proposed model, ablation studies were performed by selectively enabling or disabling key modules: the balanced cross-entropy loss (BCE), the CB component, the RB component, and the cross-domain penalty term (Penalty). The impact of each component on model performance was evaluated on the Digits-MLT benchmark dataset, and the results are summarized in Table 11. The results show that when all components are enabled (i.e. Experiment No. 8), the model achieves an average F1 score of 0.672 and a worst F1 score of 0.630. These findings indicate that the full combination of these components provides the most robust and consistent improvement in model performance.