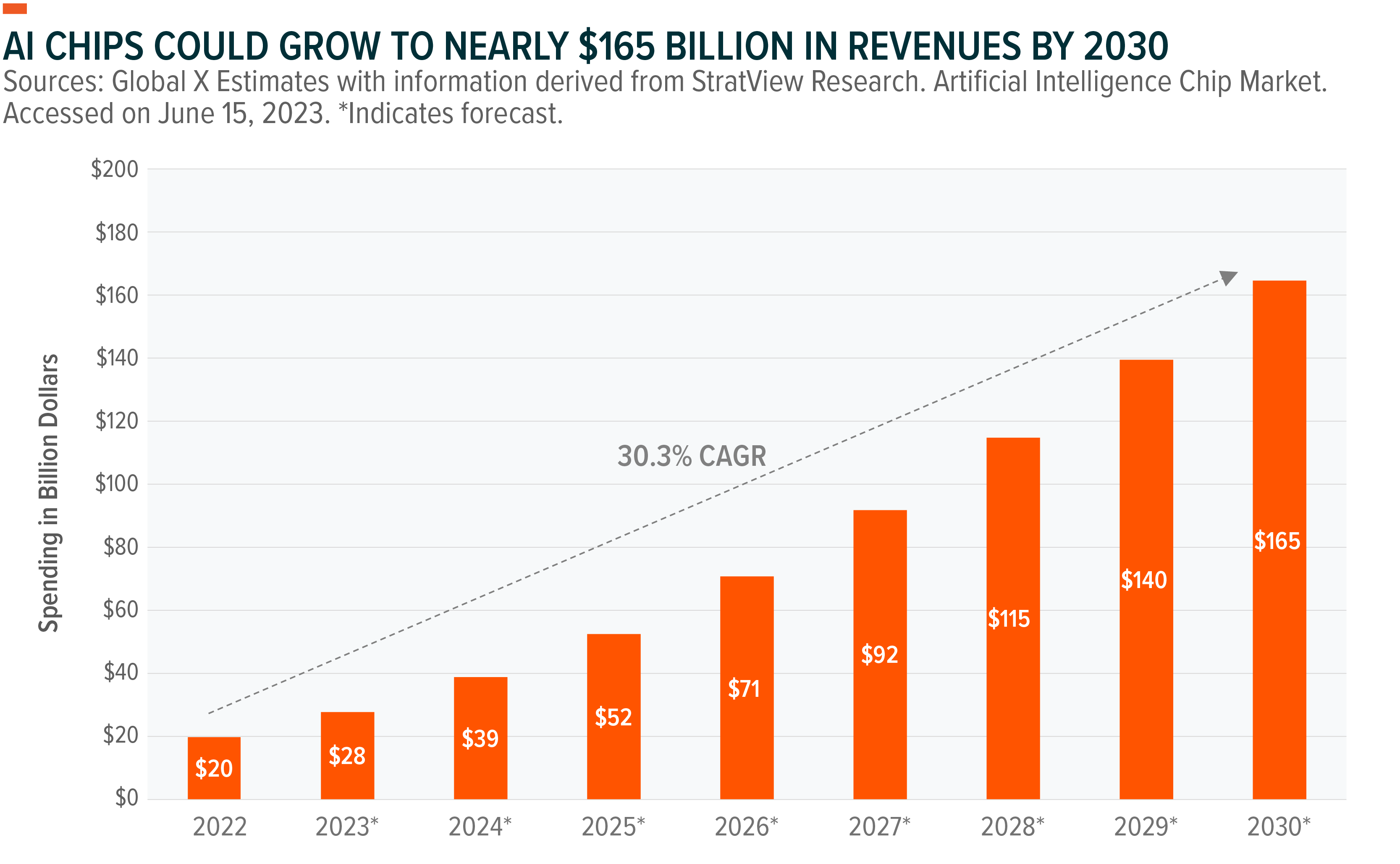

As the range of artificial intelligence (AI) applications expands, existing computing infrastructure, both in the data center and at the edge, will need to be rewired to support emerging needs for data-intensive computing. This migration is already underway, but the rapid adoption and enormous potential of large language models (LLMs) could accelerate that timeline. By the end of the decade, annual spending on AI chips is expected to grow at a compound annual growth rate (CAGR) of over 30% to nearly $165 billion.1 Graphics processing unit (GPU)-based chip providers are likely to benefit the most and remain the primary choice due to their superior performance and developer preference. Other related hardware, such as memory and network equipment, could also benefit from this wave.

Key Takeaways

- The range of AI applications is expanding and requires new computing infrastructure, which calls for significant investment in AI chips, memory and network equipment.

- While the majority of AI chips will be deployed in data centers and edge networks, device-based AI processors may also become commonplace, potentially opening up new markets for chip providers.

- AI infrastructure providers are considering investments and partnerships to protect themselves from shortages.

Infrastructure spending remains strong as demand for AI services rises

Large language models went mainstream in 2023. Foundation models like GPT-4 are available both through application programming interfaces (APIs) and via chat-based interfaces, creating a whirlwind of user and developer activity and making AI the defining battleground for technology companies.

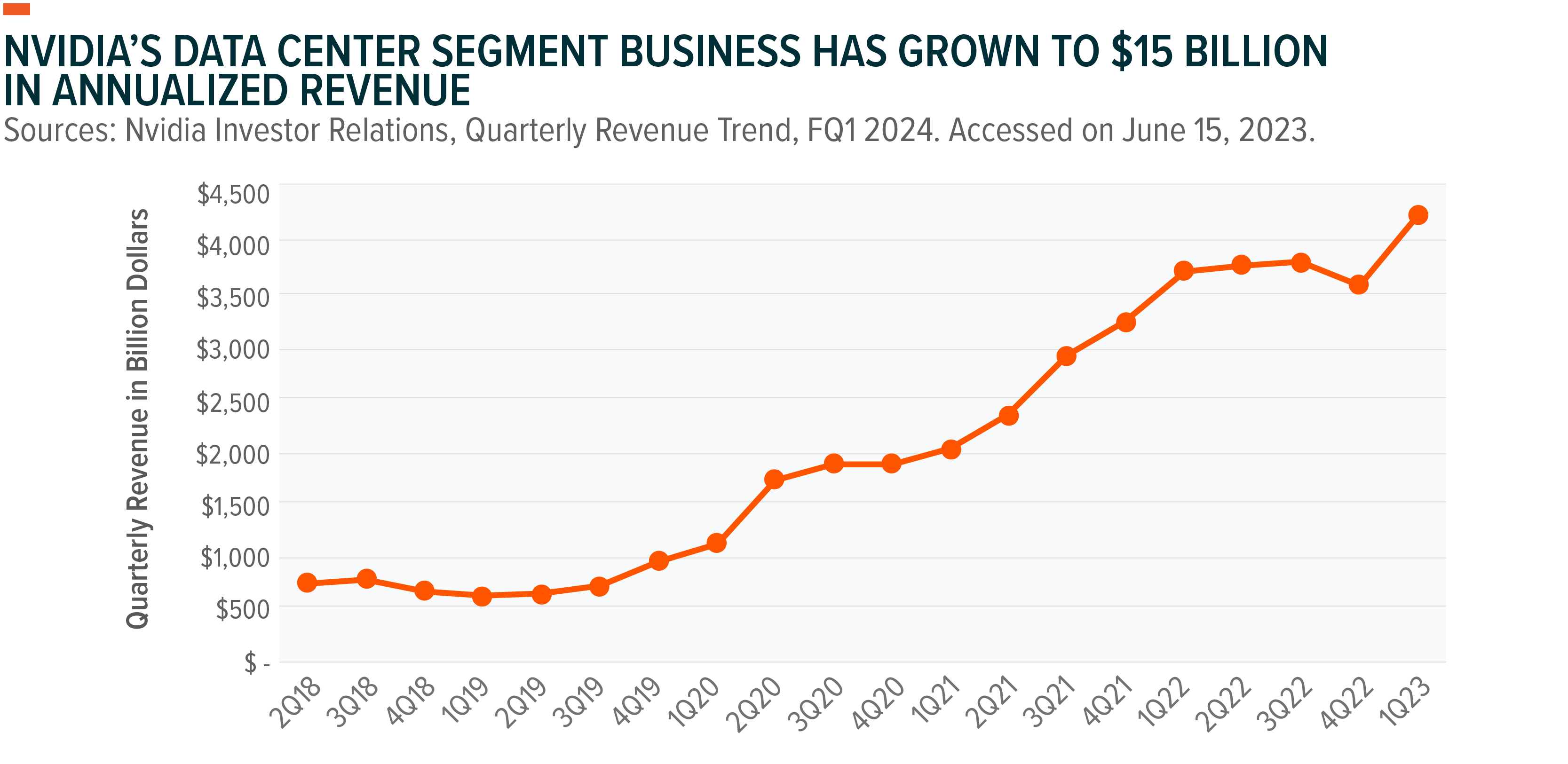

As adoption accelerates, so will demand for AI infrastructure providers. Nvidia, one of the leading suppliers of chips for training AI models, grew its data center segment revenue by nearly 14% year-over-year (YoY) in the first quarter of 2023 and issued second-quarter guidance more than 50% above the prior year's level.2 Similarly, Microsoft executives said they expect AI-as-a-service and machine learning workloads to drive near-term growth even as broader cloud growth slows.3

The demand for AI has raised concerns about the sustained availability of the hardware needed to train, test, and deploy models.4 We believe we are likely at the beginning of a long-term investment cycle that could drive record growth for AI hardware and infrastructure providers. A large portion of this spending is likely to go toward replacing legacy computing stacks in the data center and at the edge with hardware that can effectively support the widespread adoption of data-intensive computing and AI.

Hyperscale cloud service providers have expanded their infrastructure capital spending, with more than 20% growth over the past five years, to support a wide range of enterprise digital transformation mandates.5 Spending on AI-driven infrastructure upgrades is also likely to accelerate over the next five years, creating exciting opportunities for data center semiconductor vendors.

Artificial intelligence makes GPUs a necessity

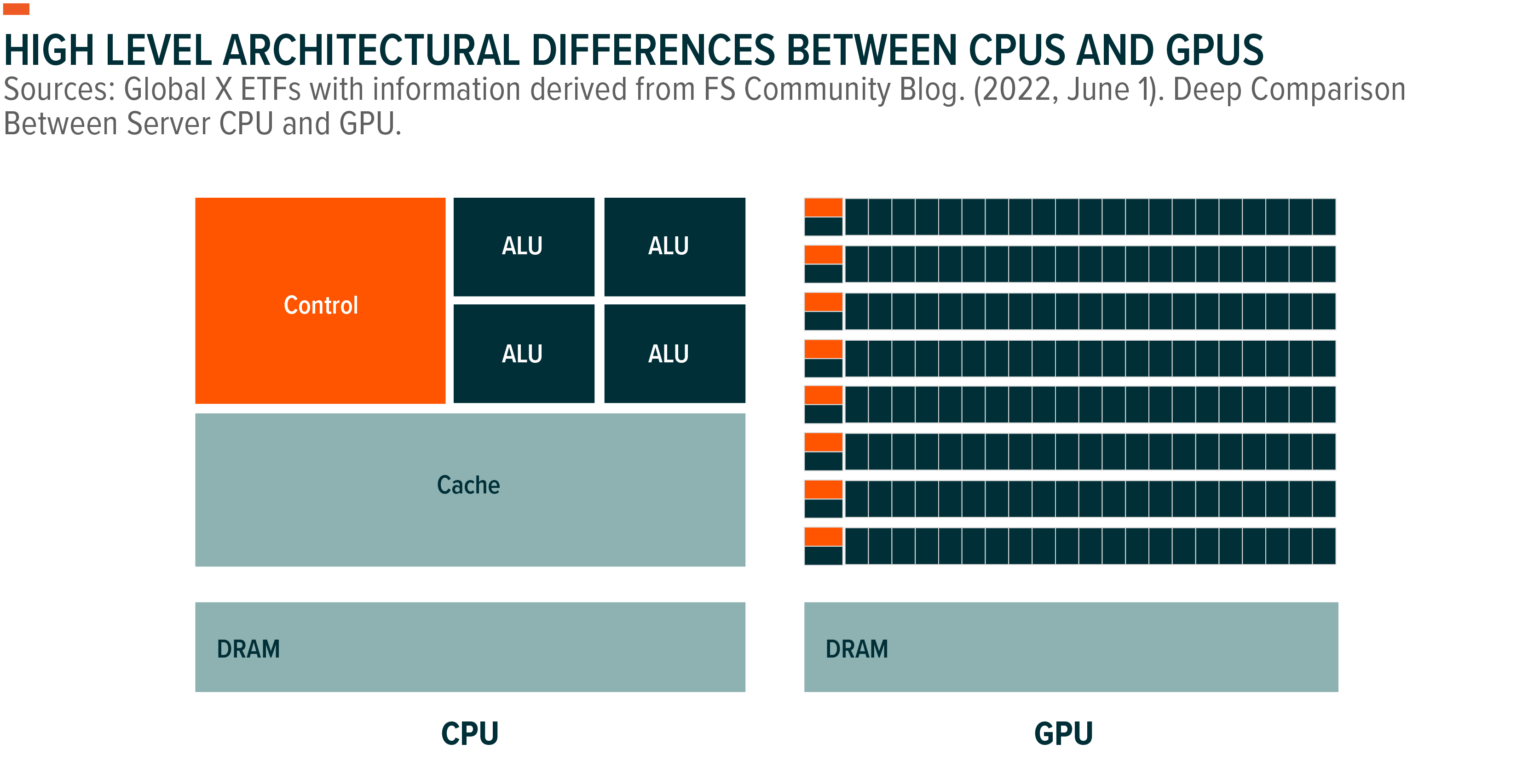

Traditional processors, also known as CPUs, were designed to efficiently execute general-purpose computing tasks one at a time.6 GPUs, by contrast, are designed to perform many relatively simple logic-based computations in parallel, which lets them process large amounts of data very efficiently. This makes them ideal for AI training, and they are often used for inference tasks as well.7
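
To illustrate the parallelism point, the following toy Python sketch frames a single neural-network layer as a set of small dot products. The function names and numbers are hypothetical, and the CPU/GPU contrast is simplified (modern CPUs also have multiple cores and vector units). The point is only that each output depends on its own weight row, so the work splits into independent tasks of the kind a GPU can run side by side.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import partial


def dot(row, x):
    """One small, simple computation: multiply matching entries and add them up."""
    return sum(w * v for w, v in zip(row, x))


def layer_sequential(weights, x):
    """Sequential framing: compute each output one after another in a single loop."""
    outputs = []
    for row in weights:
        outputs.append(dot(row, x))
    return outputs


def layer_parallel(weights, x, workers=4):
    """Data-parallel framing: every row is dispatched as an independent task.
    Threads only illustrate the dispatch pattern; in standard CPython builds the
    GIL prevents a real speedup for pure-Python arithmetic. A GPU would instead
    run thousands of such tasks on separate hardware threads."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with the rows.
        return list(pool.map(partial(dot, x=x), weights))


weights = [[1, 2, 3], [4, 5, 6], [7, 8, 9], [0, 1, 0]]  # hypothetical layer weights
x = [1, 2, 3]                                          # hypothetical input vector
print(layer_sequential(weights, x))
print(layer_parallel(weights, x))
```

Both functions return the same outputs; the difference is only in how the independent pieces of work are scheduled.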

In 2022, $16 billion worth of GPUs were deployed in AI acceleration use cases worldwide.8 Nvidia is the dominant supplier, with nearly 80% market share in GPU-based AI acceleration.9 Nvidia's investment in software frameworks, including the CUDA architecture, allows developers and engineers to harness the computational efficiency of GPUs, giving the company a sustained edge over competitors that lack similar software support.

Outside of GPUs, field-programmable gate arrays (FPGAs) and application-specific integrated circuits (ASICs) make up the rest of the AI chip market. Combined with GPUs, AI chips generated approximately $19.6 billion in revenue in 2022, an estimated 50% increase year over year.10 Over the next eight years, total spending is expected to grow at an annual rate of nearly 30%, surpassing $165 billion by 2030.11
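
As a rough consistency check on these figures, the sketch below computes the compound annual growth rate implied by moving from $19.6 billion in 2022 to $165 billion in 2030. It assumes the 2022 figure is the base of the 2030 projection; the helper names are illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value, rate, years):
    """Value after compounding `start_value` at `rate` per year for `years` years."""
    return start_value * (1 + rate) ** years


START_2022_B = 19.6    # 2022 AI chip revenue, $B (from the text)
TARGET_2030_B = 165.0  # 2030 projection, $B (from the text)
YEARS = 2030 - 2022    # eight compounding periods

implied = cagr(START_2022_B, TARGET_2030_B, YEARS)
print(f"Implied CAGR 2022-2030: {implied:.1%}")
print(f"$19.6B compounded at 30% for 8 years: ${project(START_2022_B, 0.30, YEARS):.1f}B")
```

Under that assumption the implied rate is about 30.5% a year, and compounding at exactly 30% lands just under $160 billion, so the growth rate and the 2030 target agree to within rounding.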

As AI chips gain prominence within data centers, we think spending on premium CPUs could slow as CPUs are demoted from primary processing units to mere control units that essentially direct and distribute workloads across clusters of GPUs. This dynamic could put pressure on high-end data center CPU suppliers like Intel, which has built a multibillion-dollar franchise selling CPUs over the past three decades, while tilting the market in favor of lower-cost CPU providers like Qualcomm and Marvell.

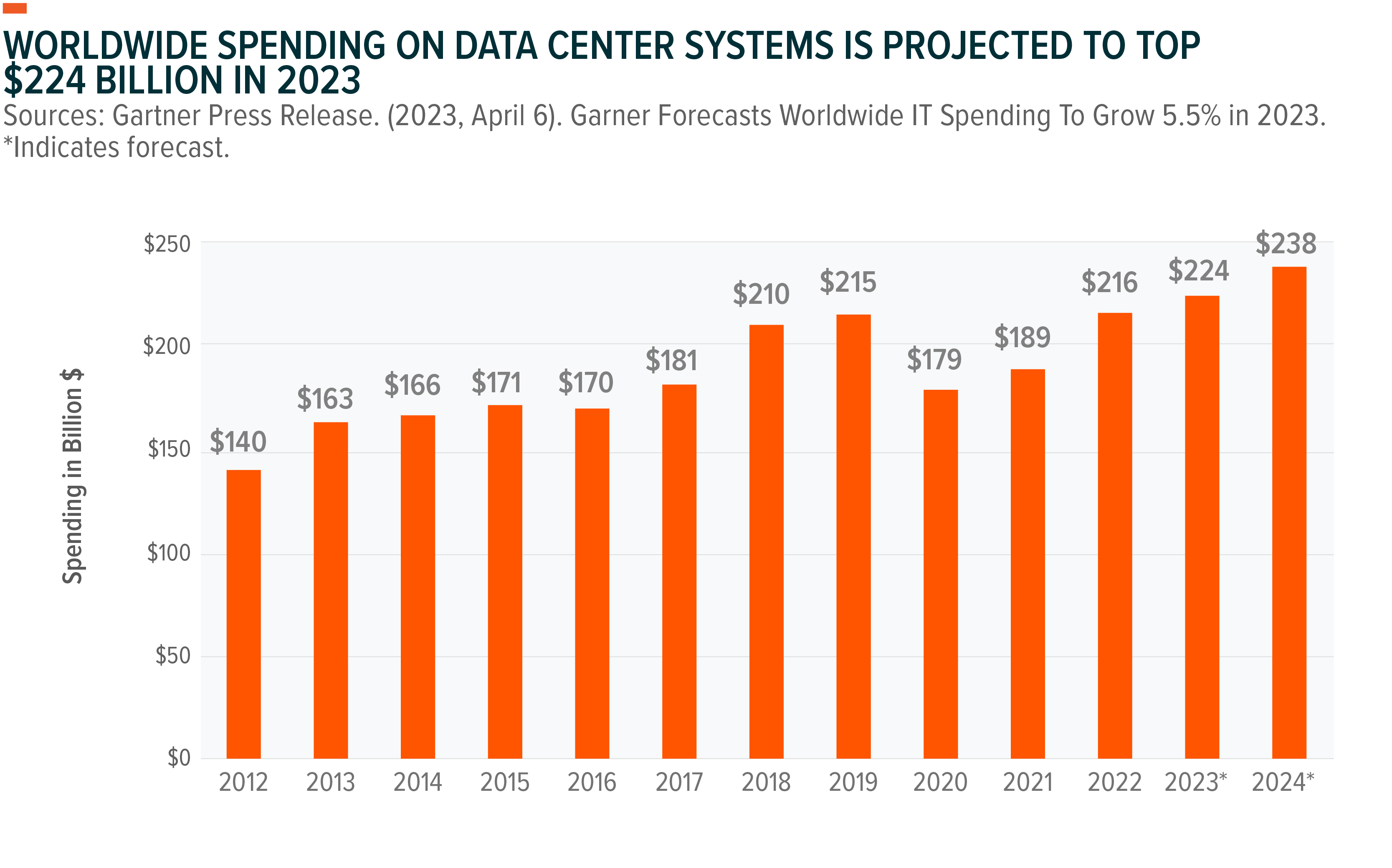

IT buyers spend nearly $224 billion annually on data center systems, including processors, memory and storage, network components, and other hardware products.12 Assuming data center systems are replaced every four years, over $1 trillion worth of computing infrastructure is likely to be replaced over the next few years, with AI-first hardware becoming prevalent amid the rapid adoption of generative AI. This could be a generational opportunity for AI solutions.
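
The replacement-cycle reasoning can be made concrete with a small cumulative-spend calculation starting from the $224 billion annual figure. The flat-spending case and the 10% growth rate in the example are hypothetical assumptions chosen for illustration, not figures from the source, and the helper names are illustrative.

```python
def cumulative_spend(annual_spend, years, growth=0.0):
    """Total spend over `years`, starting at `annual_spend` ($B) and
    compounding at `growth` per year (0.0 means flat spending)."""
    return sum(annual_spend * (1 + growth) ** t for t in range(years))


def years_to_reach(target, annual_spend, growth=0.0):
    """Smallest whole number of years for cumulative spend to reach `target`."""
    if annual_spend <= 0 or growth < 0:
        # Guard against inputs for which the loop below might never finish.
        raise ValueError("annual_spend must be positive and growth non-negative")
    total, years = 0.0, 0
    while total < target:
        total += annual_spend * (1 + growth) ** years
        years += 1
    return years


ANNUAL_SPEND_B = 224.0       # annual data center systems spend, $B (from the text)
REPLACEMENT_CYCLE_YEARS = 4  # replacement-cycle assumption from the text
HYPOTHETICAL_GROWTH = 0.10   # illustrative assumption, not a sourced figure

print(f"One flat 4-year cycle: ${cumulative_spend(ANNUAL_SPEND_B, REPLACEMENT_CYCLE_YEARS):.0f}B")
print(f"Years to $1T, flat: {years_to_reach(1000.0, ANNUAL_SPEND_B)}")
print(f"Years to $1T, 10% growth: {years_to_reach(1000.0, ANNUAL_SPEND_B, HYPOTHETICAL_GROWTH)}")
```

With flat spending, one four-year cycle totals about $896 billion and cumulative outlays pass $1 trillion in the fifth year; under the hypothetical 10% growth rate they pass $1 trillion within four years.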

Beyond data center and high-performance computing use cases, AI chips in vehicles for autonomous driving, edge computing, and mobile devices could further drive demand for specialized hardware such as networking equipment, high-speed memory, and cooling systems.

Chip Shortage Concerns May Stimulate Investments and Partnerships

AI processing hardware is in short supply as developers and engineers rush to bring AI-first products to market. There is a six-month backlog on new GPU orders, and prices for Nvidia's A100 and H100 GPU lineup have skyrocketed, with the chips selling at a premium on the secondary market.13, 14

Wary of supply shortages and over-reliance on chip suppliers, hyperscalers are developing their own AI processing hardware, an in-house capability that could prove crucial in meeting the AI demand they generate. Alphabet, for example, runs its Bard AI system on an internally designed chip called the Tensor Processing Unit (TPU), which is also available to external customers through Google Cloud services.15 Microsoft is also working on its own AI chips, alongside low-cost ARM-based CPUs.16 Private-market ventures are likewise looking to capitalize on the looming shortage of AI computing capacity: over the past five years, more than $6 billion in external capital has been invested in AI chip ventures.17

Partnerships are another growing trend in addressing chip shortages. Microsoft has struck a multibillion-dollar deal with GPU-as-a-service provider CoreWeave, which acquires GPU computing capacity and offers it as a service to the broader market.18 Amazon Web Services has partnered with Nvidia to give Amazon's cloud customers predictable and consistent access to Nvidia's Hopper GPUs. The dedicated instances combine Nvidia hardware with AWS networking and scalability solutions to give developers up to 20 exaflops of computing performance.19

AI is still in the early-adopter and experimental stage, so most hardware demand at this point comes from technologically advanced companies. However, we believe the spread of AI will benefit the entire semiconductor value chain in the near term, including foundries, chip designers, and semiconductor equipment suppliers. Multi-trillion-dollar markets such as advertising, e-commerce, digital media and entertainment, online services, communications, and productivity could also increase spending on turnkey hardware setups. We also believe the industry is likely to see increased deal activity, with major accelerator vendors considering acquisitions of adjacent component companies to enhance R&D and product innovation.

Bottom Line: There is No AI Without Dedicated AI Hardware

The AI boom could drive an upgrade cycle for data centers that favors new computing stacks with GPUs at their core. The rapid adoption of LLMs could cause demand for AI processing to surge and accelerate spending on specialty chips, potentially opening up a $100+ billion market for GPUs in the near future.20 Meanwhile, major cloud hyperscalers are likely to keep investing in R&D to build and deploy their own chips, cutting costs by reducing their reliance on large chip providers. Demand for AI chips may be volatile, but we believe the semiconductor value chain is well positioned to seize this opportunity, creating potential investment avenues as AI penetrates new markets.

Related ETFs

AIQ – Artificial Intelligence and Technology ETF

BOTZ – Robotics and Artificial Intelligence ETF

Holdings are subject to change. Current and future holdings are subject to risk.