In this new era of artificial intelligence (AI), it’s important to understand the basics of AI to answer important questions such as when it makes sense to use AI tools, how much you should invest in improving them, and whether you should use them at all.

The excitement around AI has sparked a gold rush for data center development in the United States and around the world. This construction boom has led to a corresponding increase in electricity demand. A recent UCS analysis found that without clear policies to support investments in clean energy, this surge in AI-driven data center deployments is likely to increase health and climate costs and lead to higher electricity bills for households and businesses. In this post, I do not intend to answer the fundamental questions posed above, but my goal is to provide important background for an informed discussion about artificial intelligence and its applications. Let’s take a closer look.

What is AI?

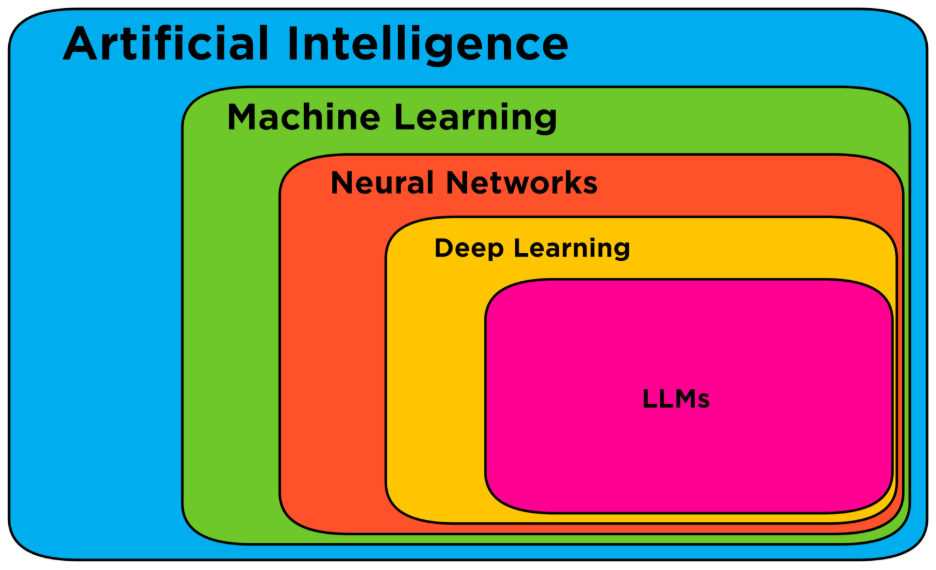

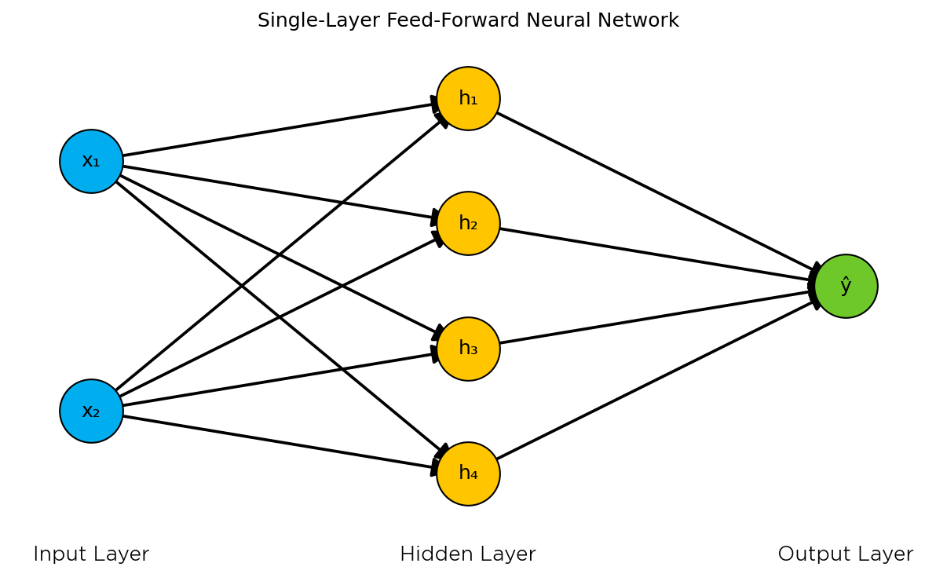

Before we discuss AI in the literal sense, we need to clarify what we mean by AI more generally. The diagram above is a simplified guide to the vast field of AI. In a broad sense, AI refers to any system that attempts to replicate human intelligence. Under the umbrella of AI is the field of machine learning (ML). The idea of machine learning is to apply statistical algorithms to known data to make predictions in new situations. Recommendation algorithms are a common example of machine learning, used by companies like Netflix and Spotify. There’s a veritable zoo of algorithms out there that change depending on the type of data used and the questions asked of those data. But one of the most popular algorithms is called a “neural network,” so called because it is inspired by neurons in the brain. The diagram below shows a simple neural network.

In a neural network, input data activates nodes in the input layer, which in turn activate nodes in the hidden layers in a cascading fashion until the signal reaches the output layer, similar to how neurons connect and fire in the brain. As additional computing resources allowed the number of nodes (or neurons) to grow, researchers began calling these ever-larger neural networks “deep learning.” Interestingly, “deep learning” was the buzzword of the day until OpenAI released ChatGPT (built on GPT 3.5) in late 2022. Now it’s AI. This brings us to large language models (LLMs), which are a specific type of deep learning architecture. Most readers know the term AI in the context of LLMs such as ChatGPT, Claude, and Gemini. Since LLMs are the most familiar form of AI, and since LLMs are a subcategory of neural networks, the rest of this blog post will focus only on AI in this context.
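To make the cascade from input layer to output layer more concrete, here is a minimal sketch of a tiny neural network in Python. Every number in it is made up for illustration, and the layer sizes (2 inputs, 3 hidden nodes, 1 output) and the `sigmoid` activation are assumptions chosen to keep the example small. Real networks have vastly more nodes.

```python
import math


def sigmoid(x: float) -> float:
    """Squash any number into the range (0, 1), a simple stand-in for a node 'firing'."""
    return 1.0 / (1.0 + math.exp(-x))


def layer(inputs, weights, biases):
    """Each node sums its weighted inputs, adds its bias, and applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]


# Hypothetical toy network: 2 inputs -> 3 hidden nodes -> 1 output node.
hidden_weights = [[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]]
hidden_biases = [0.0, 0.1, -0.1]
output_weights = [[0.7, -0.5, 0.2]]
output_biases = [0.05]


def forward(inputs):
    """Pass the input through the hidden layer, then through the output layer."""
    hidden = layer(inputs, hidden_weights, hidden_biases)
    return layer(hidden, output_weights, output_biases)


result = forward([1.0, 0.0])
print(result)  # a single number between 0 and 1
```

Even this toy network stores 13 numbers (9 for the hidden layer plus 4 for the output layer). Scaling that idea up is what produces the very large models discussed in the rest of this post.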

Now, in a literal sense, an AI model is a large file of numbers called “weights” and “biases,” which together encode the model’s assumptions (often called parameters). In this discussion, we will simply refer to them as assumptions. These assumptions are held in the computer’s memory while the model runs. There are many AI models that you can run locally on a desktop or laptop computer. However, popular LLMs such as Claude, ChatGPT, and Gemini can require hundreds of gigabytes (GB) of memory and a number of powerful computer chips known as graphics processing units (GPUs).

Storage and memory and why AI models run on GPUs

You may be familiar with hard drives or solid state drives (SSDs) for storing data on your computer. The cost of storage has come down significantly over the past few years, and as of this writing, you can get a 1 TB SSD for around $150. A drive like that could certainly hold an LLM, but storage is not where computers do calculations (at least, not quickly). You can think of storage like a storage locker: a place to keep things you want to hold on to but don’t use regularly.

On the other hand, computers also have something called random access memory (RAM). RAM holds information that your computer needs to access quickly. You can think of it like counter space in your kitchen: it limits how many pots you can have simmering and how many vegetables you can prepare at the same time. For computers, when you have many browser tabs and programs open at the same time, your computer has to keep track of all those different tasks, which consumes a lot of RAM. High-end computers with dedicated GPUs have a third type of resource: video RAM (VRAM). VRAM is set aside for demanding graphics work such as video games and 3D rendering, and now for AI.

How does AI work?

AI works by taking some input or set of inputs and applying the model’s assumptions to that input. Since computers only understand numbers, text input is first broken into chunks called “tokens.” Each token is then mapped to a list of numbers called an “embedding” or “vector.” These vectors specify where a word sits in the space of all possible words and encode its semantic meaning. Just as every place on Earth has a unique longitude and latitude, so too does every word have unique vector coordinates.
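As a rough illustration of the text-to-tokens-to-vectors step, the sketch below uses a made-up five-word vocabulary and made-up two-number vectors. Both are assumptions for illustration only: real tokenizers split text into sub-word chunks, and real embeddings have hundreds or thousands of coordinates that are learned during training.

```python
# Hypothetical toy vocabulary: each known token gets an integer ID.
vocab = {"an": 0, "apple": 1, "a": 2, "day": 3, "penguin": 4}

# Hypothetical embedding table: row i is the vector for token ID i.
embeddings = [
    [0.1, 0.3],   # "an"
    [0.9, 0.8],   # "apple"
    [0.1, 0.2],   # "a"
    [0.4, 0.1],   # "day"
    [-0.7, 0.5],  # "penguin"
]


def tokenize(text):
    """Split text into lowercase word tokens (a simplification of real tokenizers)."""
    return text.lower().split()


def embed(text):
    """Look up the vector 'coordinates' for every token in the text."""
    return [embeddings[vocab[token]] for token in tokenize(text)]


tokens = tokenize("An apple a day")
vectors = embed("An apple a day")
print(tokens)
print(vectors)
```

Like longitude and latitude, each vector is just a set of coordinates. Words with related meanings end up near each other once the table has been learned.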

An AI model’s assumptions are refined through many iterations in a process called “training.” In this process, the model is asked questions and adjusted based on how close it was to the correct answer. For example, you can ask an AI model to fill in the blanks: “Eating ______ a day will keep the doctor away!” At first, the AI model might give gibberish answers like “penguin.” But because every word is represented as a vector, you can see exactly how far your model was from the correct answer in your training data, and more importantly, know exactly how your AI model needs to adjust its assumptions to get closer to the correct word.
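The idea of measuring how far off an answer was, and then adjusting, can be sketched in a few lines. This is a deliberately simplified stand-in for real training: the vectors, the learning rate, and the number of steps are all made up, and the code nudges the model's guess directly rather than adjusting billions of assumptions through a full network.

```python
import math

target = [0.9, 0.8]       # made-up vector for the correct word, "apple"
prediction = [-0.7, 0.5]  # made-up starting guess, sitting on "penguin"
learning_rate = 0.1       # how big each adjustment is (an assumed value)


def distance(a, b):
    """Straight-line distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


history = [distance(prediction, target)]
for step in range(50):
    # The gradient of the squared distance says which way, and how strongly,
    # each coordinate should move to get closer to the target.
    gradient = [2 * (p - t) for p, t in zip(prediction, target)]
    prediction = [p - learning_rate * g for p, g in zip(prediction, gradient)]
    history.append(distance(prediction, target))

print(history[0], history[-1])  # the error shrinks as the steps accumulate
```

Each pass through the loop is one small correction. Real training repeats this kind of correction across enormous datasets, which is why it takes so much data and computation.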

This training process takes many iterations and large amounts of data to produce good results. This is why AI companies scrape vast portions of the internet to build training datasets. Once a model is trained, its assumptions are fixed and it can be used to make predictions when someone enters a prompt or other input data (this is often called “inference”). AI companies do not publish a breakdown of the amount of energy used in training versus inference. Although training carries a larger, more computationally intensive up-front cost, the share of AI workloads devoted to inference is expected to exceed that of training in the near future, if it has not already.

Why do we need data centers to power AI?

So why do you need a data center to power your AI? The answer comes down to scale. GPUs achieve their speed because they can perform many calculations in parallel (similar to multiple checkout lines at the grocery store). However, as mentioned earlier, modern AI models require huge amounts of memory.

OpenAI’s GPT 3.5 model has 175 billion assumptions and requires approximately 350 GB of memory, while GPT 5 is estimated to have 1.7 to 1.8 trillion assumptions. The most advanced GPU on the market (as of this writing, NVIDIA’s H100 GPU) has 80 GB of VRAM, so a single instance of GPT 3.5 requires 5 GPUs. For comparison, high-end gaming computers typically have 10 GB of VRAM. As of December 2025, ChatGPT has approximately 900 million weekly visitors, so it’s not hard to see why OpenAI, one of the leading AI companies, owns a reported 1 million GPUs.
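The arithmetic behind those figures can be sketched as follows. The one assumption not stated in the text is that each model assumption is stored as a 16-bit number (2 bytes), which is what the 350 GB and 175 billion figures imply when divided. The function names are hypothetical.

```python
import math

BYTES_PER_ASSUMPTION = 2  # assumed: 16-bit numbers, implied by 350 GB / 175 billion
GPU_VRAM_GB = 80          # VRAM of one H100, as quoted in the text


def memory_gb(num_assumptions: int) -> float:
    """Memory needed just to hold the model's assumptions, in gigabytes."""
    return num_assumptions * BYTES_PER_ASSUMPTION / 1e9


def gpus_needed(num_assumptions: int) -> int:
    """Whole GPUs needed to fit the model; a fraction of a GPU rounds up."""
    return math.ceil(memory_gb(num_assumptions) / GPU_VRAM_GB)


gpt35_memory = memory_gb(175_000_000_000)
gpt35_gpus = gpus_needed(175_000_000_000)
print(gpt35_memory, gpt35_gpus)  # 350.0 5
```

This counts only the memory for the assumptions themselves. Serving many users at once takes many such instances, which is where the very large GPU counts come from.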

Housing these vast collections of GPUs requires specialized facilities, which range in size from enterprise data centers serving small businesses and organizations to hyperscale data centers operated by tech giants. The largest of these facilities can draw more than 100 MW of power, which is enough to power a small city. Power demand from data centers surged 131% between 2018 and 2023, driven primarily by AI training and deployment. Future AI-focused facilities are planned at gigawatt scale.

What does AI do?

What AI does is make predictions based on some input and its built-in assumptions. This means that an AI system doesn’t “see” things. You can ask an AI model, “What color is the sky?” and it might say “blue,” but that’s only because its training data contained enough examples linking the sky with the color blue. What color is the sky? According to the AI, it is blue (98.5223% probability).

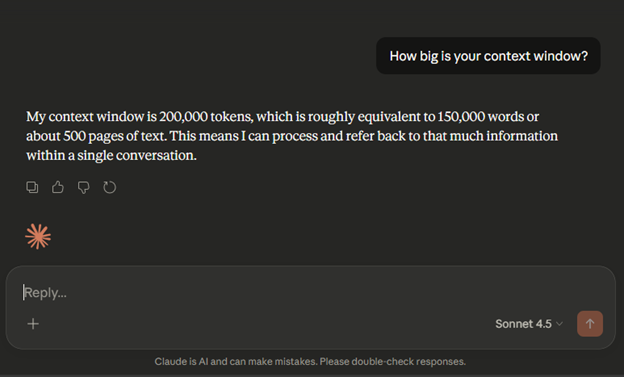
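One common way a model turns its internal scores into a probability for each candidate answer is a function called softmax. The sketch below applies it to made-up scores for four candidate words. The words and scores are purely illustrative assumptions and do not come from any real model.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtracting the highest score first keeps the exponentials numerically stable.
    exps = {word: math.exp(score - highest) for word, score in scores.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}


# Hypothetical raw scores for the next word after "The color of the sky is ..."
scores = {"blue": 6.0, "gray": 1.5, "orange": 0.5, "penguin": -3.0}

probabilities = softmax(scores)
best = max(probabilities, key=probabilities.get)
print(best, probabilities[best])
```

The model never looks at the sky. It simply reports which word scored highest, and how much of the total probability that word received.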

AI models also do not continuously learn. As mentioned earlier, once an AI model is trained, its assumptions are static. If the LLM appears to be “learning” things about you through conversation, it’s because that information is still within the context window (the maximum amount of information the AI can process at one time).
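A simplified way to picture the context window is as a fixed-size view onto the most recent part of the conversation. In the sketch below, the window size of 8 and the use of whole words as tokens are assumptions for illustration. Real context windows hold many thousands of tokens, and real systems handle overflow in a variety of ways.

```python
CONTEXT_WINDOW = 8  # hypothetical limit, counted in word tokens


def visible_context(conversation, window=CONTEXT_WINDOW):
    """Return only the most recent tokens that still fit in the window."""
    tokens = " ".join(conversation).split()
    return tokens[-window:]


conversation = [
    "My name is Sam.",
    "I like hiking.",
    "What is the weather like today?",
]

visible = visible_context(conversation)
print(visible)
```

In this toy example the name "Sam" has already fallen out of view by the third message. The model's assumptions were never updated, so once information leaves the window, the model no longer has access to it.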

Finally, AI models are not (yet) general. This means that no model exceeds human cognitive capacity across all tasks. Different machine learning models may work well for some tasks and poorly for others. For example, LLMs have proven to be good at generating text and bits of code, but may not be useful for predicting wind speed or stock prices. This is probably the most important lesson from this blog post: all AI models have limitations, and understanding what those limitations are is essential to deciding how to use AI.

Where do we go from here?

From drafting emails and generating images with LLMs, to AI climate models filling gaps in historical climate records, to media streaming services recommending the next song or TV show, AI is already impacting the way we work and live.

Despite the incredible potential of AI, the environmental and social costs associated with its development and use expose serious trade-offs. The same fundamental machine learning algorithms that enable climate scientists to create detailed models of the Earth’s atmosphere, and energy companies to optimize renewable energy generation, are also driving significant increases in power consumption from data centers in the form of generative AI models such as LLMs. Increased demand for electricity from data centers is also driving up electricity bills for consumers. Understanding these trade-offs and making decisions about them should not be left solely to technology companies. It takes all of us.

As the UCS analysis mentioned at the beginning makes clear, the decisions we make now about how we power our data centers and invest in clean energy will shape the costs and benefits of AI for years to come. The more the public is informed about what AI is and how it works, the better equipped we will be to have these conversations.