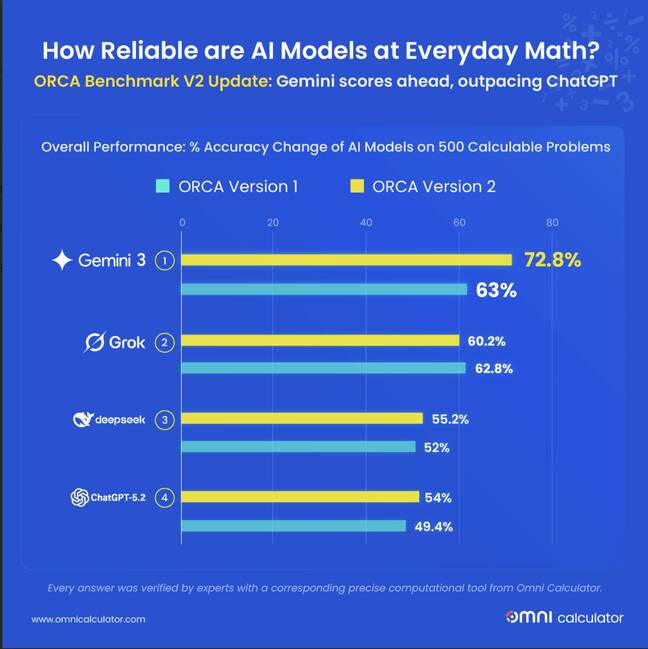

Exclusive Modern LLMs are prediction engines: they find the most likely answer to a problem, which isn’t necessarily the correct one. Most popular models have gotten better at math, but even the top performer, Gemini 3 Flash, would earn only a C on a letter-grade scale.

Researchers at Omni Calculator, a maker of online calculators for specific applications, put AI models to the test with the company’s new ORCA benchmark, which consists of 500 practical math questions.

In an initial evaluation last November, OpenAI’s ChatGPT-5, Google’s Gemini 2.5 Flash, Anthropic’s Claude Sonnet 4.5, xAI’s Grok 4, and DeepSeek’s DeepSeek V3.2 (alpha) all performed poorly on the math problems, with none scoring above 63 percent.

The latest round covers ChatGPT-5.2, Gemini 3 Flash, Grok 4.1, and DeepSeek V3.2 (stable). Sonnet 4.5 was not re-evaluated because it was unchanged and no successor was released during the testing period.

Details of this second round of testing, provided to The Register ahead of publication, show that all models improved except Grok 4.1, which took a step back.

Gemini 3 Flash’s accuracy reached 72.8 percent, an improvement of 9.8 percentage points over the previous generation. DeepSeek V3.2 reached 55.2 percent, up 3.2 percentage points from the alpha version. ChatGPT-5.2 achieved 54.0 percent, an improvement of 4.6 percentage points. Grok 4.1 dropped to 60.2 percent, a decrease of 2.6 percentage points.

Graph showing ORCA test results for AI models

“Computers are predictable,” ORCA researcher Dawid Siuda said in a statement. “If you ask the same question today and next year, the answer will be the same. AI doesn’t work that way. These systems are predicting the next likely word based on patterns. Mathematically speaking, a model might get the question right today, but it might get it wrong tomorrow.”

The researchers assessed the variability of the models’ responses using a metric they call “instability,” a measure of how often a model changes its answer when asked the same question twice.

Gemini 3 Flash was the most stable, changing its answer on only 46.1 percent of the questions it got wrong. ChatGPT changed its answers 65.2 percent of the time, the researchers reported, and DeepSeek V3.2 changed its answer on 68.8 percent of its errors.
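As a rough illustration of how such a metric could be computed, the Python sketch below assumes instability is the share of initially incorrect answers that change when the question is asked a second time. The `Trial` record, the `instability` function, and the sample data are hypothetical and are not taken from the ORCA methodology.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Trial:
    """One question asked twice (hypothetical record layout)."""
    expected: str  # reference answer
    first: str     # model's answer on the first ask
    second: str    # model's answer on the repeat ask


def instability(trials: List[Trial]) -> float:
    """Share of initially wrong answers that changed on the second ask.

    Assumption: only questions answered incorrectly the first time count.
    """
    wrong = [t for t in trials if t.first != t.expected]
    if not wrong:
        return 0.0
    changed = sum(1 for t in wrong if t.second != t.first)
    return changed / len(wrong)


# Made-up sample data, for illustration only.
trials = [
    Trial(expected="42", first="42", second="42"),     # correct, ignored
    Trial(expected="3.5", first="3.4", second="3.5"),  # wrong, then changed
    Trial(expected="10", first="12", second="12"),     # wrong, repeated
    Trial(expected="7", first="8", second="9"),        # wrong, changed again
]

print(f"instability: {instability(trials):.1%}")
```

Under this reading, a lower figure means a model tends to repeat the same wrong answer, while a higher one means its errors shift from one attempt to the next.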

ORCA researchers note that the gains vary by domain. They say DeepSeek’s accuracy on biology and chemistry questions improved from 10.5 percent to 43.9 percent. Gemini 3 Flash’s accuracy on math and conversions rose from 83 percent to 93.2 percent. Meanwhile, Grok 4.1 lost 9 percentage points of accuracy on health and sports questions and 5.3 percentage points on biology and chemistry.

Researchers speculate that recent updates to Grok may prioritize features other than quantitative reasoning.

Calculation errors now account for 39.8 percent of all errors, up from 33.4 percent, while rounding errors fell from 34.7 percent to 25.8 percent. From this, the ORCA group concludes that AI models still struggle with arithmetic even as they get better at making math look correct through formatting.

“AI models are essentially predictive engines, not logic engines,” Siuda told The Register by email. “They deal with probability, so they basically guess the next most likely number or word based on patterns they’ve seen so far. It’s like a student who memorizes all the answers in a math book but never actually learns addition.”

Siuda said this has long been known about the models and hasn’t changed.

“They might get the answer right most of the time, but the moment they’re given a unique problem, a tricky problem, or a multi-step challenge, they stumble because they haven’t really calculated anything,” he said. “It’s probably not possible to completely close this gap with current technology, but if we can integrate LLMs and function calls well enough, we might be able to do it.”

Function calling (handing operations off to a deterministic source) is one way to avoid incorrect math from a model.
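To make the idea concrete, here is a minimal Python sketch of a deterministic calculator that a model could call instead of guessing at arithmetic. The `calculate` and `handle_tool_call` names and the shape of the `call` dictionary are assumptions made for illustration; they do not correspond to any particular vendor’s function-calling API.

```python
import ast
import operator
from typing import Union

Number = Union[int, float]

# Only plain arithmetic is allowed; anything else is rejected.
_BINARY = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY = {ast.USub: operator.neg, ast.UAdd: operator.pos}


def calculate(expression: str) -> Number:
    """Evaluate an arithmetic expression deterministically, without eval()."""

    def _eval(node: ast.AST) -> Number:
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY:
            return _BINARY[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY:
            return _UNARY[type(node.op)](_eval(node.operand))
        raise ValueError("unsupported expression")

    return _eval(ast.parse(expression, mode="eval").body)


def handle_tool_call(call: dict) -> dict:
    """Dispatch a hypothetical tool-call request emitted by a model."""
    if call.get("name") != "calculate":
        raise ValueError(f"unknown tool: {call.get('name')!r}")
    result = calculate(call["arguments"]["expression"])
    return {"name": "calculate", "result": result}


# The model supplies the expression; the arithmetic itself is done in code.
request = {"name": "calculate", "arguments": {"expression": "(3 + 4) * 2"}}
print(handle_tool_call(request))
```

The same input always produces the same output here, which is the property the researchers say next-word prediction lacks. The model still has to choose the right expression to send.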

“Big AI companies like Google and OpenAI are already doing this by having their AI call functions and do the actual calculations,” Siuda explained. “The real headaches occur when long, thorny problems occur. The AI has to track every little outcome at every step, and it usually ends up overwhelmed and confused.”

Another potential avenue for improvement is teaching models to verify their responses through formal proofs. As described in Nature last November, Google DeepMind developed a system that reached silver-medal standard at the International Mathematical Olympiad using reinforcement learning on formal proofs written in Lean, a programming language and proof assistant.
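For a sense of what a machine-checked statement looks like, here is a tiny Lean 4 sketch that uses only the core library. It is an illustration of formal verification in general, not DeepMind’s method, and `add_comm_example` is a name made up for this example.

```lean
-- The checker accepts this only if both sides compute to the same value.
example : 17 * 23 = 391 := rfl

-- A general statement, proved by citing an existing core lemma.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b
```

If the first line claimed 392 instead, Lean would reject it rather than produce a plausible-looking answer.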

But for now, don’t trust AI. ®