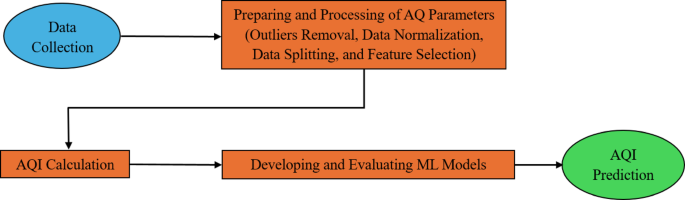

This study proceeded in three main stages: (1) preparation and processing of the air quality data, (2) calculation of the AQI, and (3) development and evaluation of the ML models. The adopted framework is shown in Figure 1.

Figure 1. Flow chart of the machine learning approach for AQI prediction.

Data preparation and processing

The air pollution data used in this study are available online from Mendeley Data as a real-time air pollution monitoring dataset generated using IoT [22]. The data were recorded hourly from January 1 to December 31, 2022, by an IoT-based monitoring system in Ghazipur, Bangladesh. The dataset contains the concentrations of six pollutants (PM2.5, PM10, CO, NO2, SO2, and O3), which were used to calculate the air quality index (AQI). The AQI was calculated following the US Environmental Protection Agency (EPA) methodology, using the linear interpolation equation adopted by the Department of Environment (DOE) of Bangladesh together with the national air quality breakpoints (see Table 1). For each pollutant p, the sub-index \(I_p\) was calculated using Eq. (1) [23], and the overall AQI was taken as the maximum of the six sub-indices.

$$I_p = \frac{I_{high} - I_{low}}{C_{high} - C_{low}}\left(C_p - C_{low}\right) + I_{low}$$

(1)

where:

\(I_p\) = AQI sub-index for pollutant p

\(C_p\) = measured concentration of pollutant p

\(C_{low}\) = concentration breakpoint that is ≤ \(C_p\)

\(C_{high}\) = concentration breakpoint that is ≥ \(C_p\)

\(I_{low}\) = index breakpoint corresponding to \(C_{low}\)

\(I_{high}\) = index breakpoint corresponding to \(C_{high}\)
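A minimal Python sketch of Eq. (1) and the max-of-sub-indices rule is given below. It assumes each pollutant's breakpoints are stored as contiguous (C_low, C_high, I_low, I_high) bands; the breakpoint numbers in `HYPOTHETICAL_BREAKPOINTS` and the function names are illustrative placeholders, not the national values listed in Table 1.

```python
from typing import Dict, List, Tuple

# One band per row: (C_low, C_high, I_low, I_high).
Band = Tuple[float, float, float, float]

# HYPOTHETICAL breakpoints for illustration only; substitute the Table 1 values.
HYPOTHETICAL_BREAKPOINTS: Dict[str, List[Band]] = {
    "PM2.5": [(0.0, 15.0, 0.0, 50.0), (15.0, 65.0, 50.0, 100.0), (65.0, 150.0, 100.0, 150.0)],
    "PM10": [(0.0, 50.0, 0.0, 50.0), (50.0, 150.0, 50.0, 100.0), (150.0, 250.0, 100.0, 150.0)],
}


def sub_index(c_p: float, bands: List[Band]) -> float:
    """Eq. (1): interpolate linearly inside the band whose limits enclose c_p."""
    for c_low, c_high, i_low, i_high in bands:
        if c_low <= c_p <= c_high:
            return (i_high - i_low) / (c_high - c_low) * (c_p - c_low) + i_low
    raise ValueError(f"concentration {c_p} lies outside the breakpoint table")


def overall_aqi(concentrations: Dict[str, float], table: Dict[str, List[Band]]) -> float:
    """Overall AQI = maximum sub-index over the pollutants supplied."""
    return max(sub_index(c, table[p]) for p, c in concentrations.items())


if __name__ == "__main__":
    sample = {"PM2.5": 40.0, "PM10": 80.0}  # hypothetical hourly concentrations
    print(overall_aqi(sample, HYPOTHETICAL_BREAKPOINTS))  # 75.0, driven by PM2.5
```

Here PM2.5 = 40 falls in the 15 to 65 band and maps to 75, while PM10 = 80 maps to 65, so the overall AQI is 75. Published breakpoint tables often leave small gaps between bands (e.g., 12.0 and 12.1); with such a table the concentration would first need to be truncated to the table's precision, or the bounds closed, before this lookup is used.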

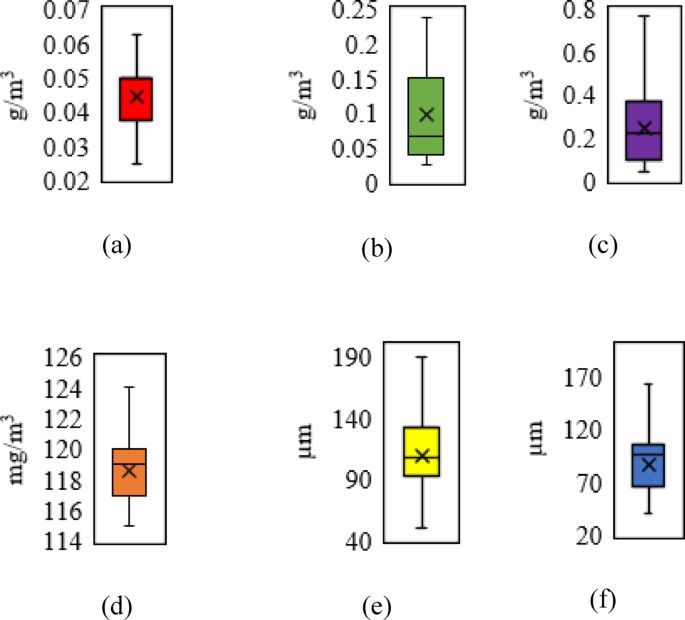

To ensure data quality, box plots (Figure 2) were first used to identify and remove outliers from the raw concentration values of each pollutant. Each box plot displays the distribution of one pollutant in its measurement unit: PM2.5 and PM10 (µg/m³), CO (mg/m³), and SO2, NO2, and O3 (µg/m³). After outlier removal, all variables were normalized to the 0 to 1 range using min-max scaling, which puts the features on a common scale suitable for machine learning algorithms while preserving the shape of their original distributions. The cleaned dataset was split into training (80%) and testing (20%) sets. To reduce sampling bias and improve generalizability, training and testing were repeated several times, and 10-fold cross-validation was performed to assess model stability.

Figure 2. Boxplots of input and output parameters: (a) sulfur dioxide, (b) nitrogen dioxide, (c) ozone, (d) carbon monoxide, (e) particulate matter (d ≤ 2.5 µm), and (f) particulate matter (2.5 µm ≤ d ≤ 10 µm).
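The preprocessing steps described above (box-plot outlier removal, min-max scaling, and an 80/20 split) can be sketched with the Python standard library as follows. The source does not state the whisker length, so the usual Tukey convention of 1.5 × IQR is an assumption, as are the function names and the fixed random seed; the sketch filters a single pollutant series, whereas how the study dropped whole hourly records is not specified.

```python
import random
import statistics
from typing import List, Sequence, Tuple


def iqr_bounds(values: Sequence[float], k: float = 1.5) -> Tuple[float, float]:
    """Box-plot whisker limits [Q1 - k*IQR, Q3 + k*IQR] (k = 1.5 is ASSUMED)."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def remove_outliers(values: Sequence[float], k: float = 1.5) -> List[float]:
    """Keep only the points that lie inside the box-plot whiskers."""
    low, high = iqr_bounds(values, k)
    return [v for v in values if low <= v <= high]


def min_max_scale(values: Sequence[float]) -> List[float]:
    """Map values linearly onto [0, 1]; the distribution's shape is unchanged."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def train_test_split(n: int, train_frac: float = 0.8, seed: int = 0) -> Tuple[List[int], List[int]]:
    """Shuffle row indices and cut them into training and testing subsets."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    cut = int(round(train_frac * n))
    return idx[:cut], idx[cut:]


if __name__ == "__main__":
    pm25 = [10.0, 12.0, 11.0, 13.0, 12.0, 95.0]  # hypothetical hourly values with one spike
    kept = remove_outliers(pm25)                  # the 95.0 spike is dropped
    print(min_max_scale(kept))
    train_idx, test_idx = train_test_split(len(kept))
    print(train_idx, test_idx)
```

For the six hypothetical values, the inclusive quartiles are 11.25 and 12.75, so the whiskers span 9.0 to 15.0 and only the 95.0 reading is removed.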

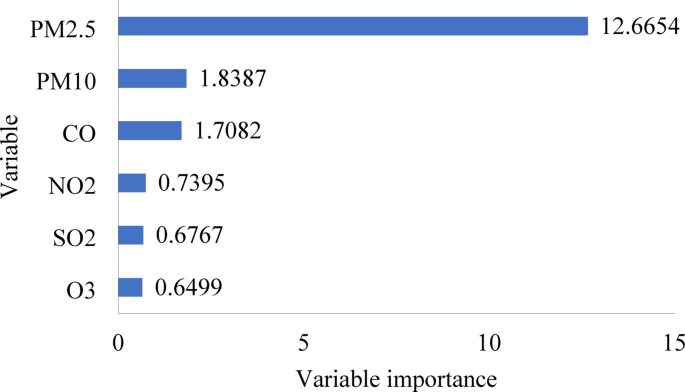

To identify the most influential input variables for AQI prediction, a random forest was used to assess feature importance. This technique captures nonlinear relationships and interactions between variables, providing a robust, data-driven basis for feature selection. The analysis showed that PM2.5 had the highest importance score (12.6654), followed by PM10 (1.8387) and CO (1.7082). Although PM2.5 and PM10 were moderately correlated (r = 0.3014, Pearson correlation coefficient, Eq. (2)), both were retained because each made a clear and substantial contribution to AQI prediction. In contrast, NO2 (0.7395), SO2 (0.6767), and O3 (0.6499) had lower importance scores and were excluded from the final model. Figure 3 summarizes these importance scores in a bar chart, making the variable selection process clear, transparent, and reproducible, in line with best practice in machine learning-based environmental modeling.

Figure 3. Bar chart of random forest variable importance.

$$r = \frac{\sum_{i=1}^{n}\left(x_i - \overline{x}\right)\left(y_i - \overline{y}\right)}{\sqrt{\sum_{i=1}^{n}\left(x_i - \overline{x}\right)^{2}}\,\sqrt{\sum_{i=1}^{n}\left(y_i - \overline{y}\right)^{2}}}$$

(2)

where:

\(r\) = Pearson correlation coefficient

\(x_i, y_i\) = individual values of the two variables x and y

\(\overline{x}, \overline{y}\) = means of x and y, respectively

\(n\) = number of data points
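Eq. (2) and the top-three selection can be checked with the short sketch below. `pearson_r` is a direct transcription of Eq. (2); the importance scores are the values reported in the text, and keeping the k highest-ranked pollutants with k = 3 is our reading of the selection step rather than a documented threshold. The random forest that produced the scores is not reproduced here, and the function names are illustrative.

```python
import math
from typing import Dict, List, Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Eq. (2): sum of cross-deviations over the product of root sums of squares."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("r is undefined for a constant series")
    return cov / (sx * sy)


# Random forest importance scores as reported in the text.
REPORTED_IMPORTANCE: Dict[str, float] = {
    "PM2.5": 12.6654, "PM10": 1.8387, "CO": 1.7082,
    "NO2": 0.7395, "SO2": 0.6767, "O3": 0.6499,
}


def top_k_features(scores: Dict[str, float], k: int) -> List[str]:
    """Return the k feature names with the highest importance, best first."""
    return sorted(scores, key=scores.get, reverse=True)[:k]


if __name__ == "__main__":
    print(top_k_features(REPORTED_IMPORTANCE, 3))  # ['PM2.5', 'PM10', 'CO']
    print(pearson_r([1, 2, 3, 4, 5], [2, 4, 6, 8, 10]))  # approximately 1.0
```

On Python 3.10 and later, `statistics.correlation(x, y)` computes the same Pearson coefficient and can serve as a cross-check.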

Development and evaluation of ML models

The Regression Learner app is a graphical interface provided within MATLAB's Statistics and Machine Learning Toolbox [24]. It simplifies the development and analysis of regression models for predictive modeling tasks, offering an intuitive interface for interactive data exploration, model training, performance evaluation, and prediction. This study used five regression techniques: Gaussian process regression (GPR), ensemble regression (ER), support vector machine (SVM), regression tree (RT), and kernel approximation regression (KAR). Each model was selected for its theoretical suitability and its previous success in environmental forecasting tasks. GPR is robust to noise and stochastic outputs. ER improves generalization by aggregating multiple base learners. SVM is well suited to high-dimensional data spaces. RT offers interpretability and simplicity. KAR enhances the model's ability to capture complex, nonlinear relationships.

Models were trained on the standardized input variables (PM2.5, CO, and PM10), and hyperparameters were tuned to optimize performance. To ensure robustness and minimize overfitting, all models were evaluated with 10-fold cross-validation, which partitions the data into ten folds and, in turn, trains on nine folds while validating on the remaining one; this reduces the bias and variance of the performance estimate. Performance was assessed using the established regression metrics R², RMSE, and MAE. Table 2 summarizes the configuration and optimized hyperparameters of each model.
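The 10-fold procedure can be illustrated with the standard-library sketch below. It is not the Regression Learner implementation: the fold assignment (shuffle once, then deal indices round-robin), the seed, and the stand-in one-feature least-squares model `fit_line` are hypothetical choices made only to show how each fold serves exactly once as the validation set while the other nine are used for training.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple


def k_fold_indices(n: int, k: int = 10, seed: int = 0) -> List[Tuple[List[int], List[int]]]:
    """Shuffle 0..n-1 once, deal the indices into k folds, use each fold once for validation."""
    if not 2 <= k <= n:
        raise ValueError("k must satisfy 2 <= k <= n")
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[f::k] for f in range(k)]  # fold sizes differ by at most one
    splits = []
    for f in range(k):
        train = [i for g, fold in enumerate(folds) if g != f for i in fold]
        splits.append((train, folds[f]))
    return splits


def fit_line(x: Sequence[float], y: Sequence[float]) -> Callable[[float], float]:
    """HYPOTHETICAL stand-in model: one-feature ordinary least squares."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    intercept = my - slope * mx
    return lambda v: intercept + slope * v


def cross_val_rmse(x: Sequence[float], y: Sequence[float], k: int = 10, seed: int = 0) -> List[float]:
    """Train on k-1 folds, compute RMSE on the held-out fold, repeat for every fold."""
    scores = []
    for train, val in k_fold_indices(len(x), k, seed):
        model = fit_line([x[i] for i in train], [y[i] for i in train])
        sq_err = [(y[i] - model(x[i])) ** 2 for i in val]
        scores.append(math.sqrt(sum(sq_err) / len(sq_err)))
    return scores


if __name__ == "__main__":
    xs = [float(v) for v in range(50)]                  # hypothetical predictor values
    ys = [2.0 * v + 1.0 + (-1) ** int(v) for v in xs]   # hypothetical target with +/-1 noise
    fold_rmse = cross_val_rmse(xs, ys)
    print(len(fold_rmse), sum(fold_rmse) / len(fold_rmse))  # fold count and mean RMSE
```

Averaging the ten per-fold scores gives the cross-validated estimate; their spread indicates how stable the model is across different partitions of the data.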

Performance assessment of machine learning models

Model evaluation is essential when predicting AQI with ML, and the Regression Learner app reports three key metrics: the mean absolute error (MAE), the root mean square error (RMSE), and the coefficient of determination (R²). These statistical indicators are defined by the following equations:

(a) MAE

This metric measures the magnitude of the prediction errors regardless of their direction (sign). The MAE is the average absolute deviation between observed and predicted values across the test sample and is calculated using Eq. (3):

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left| x_i - y_i \right|$$

(3)

where:

\(n\) = number of data points

\(x_i\) = actual value

\(y_i\) = predicted value
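As a worked illustration of Eq. (3), the sketch below computes the MAE for four hypothetical AQI observations and predictions (the numbers are invented for illustration, not results from this study).

```python
from typing import Sequence


def mae(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Eq. (3): mean of the absolute differences |x_i - y_i|."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(x - y) for x, y in zip(actual, predicted)) / len(actual)


if __name__ == "__main__":
    actual = [50, 80, 120, 150]     # hypothetical observed AQI
    predicted = [55, 78, 110, 152]  # hypothetical model output
    print(mae(actual, predicted))   # (5 + 2 + 10 + 2) / 4 = 4.75
```

Because every error contributes in proportion to its size, the single 10-point miss counts only twice as much as a 5-point miss.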

(b) RMSE

RMSE also measures the magnitude of the error. It is the square root of the mean of the squared differences between actual and predicted values, as given in Eq. (4):

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(x_i - y_i\right)^{2}}$$

(4)

where:

\(x_i\) = actual observation

\(y_i\) = predicted value

\(n\) = number of data points
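The sketch below applies Eq. (4) to four hypothetical AQI observations and predictions (illustrative numbers only). It shows how squaring before averaging lets the largest error dominate the result.

```python
import math
from typing import Sequence


def rmse(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Eq. (4): square root of the mean squared difference between x_i and y_i."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((x - y) ** 2 for x, y in zip(actual, predicted)) / len(actual)
    return math.sqrt(mse)


if __name__ == "__main__":
    actual = [50, 80, 120, 150]     # hypothetical observed AQI
    predicted = [55, 78, 110, 152]  # hypothetical model output
    print(rmse(actual, predicted))  # sqrt((25 + 4 + 100 + 4) / 4) = sqrt(33.25), about 5.77
```

The 10-point miss contributes 100 of the 133 total squared error. This is why RMSE is never smaller than MAE on the same data and grows faster when a few predictions are far off.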

(c) R²

The coefficient of determination measures how much of the variance in the observed data is explained by the model's predictions, that is, the proportion of the total variation in the actual values accounted for by the model. It usually lies between 0 and 1, and values approaching 1 indicate that the predictions closely match the observed values; under Eq. (5) it can also become negative when a model fits worse than simply predicting the mean of the actual values. It is calculated as shown in Eq. (5):

$$R^{2} = 1 - \frac{\sum_{i=1}^{n}\left(x_i - y_i\right)^{2}}{\sum_{i=1}^{n}\left(x_i - \overline{x}\right)^{2}}$$

(5)

where:

\(x_i\) = actual value

\(y_i\) = predicted value

\(\overline{x}\) = mean of the actual values

\(n\) = number of data points
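A direct transcription of Eq. (5) is sketched below with four hypothetical AQI observations (illustrative numbers only). The second call feeds deliberately reversed predictions to show that R² drops below zero when a model does worse than predicting the mean.

```python
from typing import Sequence


def r_squared(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Eq. (5): 1 - residual sum of squares / total sum of squares about the mean."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_x = sum(actual) / len(actual)
    ss_res = sum((x - y) ** 2 for x, y in zip(actual, predicted))
    ss_tot = sum((x - mean_x) ** 2 for x in actual)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when all actual values are equal")
    return 1 - ss_res / ss_tot


if __name__ == "__main__":
    actual = [50, 80, 120, 150]                         # hypothetical observed AQI
    print(r_squared(actual, [55, 78, 110, 152]))        # 1 - 133/5800, about 0.977
    print(r_squared(actual, list(reversed(actual))))    # 1 - 23200/5800 = -3.0
```

The total sum of squares here is 5800 (deviations of 50, 20, 20, and 50 from the mean of 100), so a residual sum of 133 leaves about 97.7% of the variance explained.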