Wikipedia has announced a new policy that prohibits editors from using artificial intelligence (AI) to generate or rewrite article content.

Wikipedia, the popular free online encyclopedia, is restricting the use of AI on its platform under a new policy that prohibits editors from using large language models (LLMs) to create or rewrite articles.

Wikipedia’s policy states that “text generated by large language models (LLMs), such as ChatGPT, Gemini, and DeepSeek, often violates several of Wikipedia’s core content policies.”

Despite the new rules, the digital encyclopedia carves out two exceptions, for copyediting and translation. The policy cautiously allows editors to use AI tools to suggest basic copyedits to their own writing, but only if the LLM does not add new content. Using LLMs to translate articles is also still allowed, provided editors follow the relevant guidance.

“Editors are permitted to use LLM to suggest basic copy edits to their writing and incorporate some of it after human review, as long as LLM does not introduce original content,” the policy states. “Editors are permitted to use LLM to translate Wikipedia articles in other languages into English Wikipedia, but must follow the guidance set out in Wikipedia:LLM Assisted Translation.”

Wikipedia did not specify penalties for using AI-generated content, but its LLM guidelines state that comments obviously generated by an LLM or similar AI technology “may be struck or collapsed. Repeated such misuse may form a pattern of disruptive editing and may lead to a block or ban.”

Following the announcement of the new policy, a spokesperson for the Wikimedia Foundation, the nonprofit organization that supports Wikipedia, told a news outlet that the platform’s editorial policies and guidelines are set by its volunteer editors rather than by the Foundation, adding: “Wikipedia’s strength has always been, and will continue to be, our human-centered, volunteer-driven model.”

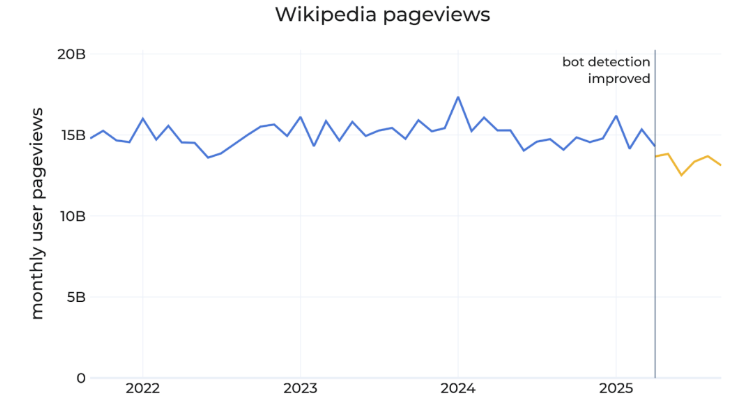

In October, the Wikimedia Foundation revealed that the number of human visitors to its sites had fallen 8% year-on-year as search engines and AI chatbots increasingly gave users direct answers, often drawn from the platform’s content.

“Bots and crawlers continue to have a significant impact on traffic data to Wikimedia projects,” Wikimedia said in a blog post. “Over the past few months, human page views on Wikipedia have declined by approximately 8% compared to the same months in 2024. We believe these declines reflect the impact of generative AI and social media on how people search for information.”

Yet just this January, the Wikimedia Foundation announced partnerships with companies developing AI, including Microsoft (NASDAQ: MSFT), Google (NASDAQ: GOOGL), Amazon (NASDAQ: AMZN), and Meta (NASDAQ: META). The agreements allow these companies to use Wikipedia content through Wikimedia Enterprise, a commercial product that facilitates large-scale reuse of the platform’s materials.

How can we hold AI and its creators accountable?

As the use of generative AI grows, so does its darker side: deepfakes, the hyper-realistic AI-generated video and audio that can be used to spread fake news.

Jurisdictions around the world have sought solutions that would bring much-needed accountability to the creators and users of AI applications. While some regions, such as the European Union, are moving quickly to introduce regulations such as the AI Act, others have yet to develop clear strategies. A further concern is whether such regulations can be effectively enforced.

There may be a silver lining: blockchain can strengthen accountability for AI by creating a tamper-proof, time-stamped record of how a system is built, trained, and used. With key details such as data inputs, model changes, and deployment decisions recorded on an immutable public ledger, harm caused by deepfakes and flawed LLMs can be traced back to its source.

“Blockchain can play a very important role by simply creating a world-wide, unalterable journal that records the truth and describes exactly what happened,” explained Udacity founder Sebastian Thrun at the London Blockchain Conference 2025.

Blockchain technology inherently “records the truth” because entries cannot be altered once they are written. With blockchain, every AI data source would carry a transparent history that users, regulators, and other stakeholders can inspect.
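To make the idea of a tamper-evident, time-stamped record concrete, here is a minimal sketch in Python. It is not a blockchain: it is a single-process hash chain with no network, consensus, or public ledger, and it only illustrates why altering a past entry becomes detectable. The names `Entry`, `append`, and `verify`, the event fields, and the timestamps are all hypothetical and chosen for illustration.

```python
import hashlib
import json
from dataclasses import dataclass

# Placeholder "previous hash" for the first entry (an assumption of this sketch).
GENESIS_HASH = "0" * 64


@dataclass(frozen=True)
class Entry:
    index: int
    timestamp: float
    event: dict       # e.g. a data input, model change, or deployment decision
    prev_hash: str    # hash of the previous entry, linking the chain
    entry_hash: str   # hash over this entry's own fields


def _digest(index, timestamp, event, prev_hash):
    """SHA-256 over a canonical JSON encoding of the entry's fields."""
    payload = json.dumps(
        {"index": index, "timestamp": timestamp, "event": event, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log, event, timestamp):
    """Return a new log with one more entry chained to the last one."""
    prev_hash = log[-1].entry_hash if log else GENESIS_HASH
    index = len(log)
    entry = Entry(index, timestamp, event, prev_hash,
                  _digest(index, timestamp, event, prev_hash))
    return log + [entry]


def verify(log):
    """Recompute every hash and link; any edit to a past entry returns False."""
    prev_hash = GENESIS_HASH
    for i, entry in enumerate(log):
        if entry.index != i or entry.prev_hash != prev_hash:
            return False
        expected = _digest(entry.index, entry.timestamp, entry.event, entry.prev_hash)
        if entry.entry_hash != expected:
            return False
        prev_hash = entry.entry_hash
    return True


# Hypothetical events; the field names and values are illustrative only.
log = []
log = append(log, {"type": "data_input", "source": "dataset-v1"}, 1700000000.0)
log = append(log, {"type": "model_change", "version": "1.1"}, 1700000600.0)
log = append(log, {"type": "deployment", "target": "production"}, 1700001200.0)
print(verify(log))

# Rewriting history: swap the first event but keep its old hash.
first = log[0]
tampered = [Entry(first.index, first.timestamp,
                  {"type": "data_input", "source": "other-dataset"},
                  first.prev_hash, first.entry_hash)] + log[1:]
print(verify(tampered))
```

The first check succeeds and the second fails, because the stored hash no longer matches the altered event. A real ledger would add distribution and consensus so that no single party could simply recompute the whole chain after making a change.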

For artificial intelligence (AI) to function properly within the law and succeed in the face of growing challenges, it must integrate an enterprise blockchain system that ensures the quality and ownership of data inputs, keeping data safe while guaranteeing its immutability. To learn more about why enterprise blockchain is the backbone of AI, check out CoinGeek’s coverage of this emerging technology.
