The idea of artificial intelligence, a machine that can think and reason in a way that approximates human ability, has been a recurring theme for centuries. Many ancient cultures expressed such ideas and pursued such goals. Early in the 20th century, science fiction began to crystallize these concepts for a modern mass audience. Stories such as The Wizard of Oz and films such as Metropolis resonated with audiences around the world.

In 1956, John McCarthy and Marvin Minsky hosted the Dartmouth Summer Research Project on Artificial Intelligence, during which the term artificial intelligence was coined, and the race for practical ways to realize old dreams began in earnest. The next 50 years saw AI development and enthusiasm rise and fall, but as computing power increased exponentially and the cost of computation plummeted to drive the digital age, AI moved decisively out of the realm of science fiction and into technological reality. In the early 2000s, investors poured billions of dollars into accelerating and deepening the capabilities of AI systems.

Recent technological advances highlight the importance of AI regulation

In 2023, generative artificial intelligence systems (tools that can create new and original content such as images, text, and audio) are ubiquitous in the public sphere. Enterprises are scrambling to understand, adopt, and effectively implement ChatGPT-4 and other large language models and algorithms. The potential benefits for businesses of all sizes are clear: improving process efficiency through automation, reducing human error, lowering costs, and discovering unknown and unexpected insights in a growing mountain of proprietary and public domain data.

Unsurprisingly, governments are focused on regulating AI. Long-standing concerns such as consumer protection, civil liberties, and fair business practices partly explain the interest in AI among governments around the world.

But at least as important as protecting the public from the downsides of AI is the competition among governments for supremacy in AI. Attracting talent and companies necessarily means creating a predictable and navigable regulatory environment in which AI companies can thrive.

In effect, governments face a Scylla and Charybdis of AI regulation. On the one hand, they are trying to protect their citizens from the real downsides of large-scale AI deployment; on the other, they need to align their governance regimes with a complex and rapidly changing technology that is sending shocks through society.

Global race to regulate AI systems

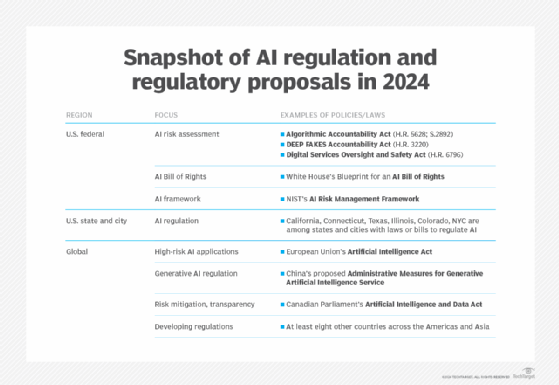

With the rapid evolution and adoption of AI tools, AI regulations and regulatory proposals are proliferating almost as fast as AI applications.

United States

In the United States, governments at nearly all levels are actively working to introduce new regulatory protections and related frameworks and policies aimed at simultaneously promoting AI development and curbing social harm from AI.

Federal regulation. AI risk assessment is now a top priority for the federal government. U.S. lawmakers are particularly sensitive to the difficulty of understanding how algorithms are created and how they arrive at certain outcomes. So-called black-box systems make it difficult to mitigate risks, map processes, and document public impact.

The Algorithmic Accountability Act (H.R. 6580; S. 3572) is currently being debated in Congress to address these issues. If it becomes law, companies using generative AI systems to make critical decisions in areas such as housing, healthcare, education, employment, family planning, and personal life will be required to assess the impact on the public both before and after the algorithm is used.

Similarly, the DEEP FAKES Accountability Act (H.R. 2395) and the Digital Services Oversight and Safety Act (H.R. 6796), if passed by Congress, would require companies to be transparent about the creation and publication of “false personation[s]” and of misinformation and disinformation created by generative AI, respectively.

And while it is not law, the White House Blueprint for an AI Bill of Rights is a formidable source of federal governance in the AI space. The Blueprint consists of five principles: Safe and Effective Systems; Algorithmic Discrimination Protections; Data Privacy; Notice and Explanation; and Human Alternatives, Consideration, and Fallback. They are meant to be voluntarily adopted by companies and integrated into the very design of AI systems.

Together with the AI Risk Management Framework promulgated by the National Institute of Standards and Technology, the Blueprint gives the U.S. federal government a powerful set of policies designed to protect Americans. Because this guidance applies to the largest U.S. employer and shapes the behavior of most federal agencies and the majority of their employees, it also puts pressure on private entities to follow the AI policy agenda. The influence of these “voluntary” suggestions and principles should not be underestimated.

U.S. State and City Regulations. States are also joining the race to regulate AI. California, Connecticut, Texas, and Illinois are each trying to strike the same balance as the federal government: fostering innovation while protecting their constituents from the downsides of AI. Colorado is perhaps the furthest along with its Algorithm and Predictive Model Governance Regulation, which imposes significant requirements on the use of AI algorithms by Colorado-licensed life insurance companies.

Similarly, local governments are enacting their own AI ordinances. New York City is leading the way with Local Law 144, which focuses on automated employment decision tools, meaning AI used in the context of HR activities. Other cities in the United States are likely to follow in New York City’s footsteps soon.

Global

In Europe, the main source of AI governance is the European Union’s Artificial Intelligence Act. Like the U.S. Blueprint for an AI Bill of Rights, the main purpose of the EU AI Act is to have developers who create or operate high-risk AI applications test their systems, document their use, and reduce risk by taking appropriate safeguards. The EU AI Act is expected to be passed by the end of 2023 and will apply to all 27 EU member states. Importantly, the EU AI Act is likely to trigger the “Brussels effect,” a large regulatory impact well beyond Europe.

The Cyberspace Administration of China is currently soliciting public comments on proposed administrative measures for generative artificial intelligence services, which would regulate a range of services provided to residents of mainland China.

The Canadian Parliament is currently considering the Artificial Intelligence and Data Act, a legislative proposal aimed at harmonizing AI laws across provinces and territories, with a particular focus on risk mitigation and transparency.

Across the Americas and Asia, at least eight other countries are in various stages of developing their own regulatory approaches to governing AI.

Potential impact of AI regulation on businesses in 2023

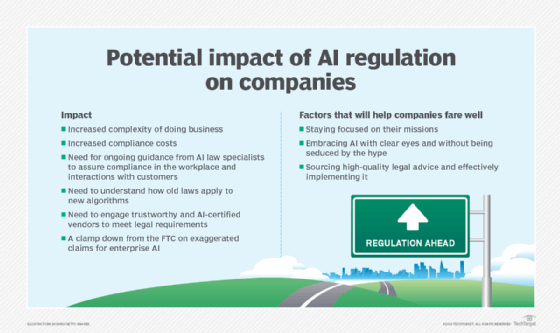

For companies based and doing business in one or more of the above jurisdictions, or looking for talent in those jurisdictions, this increased regulation has two implications.

First, businesses should expect a continued, and possibly accelerated, stream of new regulatory proposals and legislation across all major jurisdictions and all levels of government over the next 12 to 18 months.

Second, this increased regulatory activity is very likely to have at least two foreseeable consequences: increased business complexity and increased compliance costs. In the veritable jungle of AI regulation, ensuring compliance in the workplace and in client and customer interactions will require new expertise and perhaps regular updates from AI law professionals.

A particularly thorny issue is likely to be the interaction between AI policy and existing regulations. For example, in the U.S. context, the Federal Trade Commission has made clear its intention to crack down on exaggerated claims that companies make about AI. Companies will need to be particularly mindful of how old laws apply to new algorithms, rather than simply focusing on new laws with “AI” in the title.

Similarly, businesses will need to take care when working with the newly established vendors that have emerged to provide the certifications commonly required by law for various types of AI use. Finding a reputable vendor, or perhaps an accredited certification body, is essential not only to comply with new laws but also to demonstrate to consumers that AI systems are as safe as possible and to reassure insurers that the company is managing AI risk effectively.

The immediate future of AI regulation will almost certainly be a bumpy road. Companies that stay focused on their mission, embrace AI with clear eyes rather than being swayed by the hype, and obtain and act on quality legal advice will thrive.