Keeping up with a rapidly changing industry like AI is no easy task. So until AI can do it for you, here’s a quick rundown of last week’s coverage of the world of machine learning and notable research and experiments we didn’t cover individually.

In case it wasn’t already obvious, the competitive landscape in AI, especially the subfield known as generative AI, is hot. And it’s getting hotter. This week, Dropbox launched its first corporate venture fund, Dropbox Ventures, which the company said will focus on startups building AI-powered products that “shape the future of work.” Not to be outdone, AWS debuted a $100 million program to fund generative AI initiatives led by its partners and customers.

Certainly, a lot of money is being invested in the AI space. Salesforce Ventures, the VC arm of Salesforce, plans to pour $500 million into startups developing generative AI technology. Workday recently added $250 million to its existing VC fund specifically to back AI and machine learning startups. And Accenture and PwC have announced plans to invest $3 billion and $1 billion in AI, respectively.

But some may wonder if money can solve the unsolved problems in the AI field.

Speaking on a panel at a Bloomberg conference in San Francisco this week, Meredith Whittaker, president of secure messaging app Signal, argued that the technology behind some of today’s most talked-about AI apps is becoming dangerously opaque. She gave the example of someone going to a bank and asking for a loan.

That person may be denied the loan and have no idea that a system in the back, probably powered by a Microsoft API, determined from scraped social media that they were not creditworthy, Whittaker said. They are never going to know, she added, because there is no mechanism for them to find out.

The problem is not capital. Rather, it’s the current hierarchy of power, Whittaker says.

Whittaker said she has been at the table for 15 or 20 years, and that it makes no sense to sit at a table without power.

Of course, achieving structural change is much more difficult than hunting for cash, especially when that structural change does not necessarily favor the powers that be. And Whittaker warns of what might happen if there isn’t enough pushback.

As AI advances accelerate, so does its impact on society, she said, and we will continue down a “hype-filled path to AI” to the point of having very little agency over our personal and collective lives.

That should give the industry pause. Whether it actually will is another matter. That’s probably something we’ll hear discussed when she takes the Disrupt stage in September.

Other notable AI headlines from the past few days include:

- DeepMind’s AI controls robots: DeepMind says it has developed an AI model called RoboCat that can perform a range of tasks across different models of robotic arms. That alone is nothing new. But DeepMind claims it’s the first model that can adapt to solve multiple tasks using different robots in the real world.

- Robots learn from YouTube: Speaking of robots, CMU Robotics Institute assistant professor Deepak Pathak this week introduced VRB (Vision-Robotics Bridge), an AI system designed to train robotic systems by having them watch recordings of humans. The robot watches for several key pieces of information, such as contact points and trajectories, and then attempts to perform the task.

- Otter gets into the chatbot game: Auto-transcription service Otter this week announced a new AI-powered chatbot that lets attendees ask questions during and after meetings and helps them collaborate with teammates.

- EU calls for AI regulation: European regulators are at a crossroads over how to regulate AI in the region and, ultimately, its commercial and non-commercial use. The EU’s largest consumer group, the European Consumer Organisation (BEUC), weighed in with its own position this week, urging regulators to stop holding back and “launch an urgent investigation into the risks of generative AI” now.

- Vimeo launches AI-powered features: This week, Vimeo announced a suite of AI-powered tools designed to let users create scripts, record footage using a built-in teleprompter, and remove long pauses and unwanted disfluencies like “ahs” and “hmms” from recordings.

- Capital for synthetic voices: ElevenLabs, the viral AI-powered platform for creating synthetic voices, has raised $19 million in a new funding round. ElevenLabs quickly gained momentum after launching in late January. However, the publicity has not always been positive, especially since bad actors started abusing the platform for their own purposes.

- Convert speech to text: French AI startup Gladia has launched a platform that leverages OpenAI’s Whisper transcription model to convert any speech to text in near real time via an API. Gladia promises that for $0.61 it can transcribe an hour of audio, with the transcription process taking about 60 seconds.

- Harness embraces generative AI: Harness, a startup that makes toolkits to help developers work more efficiently, this week added a bit of AI to its platform. Harness can now automatically resolve build and deployment failures, find and fix security vulnerabilities, and make recommendations for controlling cloud costs.

Other machine learning

CVPR was held in Vancouver, Canada this week, and the talks and papers looked interesting enough that I wish I had gone. If you can only watch one, check out Yejin Choi’s keynote on the possibilities, impossibilities and paradoxes of AI.

Image credit: CVPR/YouTube

Choi, a professor at the University of Washington and recipient of a MacArthur “genius” grant, first addresses some of the unexpected limitations of today’s most capable models. In particular, GPT-4 is very bad at multiplication. It fails to find the product of two three-digit numbers correctly at a surprising rate, though with a little coaxing it can get it right 95% of the time. Why does it matter that a language model can’t do math? Because the entire current AI market is based on the idea that language models generalize well to many interesting tasks, such as doing taxes or accounting. Choi’s point was that we should look for the limits of AI and work inward, not the other way around, since that tells us more about its capabilities.

Other parts of her talk were equally interesting and thought-provoking. You can see the whole thing here.

Introduced as a “hype killer,” Rod Brooks gave an interesting history of some of the core concepts of machine learning, concepts that seem new only because most of the people applying them weren’t around when they were invented. He goes back decades and touches on McCulloch, Minsky, and even Hebb, showing how the ideas have remained relevant through the ages. It’s a reminder that machine learning is a field standing on the shoulders of giants dating back to the postwar era.

A great many papers are submitted to and published at CVPR. Although it is reductive to look only at the award winners, this is a news roundup, not a comprehensive literature review. So here’s what the conference judges found most interesting:

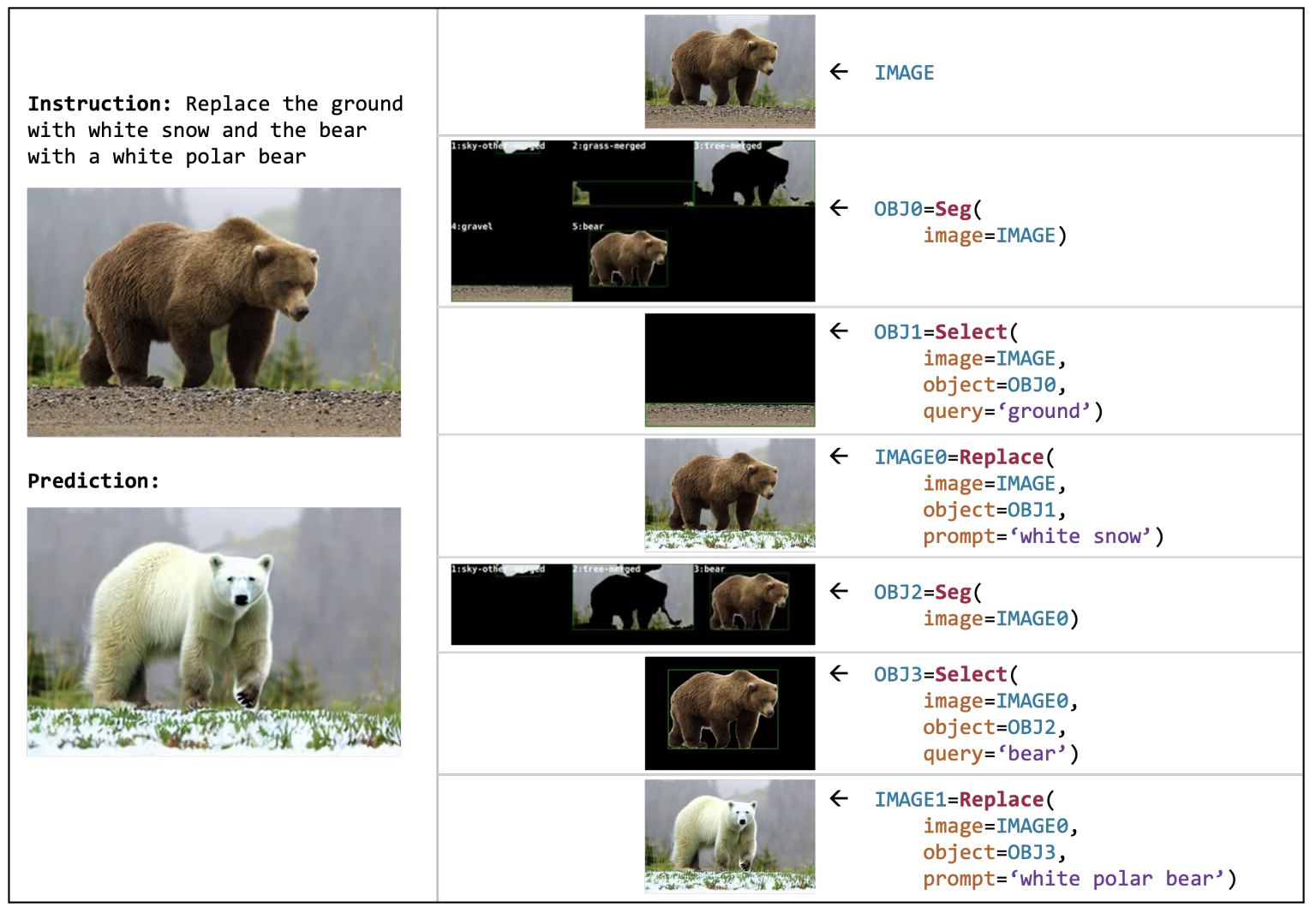

Image credit: AI2

Developed by AI2 researchers, VISPROG is a kind of meta-model that uses a multipurpose code toolbox to perform complex visual manipulation tasks. Suppose you have a picture of a grizzly bear on the grass (as pictured). Just tell it to “replace the bear with a polar bear on the snow” and it gets to work. It identifies the parts of the image, separates them visually, finds or generates an appropriate replacement, and intelligently stitches the whole thing back together, with no further prompting needed from the user. Blade Runner’s “enhance” interface is starting to look downright mundane. And that’s just one of its many capabilities.

“Planning-oriented Autonomous Driving,” from a multi-institutional research group in China, seeks to unify the various pieces of the rather piecemeal approach we have taken to self-driving cars. There is typically a step-by-step process of “perceive, anticipate, plan,” each step of which may involve a number of subtasks (segmenting people, identifying obstacles, etc.). Their model tries to combine all of these into one, somewhat like the multimodal models that can use text, audio or images as inputs and outputs. Likewise, the model simplifies, in a way, the complex interdependencies of a modern autonomous driving stack.

DynIBaR demonstrates a high-quality, robust method of manipulating video using “dynamic neural radiance fields” (NeRFs). A deep understanding of the objects in the video allows for stabilization, dolly movements, and other things that would normally not be possible once the video has been recorded. Once again… “enhance.” This is definitely the kind of thing Apple hires you to do for its next WWDC.

As for DreamBooth, you may remember it from earlier this year, when the project page went live. It is, to put it bluntly, the best system yet for creating deepfakes. Of course, performing this kind of image manipulation is valuable and powerful, not to mention fun, and researchers like those at Google are working to make it more seamless and realistic. The consequences may come later.

The Best Student Paper Award goes to a method for comparing and matching meshes or 3D point clouds. Frankly, it’s too technical to explain, but it’s an important feature for real-world perception, and improvements are welcome. See this paper for examples and details.

Just two more items of note. Intel showed off an interesting model, LDM3D, for generating 3D 360-degree imagery such as virtual environments. So if you are in the metaverse and say “place us in the overgrown ruins of the jungle,” it will just create a new one on demand.

Meta also released a text-to-speech tool called Voicebox that is very good at extracting and replicating the characteristics of a voice, even when the input isn’t clean. Replicating a voice usually requires a good amount and variety of clean voice recordings, but Voicebox does it better than many others with less data (think 2 seconds or so). Fortunately, they are keeping this genie in the bottle for now. For those who think they might need their voice cloned, check out Acapela.