The clinical data problem is not a storage problem. Most organizations already have a data warehouse, CTMS, EDC, and BI layer somewhere downstream. The problem is that none of these systems can communicate with each other in a way that supports the actual decisions that clinical teams need to make, so decisions are instead made in spreadsheets.

Today, we’re releasing Site Feasibility Workbench as a fully open source Databricks app. This is to demonstrate what clinical operational intelligence looks like when applications, models, and data reside on the same platform. The Tufts Center for Drug Development and Research documented that 37% of activated trial sites enrolled fewer patients than targeted, and an additional 11% enrolled no patients at all. This combined effect meant that 53% of trials exceeded their planned enrollment schedule, and 1 in 6 took more than twice as long as planned (Lamberti et al.; subsequent CSDD impact reports continue to track similar levels of underperformance). With unrealized drug sales of up to $500,000 per day and direct clinical trial costs of $40,000 per day, chronic poor facility performance is one of the most significant cost drivers in drug development. This total underperformance rate has remained roughly flat for at least 20 years. Tools don’t matter. The architecture is.

Clinical operations teams don’t need more dashboards to connect to existing systems. Decision support applications must exist where the data and models reside. Doing so actually closes the feedback loop between the prediction and the operational results that validate it.

Architecture discussion

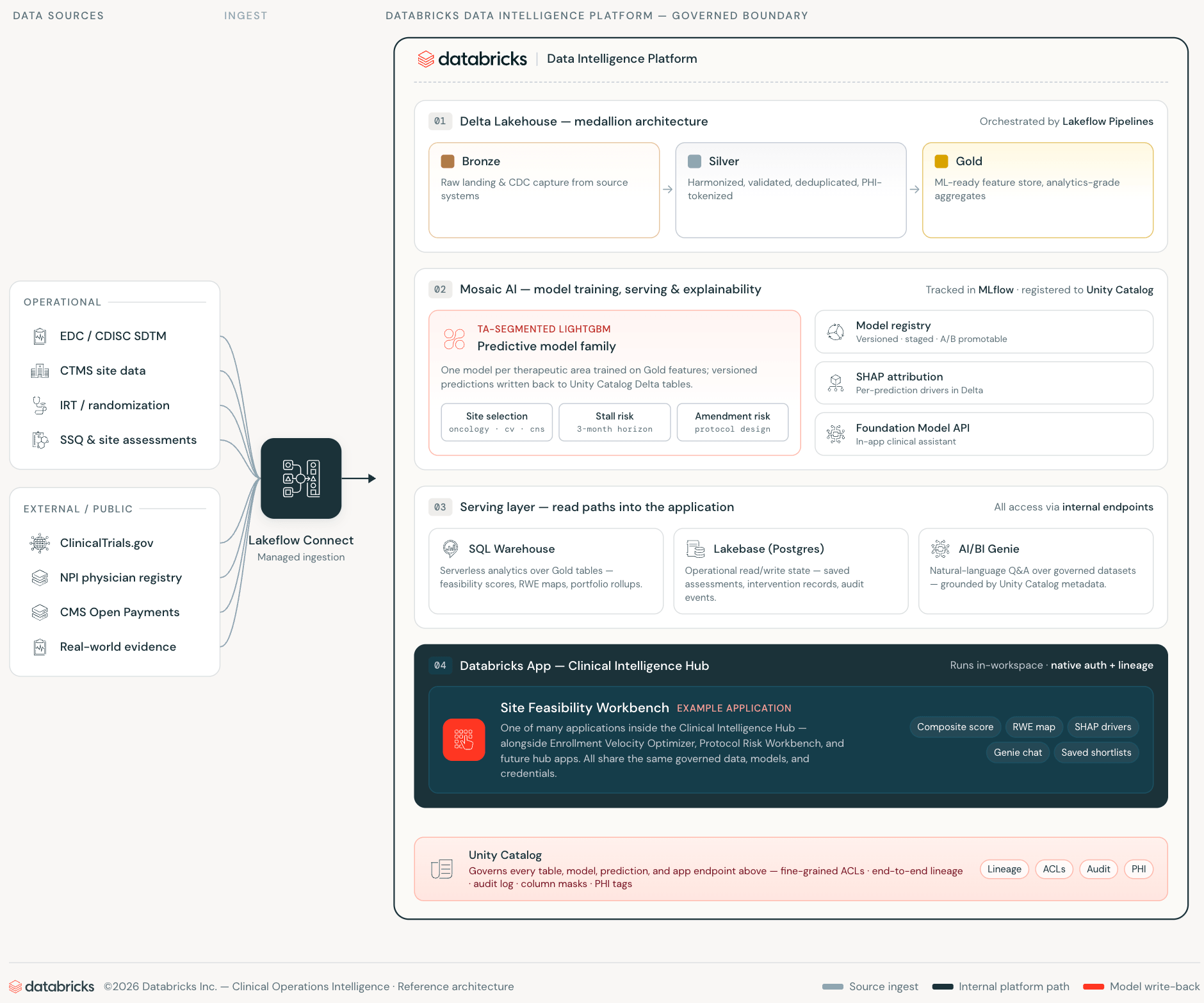

The traditional approach to clinical decision support looks like this: Analytics data is stored in a data warehouse or lakehouse. A separate application database maintains operational state. The pipeline keeps them loosely synchronized. The web application is placed in front of both, adding semantic harmony to the Silver layer. All tiers introduce integration overhead, credential surface area, and synchronization lag that reduces the reliability of the data your application displays.

Databricks Apps, Lakebase, and AI/BI Genie eliminate each of these layers by making them unnecessary rather than abstracting them.

Databricks apps run web applications within your workspace. The app authenticates as a first-class workspace service principal, queries the Unity catalog via the SQL Statements API, and calls the AI/BI Genie via the Workspace REST API. Everything is done on internal connections. Clinical operations data never crosses workspace boundaries. Apps inherit access controls from the Unity Catalog without any additional configuration.

Lakebase is the production database layer, a managed PostgreSQL that scales to zero when fully idle, provisioned, and authenticated within the workspace identity system. While traditional applications require separately managed RDS instances with their own schema drift, sync jobs, and credential rotation, Lakebase resides within the same platform where your data and models reside.

AI/BI Genie closes the final gap: natural language access to managed data embedded directly into application workflows. The survey manager asks questions in plain English against the same Unity catalog tables that the ML model was trained on, with the same access controls.

The result is a clinical production application that makes no external API calls, does not maintain a separate production database infrastructure, and does not require a synchronized pipeline between the analytics and production layers.

Auditability discussion

The industry standard approach to site feasibility relies on commercial scoring products provided by vendors and analytics platforms provided by CROs. These tools are built on aggregated industry data, which can serve as a baseline, but don’t tell you the details of your portfolio. CTMS, EDC, and IRT’s decade-long history of sponsors convey important signals about how their sites perform with their protocols.

When your ML stack resides on Databricks, that organizational knowledge becomes your training data. The workbench’s models are trained on historical enrollment rates, site certification history, screen failure patterns, and protocol execution records, rather than industry averages. When used properly, CMS Open Payments adds a layer of public signals that can be tied to research activities and infrastructure, and is available for free. As the trial portfolio grows, the model improves on the same infrastructure. This is a compounding benefit that is possible with a single platform architecture but not with a licensed scoring product. Every new study improves the prediction, and every new site relationship is factored into the next training run. MLflow tracks all model training runs, parameters, metrics, and artifacts. This enables comparisons between model versions, on-demand reproducibility, and a complete audit trail from raw CTMS and EDC records to deployed predictions.

The regulatory aspect is also important here. 21 CFR Part 11, ICH E6(R3), and FDA’s Good Machine Learning Practice (GMLP) guidance, in addition to FDA’s increasing emphasis on transparency in algorithmic decision support, make model explainability and data governance important considerations rather than optional features. All predictions include SHAP attribution stored as a managed Unity Catalog Delta table, so they are versioned in MLflow, lined through Unity Catalog, and queryable. The rationale behind site selection is auditable, as is the score itself. Clinical operations teams can answer questions from data monitoring boards using SQL queries rather than black-box vendor reports.

what we built

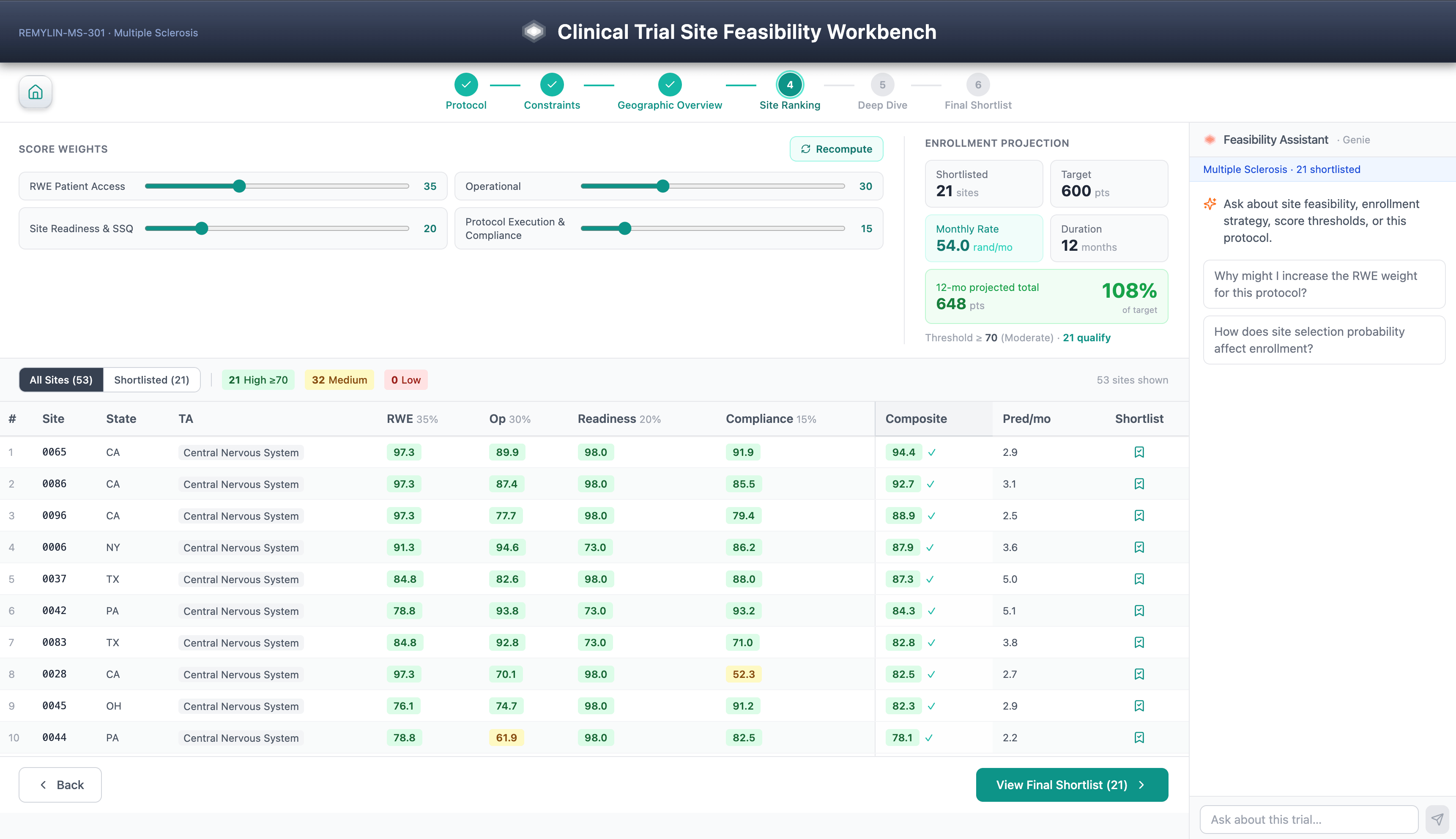

The Site Feasibility Workbench is a six-step guided workflow for selecting clinical trial sites. Protocol selection, scoring constraints, geographic overview, facility rankings, SHAP-led facility investigation, and shortlist. Diversity considerations are a first-class scoring feature that aligns with the expectations of FDA’s Diversity Action Plan under FDORA 2022.

The composite feasibility score combines real-world evidence, patient access data, past facility performance, facility accreditation history, Open Payments KOL signals, and protocol execution factors, all driven by a TA segmented LightGBM model trained on an organization’s proprietary CTMS, EDC, and IRT history.

It’s not the workflow steps or model features that are worth highlighting. Patient-level data inherits access controls from the Unity catalog, and processing of PHI follows the sponsor’s HIPAA safe harbor/professional-determined posture set at the catalog or schema level.

This is possible through architecture. Every prediction includes a SHAP explanation stored as a managed delta table along with the prediction itself, making the model rationale as auditable and versioned as the explained score. All predictions are decomposed into controlled SHAP attribution, allowing sponsors to audit recommendations for systematic underrepresentation of community sites, minority-serving institutions, or first-time investigators, turning explainability into equity controls.

Saved picklists are maintained in Lakebase for team sharing. The AI/BI Genie assistant answers cross-domain questions against the same Unity catalog table in natural language. None of these require any infrastructure outside of your workspace.

This is a decision support layer, not a source of record system. CTMS/EDC/IRT remains authoritative. The workbench generates predictions whose lineage is managed in Unity Catalog and MLflow.

The complete application (FastAPI backend, React frontend, seed notebook, and deployment script) is published as an open source repository. Deploying into an existing Databricks workspace using Unity Catalog requires approximately 30 minutes of technical deployment time, prior to sponsor-specific security review and validation.

One module on a larger platform

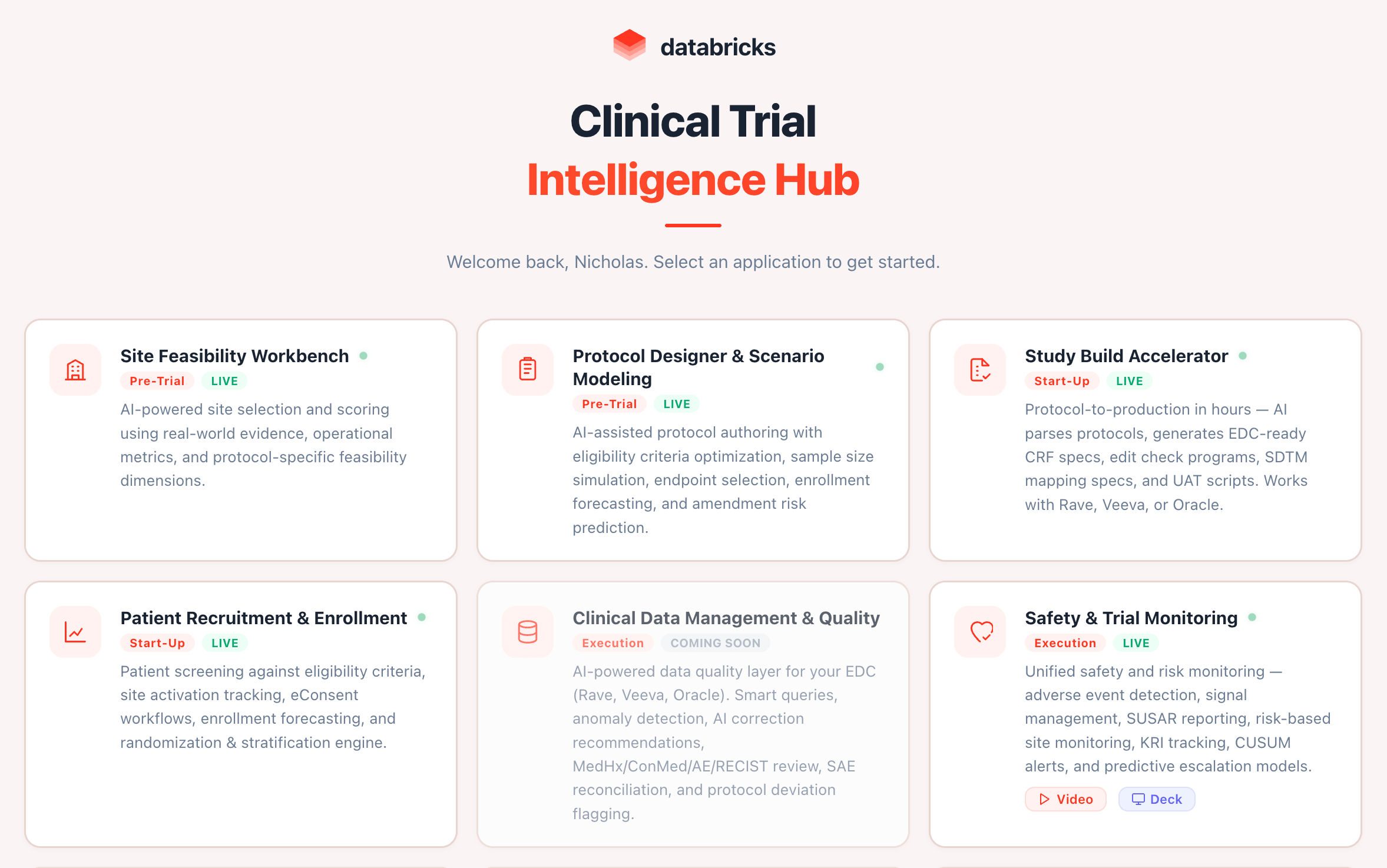

Site Feasibility Workbench is the first public release of Databricks Clinical Operations Intelligence Hub, a broader architecture that spans the entire trial lifecycle.

- Site Feasibility and Selection – Contents of this Repository

- Patient Cohorts and Recruitment — Cohort construction following real-world evidence-based protocols at EHR and Lakehouse scale

- Enrollment Velocity Optimizer — Monthly, site-by-site ML stall prediction 1-3 months into the future

- Risk-based monitoring and compliance — Continuously monitor enrollment anomalies, data lags, and protocol deviations.

All four are deployed as Databricks apps. All four query the Unity Catalog directly. None make external API calls. When clinical applications exist where the data and models reside, the feedback loop closes. The site selection model learns from the registration results. The risk score will be updated as the revision history increases. All AI-driven recommendations leave a lineage trail back to the CTMS, EDC, and IRT records that generated them.

Let’s get started

Clone the public repository. Expand. Please tell me what you changed.

GitHub repository link

To learn more about Clinical Operations Intelligence Hub, watch the BrickTalk recording: Scaling BioPharma Intelligence + Databricks Agentic Clinical Ops.

The production Lakebase and Databricks apps cover the platform’s primitives in depth.

This post is part of the Databricks Clinical Operations Intelligence Hub series. This is a set of open source Databricks apps that cover the entire trial lifecycle. Start with the Site Feasibility Workbench GitHub repository. For a complete overview of the platform, see BrickTalk: Scaling BioPharma Intelligence + Databricks Agentic Clinical Ops. See related platform posts for Lakebase and Databricks apps below.