MIT researchers have spent more than a decade developing techniques that allow robots to “see” through obstacles to find and manipulate hidden objects. Their methods use surface-penetrating radio signals that reflect off hidden items.

The researchers are now using generative artificial intelligence models to overcome a long-standing bottleneck that limited the accuracy of previous approaches. The result is a new way to generate more accurate shape reconstructions, which could improve a robot’s ability to reliably grasp and manipulate objects that are obscured from view.

This new technology builds a partial reconstruction of a hidden object from reflected radio signals and fills in missing parts of its shape using a specially trained generative AI model.

The researchers also introduced an expanded system that uses generative AI to accurately reconstruct an entire room, including all the furniture. The system relies on radio signals transmitted from a single stationary radar, which reflect off humans as they move through the space.

This overcomes one of the key challenges in many existing methods, which is the need to attach wireless sensors to mobile robots to scan the environment. Also, unlike some common camera-based technologies, this method protects the privacy of people in the environment.

These innovations could allow warehouse robots to verify packaged goods before shipping, reducing waste from product returns. They could also allow smart home robots to know where someone is in a room, making human-robot interactions safer and more efficient.

“What we’re doing now is developing generative AI models that help us understand radio reflections. This opens up a lot of interesting new applications, but technically it’s also a qualitative leap in our ability to now be able to fill in gaps that we couldn’t see before, and to be able to interpret reflections and reconstruct entire scenes,” says Fadel Adib, associate professor in the Department of Electrical Engineering and Computer Science, director of the Signal Kinetics Group at the MIT Media Lab, and senior author of two papers on these techniques. “We are using AI to finally unlock wireless vision.”

Adib is joined on the first paper by lead author and research assistant Laura Dodds, as well as research assistants Maisy Lam, Waleed Akbar, and Yibo Cheng; and on the second paper by lead author and former postdoc Kaichen Zhou, Dodds, and research assistant Syed Saad Afzal. Both papers will be presented at the IEEE Conference on Computer Vision and Pattern Recognition.

Overcoming specularity

Adib’s group has previously demonstrated the use of millimeter wave (mmWave) signals to create accurate reconstructions of 3D objects hidden from view, such as a lost wallet buried under a pile.

These radio waves are the same type of signals used by Wi-Fi and can pass through common obstacles like drywall, plastic, and cardboard and bounce off hidden objects.

However, millimeter waves usually reflect specularly. This means that after a wave hits a surface, it is reflected in a single direction. Large areas of the surface therefore reflect signals away from the millimeter-wave sensor, making those areas virtually invisible.
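The geometry of a specular bounce can be illustrated with the standard mirror-reflection formula r = d - 2(d·n)n. The sketch below is only an idealized illustration, not the researchers' code: the function names, the single-sensor setup, and the 10-degree tolerance are assumptions made for the example.

```python
import math

def reflect(d, n):
    """Mirror direction d about unit surface normal n: r = d - 2 (d . n) n."""
    dot = sum(di * ni for di, ni in zip(d, n))
    return tuple(di - 2.0 * dot * ni for di, ni in zip(d, n))

def returns_to_sensor(normal, to_sensor, max_angle_deg=10.0):
    """True if a wave sent from the sensor bounces back toward it.

    normal and to_sensor are unit vectors; max_angle_deg is a
    hypothetical tolerance chosen for this illustration.
    """
    incoming = tuple(-c for c in to_sensor)   # wave travels away from the sensor
    outgoing = reflect(incoming, normal)
    cos_angle = sum(o * s for o, s in zip(outgoing, to_sensor))
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding
    return math.degrees(math.acos(cos_angle)) <= max_angle_deg

# Sensor directly above the object.
to_sensor = (0.0, 0.0, 1.0)
top_face = (0.0, 0.0, 1.0)                               # faces the sensor
tilted_face = (math.sqrt(0.5), 0.0, math.sqrt(0.5))      # tilted by 45 degrees

print(returns_to_sensor(top_face, to_sensor))     # surface the sensor can see
print(returns_to_sensor(tilted_face, to_sensor))  # signal bounces away sideways
```

In this idealized model, only surface patches whose normals point almost directly at the sensor send energy back, which is why tilted or side-facing surfaces go missing from the raw reconstruction.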

“When we want to reconstruct an object, we can only see the top, not the bottom or sides at all,” Dodds explains.

Researchers have previously used principles of physics to interpret the reflected signals, but this limits the accuracy of the reconstructed 3D shape.

The new paper overcomes that limitation by using a generative AI model to fill in the parts that are missing from a partial reconstruction.

“But the challenge is how do you train these models to fill these gaps?” Adib says.

Researchers typically use very large datasets to train generative AI models. This is one of the reasons why models like Claude and Llama perform so well. However, there are no mmWave datasets large enough for training.

Instead, the researchers adapted images from a large computer vision dataset to mimic the properties of millimeter wave reflections.

“We were simulating specularity properties and noise from reflections so that we could apply existing datasets to the domain. It would have taken years to collect enough new data to do this,” Lam says.

The researchers embed the physics of millimeter-wave reflections directly into this adapted data, creating a synthetic dataset they use to teach a generative AI model to perform plausible shape reconstructions.
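One way to picture this kind of data adaptation is to take a complete 3D shape, keep only the surface points that would plausibly reflect back to a sensor, and add noise. The sketch below is a simplified illustration under stated assumptions and is not the researchers' pipeline: `simulate_partial_view`, the angle threshold, and the Gaussian noise model are all hypothetical.

```python
import math
import random

def simulate_partial_view(points, normals, sensor_pos,
                          max_angle_deg=15.0, noise_std=0.0, seed=0):
    """Keep only surface points that would reflect back to the sensor.

    points, normals: lists of 3D tuples (normals are unit length).
    max_angle_deg and noise_std are hypothetical tuning parameters.
    """
    rng = random.Random(seed)
    cos_limit = math.cos(math.radians(max_angle_deg))
    visible = []
    for p, n in zip(points, normals):
        to_sensor = tuple(s - c for s, c in zip(sensor_pos, p))
        length = math.sqrt(sum(c * c for c in to_sensor))
        if length == 0.0:
            continue  # point coincides with the sensor; skip it
        # Cosine of the angle between the normal and the direction to the sensor.
        cos_angle = sum(nc * tc for nc, tc in zip(n, to_sensor)) / length
        if cos_angle >= cos_limit:
            # Add Gaussian jitter to mimic measurement noise.
            visible.append(tuple(c + rng.gauss(0.0, noise_std) for c in p))
    return visible

# Face centers of a small box with outward normals, sensor directly above.
points = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 0.0, -1.0)]
normals = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 0.0, -1.0)]
partial = simulate_partial_view(points, normals, sensor_pos=(0.0, 0.0, 5.0))
print(partial)  # only the top face survives
```

Pairing each full shape with a degraded view like `partial` would give a model examples of what is missing and what the complete object looks like, which is the general idea behind training on synthetic data.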

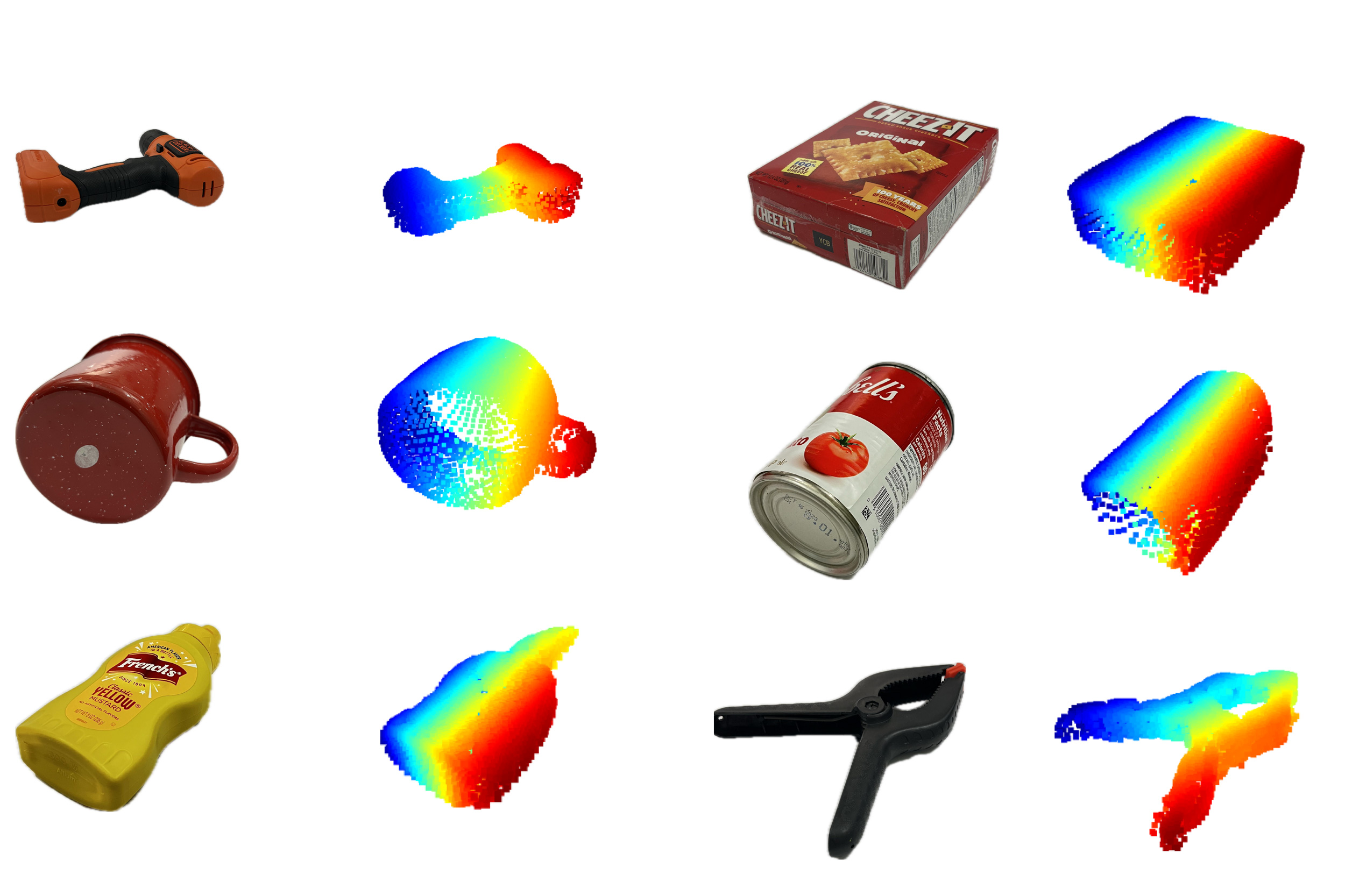

The complete system, called Wave-Former, proposes a set of potential object surfaces based on millimeter wave reflections, then feeds them into a generative AI model that completes the shape and refines the surfaces until it achieves a full reconstruction.

Wave-Former was able to generate high-fidelity reconstructions of approximately 70 everyday objects, including cans, boxes, dishes, and fruit, with accuracy improved by nearly 20% over state-of-the-art baselines. Objects were hidden behind or under cardboard, wood, drywall, plastic, and fabric.

Seeing “ghosts”

Using this same approach, the team built an enhanced system that uses millimeter wave reflections from humans moving within the room to completely reconstruct an entire indoor scene.

A person moving through the room generates multipath reflections. Dodds explains that some millimeter waves reflect off the person, then bounce again off a wall or object before returning to the sensor.

These secondary reflections create so-called “ghost signals,” which are reflected copies of the original signal that change position as the person moves. Ghost signals are usually discarded as noise, but they also hold information about the layout of the room.
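A common simplification for reasoning about such reflections is the image-source model, in which a flat wall acts like a mirror and the ghost appears at the mirror image of the person. The sketch below uses that textbook model in 2D to show why ghosts carry layout information. It is an illustration only, not the method used in RISE, and the wall position and trajectory are made-up values.

```python
def mirror_across_wall(point, wall_point, wall_normal):
    """Mirror a 2D point across a flat wall (image-source model).

    wall_point is any point on the wall; wall_normal is a unit vector.
    """
    dist = sum((p - w) * n for p, w, n in zip(point, wall_point, wall_normal))
    return tuple(p - 2.0 * dist * n for p, n in zip(point, wall_normal))

# Hypothetical wall along the line y = 4 m, with a person walking below it.
wall_point = (0.0, 4.0)
wall_normal = (0.0, 1.0)
trajectory = [(1.0, 1.0), (2.0, 1.5), (3.0, 2.0)]

ghosts = [mirror_across_wall(p, wall_point, wall_normal) for p in trajectory]

# The midpoint between each person position and its ghost lies on the wall,
# so tracking both over time reveals where the wall is.
midpoints = [((p[0] + g[0]) / 2.0, (p[1] + g[1]) / 2.0)
             for p, g in zip(trajectory, ghosts)]

print(ghosts)
print(midpoints)
```

In this toy setup every midpoint has y = 4, which recovers the wall's location from the person and ghost tracks alone. Real measurements are far noisier and involve many surfaces at once.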

“Analyzing how these reflections change over time gives us a rough understanding of our surrounding environment, but trying to interpret these signals directly limits our accuracy and resolution,” Dodds says.

They used a similar training method to teach a generative AI model to interpret these coarse scene reconstructions and understand the behavior of multipath mmWave reflections. This model fills gaps and adjusts the initial reconstruction until the scene is complete.

They tested the scene reconstruction system, called RISE, using more than 100 human trajectories captured by a single millimeter-wave radar. On average, RISE produced reconstructions that were approximately twice as accurate as those from existing techniques.

In the future, the researchers hope to improve the granularity and detail of the reconstructions. They also hope to build large-scale foundation models for wireless signals, similar to the GPT, Claude, and Gemini foundation models for language and vision, which could open up new applications.

This research was supported in part by the National Science Foundation (NSF), the MIT Media Lab, and Amazon.