AI improves some core apps with summarization and transcription features

Apple's next-generation operating system will feature Project Greymatter and bring a variety of AI-related enhancements, and there are new details about upcoming AI features for Siri, Notes, and Messages.

Following widespread claims and reports of AI-related enhancements in iOS 18, AppleInsider has obtained more information about Apple's plans in the AI space.

The company is internally testing a range of new AI features ahead of its annual WWDC event, according to people familiar with the matter. Known as Project Greymatter, the company's AI improvements will be focused on practical benefits for end users.

In pre-release versions of its operating system, Apple is testing a notification summary feature called “Greymatter Catch Up,” which will work with Siri to let users request and receive a summary of their recent notifications through the virtual assistant.

Siri will be getting a major update to its response generation capabilities through a new Smart Response framework and Apple's on-device LLM. When generating replies and summaries, Siri will be able to take into account entities such as people, companies, calendar events, places, and dates.

In previous reports on Safari 18, Ajax LLM, and the updated Voice Memos app, AppleInsider revealed that Apple plans to bring AI-powered text summarization and transcription to its built-in apps. We now know that the company also plans to bring these features to Siri.

This means that Siri will be able to answer queries on your device, create summaries of longer articles, and transcribe audio much like the updated Notes and Voice Memos apps, all using Ajax LLM on-device or cloud-based processing for more complex tasks.

Apple is also reportedly testing enhanced, “more natural” voices and improved text-to-speech capabilities that should ultimately result in a much better user experience.

In addition, Apple is working on cross-device media and TV control for Siri, which could, for example, let you use Siri on your Apple Watch to play music on another device. This feature isn't expected to arrive until late 2024.

The company decided to incorporate artificial intelligence into some of its core system applications with different use cases and tasks in mind, and one notable improvement concerns photo editing.

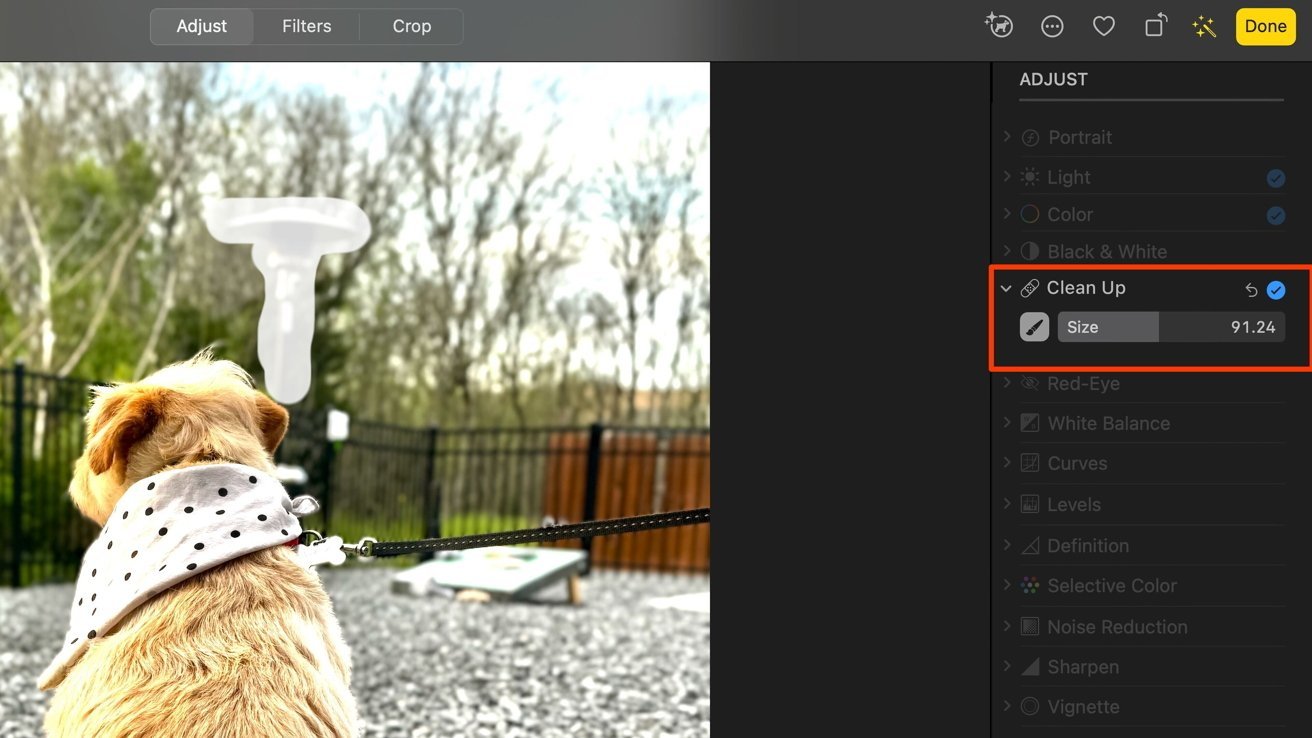

Apple has developed generative AI software to improve image editing

iOS 18 and macOS 15 are set to introduce AI-powered photo editing options to apps like Photos, and Apple has developed a new Clean Up feature in-house that uses generative AI software to let users remove objects from images.

The Clean Up tool is meant to replace Apple's current Retouch tool.

Also in connection with Project Greymatter, the company has created an internal application known as “Generative Playground.” A person familiar with the application exclusively revealed to AppleInsider that it lets users create and edit images with Apple's generative AI software, and that it offers iMessage integration in the form of a dedicated app extension.

In Apple's testing environment, it's possible to use artificial intelligence to generate images and send them via iMessage, and there are signs that the company is planning a similar feature for end users of its operating system.

This information lines up with another report claiming that users will be able to generate their own emojis using AI, though the image generation feature could be a separate, additional capability.

A pre-release version of Apple's Notes application also includes references to a generative tool, according to people familiar with the matter, but it's unclear whether the tool will generate text or images, as the Generative Playground app does.

Notes will get AI transcription and summarization features, along with Math Notes

Apple is preparing major enhancements to its built-in Notes application that are set to debut in iOS 18 and macOS 15. The updated Notes app will support in-app audio recording, audio transcription, and LLM-powered summarization.

iOS 18's Notes app will support in-app audio recording, transcription, and summarization

The audio recording, transcription, and text-based summary are all available within a single note, along with any other material the user chooses to add. This means, for example, that a single note can contain a recording of an entire lecture or meeting, complete with whiteboard photos and text.

These features truly power up Notes and make it the go-to app for students and business professionals. The addition of speech-to-text and summarization capabilities gives Apple's Notes application an edge over competitors like Microsoft's OneNote and Otter.

In-app support for audio recording, together with AI-powered audio transcription and summarization, will greatly improve the Notes app, but that's not all Apple is working on.

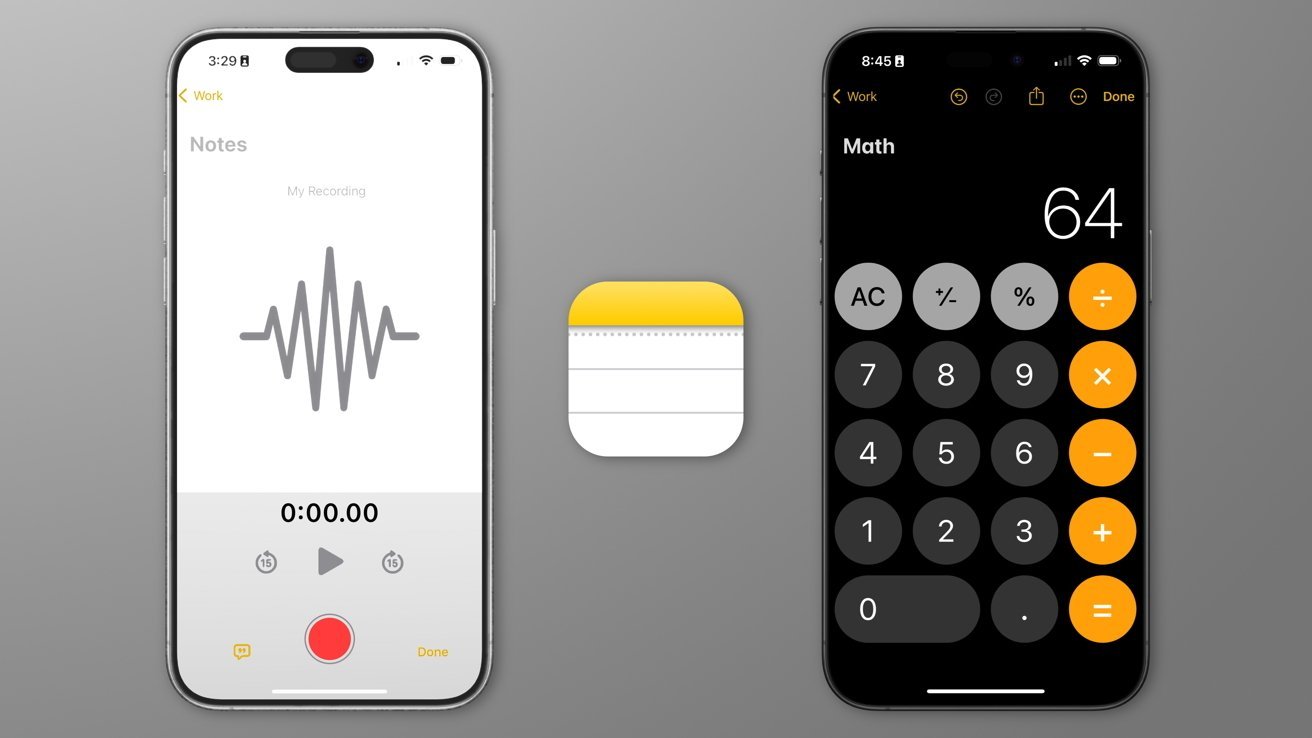

Math Notes – Use AI to create graphs and solve equations

The Notes app is getting a brand new feature called “Math Notes,” which opens up support for proper math notation and allows integration with Apple's new GreyParrot Calculator app. More details about the features of “Math Notes” have now emerged.

iOS 18's Notes app introduces AI-assisted speech transcription and math note support

A person familiar with the new features said that Math Notes will be able to recognize the text of equations and suggest solutions to them. Support for graphing equations is also in the works, potentially bringing something similar to the Grapher app for macOS to Notes.

Apple is also working on enhancements to math-related input in the form of a feature called “keyboard math prediction.” AppleInsider has learned that this feature completes math expressions whenever they are recognized as part of text input.

This means that users will have the option to auto-complete math equations within Notes, similar to how Apple currently offers predictive text and inline completion in iOS, a feature that will also be coming to visionOS later this year.

Apple's visionOS will also see better integration with Apple's Transformer LM, a predictive text model that offers suggestions as you type. The operating system is also set to receive a redesigned voice command UI, a sign of how much Apple cares about input-related enhancements.

The company is also looking to improve user input with a feature it's calling “Smart Replies,” available across Messages, Mail and Siri, which will allow users to reply to messages and emails with basic text-based responses that are generated on the fly by Ajax LLM on Apple's devices.

Apple's AI versus Google Gemini and other third-party products

AI is permeating virtually every application and device, and AI-focused products like OpenAI's ChatGPT and Google Gemini have seen a huge increase in popularity.

Google Gemini is a popular AI tool

Apple is developing its own AI software to compete with rivals, but AppleInsider has learned that its AI isn't as capable as Google Gemini Advanced.

At Google I/O, its annual developer conference, on May 14, Google showed off an interesting use case for artificial intelligence: users can ask questions in the form of videos and receive AI-generated answers and suggestions.

During the event, Google's AI was shown a video of a malfunctioning record player and asked why it wasn't working. The software identified the record player's model and suggested that it might not be working because it wasn't properly balanced.

The company also announced “Google Veo,” software that can generate videos using artificial intelligence. OpenAI also has its own video generation model called Sora.

Apple's Project Greymatter and Ajax LLM cannot generate or process video, which means the company's software cannot answer complex, video-based questions about consumer products. This is likely why Apple has sought licensing agreements with companies like Google and OpenAI to provide more functionality to its users.

Apple will compete with products like the Rabbit R1 by offering AI software vertically integrated into its existing hardware.

Compared to physical AI-themed products like the Humane AI Pin and Rabbit R1, Apple's AI project has the major advantage of running on devices that users already own, meaning users don't need to buy a special AI device to enjoy the benefits of artificial intelligence.

Humane's AI Pin and Rabbit R1 were also often thought of as unfinished or only partially functional products, with the latter revealed to be little more than a custom Android application.

Apple's AI-related projects are set to debut at the company's annual WWDC on June 10 as part of iOS 18 and macOS 15. Updates to the Calendar, Freeform, and System Settings apps are also on the way.