New Delhi:

The AI video shows an ecstatic Narendra Modi, wearing a trendy jacket and trousers, grooving on stage to a Bollywood song as the crowd cheers. The Indian Prime Minister reshared the video on X and said, “Such creativity at the peak of voting season is truly gratifying.”

Another video with the same on-stage setting shows Mr Modi's rival Mamata Banerjee dancing in a sari-like outfit, but the background audio is made up of parts of her speech criticizing those who left her party to join Mr Modi's. State police have launched an investigation into the video, saying it “may have an impact on law and order.”

The differing reactions to the two videos show how the use and abuse of artificial intelligence (AI) tools is on the rise as the world's most populous country conducts a huge general election, and highlight the concerns facing regulators and public safety authorities.

Easily created AI videos with near-perfect shadows and hand movements can mislead even the digitally savvy. But the stakes are higher in a country where many of its 1.4 billion people are technologically challenged and where manipulated content can easily stoke sectarian tensions, especially during election times.

A World Economic Forum study released in January found that the risk to India from misinformation over the next two years is likely to be higher than the risk from infectious diseases or illicit economic activity.

“India is already at great risk of disinformation. With the advent of AI, disinformation can spread 100 times faster,” said Sagar Vishnoi, a New Delhi-based consultant who is advising some political parties on the use of AI in India's elections.

“Elderly people who are not tech-savvy are increasingly being fooled by fake narratives powered by AI videos.”

The 2024 national election, which is being held over six weeks and ends on June 1, is the first in which AI is being deployed. The first examples were innocuous, limited to a few politicians using the technology to create video and audio to personalize their campaigns.

However, serious cases of misuse made headlines in April, including deepfakes of Bollywood actors criticizing PM Modi and fake clips involving two of his close aides that led to the arrest of nine people.

Difficult to counter

The Election Commission of India last week warned political parties against using AI to spread misinformation and cited seven provisions of information technology and other laws under which offences including forgery, spreading rumours and inciting enmity are punishable by up to three years in prison.

A senior national security official in New Delhi said authorities were concerned that fake news could cause unrest. The easy availability of AI tools makes it possible to fabricate such fake news, especially during election periods, and it is difficult to counter, the official said.

“We don't have (proper monitoring) capabilities. It's difficult to keep track of the ever-evolving AI environment,” the official said.

“We are not able to fully monitor social media, let alone control content,” said a senior election official.

They declined to be named because they were not authorized to speak to the media.

AI and deepfakes are also increasingly being used in elections in other parts of the world, such as the U.S., Pakistan and Indonesia. The recent viral videos in India show the challenges facing authorities.

For years, a panel under India's IT ministry has been in place that, either at its own discretion or in response to complaints, orders the blocking of content it deems likely to harm public order. During this election period, election authorities and police forces across the country have deployed hundreds of officials to detect problematic content and request its removal.

While Prime Minister Modi's response to the AI dance video (“It was fun to watch myself dance”) was light-hearted, police in Kolkata, in West Bengal state, have launched an investigation against X user SoldierSaffron7 for sharing the Banerjee video.

Dulal Saha Roy, a cybercrime officer in Kolkata, shared a typed notice on X asking the user to delete the video or “be liable for severe penalties”.

“No matter what happens, I won't delete it,” the user told Reuters in a direct message on X, declining to give his phone number or real name for fear of police action. “They can't track (me).”

Election officials told Reuters that authorities can only tell social media platforms to remove content, and are left scrambling if a platform says the post does not violate its internal policies.

Viggle videos

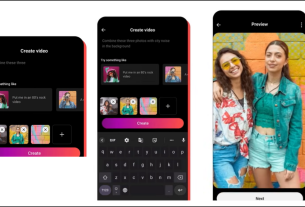

The Modi and Banerjee dance videos, which have been viewed 30 million and 1.1 million times respectively on X, were created using the free website Viggle. The site uses a photograph and a few basic prompts, detailed in a tutorial, to generate within minutes a video of the person in the photograph dancing or making other real-life movements.

Viggle co-founder Hang Chu and Banerjee's office did not respond to inquiries from Reuters.

Apart from the two AI dance videos, another 25-second Viggle video circulating online shows Banerjee appearing in front of a burning hospital and using a remote control to blow it up. This is an AI-modified clip from the 2008 film The Dark Knight in which Batman's enemy, the Joker, wreaks havoc.

The video post has been viewed 420,000 times.

West Bengal police say the video violates India's IT laws, but X has taken no action because it strongly believes in “protecting and respecting user voices,” according to an email notice that X sent to the user and that was seen by Reuters.

“They can't do anything to me. I didn't take that (notice) seriously,” the user told Reuters in a direct message on X.