Section “Complex Processes and \(\epsilon\)-Machines” describes \(\epsilon\)-machines, and Section “Recurrent Neural Networks” lays out the setup of typical recurrent neural networks (RNNs) and reservoir computers (RCs).

Complex Processes and \(\epsilon\)-Machines

Each stationary stochastic process is uniquely represented by its \(\epsilon\)-machine. This one-to-one association is particularly noteworthy because it gives an explicit structure to the space of all such processes. One can explore the space of stationary processes or, equivalently, the space of all \(\epsilon\)-machines. This is all the more practical because \(\epsilon\)-machines can be enumerated efficiently [13].

In information theory, \(\epsilon\)-machines are viewed as process generators and described as minimal unifilar hidden Markov chains (HMCs). In computation theory, they are viewed as process recognizers and described as minimal probabilistic deterministic automata (PDAs) [14,15]. Briefly, an \(\epsilon\)-machine has hidden states \(\sigma \in \mathcal {S}\), called causal states, and generates a process by emitting symbols \(x\in \mathcal {A}\) over a sequence of state-to-state transitions. For the purposes of the neural network comparisons that follow, we investigate binary-valued processes, so that \(\mathcal {A}=\{0,1\}\). \(\epsilon\)-Machines are unifilar or “probabilistic deterministic” models because each transition probability \(p(\sigma '|x,\sigma )\) from state \(\sigma\) to state \(\sigma '\) given emitted symbol \(x\) is singly supported. More simply, there is at most one destination state. In computation theory, this is a deterministic transition in the sense that the model reads a symbol that uniquely determines the successor state. That said, these models are probabilistic as process generators: from state \(\sigma\), any of several symbols \(x\) can be emitted, each with emission probability \(p(x|\sigma )\). These models thus represent a probabilistic language: a set of output strings, each occurring with some probability.
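As a rough illustration of the generator view, the sketch below encodes a small unifilar HMC as a dictionary and emits symbols by walking its transitions. The two-state machine (often called the Even Process), the names `MACHINE` and `generate`, and the dictionary layout are all assumptions made for this example and are not taken from the text.

```python
import random

# Hypothetical two-state example (the "Even Process"), chosen for illustration.
# MACHINE[state][symbol] = (emission probability p(x|state), next state).
# Unifilarity: each (state, symbol) pair leads to at most one next state.
MACHINE = {
    "A": {0: (0.5, "A"), 1: (0.5, "B")},
    "B": {1: (1.0, "A")},
}

def generate(machine, start, length, rng):
    """Emit `length` symbols by walking state-to-state transitions."""
    state, symbols = start, []
    for _ in range(length):
        options = list(machine[state].items())
        weights = [prob for _, (prob, _) in options]
        # Draw a symbol with probability p(x|state); the symbol fixes the next state.
        symbol, (_, state) = rng.choices(options, weights=weights)[0]
        symbols.append(symbol)
    return symbols

sample = generate(MACHINE, "A", 20, random.Random(0))
```

Because the emitted symbol alone selects the next state, the same dictionary can be read as a recognizer: feeding it an observed string traces out a unique state path whenever the starting state is known.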

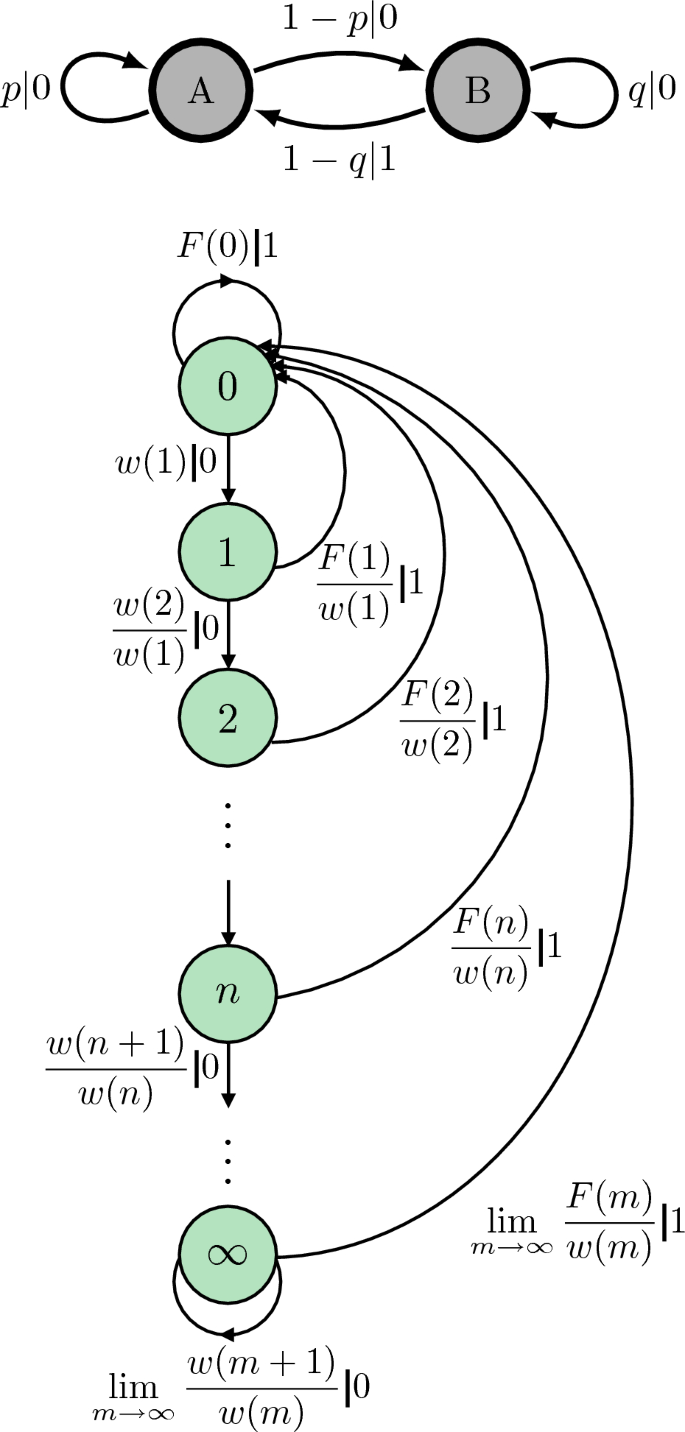

Every stationary process has an \(\epsilon\)-machine presentation, though usually not a finite one. An example is shown in Figure 1 [16]. The finite HMC at the top is nonunifilar because, starting in state A, emitting a 0 does not uniquely determine the state to which one transitions: either A or B. The HMC at the bottom is unifilar because, in every state, knowing the emitted symbol uniquely determines the next state. Note that the \(\epsilon\)-machine for the process generated by the finite nonunifilar HMC has an infinite number of causal states. Note also that this process has infinite Markov order: after observing a past of all 0s, one is not “synchronized” to the \(\epsilon\)-machine's internal hidden state [17], meaning that one does not know which hidden state the \(\epsilon\)-machine is in. There is therefore no complete one-to-one correspondence between sequences of observed symbols and chains of hidden states. In contrast, at each time step the \(\epsilon\)-machine presentation inches toward a one-to-one correspondence between observed symbols and hidden states. In fact, it comes as close as possible.

Figure 1. At the top, a nonunifilar hidden Markov model is shown. It is not an \(\epsilon\)-machine because, when in state A, knowing that a 0 was emitted does not uniquely determine the state to which it transitioned. At the bottom is the corresponding \(\epsilon\)-machine, for which, in every state, knowing the emitted symbol uniquely determines the next state. For this \(\epsilon\)-machine, we have \(F(n)= \left\{ \begin{array}{ll} (1-p)(1-q)(p^n-q^n)/(p-q) & p\ne q ~, \\ (1-p)^2 np^{n-1} & p=q ~, \end{array}\right.\) and \(w(n)=\sum _{m=n}^{\infty } F(m)\) [16]. Note that both hidden Markov models generate the same process, which is infinite-order Markov: after observing a past of all 0s, one is not “synchronized” to the \(\epsilon\)-machine's internal hidden state. Therefore, there is no complete one-to-one correspondence between sequences of observed symbols and hidden states.
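A quick numerical check of the caption's expressions for \(F(n)\) and \(w(n)\) can be sketched as follows. The function names, the truncation length `terms`, and the choice to start the index at \(n = 1\) are assumptions of this sketch; the infinite sum is only approximated.

```python
def F(n, p, q):
    """F(n) from the figure caption, evaluated for n >= 1."""
    if p == q:
        return (1 - p) ** 2 * n * p ** (n - 1)
    return (1 - p) * (1 - q) * (p ** n - q ** n) / (p - q)

def w(n, p, q, terms=2000):
    """w(n) = sum over m >= n of F(m), truncated after `terms` terms.

    The truncation is an approximation; it is accurate when p and q are
    not too close to 1, since F(m) decays geometrically.
    """
    return sum(F(m, p, q) for m in range(n, n + terms))
```

With these definitions, `w(1, p, q)` evaluates to 1 up to rounding for parameters strictly between 0 and 1, and the \(p \ne q\) branch approaches the \(p = q\) branch as \(q \rightarrow p\), which is a useful consistency check on the two cases.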

In one sense, the nonunifilar HMC is just a process generator [18], one whose equivalent \(\epsilon\)-machine presentation has an infinite number of causal states. In another sense, the \(\epsilon\)-machine is a very special type of HMC generator: its causal states represent clusters of pasts that have the same conditional probability distribution over futures [14]. As a result, causal states, and hence \(\epsilon\)-machines, are predictive.

Consider observing a process generated by a particular \(\epsilon\)-machine such that the hidden state is known. (This happens with probability 1, but not always [17], as described in the nonunifilar HMC example.) Then we can build a prediction algorithm based on the known hidden state. In fact, the result is the best possible prediction algorithm one can build. Moreover, it is simple: when synchronized to hidden state \(\sigma\), predict the symbol \(\arg \max _x p(x|\sigma )\).
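The prediction rule just described can be sketched in a few lines, assuming the predictor is already synchronized (the starting state is known). The example machine, the names `predict` and `count_errors`, and the tie-breaking convention are assumptions introduced here for illustration.

```python
# Hypothetical example machine (the "Even Process"); names are illustrative.
# MACHINE[state][symbol] = (p(symbol | state), next state).
MACHINE = {
    "A": {0: (0.5, "A"), 1: (0.5, "B")},
    "B": {1: (1.0, "A")},
}

def predict(machine, state):
    """Return arg max_x p(x | state); ties go to the first symbol listed."""
    return max(machine[state], key=lambda x: machine[state][x][0])

def count_errors(machine, state, observed):
    """Predict each symbol from the current state, then follow the
    unifilar transition selected by the symbol actually observed."""
    errors = 0
    for x in observed:
        errors += predict(machine, state) != x
        state = machine[state][x][1]
    return errors, state

errors, final_state = count_errors(MACHINE, "A", [0, 1, 1, 0, 1, 1, 1, 1, 0])
```

Unifilarity is what keeps the predictor synchronized: once the state is known, each observed symbol determines the next state, so no belief distribution over hidden states has to be maintained.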

This has one key consequence for calibrating neural networks: the minimal attainable time-averaged probability \(P_\text {e}^\text {min}\) of error in predicting the next symbol can be calculated explicitly as:

$$\begin{aligned} P_\text {e}^\text {min}= \sum _{\sigma } \left[ 1 - \max _x p(x|\sigma ) \right] p(\sigma ) ~. \end{aligned}$$

(1)

(Below we consider a binary alphabet, for which \(1 - \max _x p(x|\sigma ) = \min _x p(x|\sigma )\).) One can also calculate the Shannon entropy rate \(h_\mu\) directly from the process's \(\epsilon\)-machine [14] via:

$$\begin{aligned} h_\mu&= H[X_0|\overleftarrow{X}_0] \\&= - \sum _{\sigma } p(\sigma ) \sum _x p(x|\sigma ) \log p(x|\sigma )~. \end{aligned}$$

(2)
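To make Eqs. (1) and (2) concrete, the sketch below evaluates both for a small example machine. The machine itself (the two-state Even Process), the function names, the use of repeated propagation to approximate \(p(\sigma)\), and the choice of base-2 logarithms are assumptions of this example, not details from the text.

```python
from math import log2

# Hypothetical example machine; MACHINE[state][symbol] = (p(x|state), next state).
MACHINE = {
    "A": {0: (0.5, "A"), 1: (0.5, "B")},
    "B": {1: (1.0, "A")},
}

def stationary(machine, sweeps=200):
    """Approximate p(sigma) by repeatedly pushing a distribution through the
    state-to-state transitions (assumes an ergodic, aperiodic chain)."""
    dist = {s: 1.0 / len(machine) for s in machine}
    for _ in range(sweeps):
        nxt = dict.fromkeys(machine, 0.0)
        for s, edges in machine.items():
            for prob, target in edges.values():
                nxt[target] += dist[s] * prob
        dist = nxt
    return dist

def min_error(machine, pi):
    """Eq. (1): sum over states of [1 - max_x p(x|sigma)] p(sigma)."""
    return sum((1 - max(p for p, _ in edges.values())) * pi[s]
               for s, edges in machine.items())

def entropy_rate(machine, pi):
    """Eq. (2) in bits: -sum_sigma p(sigma) sum_x p(x|sigma) log2 p(x|sigma)."""
    return -sum(pi[s] * p * log2(p)
                for s, edges in machine.items()
                for p, _ in edges.values() if p > 0)

pi = stationary(MACHINE)
p_e_min = min_error(MACHINE, pi)
h_mu = entropy_rate(MACHINE, pi)
```

For this example the stationary distribution is \((2/3, 1/3)\), so \(P_\text{e}^\text{min} = 1/3\) and \(h_\mu = 2/3\) bit per symbol: state B is perfectly predictable, and all of the uncertainty comes from the fair coin flip in state A.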

In contrast, until recently, determining \(h_\mu\) for processes generated by nonunifilar HMCs was intractable. An important advance was the recent solution of Blackwell's integral equation for these processes [19,20].

Recurrent Neural Networks

Let \(s_t\in \mathbb {R}^d\) be the state of the learning system (perhaps a sensor), and let \(x_t\in \mathbb {R}^N\) be a time-varying \(N\)-dimensional input, both at time \(t\). Discrete-time recurrent neural networks (RNNs) are input-driven dynamical systems of the form:

$$\begin{aligned} s_{t+1} = f_{\theta }(s_t,x_t) \end{aligned}$$

(3)

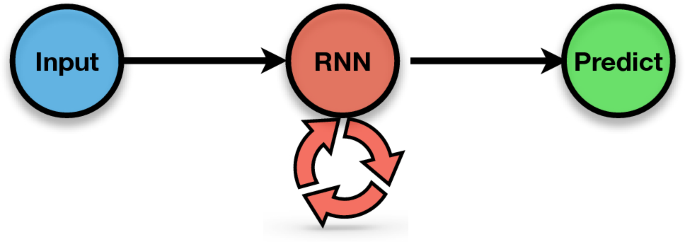

where \(f_{\theta }(\cdot ,\cdot )\) is a function of both the sensor state \(s_t\) and the input \(x_t\), with parameters \(\theta\). See Figure 2. These parameters are weights that govern how \(s_t\) and \(x_t\) affect future sensor states \(s_{t+1}, s_{t+2}, \ldots\). Alternative RNN architectures result from different choices of \(f\); see below. Long Short-Term Memory units (LSTMs) and Gated Recurrent Units (GRUs) are often used to optimize for prediction of the input. For simplicity, we consider scalar time series in what follows: \(N = 1\).

In general, RNNs are difficult to train, in terms of both the required data sample size and the computational resources (memory and time) [21]. RCs [2,22], also known as echo state networks [23] and liquid state machines [24,25], were introduced to address these challenges.

Figure 2. A recurrent neural network (RNN) in which the future state of the recurrent node depends on its previous state and the current input. The current state of the recurrent node is then used to make a prediction.

RCs involve two components. The first is a reservoir: an input-dependent dynamical system of high dimensionality \(d\), as in Eq. (3). The second is a readout layer \(\hat{u}\): a simple function of the hidden reservoir state. Here, the readout layer typically employs logistic regression:

$$\begin{aligned} P(\hat{u}|s) = \frac{e^{a_{\hat{u}}^{\top }s+b_{\hat{u}}}}{ \sum _{\hat{u}'}e^{a_{\hat{u}'}^{\top }s+b_{\hat{u}'}}}~, \end{aligned}$$

with regression parameters \(a_{\hat{u}}\) and \(b_{\hat{u}}\). To model the binary-valued processes that we focus on here, we have:

$$\begin{aligned} P(\hat{u}=1|s) = \frac{e^{a^{\top }s+b}}{1+e^{a^{\top }s +b}}~. \end{aligned}$$

The regression parameters are easily trained, and regularization can be included if desired. Note that \(s\) is used above as the input to the logistic regression probability; one can also move to a nonlinear readout by using both \(s\) and \(ss^{\top }\) to inform the logistic regression probability.
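The two readout formulas and the nonlinear feature map can be sketched as below. The function names, the list-of-lists parameter layout, and the decision to flatten \(ss^{\top}\) row by row are assumptions of this example; training of the parameters is not shown.

```python
from math import exp

def softmax_readout(s, a, b):
    """P(u | s) for each class u, with weight vectors a[u] and biases b[u]."""
    logits = [sum(w * x for w, x in zip(a_u, s)) + b_u for a_u, b_u in zip(a, b)]
    top = max(logits)  # subtracting the max leaves the ratio unchanged
    exps = [exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def binary_readout(s, a, b):
    """P(u = 1 | s) = e^{a.s + b} / (1 + e^{a.s + b})."""
    z = sum(w * x for w, x in zip(a, s)) + b
    return 1.0 / (1.0 + exp(-z))

def quadratic_features(s):
    """Concatenate s with the flattened entries of the outer product s s^T."""
    return list(s) + [si * sj for si in s for sj in s]
```

The binary formula is the two-class case of the general one with the class-0 parameters fixed to zero. The nonlinear readout then amounts to calling `binary_readout(quadratic_features(s), a, b)` with a correspondingly longer weight vector `a`.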

Below we compare several types of RNNs: “typical” RC, “next generation” RC, and LSTM.

“Typical” RC

For the typical RCs in what follows, a subset of RC nodes is updated linearly, while the other nodes are updated according to a \(\tanh (\cdot )\) activation function. Let \(s = \begin{pmatrix} s^{\text {nl}} \\ s^{\text {l}} \end{pmatrix}\). We have:

$$\begin{aligned} s^{\text {nl}}_{t+1} = \tanh \bigl ( W^{\text {nl}} s^{\text {nl}}_t+v^{\text {nl}} x_t + b^{\text {nl}} \bigr )~, \end{aligned}$$

and

$$\begin{aligned} s^{\text {l}}_{t+1} = W^{\text {l}} s^{\text {l}}_t + v^{\text {l}} x_t + b^{\text {l}}~, \end{aligned}$$

where \(v^{\text {l,nl}}\) govern how strongly the input affects the state, \(b^{\text {l,nl}}\) are bias terms, and \(W^{\text {l,nl}}\) are the weight matrices.
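One time step of the two update equations can be sketched as follows for scalar input. The helper names `matvec` and `rc_step` and the plain-list representation of vectors and matrices are assumptions of this example; constructing the weight matrices is not shown.

```python
from math import tanh

def matvec(W, s):
    """Multiply matrix W (a list of rows) by vector s."""
    return [sum(w * x for w, x in zip(row, s)) for row in W]

def rc_step(s_nl, s_l, x, W_nl, v_nl, b_nl, W_l, v_l, b_l):
    """Advance the nonlinear and linear reservoir nodes by one step.

    x is the scalar input x_t; v_* and b_* are per-node input weights and biases.
    """
    new_nl = [tanh(h + v * x + b)
              for h, v, b in zip(matvec(W_nl, s_nl), v_nl, b_nl)]
    new_l = [h + v * x + b
             for h, v, b in zip(matvec(W_l, s_l), v_l, b_l)]
    return new_nl, new_l

# Tiny illustrative reservoir: two tanh nodes and one linear node.
s_nl, s_l = rc_step(
    [0.0, 0.0], [1.0], 1.0,
    [[0.5, 0.0], [0.0, 0.5]], [1.0, -1.0], [0.0, 0.0],
    [[0.9]], [0.5], [0.0],
)
```

Iterating `rc_step` over an input sequence and concatenating `s_nl` and `s_l` at each step gives the reservoir state \(s_t\) that the readout layer consumes.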

The weight matrices \(W^{\text {l,nl}}\) have zero entries placed according to a small-world network with density 0.1 and \(\beta =0.1\); their nonzero entries are drawn from the standard normal distribution; and they have spectral radius \(\sim 0.99\) to guarantee the RC fading-memory condition [23]. Various recipes were tried for choosing which nodes were connected (small-world networks with different \(\beta\) and density), which distribution the weights were drawn from (normal versus uniform), and whether there was a bias term. These variations had virtually no effect on the results. The only thing that clearly made a difference was whether the readout was linear (logistic regression on \(s\)) or nonlinear (logistic regression on the concatenation of \(s\) and \(ss^{\top }\)). These two cases are clearly differentiated in the appropriate figures. There was no bias term in the figures shown.

“Next generation” RC

Next-generation RCs employ a simple reservoir that tracks some amount of input history together with a more complex readout layer [5], in order to improve accuracy over that gained from an RC's universal approximation property. The reservoir records the inputs from the last \(m\) time steps, and the readout layer consists of polynomials of arbitrary order. Technically, a next-generation RC is a special case of a general RC, in that the reservoir can be made into a shift register that records the last \(m\) time steps. As introduced in Ref. 5, next-generation RCs solve regression tasks, but they are easily modified to solve classification tasks. In what follows, we simply take quadratic polynomial combinations of the last \(m\) time steps and use them as features in a logistic regression layer. In other words, the column vector \(s_t = (x_t,x_{t-1},\ldots ,x_{t-m+1})\) is the reservoir state, and we then use \(s_t\) and \(s_t s_t^{\top }\) as input to the logistic regression.
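The shift-register reservoir and quadratic features can be sketched as below. The function name `nextgen_features` is hypothetical, and the sketch assumes that the quadratic terms are the pairwise products of the entries of \(s_t\); only the upper triangle is kept because the products are symmetric, which is a choice made here and not something stated in the text.

```python
from collections import deque

def nextgen_features(inputs, m):
    """Yield, for each time t >= m - 1, the feature vector built from
    s_t = (x_t, x_{t-1}, ..., x_{t-m+1}) and the pairwise products of its entries."""
    window = deque(maxlen=m)  # shift register holding the last m inputs
    for x in inputs:
        window.appendleft(x)  # newest input first; the oldest drops off the end
        if len(window) == m:
            s = list(window)
            # Upper triangle of the outer product (the lower triangle is redundant).
            quad = [s[i] * s[j] for i in range(m) for j in range(i, m)]
            yield s + quad

features = list(nextgen_features([1, 0, 1, 1], m=2))
```

Each yielded vector has \(m + m(m+1)/2\) entries and would be passed to the logistic regression layer as its input. No features are produced until the register has seen \(m\) inputs.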

LSTM

In contrast, long short-term memory networks (LSTMs) [9] take a different approach by optimizing \(f_{\theta }\) for training and for memory retention. There, \(s\) is a combination of several hidden states, and the network update equations are given in Ref. 9. The essential components of an LSTM are a memory cell that is updated linearly, which makes training easier and avoids exploding and vanishing gradients, and forget gates that allow the network to access different timescales, potentially improving performance [4].