Are you human? Proving it might help robots get closer to us.

Photo: 123RF

Analysis: Artificial intelligence is developing so fast that almost anyone can be faked from the digital breadcrumbs they leave behind.

New Zealand's tech sector has been debating deepfakes and what options people have to avoid unintentionally training AI. In short, there are not many.

“Many ways and methods of collecting AI training data may in fact violate privacy and ethical principles,” Dr. Andrew Chen said at a webinar hosted by the Privacy Commission.

Take deepfakes.

The U.S. Republican Party just rolled out its first-ever all-AI attack ad that shows street commotion and a fake Joe Biden collapsing on an Oval Office desk.

That prompted Casey Newton’s advice to the experts on the New York Times technology podcast Hard Fork: “We can start writing about what AI elections will look like in 2024.”

MSNBC called the AI political ad “a disturbing first step into a new world of manipulation,” but some thought it was just a prank.

Of course, New Zealand will hold its election in October 2023, before the United States does. The Independent Electoral Review is investigating the impact of AI and aims to report on it early next month.

“I don’t know what [commentators] Joe Rogan, Ben Shapiro and Joe Biden think [about AI fakes], my guess is they don’t love it, yes, but… it’s happening to those people first, but it’s going to happen to all of us soon,” Newton added.

All of us?

The famous examples feature AI-manipulated images and dialogue on screen. But as Allyn Robins from the Brainbox Institute in New Zealand said during one of the webinars, it doesn’t take much.

“They’re developing so quickly that all you need is five minutes of audio and maybe a few mug shots of someone from social media,” Robins said.

“Anyone who has these things out there, and in the age of social media that is most people, can have these techniques applied to them very effectively to generate all sorts of fake personal information.”

One techie defense might be to flood the web with all sorts of images that are labeled as you but are not you, to confuse the AI. But this would become an “arms race,” Robins said.

“There’s no easy solution,” he told an online audience.

“In terms of AI’s impact on privacy, it’s not all bad, but the overall impact is pretty severe.”

As for the not-all-bad part: imagine you have an idea and write down what you want to see. The images then appear on the screen.

AI is already doing this, often very cheaply, through fancy-named image generators like Stable Diffusion.

“Think of it as a ChatGPT service for images,” says Popular Mechanics.

You may not even care about being deepfaked or having a rogue avatar. But AI targets you in a different way.

During the privacy webinars, speakers repeatedly raised AI's insatiable appetite for data, which the technology needs in order to learn.

Big tech companies gobble up data the whole time users spend on their smartphones, and consultant David Ding questioned how they get that data for free, and even get users to pay them for it.

“There are people using chatbots and actually training models,” Ding said.

“You know, it’s your behavior, it’s really your data. You might have kids learning languages with apps. Data owners are paying to train models.

“So we’re seeing things turn upside down.”

https://www.youtube.com/watch?v=oToqFp3NLK8

Dr. Chen, of the University of Auckland, has been looking into whether this amounts to labor exploitation, because that may matter more to people than invasion of privacy.

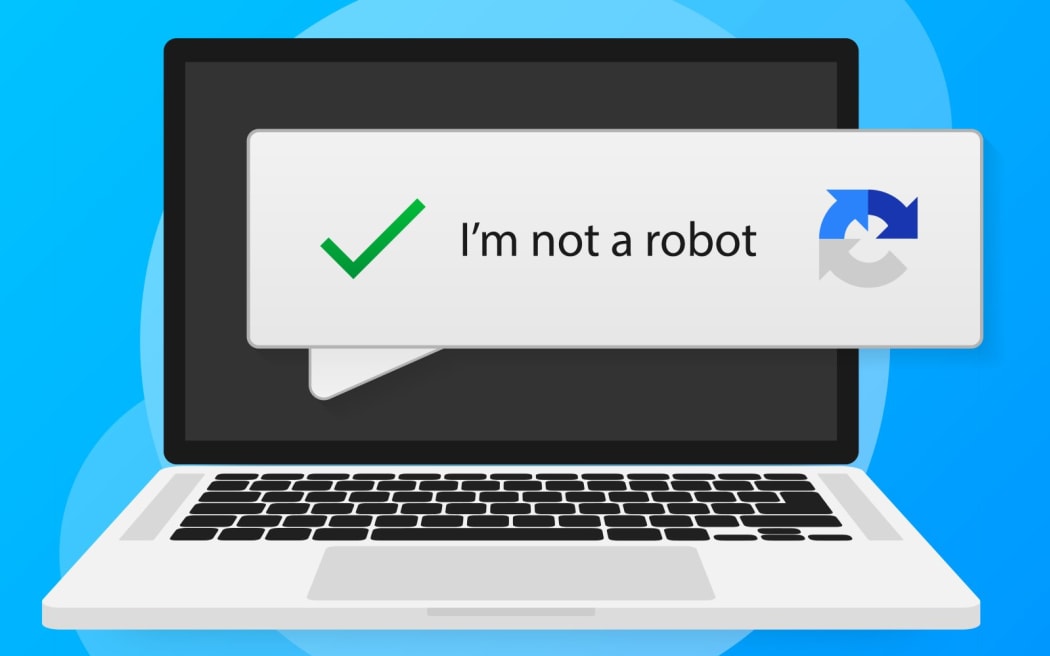

For example, if you have to prove you’re human to buy concert tickets online, you might be asked to click on each image containing a traffic light, he said. But you probably don’t know whether the system uses only half of the images to check your humanity and the other half to train the system itself.

“One of the questions is how did we let the tech giants go unchecked for so long?”

He said that what felt insignificant to an individual added up to a big impact. Sometimes the data capture was hidden.

“There are people actually entering confidential and sensitive personal information into ChatGPT. In fact, we know that data is used for training purposes.”

But who reads the terms of use?

Robins said this had been normalized and was largely unregulated.

“Regulators have not yet had a chance to catch up, everyone sees this as a race to market, and there are likely to be few consequences for doing it wrong. So the incentives in this sector are very much to collect whatever information you can get, not really care whether it’s copyrighted or private to anyone, and just rush it to market. Just send it out.

“And when the model goes out, it goes out.”

The approach of collecting lots of data to get lots of attention has paid off.

Facebook owner Meta recently announced that AI increased the amount of time people spend on Instagram by 24% in the first three months of the year, adding $80 billion to the company’s market value.

The Privacy Commission webinars were run under the slogan “Protect the personal information you hold”. One of them addressed “why personal information should be considered taonga”.

https://www.youtube.com/watch?v=4q1RpG4ltJU

Tech companies, big and small, undoubtedly consider it a treasure.