Participants

Study data were collected through a digital clock drawing consortium between the University of Florida (UF) and the New Jersey Institute on Successful Aging (NJISA), Memory Assessment Program, School of Osteopathic Medicine, Rowan University. The Institutional Review Boards of the University of Florida and Rowan University approved this study. Study participants at both institutions provided written informed consent for inclusion in the study. All research procedures were performed in accordance with the Declaration of Helsinki, the guidelines of the respective universities, and the TRIPOD criteria.31 This study consisted of two data cohorts:

Training dataset: A set of 23,521 clock drawings from 11,762 participants aged 65 years or older, with English as their primary language, who completed clock drawings to command and copy conditions as part of a routine medical assessment in a preoperative setting.32 Exclusion criteria were: non-fluency in English; education < 4 years; and visual, hearing, or motor extremity limitations that could potentially inhibit the production of a valid clock drawing.

Classification dataset: This consists of a ‘fine-tuning’ dataset and a ‘test’ dataset, used respectively to fine-tune and to test the neural network classifier that separates dementia from non-dementia. These datasets consist of clock drawings from individuals diagnosed with dementia and from non-dementia peers. Dementia clocks were collected from 56 participants who were assessed through a community-based memory assessment program within Rowan University. They were seen by a neuropsychologist, a psychiatrist, and a social worker. Inclusion criteria: age ≥55 years. Exclusion criteria: head injury, heart disease, or other major medical illness that may induce encephalopathy; documented learning disability; seizure disorder or other major neurological disorder; education below 6th grade; and history of substance abuse. All individuals with dementia were evaluated using the Mini-Mental State Examination (MMSE), serum studies, and an MRI scan of the brain. These individuals have been described in a previous study.33 They were diagnosed with Alzheimer’s disease (AD) or vascular dementia (VaD) using standard diagnostic criteria, as reported in previous studies.34,35

A total of 175 non-dementia participants completed a study protocol consisting of neuropsychological measurements and neuroimaging. Two neuropsychologists reviewed all data. Inclusion criteria: age ≥60 years, English as first language, and intact activities of daily living (ADLs) according to the Lawton and Brody scale of activities of daily living, completed by both the participant and their caregiver.36 Exclusion criteria: clinical evidence of major neurocognitive impairment at baseline according to the Diagnostic and Statistical Manual of Mental Disorders, 5th Edition;37 presence of a significant chronic medical condition; major psychiatric disorder; history of head injury or neurodegenerative disease; documented learning disability; epilepsy or other significant neurological disorder; education below 6th grade; substance abuse in the past year; major heart disease; and encephalopathy due to chronic disease. These participants were screened for dementia by telephone using the Telephone Interview for Cognitive Status (TICS)38 and in a face-to-face interview with a neuropsychologist and a study coordinator, who also assessed comorbidities,39 anxiety, depression, ADLs, neuropsychological function, and digital clock drawing.40 Data from these participants are described in other studies.3,19

Procedure

Cohort participants completed two clock drawings: (a) a command condition, in which they were asked to “Draw the face of a clock, put in all the numbers, and set the hands to ten after eleven”, and (b) a copy condition, in which participants were presented with a model of a clock and asked to copy it underneath.2 A digital pen from Anoto, Inc. and associated smart paper17 were used to complete the drawings. The digital pen captures and measures the pen position on the smart paper 75 times per second. An 8.5 × 11 inch sheet of smart paper was folded in half to give participants an 8.5 × 5.5 inch drawing area. Only the final drawings were extracted and used for analysis in the current study.

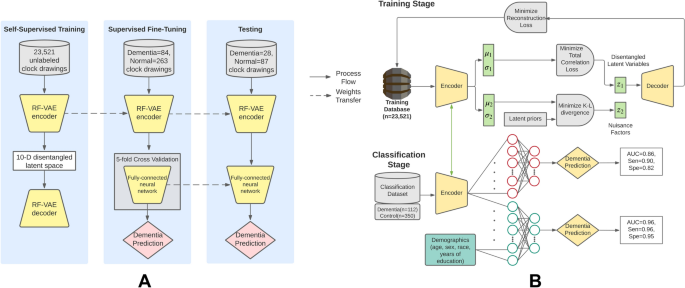

Clock drawings for both the command and copy conditions from the training cohort were used for RF-VAE training. We then used the clock drawings for both the command and copy conditions from the fine-tuning cohort to train the weights of the neural network classifier and to fine-tune the weights of the RF-VAE encoder to distinguish dementia from control clocks. Command and copy clocks were not separated in training, because we wanted the model to be agnostic of cognitive outcomes and to learn clock encodings that could generalize to multiple different classification tasks. The fine-tuning dataset consisted of 84 dementia clocks and 263 non-dementia clocks. Finally, the classification network was tested on a test dataset containing 28 dementia and 87 control clocks.

Individual clock drawings were extracted from the files using contour detection. The extracted contours were cropped to the bounds of the clock drawing, padded with blank space to make them square, and resized to 64 × 64, as this is the only size supported by the RF-VAE implementation25 that was used. Supplementary Fig. 3 shows the preprocessing pipeline described above.
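The crop, pad, and resize steps can be illustrated with a minimal NumPy sketch. This is not the study's pipeline: it assumes a single drawing per array, uses the bounding box of non-blank pixels as a stand-in for contour detection, and resizes by nearest-neighbour sampling. The function name `preprocess_clock` and its parameters are hypothetical.

```python
import numpy as np

def preprocess_clock(page, size=64, blank=0.0):
    """Crop a drawing to its ink bounds, pad it to a square, and resize it.

    Assumption: `page` is a 2-D array holding one drawing, where blank paper
    equals `blank` and any other value is ink.
    """
    ink = page != blank
    if not ink.any():
        raise ValueError("no ink found on page")

    # Bounding box of the ink (stand-in for contour detection).
    rows = np.flatnonzero(ink.any(axis=1))
    cols = np.flatnonzero(ink.any(axis=0))
    crop = page[rows[0]:rows[-1] + 1, cols[0]:cols[-1] + 1]

    # Pad the shorter side with blank pixels so the drawing is centred in a square.
    h, w = crop.shape
    side = max(h, w)
    top, left = (side - h) // 2, (side - w) // 2
    square = np.full((side, side), blank, dtype=float)
    square[top:top + h, left:left + w] = crop

    # Nearest-neighbour resize: choose one source index per output pixel.
    idx = ((np.arange(size) + 0.5) * side / size).astype(int)
    idx = np.minimum(idx, side - 1)
    return square[np.ix_(idx, idx)]
```

Padding before resizing preserves the aspect ratio of the drawing, so an elongated clock stays elongated in the 64 × 64 input rather than being stretched to fill it.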

Statistical tests

Latent features learned by the RF-VAE were tested for statistical differences between the dementia and non-dementia cohorts using a two-tailed Student’s t-test, with multiple comparison correction by the Benjamini-Hochberg method41 at FDR = 0.01. Confounding effects of age and education were removed by propensity score matching using an open-source Python library called PsmPy.42 This yielded a propensity score-matched cohort of 110 dementia and 220 non-dementia clocks. The significance shown in Fig. 3A was based on the adjusted p-values estimated in this propensity-matched cohort, as shown in Supplementary Table 1. Correlations between variables were calculated using Pearson’s product moment correlation coefficient. The correlation matrix was then thresholded at 0.2 and −0.2, as these values represent the 5th and 95th percentiles of the nonparametric distribution of correlation values. The thresholded binary matrices were used as adjacency matrices to generate cross-correlation graphs between latent variables.
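As an illustration of two of these steps, the sketch below implements the Benjamini-Hochberg adjustment and the thresholding of a Pearson correlation matrix into an adjacency matrix. It is a sketch under stated assumptions, not the study's code: the t-tests and propensity matching are omitted, the function names are hypothetical, and an edge is assumed to exist wherever the absolute correlation is at least 0.2.

```python
import numpy as np

def benjamini_hochberg(p_values, fdr=0.01):
    """Return BH-adjusted p-values and a boolean mask of rejections at `fdr`."""
    p = np.asarray(p_values, dtype=float)
    m = p.size
    order = np.argsort(p)
    # Scale each sorted p-value by m / rank.
    ranked = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity, working down from the largest p-value.
    adjusted_sorted = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted_sorted = np.clip(adjusted_sorted, 0.0, 1.0)
    adjusted = np.empty(m)
    adjusted[order] = adjusted_sorted
    return adjusted, adjusted <= fdr

def correlation_adjacency(latents, threshold=0.2):
    """Binary adjacency matrix from Pearson correlations between columns.

    Assumption: an edge joins two latent variables when |r| >= threshold.
    """
    corr = np.corrcoef(latents, rowvar=False)
    adjacency = (np.abs(corr) >= threshold).astype(int)
    np.fill_diagonal(adjacency, 0)  # no self-loops
    return adjacency
```

Here `latents` is expected to have one row per clock and one column per latent variable, so a 10-dimensional latent space gives a 10 × 10 adjacency matrix.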

Model and experimental set-up

A variational autoencoder (VAE) is a generative model that can learn a low-dimensional representation of input data in the form of the mean and standard deviation of a Gaussian distribution, from which it samples to reconstruct the input data. The nonlinear output decoder network compensates for the loss of generality caused by the normal prior distribution. One drawback of the VAE latent distribution is that its factors are not disentangled; that is, each latent variable is not exclusively responsible for the variation of a unique aspect of the input data. In this paper, we used an existing implementation of a VAE-based deep autoencoder model that is able to learn all meaningful sources of variation in clock drawings with disentangled latent representations. This model, called RF-VAE, uses total correlation (TC) in the latent space to improve the disentanglement of relevant sources of variation, while tolerating significant KL divergence from the nuisance prior distributions for these relevant factors. Factors with low divergence from the nuisance prior distribution are identified as “nuisance sources of variation”. In this way, the model can learn “all meaningful sources of variation” in the latent space.
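The two ingredients of a standard VAE mentioned above, sampling from the learned Gaussian and measuring divergence from the prior, can be sketched in NumPy as follows. This covers the generic VAE only and assumes a standard normal prior; the TC and relevance terms specific to RF-VAE are not shown, and the function names are hypothetical.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I) (reparameterization trick)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """Per-dimension KL( N(mu, sigma^2) || N(0, 1) ) in closed form.

    Assumption: the prior is a standard normal, as in a plain VAE.
    """
    return 0.5 * (mu ** 2 + np.exp(log_var) - 1.0 - log_var)

# Example: a 10-dimensional latent code for one input.
rng = np.random.default_rng(0)
mu = np.zeros(10)
log_var = np.zeros(10)
z = reparameterize(mu, log_var, rng)
kl_per_dim = kl_to_standard_normal(mu, log_var)
```

A latent dimension whose KL term stays near zero encodes almost nothing about the input beyond the prior, which is the intuition behind treating low-divergence factors as nuisance sources of variation.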

The preprocessed clock images were fed to the RF-VAE network with a latent dimension of 10. The RF-VAE network was trained for 1400 epochs with a learning rate of 10⁻⁴ and a batch size of 64, as recommended in the source articles.25,43 The reconstruction loss was cross-entropy and the optimizer was Adam.44 RF-VAE training took 3.5 hours on a GeForce GP102 Titan X GPU from NVIDIA Corporation. The trained latent space of the RF-VAE was fed into a fully connected feedforward neural network with two hidden layers, with 7 neurons in the first hidden layer and 4 neurons in the second. Using the Adam optimizer, we trained the classifier on the fine-tuning dataset for 20 epochs with a batch size of 32 and a learning rate of 0.0075. The classification loss was binary cross-entropy. To mitigate class imbalance in the fine-tuning dataset, the dementia class was assigned a weight of 3.125:1 during training. All hyperparameters were selected using the fine-tuning dataset within a 5-fold cross-validation design by maximizing the average fold AUC of the model. Figure 6 shows the network architecture and the conceptual workflow of this method. The upper part of each panel in the figure shows the RF-VAE training process. The lower part shows how the trained RF-VAE encoder weights support task-specific classifiers. The performance of the trained classifier was evaluated on the test data, and several key performance metrics were reported: AUC, accuracy, sensitivity, specificity, precision, and negative predictive value (NPV). The test data were bootstrapped 100 times using random sampling with replacement to create confidence intervals. Median scores and the 2.5th and 97.5th percentiles were reported for these metrics on the bootstrapped test dataset.
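The bootstrap procedure for the confidence intervals can be sketched as below. This is an illustrative sketch rather than the study's code: it works on hard 0/1 predictions, so AUC (which needs predicted scores) is left out, and the function names and the fixed seed are assumptions.

```python
import numpy as np

def binary_metrics(y_true, y_pred):
    """Accuracy, sensitivity, specificity, precision, and NPV for 0/1 labels."""
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    tp = np.sum(y_true & y_pred)
    tn = np.sum(~y_true & ~y_pred)
    fp = np.sum(~y_true & y_pred)
    fn = np.sum(y_true & ~y_pred)

    def ratio(num, den):
        # Undefined ratios (empty denominator) are reported as NaN.
        return num / den if den > 0 else float("nan")

    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "precision": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
    }

def bootstrap_intervals(y_true, y_pred, n_boot=100, seed=0):
    """Return (2.5th percentile, median, 97.5th percentile) for each metric."""
    rng = np.random.default_rng(seed)
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    n = y_true.size
    samples = {}
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)  # resample indices with replacement
        for name, value in binary_metrics(y_true[idx], y_pred[idx]).items():
            samples.setdefault(name, []).append(value)
    return {
        name: tuple(np.nanpercentile(values, [2.5, 50, 97.5]))
        for name, values in samples.items()
    }
```

Each resample has the same size as the test set, so the spread of a metric across the 100 resamples reflects how much it could vary with a different draw of 115 test clocks.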

Figure 6. Conceptual workflow of the proposed method. (a) A high-level conceptual diagram showing the training, validation, and testing procedures. The RF-VAE undergoes unsupervised training using 23,521 unlabeled clock drawings. Subsequently, the trained RF-VAE encoder is transferred to the ‘fine-tuning’ stage, where the fully connected neural network is optimized using 84 dementia and 263 normal clocks. Finally, the pretrained encoder and fine-tuned classifier are tested on 28 dementia and 87 normal clocks. (b) Detailed workflow showing the different loss functions that are minimized during training and classification. In the training phase, the 10-dimensional RF-VAE latent space is built by minimizing the loss between the original and reconstructed clock drawings and by minimizing the total correlation between the latent dimensions, thereby disentangling them. Furthermore, feature relevance is ensured in the latent space by eliminating latent variables that do not diverge significantly from their predefined prior distributions. In the classification phase, the trained encoder is jointly fine-tuned with a fully connected neural network to classify dementia versus non-dementia clocks. Additionally, we add age, gender, race, and years of education to the latent dimensions to train another classifier with better performance.

We added participants’ age, gender, race, and years of education to the model to assess the improvement in classifier performance. The optimal classifier had 10 input neurons and three hidden layers, with 512 neurons in the first hidden layer, 256 in the second, and 128 in the third. Training was run for 20 epochs on the fine-tuning data with a batch size of 8 and a learning rate of 0.0075. All hyperparameters were selected using the fine-tuning dataset within a 5-fold cross-validation design by maximizing the average fold AUC of the model. Figure 6 shows the various steps in the workflow.